Kintsugi Shuts Down, Opens AI Depression Detection Tech to Public

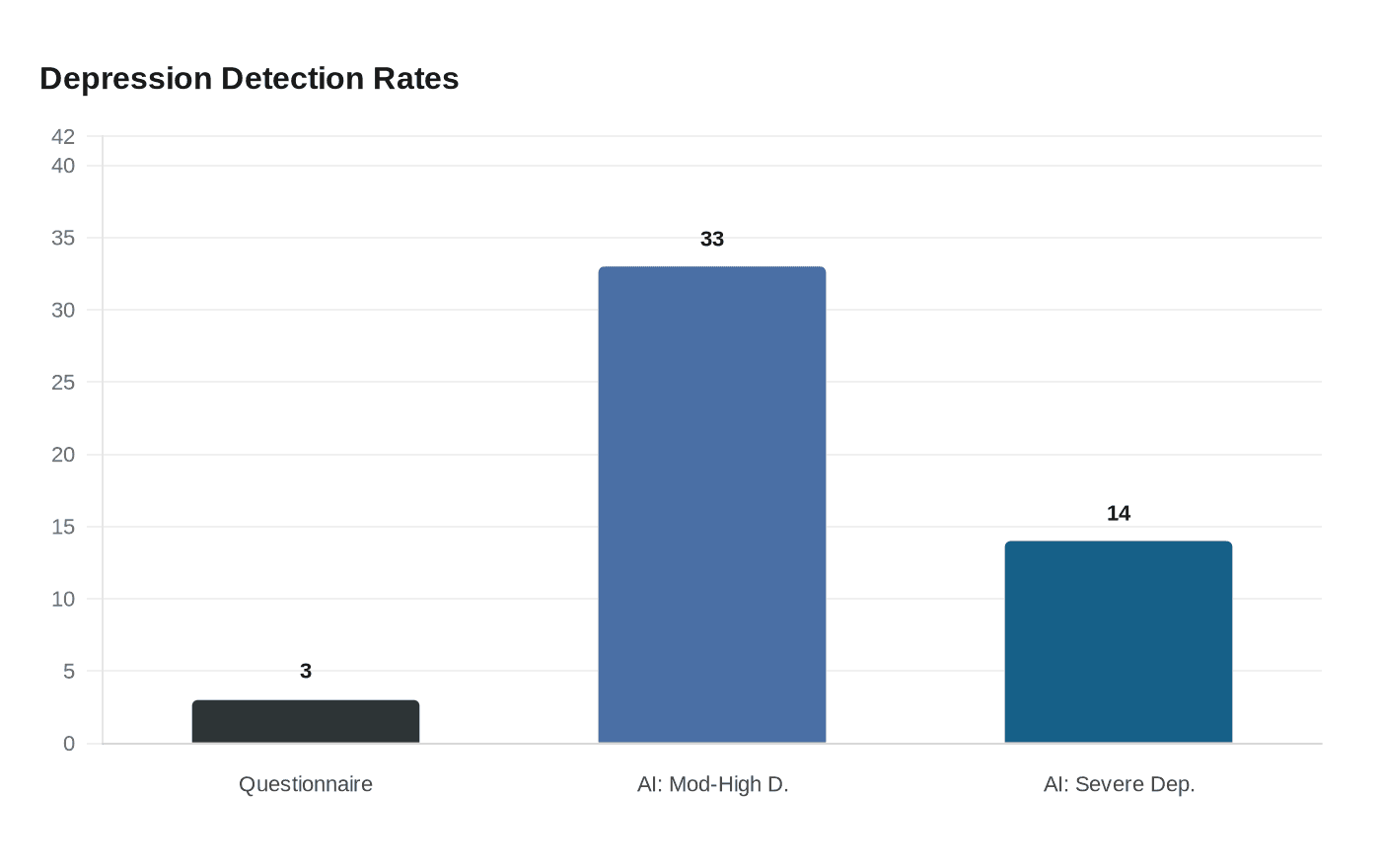

Kintsugi's voice AI detected depression in 33% of screened patients where standard questionnaires found only 3%, yet the Berkeley startup still couldn't survive nearly four years of waiting for FDA clearance.

When one health plan screened its patients the traditional way, using standard questionnaires, 3% came back with signs of depression. When the same population was run through Kintsugi's voice-analysis AI, the tool flagged 33% for moderate-to-high depression and 14% for severe depression. That gap captures both the promise and the peril of the Berkeley, California startup, which has now shut down its commercial operations after burning through $30 million and nearly four years navigating the FDA's De Novo clearance process without ever crossing the finish line.

Founder and CEO Grace Chang announced the closure in a company blog post, framing the open-sourcing of Kintsugi's core technology as a deliberate choice rather than a defeat. "We are choosing the integrity of the science over the limitations of a distressed market," Chang wrote. "We refuse to let these breakthroughs sit on a shelf or disappear into a closed acquisition." The company posted its foundational voice biomarker AI models, scientific methodologies, and formative research connecting vocal patterns to depression and anxiety on Hugging Face, making them freely available to researchers worldwide. Kintsugi's investors included Insight Partners, Acrew Capital, and Darling Ventures.

The technology works by analyzing as little as 20 seconds of free-form speech, detecting subtle shifts in pitch, tone, energy, and rhythm that correlate with depressive episodes. The company validated its approach in a prospective, double-blind pivotal study benchmarked against the SCID-5 structured clinical interview, and published peer-reviewed findings in the Annals of Family Medicine. A separate cross-sectional study of 14,898 adults in the United States and Canada compared its AI against the PHQ-9 self-report questionnaire.
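To give a rough sense of what "pitch" and "energy" mean as measurable quantities, here is a minimal sketch in plain Python. It is not Kintsugi's model or pipeline: the function names, the 8 kHz sample rate, the 75 to 400 Hz search range, and the synthetic 200 Hz tone standing in for speech are all assumptions made for illustration. It estimates loudness as root-mean-square energy and pitch from the strongest autocorrelation peak in a single short frame.

```python
import math

def rms_energy(frame):
    """Root-mean-square amplitude of a frame, a simple proxy for vocal energy."""
    return math.sqrt(sum(x * x for x in frame) / len(frame))

def estimate_pitch_hz(frame, sample_rate, fmin=75.0, fmax=400.0):
    """Estimate the fundamental frequency via a basic autocorrelation search.

    fmin and fmax are assumed bounds for a speaking voice, not values
    taken from the article.
    """
    min_lag = int(sample_rate / fmax)
    max_lag = int(sample_rate / fmin)
    best_lag, best_score = None, 0.0
    for lag in range(min_lag, max_lag + 1):
        # Correlate the frame with a copy of itself shifted by `lag` samples.
        score = sum(frame[i] * frame[i + lag] for i in range(len(frame) - lag))
        if score > best_score:
            best_lag, best_score = lag, score
    return sample_rate / best_lag if best_lag else None

# Hypothetical input: 50 ms of a pure 200 Hz tone, standing in for a voiced frame.
SAMPLE_RATE = 8000
frame = [0.5 * math.sin(2 * math.pi * 200 * n / SAMPLE_RATE) for n in range(400)]

energy = rms_energy(frame)
pitch = estimate_pitch_hz(frame, SAMPLE_RATE)
```

A production system would track how such measurements change across many frames and feed them, or learned representations of the raw audio, into a trained model. This sketch only shows the kind of low-level signal the article refers to.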

But clinical validation, it turned out, was only the beginning of what the FDA required. The agency classifies AI diagnostic tools as Class II software-based medical devices, meaning Kintsugi had to satisfy the full De Novo pathway, a process the company spent nearly four years working through, including multiple pre-submission consultations. The regulatory timeline is where the math became fatal. Venture capital runs on three-to-five-year return horizons; FDA clearance for a novel AI biomarker can take far longer. "I would say the challenge in this intersection of AI and regulatory can be a really big hurdle for startups," Chang told Healthcare IT News.

The FDA's caution reflects a real clinical risk. A tool that detects depression in 33% of patients where a questionnaire found 3% will inevitably generate false positives, routing patients who are not depressed into treatment pathways, medication evaluations, and follow-up appointments that carry their own costs and harms. False negatives carry the opposite danger: patients screened as healthy who are quietly deteriorating. For a tool designed to embed in routine primary care, telehealth encounters, and insurance intake calls, those error rates compound at scale across millions of interactions. The FDA requires evidence not just that a tool works in controlled studies, but that it performs consistently across demographic groups, clinical settings, and real-world acoustic conditions.

Pelu Tran, CEO of health AI governance firm Ferrum Health, identified a structural flaw in the current framework. "Right now, the FDA treats AI like a traditional medical device," he said. "Once it's approved, it's essentially locked in place." For voice biomarker AI, which can and should be retrained as datasets grow, that rigidity creates a mismatch between how the technology actually improves and how the agency currently evaluates it.

A workable approval path for the next company entering this space would require closer early coordination between the FDA and the Centers for Medicare and Medicaid Services (CMS). A reimbursement code for brief behavioral assessments already exists, CPT 96127, but CMS alignment has historically lagged years behind clearance. Without a credible coverage commitment on the other side of approval, the financial case for surviving the regulatory marathon collapses, which is precisely what happened to Kintsugi.
