Leaked Anthropic code spreads across GitHub as AI rewrites it further

Anthropic’s 59.8MB code leak spread across GitHub in hours, then AI rewrote it into new languages, turning one mistake into a copyright enforcement test.

Anthropic’s leaked Claude Code source showed how fast digital work can escape control once it leaves the vault. A 59.8MB release package exposed internal code on March 31, 2026, and within hours it had spread across GitHub, where programmers used AI tools to rewrite the material into languages such as Python and Bash, multiplying the copies faster than takedowns could catch them.

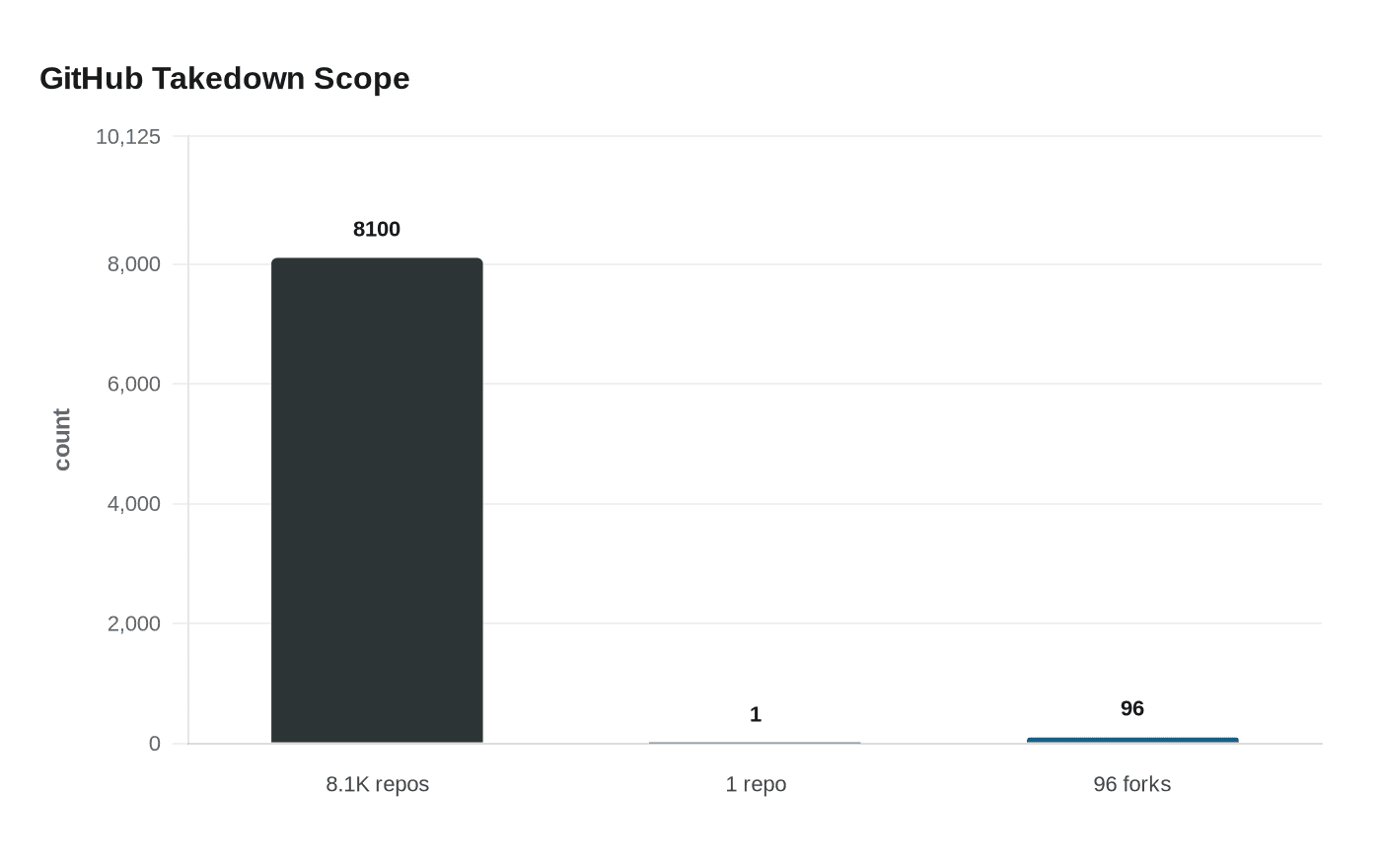

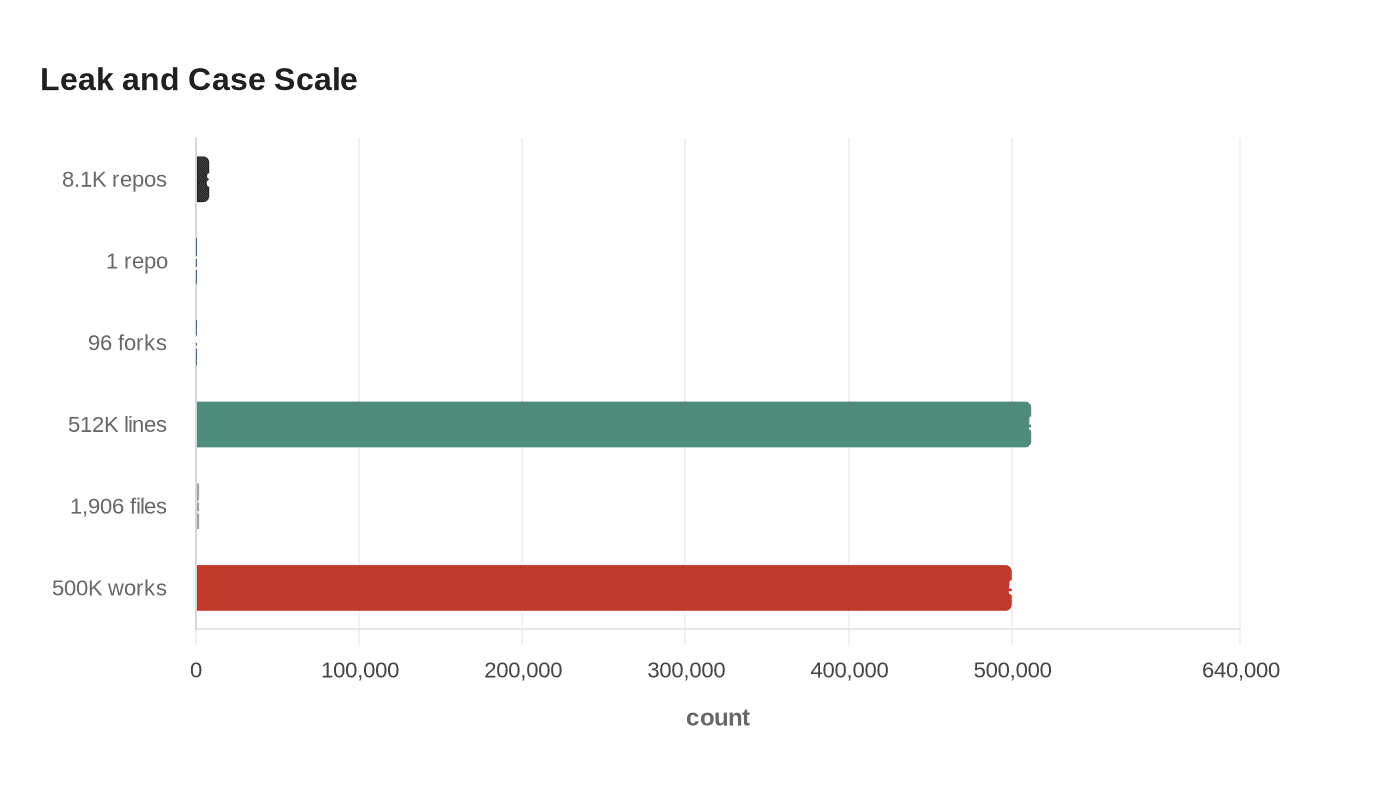

Anthropic said the exposure came from human error in packaging the since-deleted Claude Code 2.1.88 release, not from a security breach. The company said no customer data or credentials were involved. GitHub initially said its takedown processing covered an entire network of 8.1K repositories, before Anthropic narrowed enforcement to one repository and 96 fork URLs, a sequence that showed how quickly one leak can become a distributed compliance problem.

The size of the codebase showed what was at stake. Security researchers said it contained about 512,000 lines across roughly 1,906 files. The leaked material reportedly exposed internal safeguards, including a technique meant to prevent bad actors from cloning Claude Code and a mode designed to strip evidence that an AI produced output. That combination gave rivals and researchers a rare look inside one of Anthropic’s most valuable products, while also showing how much of modern software can be repurposed once it is copied.

The timing sharpened the legal stakes. On June 23, 2025, Judge William Alsup ruled in the Northern District of California that training Anthropic’s models on lawfully purchased books was fair use, while rejecting fair use for pirated books, calling that piracy “inherently, irredeemably infringing.” In August 2025, Anthropic agreed to a $1.5 billion settlement in Bartz v. Anthropic, covering about 500,000 works and described as the largest publicly reported copyright recovery in history. The plaintiffs included Andrea Bartz, Kirk Wallace Johnson, and Charles Graeber.

That history leaves Anthropic in an unmistakable irony. The company has argued in court that training AI can be transformative, yet it is now relying on copyright law to protect its own code from copying and redistribution. The leak suggests copyright still has force when a rights holder moves quickly and platforms cooperate, but AI-assisted rewriting makes enforcement harder with every passing hour. In practice, the law can still draw a line; in the AI era, keeping material on the right side of that line is becoming the harder task.
