Microsoft unveils Maia 200 chip and developer tools to challenge Nvidia dominance

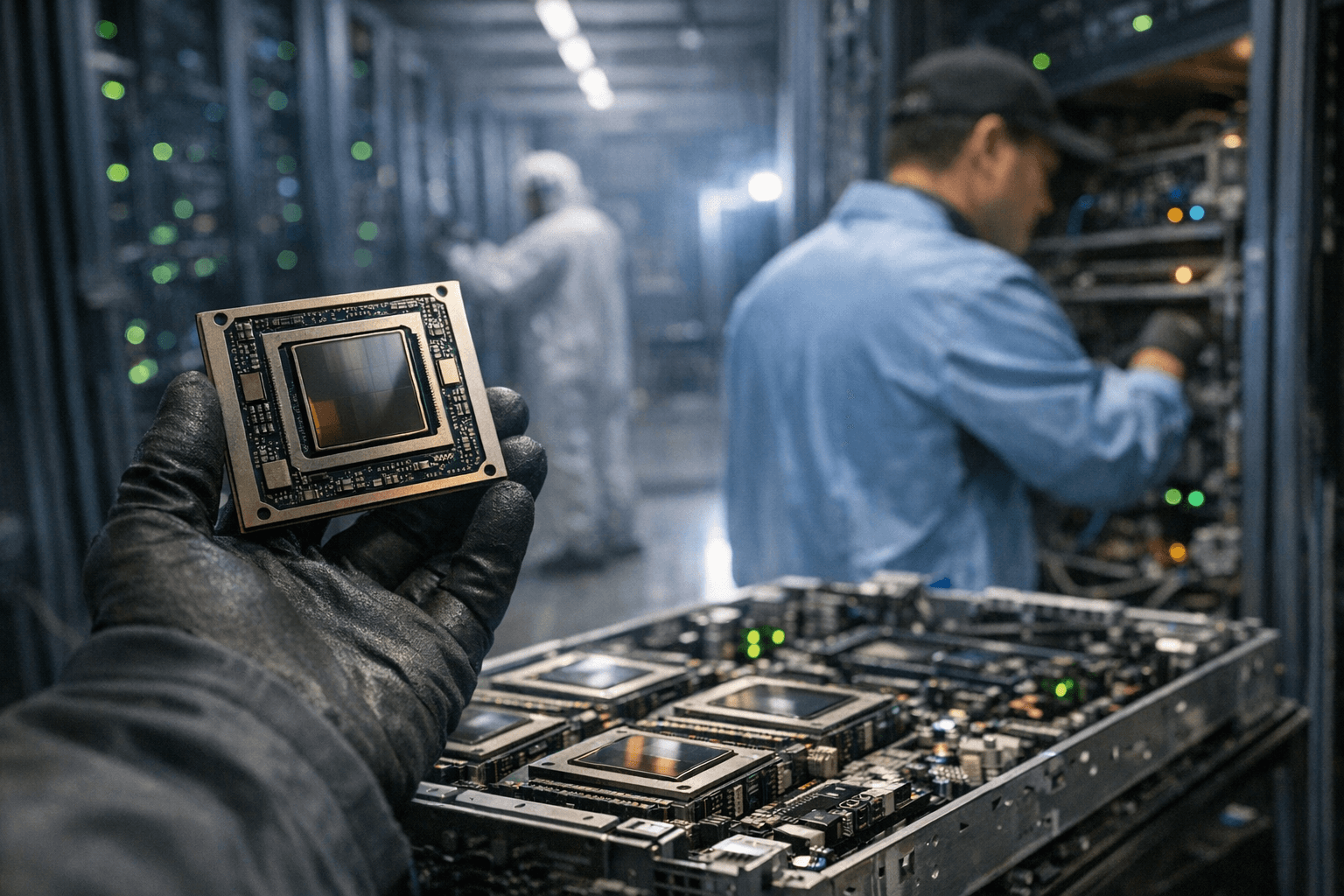

Microsoft launched the Maia 200 AI accelerator and a developer tool package to narrow Nvidia’s software lead, saying the chip is already running in an Iowa data center.

Microsoft unveiled the second generation of its in-house AI accelerator, the Maia 200, and a suite of developer tools in a bid to close a persistent software gap with Nvidia. The company said the Maia 200 is already running in a data center in Iowa, signaling an early move from prototype to operational deployment.

The announcement places Microsoft squarely in the intensifying contest over AI infrastructure. Nvidia has dominated the market for years with its GPUs and an expansive software ecosystem that includes CUDA and a broad array of libraries and frameworks optimized for its hardware. Microsoft’s strategy pairs its own silicon with developer tooling to reduce the switching friction that has long kept customers on Nvidia-based systems.

Microsoft disclosed few details about Maia 200’s architecture, performance metrics or power efficiency. Instead, it framed the release as an exercise in co-design: building hardware and software together so that models can be trained and served efficiently on its cloud platform. Industry observers say that if Microsoft can deliver a competitive hardware-software stack, it could alter the economics of large-scale model training and inference, and give customers more leverage in procurement conversations.

Running the Maia 200 in an Iowa data center gives Microsoft a controlled environment to stress-test the chips at scale and begin migrating internal workloads. Early deployment in a production data center typically serves both technical and commercial aims: it allows the company to optimize systems for latency, throughput and reliability, and it demonstrates operational readiness to potential cloud customers. Microsoft did not disclose a timetable for broader availability or customer pricing.

Central to the announcement was a package of developer tools intended to ease transitions from other platforms. Microsoft framed these tools as an attempt to reduce migration costs and provide productivity benefits to engineers and researchers. Software compatibility and the availability of high-quality runtimes, compilers and model-porting utilities will determine whether developers adopt an alternative to the entrenched Nvidia ecosystem. Historically, developer mindshare and third-party library support have been decisive in platform choice, and Microsoft faces the task of matching or surpassing that breadth.

The move has broader implications for the AI supply chain and for cloud competition. Greater diversity in accelerator suppliers could ease capacity constraints and put downward pressure on prices for compute-heavy AI workloads. It could also encourage innovation in chip design, interconnects and memory architectures as competitors respond. For enterprises and cloud customers, more options may reduce single-vendor risk and create new opportunities for workload optimization.

At the same time, risks remain. Transition costs for enterprises can be high, and differences in tooling or model behavior across accelerators can complicate validation and compliance. Energy consumption and environmental impact will also be scrutinized as new data center hardware rolls out at scale.

Microsoft’s unveiling of Maia 200 signals a step toward vertical integration of cloud, hardware and software. Whether the company can translate that integration into widespread developer adoption will depend on the depth of its software ecosystem, the results of third-party benchmarks and how quickly it can demonstrate clear cost or performance advantages for real-world AI workloads.
