OpenAI, Anthropic, Google Team Up to Block Chinese AI Distillation Efforts

Three rival U.S. AI giants began sharing attack data to block Chinese labs from cloning their models, after Anthropic documented 16 million fraudulent query exchanges targeting Claude.

OpenAI, Anthropic, and Google began sharing intelligence to detect and block coordinated efforts by Chinese AI laboratories to clone U.S. frontier models through a technique known as adversarial distillation, marking a rare alliance among companies that compete fiercely on nearly every other front.

The coordination runs through the Frontier Model Forum, an industry nonprofit the three companies co-founded with Microsoft in 2023. Its purpose is to detect adversarial distillation attempts that violate the companies' terms of service. The sharing is modeled on how cybersecurity firms exchange threat intelligence: when one company detects an attack pattern, it flags the pattern for the others.

The practice under scrutiny works by flooding a powerful "teacher" model with queries and using the outputs to train a cheaper "student" model that approximates the original's capabilities. The commercial consequences are direct: a competitor that rapidly extracts a frontier model's outputs can replicate years of investment at minimal cost, potentially without the safety and alignment guardrails built into the original.
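To make the teacher-student mechanism concrete, here is a minimal toy sketch in Python. It is not any lab's actual method. The `teacher` function, its hidden parameters, and the one-variable logistic `student` are hypothetical stand-ins chosen so the example runs with the standard library alone. The point it illustrates is that the student never sees the teacher's internals, only its answers to queries.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def teacher(x: float) -> float:
    """Hypothetical black-box teacher: returns a probability for input x.

    The constants 3.0 and -1.0 are the "hidden" parameters the student
    never sees directly; they are assumptions made for this toy example.
    """
    return sigmoid(3.0 * x - 1.0)


def distill(queries, steps=5000, lr=0.5):
    """Fit a student p = sigmoid(w*x + b) using only the teacher's outputs."""
    # Step 1: query the teacher and record its outputs (soft labels).
    soft_labels = [teacher(x) for x in queries]

    # Step 2: train the student on those outputs with full-batch gradient
    # descent on cross-entropy against the soft labels.
    w, b = 0.0, 0.0
    n = len(queries)
    for _ in range(steps):
        grad_w = 0.0
        grad_b = 0.0
        for x, t in zip(queries, soft_labels):
            err = sigmoid(w * x + b) - t
            grad_w += err * x
            grad_b += err
        w -= lr * grad_w / n
        b -= lr * grad_b / n
    return w, b


# 101 evenly spaced queries in [-2, 2] stand in for the flood of prompts.
queries = [-2.0 + 4.0 * i / 100 for i in range(101)]
w, b = distill(queries)
max_gap = max(abs(sigmoid(w * x + b) - teacher(x)) for x in queries)
print(f"student w={w:.2f}, b={b:.2f}, max gap vs teacher={max_gap:.4f}")
```

In this toy setting the student ends up closely matching the teacher on the queried range. Real frontier models are vastly larger, but the basic loop of querying, collecting outputs, and training on them is the same idea.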

AI distillation first drew significant scrutiny in 2025, after DeepSeek released its R1 reasoning model and prompted Microsoft and OpenAI to investigate whether the Chinese startup had improperly exfiltrated large amounts of data from their models. White House AI adviser David Sacks stated publicly that there was "substantial evidence" that DeepSeek had distilled from OpenAI's models. OpenAI formalized its position in a February 12 memo to the U.S. House Select Committee on China, stating that DeepSeek's practices should be understood "in the context of its ongoing efforts to free-ride on the capabilities developed by OpenAI and other US frontier labs."

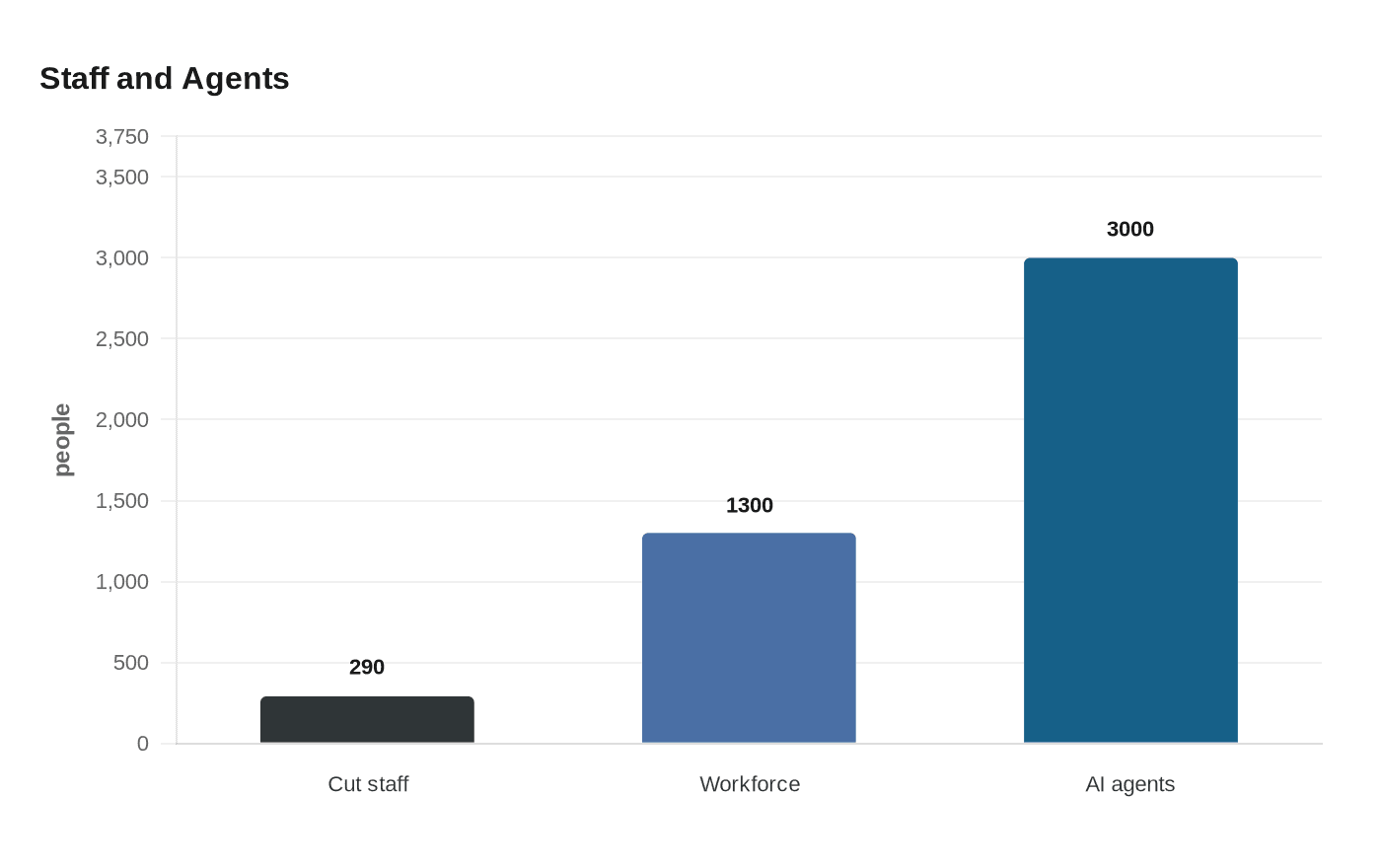

The scale became concrete in February 2026. Anthropic publicly accused DeepSeek, Moonshot AI, and MiniMax of orchestrating coordinated, industrial-scale campaigns to siphon capabilities from its Claude models using tens of thousands of fraudulent accounts. The three labs collectively generated more than 16 million exchanges with Claude through approximately 24,000 fake accounts, all in violation of Anthropic's terms of service and regional access restrictions. The breakdown was uneven: DeepSeek generated over 150,000 exchanges with Claude, while Moonshot AI generated over 3.4 million and MiniMax generated over 13 million. Google's Threat Intelligence Group separately reported disrupting a model extraction attempt against Gemini, including a campaign of more than 100,000 prompts aimed at coercing reasoning traces.
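A quick back-of-the-envelope check of the Anthropic figures, sketched in Python. The per-lab numbers are the reported lower bounds ("over" each figure), so the shares are approximate, and the per-account average assumes an even spread across accounts, which the reporting does not claim.

```python
# Reported lower bounds on exchanges with Claude, per lab.
exchanges = {
    "DeepSeek": 150_000,
    "Moonshot AI": 3_400_000,
    "MiniMax": 13_000_000,
}
ACCOUNTS = 24_000  # "approximately 24,000" fake accounts

total = sum(exchanges.values())
shares = {lab: 100 * count / total for lab, count in exchanges.items()}
per_account = total / ACCOUNTS  # rough average; assumes an even spread

print(f"total exchanges (lower bound): {total:,}")
for lab, pct in shares.items():
    print(f"{lab}: {pct:.1f}% of the total")
print(f"average exchanges per account: {per_account:.0f}")
```

The three lower bounds sum to 16.55 million, consistent with the "more than 16 million" headline figure. On these numbers MiniMax accounts for nearly four-fifths of the traffic and DeepSeek for under 1 percent.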

Dmitri Alperovitch, co-founder of CrowdStrike and chairman of the Silverado Policy Accelerator, put the pattern bluntly: "It's been clear for a while now that part of the reason for the rapid progress of Chinese AI models has been theft via distillation of U.S. frontier models."

The collaboration arrives with complications. Information sharing among direct competitors is unusual and will draw antitrust scrutiny even as it signals the seriousness with which the industry views the threat. The effort also exposes a legal gray zone: distillation is an accepted technique in AI development, and regulators have not yet drawn a clear line between legitimate use and adversarial extraction at scale.

Chinese models cost roughly one-fourteenth as much as U.S. equivalents, and their API volumes have surpassed those of U.S. models, meaning the economics driving distillation may outpace any defense. Chinese state media framed the coordination as evidence of U.S. anxiety over China's progress. Whether the effort holds will depend on how reliably firms can identify anomalous query patterns at scale, and on whether regulatory tools, from export controls to new AI-specific enforcement mechanisms, materialize fast enough to supplement what is, for now, a private-sector defense.
