OpenAI expands nonprofit mission, healthcare push amid Musk lawsuit

OpenAI put a $130 billion nonprofit stake behind its mission as Elon Musk challenges the shift in federal court in Oakland. Its new healthcare push is colliding with big questions about safety, control and equity.

OpenAI has recast its nonprofit arm as the OpenAI Foundation, given it a roughly $130 billion equity stake in the for-profit business, and promised at least $1 billion over the next year for diseases, jobs, AI resilience and community programs. That expansion is unfolding alongside Elon Musk’s federal lawsuit in Oakland, where the fight is over whether OpenAI abandoned its nonprofit mission or is still using commercial growth to serve the public good.

The company has also moved more aggressively into medicine. In January 2026, OpenAI launched OpenAI for Healthcare, a dedicated offering for secure AI products meant to help hospitals and health systems scale care, cut administrative work and build custom clinical tools while protecting health data. In late April, OpenAI and Microsoft amended their agreement to simplify the partnership and provide long-term clarity, while preserving Microsoft’s exclusive IP access and support for OpenAI’s public-benefit structure.

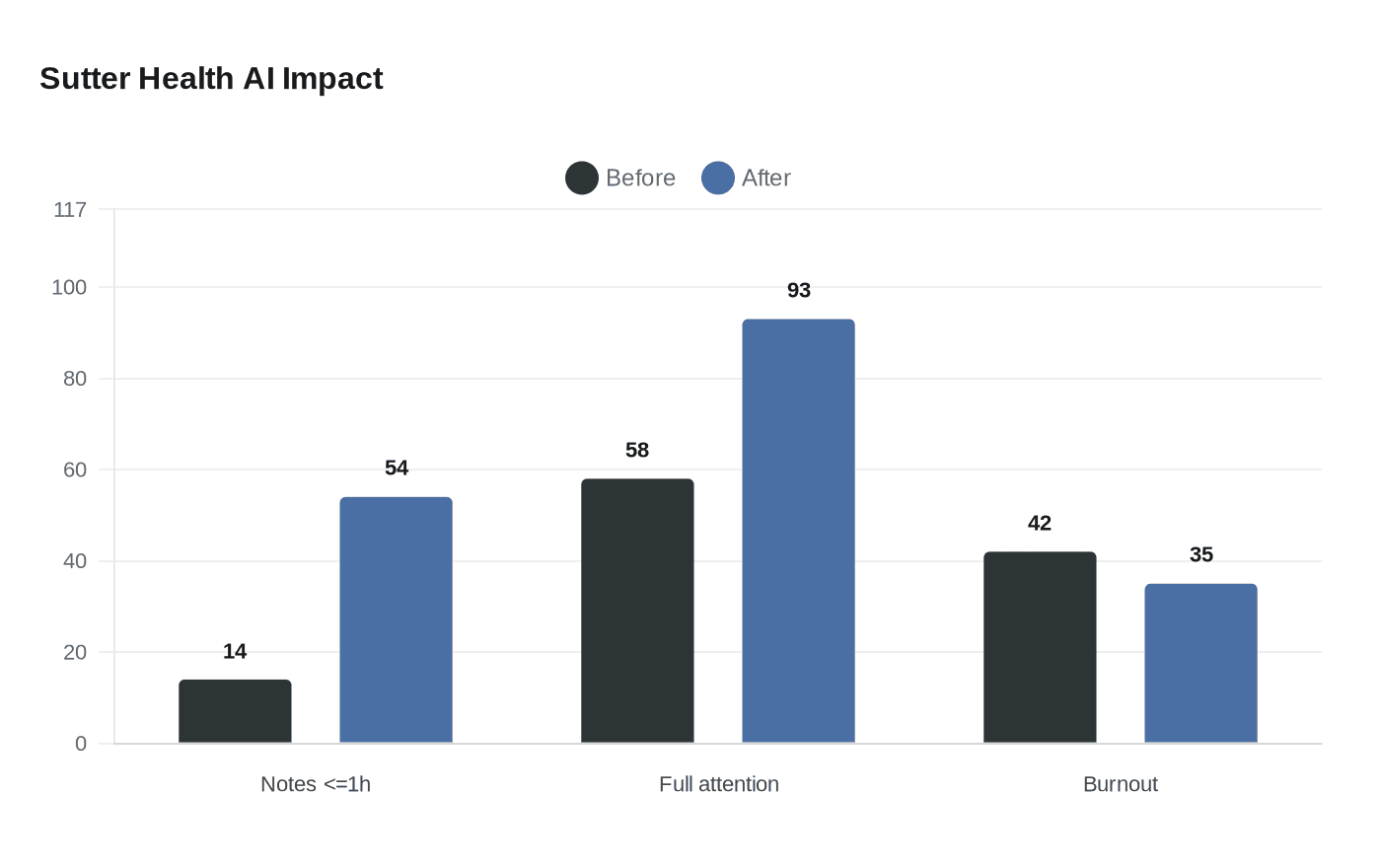

The timing matters because AI is already moving from experiment to routine use in clinical settings. The American Medical Association said in 2025 that two in three physicians were using health AI, but doctors still wanted stronger oversight and better integration into everyday workflows. At Sutter Health, the share of doctors spending an hour or less per week on after-hours notes rose from 14% to 54% with ambient AI, while the share saying they could give patients their full attention climbed from 58% to 93% and burnout scores fell from 42% to 35%.

Other studies point to similar efficiency gains, but not a clean solution. One prospective study at a large academic medical center followed 45 physicians across 17,428 encounters and found an ambient AI scribe was used in 9,629 visits, cutting median time per note by 0.57 minutes. A separate cohort study found 125 AI-scribe users spent less time in the electronic health record and on notes than 478 matched nonusers. Yet a diagnostic study in JAMA Network Open found a dedicated AI expert system outperformed two general-purpose large language models in differential diagnosis, reinforcing that general-purpose systems are not interchangeable with clinical tools.

The caution extends beyond accuracy to trust. Research in Nature found users often overestimated large language models’ accuracy, especially when explanations sounded convincing. Another Nature study found 73% of healthcare workers preferred TalkToModel over existing systems when trying to understand a disease prediction model, suggesting clinicians want clearer explanations, not just faster output. The comparison to the rise of talkies is apt: after The Jazz Singer premiered on October 6, 1927, sound films quickly became a global phenomenon, but the shift redistributed power, labor and profit unevenly. AI in medicine may do the same, and the question in Oakland is whether OpenAI’s nonprofit promise can still govern a business built for scale.
