OpenAI Widens GPT-5.5 Access, Signaling Compute Advantage Over Anthropic

OpenAI pushed GPT-5.5 into its API a day after launch, while telling investors it is building far more compute than Anthropic.

OpenAI moved quickly to widen access to GPT-5.5, putting both GPT-5.5 and GPT-5.5 Pro into its API the day after unveiling the model and calling it its “smartest and most intuitive” release yet. The pace mattered as much as the model itself: in a year when AI firms are fighting over chips, power and deployment capacity, OpenAI’s latest rollout signaled that access to computing has become one of the industry’s sharpest competitive advantages.

Anthropic took a different public posture with Claude Opus 4.7, which it released on April 16 and described as generally available, with particular strength in advanced software engineering. Its model pages also emphasized deployment to Claude Pro, Max, Team and Enterprise users, along with safety materials such as system cards and transparency hubs. The contrast between the two companies is not just about product design. It reflects two different ways of demonstrating market readiness: OpenAI by widening access, Anthropic by foregrounding safeguards and controlled deployment.

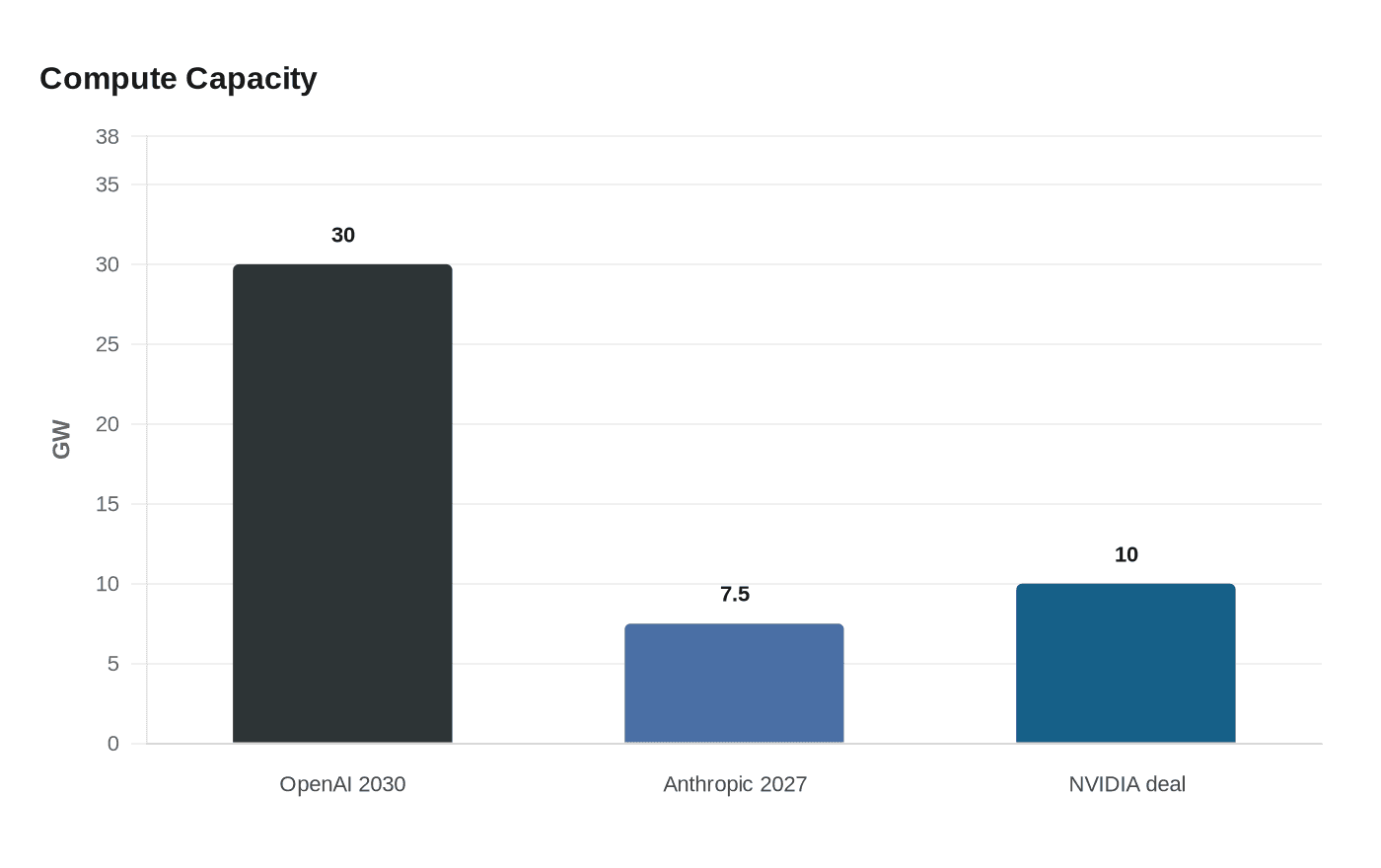

The rivalry has grown more pointed around raw capacity. In April, OpenAI told investors it expected to have 30 gigawatts of compute by 2030, while projecting that Anthropic would reach about 7 to 8 gigawatts by the end of 2027. The two figures cover different time horizons, so they are not a like-for-like comparison, but the framing cast Anthropic as the smaller and more compute-constrained rival. It also underscored the broader argument now shaping the AI race: the question is who can secure enough infrastructure to ship models at scale, not just who can build the strongest benchmark performer.

OpenAI has spent the past year locking in that advantage. On September 22, 2025, the company and NVIDIA announced a partnership to deploy at least 10 gigawatts of NVIDIA-powered AI datacenters. A new phase of its Microsoft partnership followed on April 27, according to OpenAI’s newsroom. Together, those moves point to a company building an ecosystem around compute, distribution and cloud capacity, not just model releases.

Anthropic, meanwhile, entered late April in a different position: it was in talks for a funding round that could value it at about $900 billion. That figure shows how much investors still value the company’s position in the market, even as the contest shifts toward infrastructure. For AI’s largest players, the next decisive battle may not be over who can talk most convincingly about model quality. It may be over who can buy, power and deploy enough compute to keep serving the market at scale.
