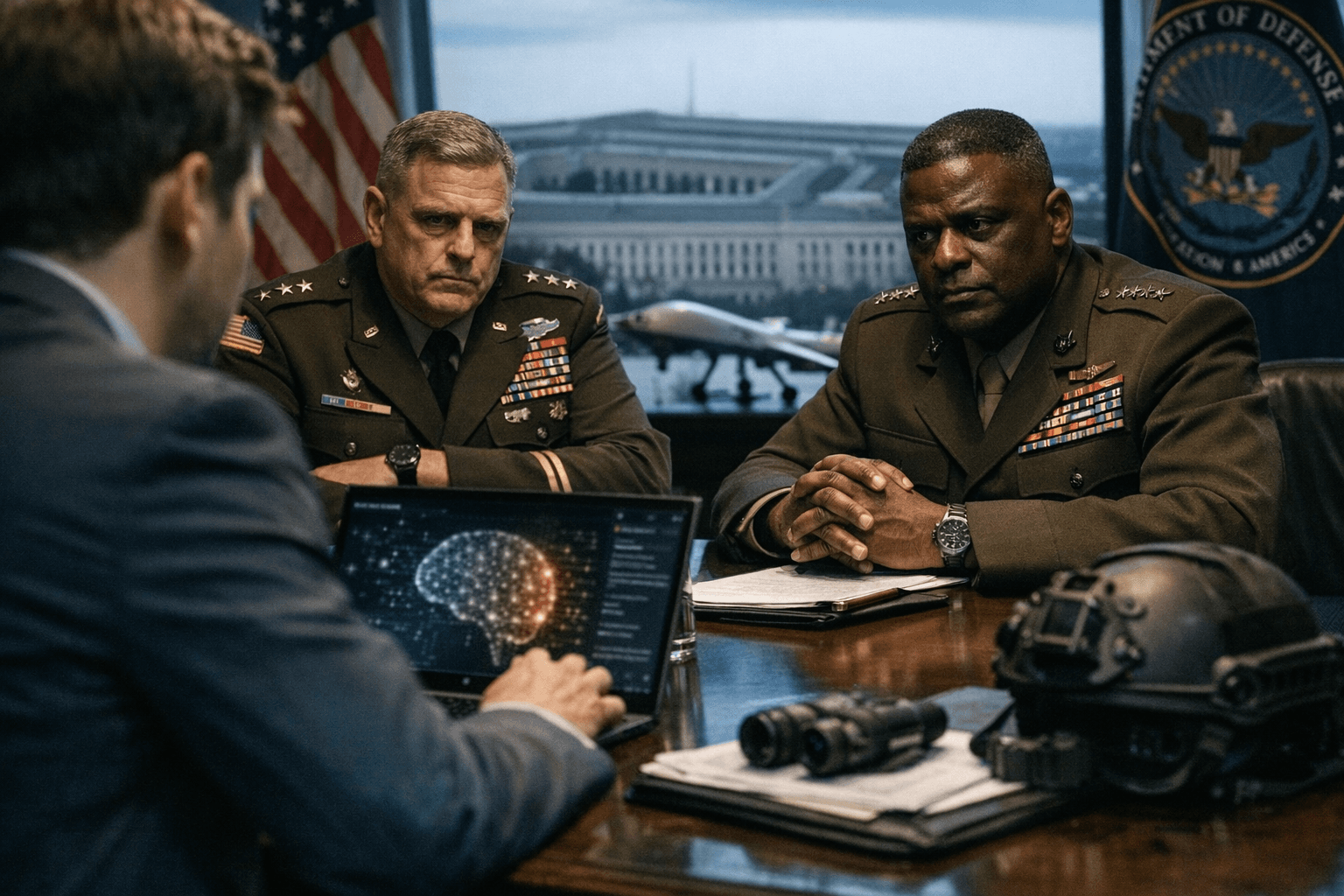

OpenAI reaches Pentagon deal to deploy models with explicit red lines

OpenAI said it will run its AI models inside Department of Defense classified networks under written prohibitions on mass domestic surveillance and autonomous weapons.

OpenAI announced that it has reached an agreement with the Department of Defense to deploy its AI models inside the Pentagon’s classified networks, promising written “red lines” and a multi-layered set of technical, personnel and contractual safeguards. In a post on X, Sam Altman, OpenAI’s chief executive, said the department, which he called the Department of War, showed “a deep respect for safety and a desire to partner to achieve the best possible outcome.”

OpenAI described the pact as enforcing explicit limits on how its systems can be used. The company said the contract bars its technology from being used for mass domestic surveillance, for directing autonomous weapons or for “high‑stakes automated decisions.” Altman highlighted two of what he called OpenAI’s most important safety principles: prohibitions on domestic mass surveillance and human responsibility for the use of force, including for autonomous weapon systems.

OpenAI characterized its protections as layered. “In our agreement, we protect our red lines through a more expansive, multi-layered approach. We retain full discretion over our safety stack, we deploy via cloud, cleared OpenAI personnel are in the loop, and we have strong contractual protections,” the company said. According to OpenAI, the pact will include technical guardrails on its systems, cloud-based deployment constraints and a requirement that cleared OpenAI staff remain involved in operations inside classified enclaves. The company also asserted that the agreement contains more guardrails than prior classified AI deployments.

The deal includes a Pentagon requirement that OpenAI allow its systems to be used “for any lawful purpose,” a clause the company accepted as part of the contract. The announcement came within hours of President Donald J. Trump directing federal agencies to stop using services from rival Anthropic, a company that has worked with the government since 2024. Defense Secretary Pete Hegseth had earlier threatened to label Anthropic a “supply chain risk” if the company refused to remove certain safety guardrails. Anthropic responded that “no amount of intimidation or punishment from the Department of War will change our position on mass domestic surveillance or fully autonomous weapons” and said it would “challenge any supply chain risk designation in court.”

Company statements noted OpenAI’s backing from major investors, including Microsoft, Amazon and SoftBank. The Department of Defense did not immediately respond to requests for comment.

Public health and equity advocates said the protections are consequential beyond military settings because technologies developed or normalized inside classified programs can later appear in domestic law enforcement, border control and health surveillance systems. Prohibitions on mass domestic surveillance are particularly significant for communities of color and low‑income neighborhoods that have historically faced disproportionate monitoring. Experts will be watching whether contractual prohibitions translate into enforceable audit rights, independent oversight and clear definitions of what counts as “high‑stakes” decisions.

Key gaps remain: the parties have not released the contract text, financial terms or detailed technical specifications of the safety stack. Journalists and civil society groups are likely to press for transparency about who holds clearances, how OpenAI personnel will operate inside classified networks, how audits will be conducted and what mechanisms exist to enforce the red lines if disagreements arise. Those answers will determine whether the agreement meaningfully limits harmful domestic uses or simply sets a new precedent for classified AI partnerships.
