OpenAI releases GPT-5.5 system card alongside model launch

OpenAI paired GPT-5.5 with a safety card the same day, turning the launch into a test of whether frontier AI can be disclosed before it is widely deployed.

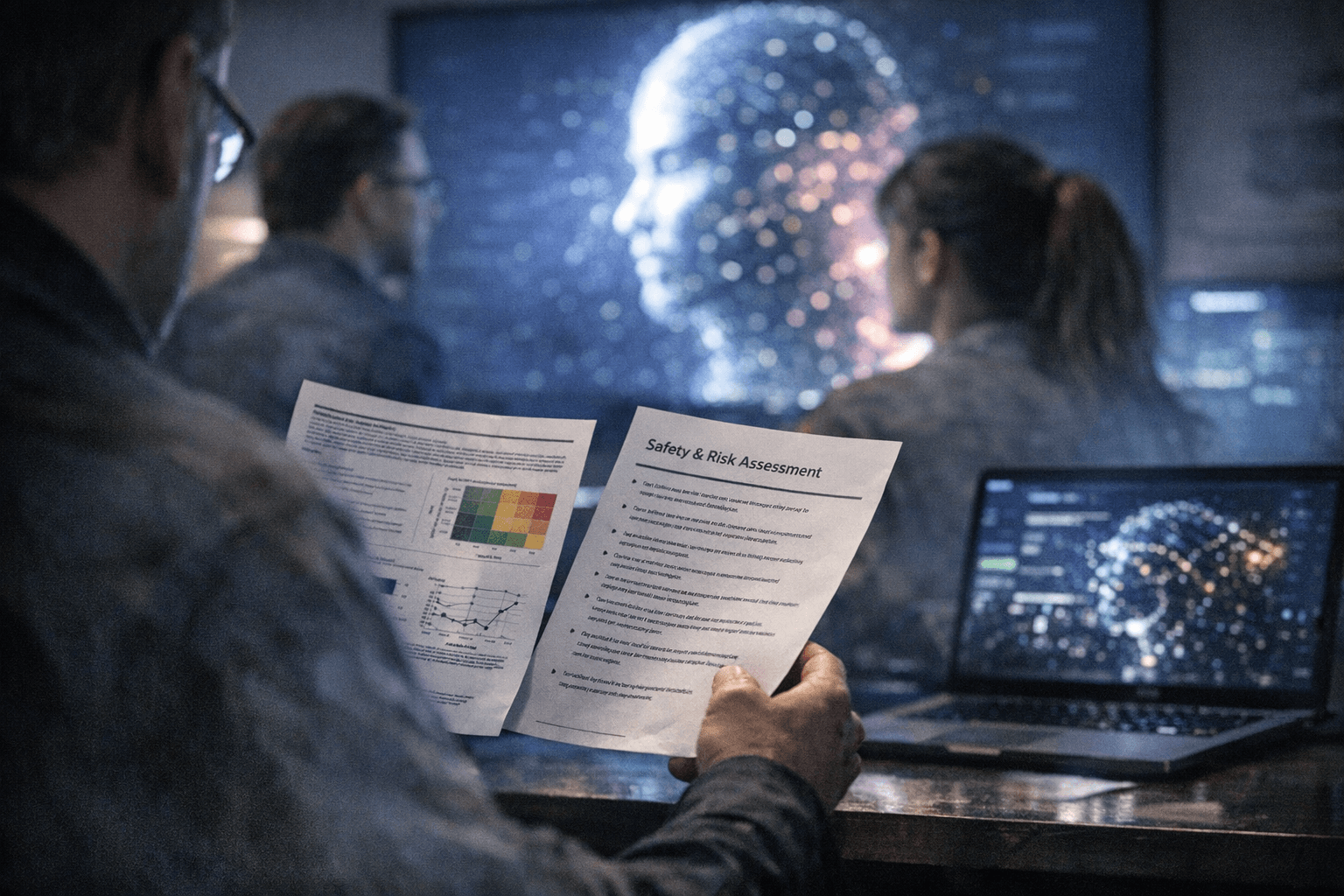

OpenAI did not just launch GPT-5.5. It paired the release with a system card that puts safety, behavior, and deployment risk in the center of the story, where frontier AI companies are increasingly expected to put them.

The card was published on April 23, 2026, and OpenAI updated it the next day to add safeguards for GPT-5.5 and GPT-5.5 Pro in the API. The timing matters. By folding the disclosure into the launch itself, OpenAI made the safety document part of the product announcement rather than a follow-up after adoption had already begun.

That is more than a paperwork exercise. OpenAI said GPT-5.5 was evaluated across its full safety and preparedness frameworks, with targeted red-teaming for advanced cybersecurity and biology capabilities. The company also said it gathered feedback from nearly 200 trusted early-access partners before release, a sign that the model was already being shaped for professional use before it reached the public API.

OpenAI described GPT-5.5 as especially strong in agentic coding, computer use, knowledge work, and early scientific research. It also said the model matched GPT-5.4 per-token latency in real-world serving while operating at a much higher level of intelligence, a claim meant to reassure enterprises that better performance would not come with a slower workflow penalty. OpenAI said GPT-5.5 and GPT-5.5 Pro would reach the API very soon after launch.

The launch materials also underscored how the company wants GPT-5.5 to be judged: by work output, not just chat quality. OpenAI reported scores of 82.7% on Terminal-Bench 2.0 and 84.9% on GDPval, along with results on OSWorld-Verified, Toolathlon, BrowseComp, FrontierMath, and CyberGym. Those benchmarks point to a model aimed at tool use, automation, and research tasks, the same areas where bad outputs can cause real operational and social harm if human oversight is weak.

The release landed about seven weeks after GPT-5.4, showing how quickly OpenAI is moving its model line. Public launch coverage said the company was pushing GPT-5.5 as better at coding, using computers, and deeper research, while Greg Brockman described it as a step toward more agentic and intuitive computing. NVIDIA said more than 10,000 of its employees had early access to GPT-5.5-powered Codex, a reminder that this is not just a consumer chatbot release but an enterprise deployment story.

For the AI industry, the system card is a transparency test. It reveals how OpenAI wants the model to be used, where it thinks the risks sit, and how much restraint it believes users still need. It also exposes the limits of voluntary disclosure: companies can publish safety language, but only sustained public scrutiny will show whether those safeguards are meaningful before regulators force the issue.
