OpenAI releases privacy filter to detect and redact personal data

OpenAI unveiled a local, open-weight privacy filter that redacts personal data, but the harder test is whether AI can catch every hidden identifier.

OpenAI has released a privacy filter aimed at one of the biggest barriers to AI adoption inside sensitive institutions: how to use large-scale text processing without exposing personal data. The company said the system is built to detect and redact personally identifiable information before text is stored, shared, indexed or routed into later processing, a pitch that puts privacy tooling closer to the center of the AI stack.

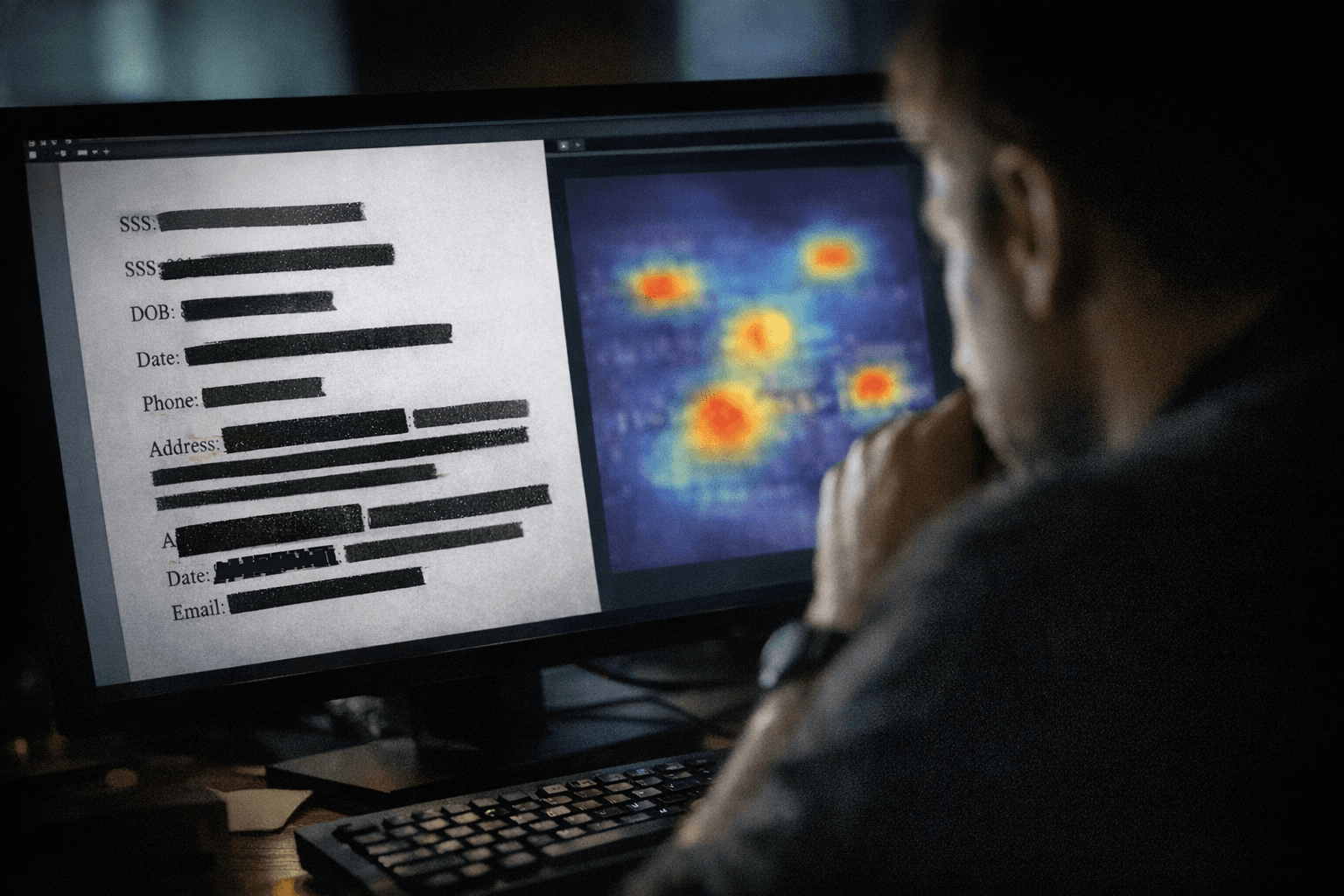

The model, called OpenAI Privacy Filter, is described in its model card as a bidirectional token-classification system for PII detection and redaction. OpenAI said it is designed for high-throughput workflows and can run locally, allowing masking to happen on-device instead of sending data elsewhere. That matters for hospitals, schools, law firms and government agencies, where intake forms, case notes, records requests and internal logs often move through text pipelines that can contain sensitive material.
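To make "token classification for redaction" concrete, here is a minimal sketch of the post-processing step such a system implies: per-token labels are merged into spans and replaced with placeholders. It does not call the released model. The `TokenPrediction` type, the label names `NAME` and `PHONE`, and the hand-written predictions are all assumptions for illustration, not OpenAI's actual taxonomy or output format.

```python
from dataclasses import dataclass

@dataclass
class TokenPrediction:
    start: int   # character offset where the token begins
    end: int     # character offset just past the token
    label: str   # "O" for non-PII, otherwise a hypothetical category name

def merge_spans(preds):
    """Merge neighboring tokens with the same PII label into (start, end, label) spans."""
    spans = []
    for p in preds:
        if p.label == "O":
            continue
        # Treat tokens separated by at most one character (e.g. a space) as one span.
        if spans and spans[-1][2] == p.label and p.start - spans[-1][1] <= 1:
            spans[-1] = (spans[-1][0], p.end, p.label)
        else:
            spans.append((p.start, p.end, p.label))
    return spans

def redact(text, preds):
    """Replace each merged PII span with a bracketed placeholder."""
    out, cursor = [], 0
    for start, end, label in merge_spans(preds):
        out.append(text[cursor:start])
        out.append(f"[{label}]")
        cursor = end
    out.append(text[cursor:])
    return "".join(out)

text = "Call Maria Lopez at 555-0100."
# Hand-written stand-in for what a token classifier might emit (assumed, not real output).
preds = [
    TokenPrediction(0, 4, "O"),
    TokenPrediction(5, 10, "NAME"),
    TokenPrediction(11, 16, "NAME"),
    TokenPrediction(17, 19, "O"),
    TokenPrediction(20, 28, "PHONE"),
    TokenPrediction(28, 29, "O"),
]
redacted = redact(text, preds)
print(redacted)
```

Because the masking is a pure function of the text and the predicted labels, it can run wherever the classifier runs, which is what makes the on-device claim plausible for a pipeline of this shape.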

OpenAI said the released version has 1.5 billion total parameters, about 50 million active parameters and a 128,000-token context window. It is being distributed under the Apache 2.0 license through GitHub and Hugging Face, and OpenAI said it can be fine-tuned for specific use cases and plugged into training, indexing, logging and review systems. The company also said the model reached state-of-the-art performance on the PII-Masking-300k benchmark after the company corrected annotation issues it had identified during evaluation.

The privacy taxonomy goes beyond obvious contact fields. OpenAI said it includes personal identifiers, contact details, addresses, private dates, account numbers such as credit and banking information, and secrets including API keys and passwords. That broader scope reflects the weakness of older rule-based tools, which can catch formats like phone numbers and email addresses but miss more subtle references that only make sense in context.
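The gap between format matching and contextual detection can be illustrated with a small rule-based baseline. This is a sketch only: the two regular expressions are deliberately simplified assumptions, not a production pattern set, and the sample sentence is invented.

```python
import re

# Simplified, illustrative patterns; real email and phone formats vary far more widely.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE = re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b")

def rule_based_redact(text: str) -> str:
    """Mask anything that matches a known format; nothing else is touched."""
    text = EMAIL.sub("[EMAIL]", text)
    text = PHONE.sub("[PHONE]", text)
    return text

sample = (
    "Reach Dana at dana@example.org or 555-010-4477. "
    "She is the only nurse on the night shift in ward 4."
)
rule_redacted = rule_based_redact(sample)
print(rule_redacted)
```

The email address and phone number are masked, but the first name and the description "the only nurse on the night shift in ward 4" pass through unchanged, even though together they could single out one person. That kind of contextual identifier is what a pattern list cannot express.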

OpenAI said it already uses a fine-tuned version of the model internally in privacy-preserving workflows. Its privacy materials also say the company uses a custom-built internal tool to detect and mask personal information during training-data filtering, and that it disassociates ChatGPT conversations from user accounts before using them for model improvement. The release presents privacy not as an afterthought, but as part of the infrastructure required for enterprise AI to scale.

Still, the central question remains whether any automated filter can catch every piece of personally identifiable information in fast-moving institutional systems. OpenAI’s model card describes failure modes and includes cautions for high-risk deployments, signaling that the company sees the tool as a layer of defense, not a guarantee. For institutions handling medical, financial or personal records, that distinction may determine how far AI can move from pilot projects into daily operations.
