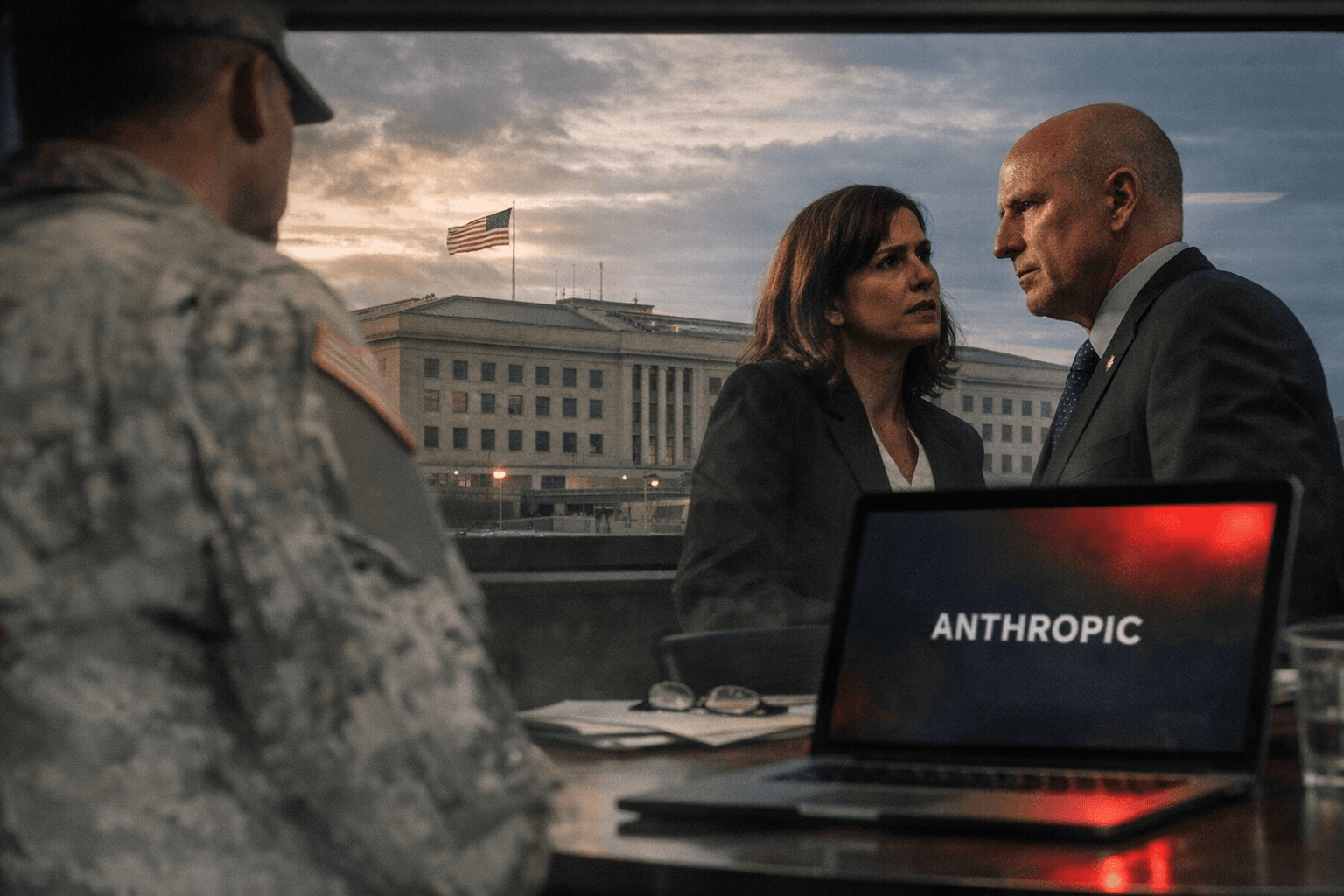

Pentagon designates Anthropic a supply-chain risk, bars Claude on military contracts

The Pentagon barred government contractors from using Anthropic’s Claude on U.S. military work, effective immediately; Anthropic says it will sue and insists the restriction is narrow.

The Department of Defense formally designated Anthropic PBC and its Claude artificial intelligence products a “supply-chain risk,” effective immediately, and said the move prevents government contractors from using the company’s technology on U.S. military contracts. The action crystallizes a months-long clash between Pentagon officials pressing for unfettered access to critical AI capabilities and Anthropic executives who imposed guardrails aimed at blocking autonomous weapons development and mass surveillance.

Anthropic CEO Dario Amodei said the company received a formal letter designating it a risk and that the company will pursue legal remedies. “We do not believe this action is legally sound, and we see no choice but to challenge it in court,” he wrote. Amodei also described the designation as having a narrow scope and argued that it does not and cannot limit uses of Claude unrelated to specific Department of Defense contracts. “The law requires the Secretary of War to use the least restrictive means necessary to accomplish the goal of protecting the supply chain,” he added in his statement.

The Pentagon defended the designation in blunt terms. “The military will not allow a vendor to insert itself into the chain of command by restricting the lawful use of a critical capability and put our warfighters at risk,” a Defense Department statement said, framing the dispute as one about military access and operational control.

Procurement officials and industry analysts say the designation is likely the most consequential use of the supply-chain authority against a U.S.-based AI firm, raising questions about when and how Washington will deploy buying-power rules to shape technology access. The designation, as announced, bars contractors from incorporating Claude in work for the military but does not, according to Anthropic, prohibit commercial or non-military uses of the model.

The dispute centers on Anthropic’s policy decisions to ban certain defense-related applications. Company officials have declined to give defense agencies what they called “unfettered access” to tools that could enable autonomous weapons or mass surveillance of U.S. citizens. Pentagon lawyers countered that vendors should not be permitted to restrict lawful military uses of critical capabilities.

Industry sources say some defense systems had already incorporated prompts and workflows developed with Claude, creating immediate operational questions for contractors and prime integrators. The decision also carries diplomatic risk: procurement advisers warn that a U.S. government move against a domestic supplier could complicate sales to foreign partners and prompt shifts to alternative vendors seen as more compliant.

The designation has drawn political heat. Senator Kirsten Gillibrand called the step “shortsighted, self-destructive, and a gift to our adversaries,” and added, “The government openly attacking an American company for refusing to compromise its own safety measures is something we expect from China, not the United States.”

Anthropic also pushed back with data about its consumer momentum, noting that “more than a million people” are signing up for Claude every day and that the model is “the most downloaded AI app in several countries.” Those figures underscore the broader stakes: a widely adopted commercial technology is now at the center of an unprecedented legal and policy fight over national security access and corporate safety standards.

With Anthropic preparing to sue and the Pentagon asserting immediate restrictions, the case appears headed for a courtroom test of procurement authority and the limits of corporate-imposed AI guardrails.
