Regulators lag behind banks in AI adoption, raising oversight fears

Banks have raced ahead on AI while regulators still lack basic adoption data, leaving blind spots in model risk, surveillance and consumer protection.

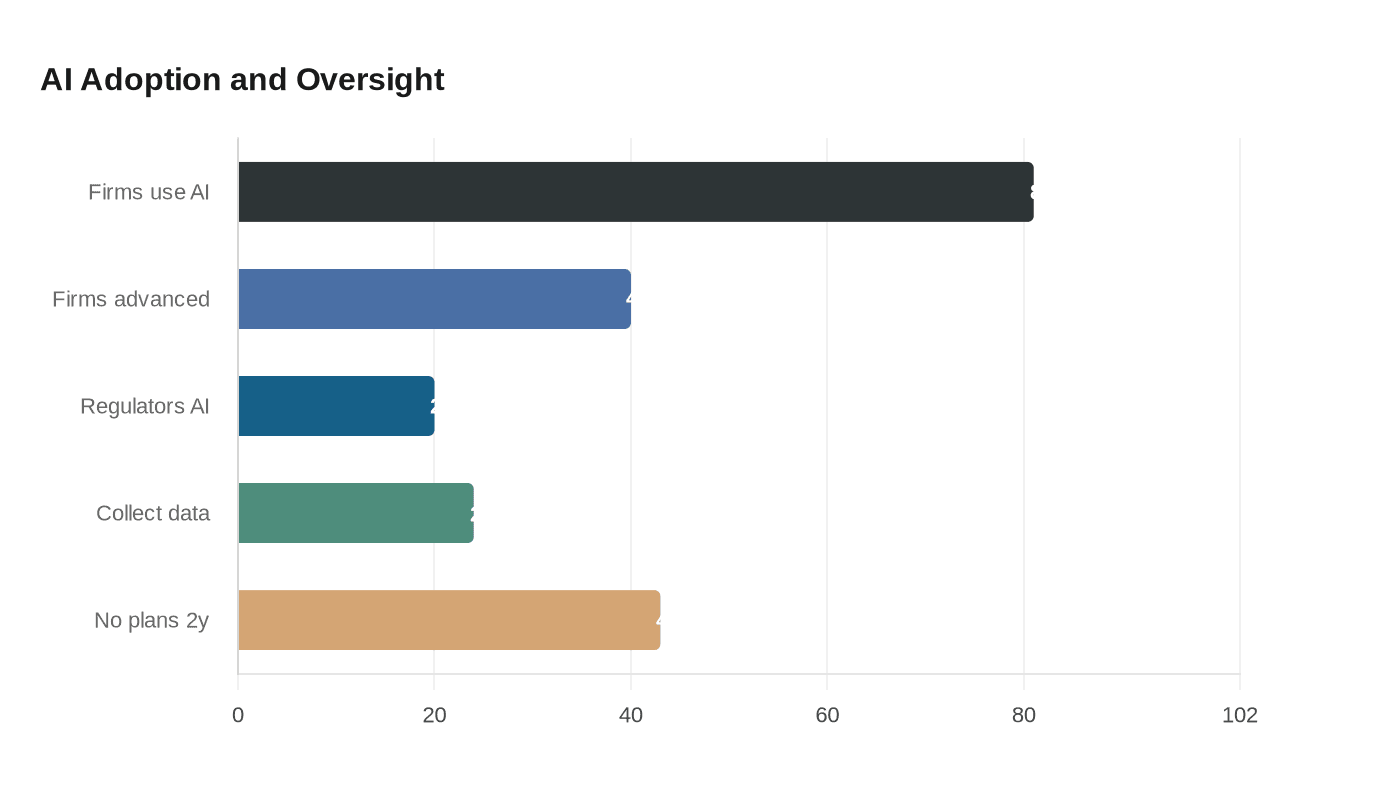

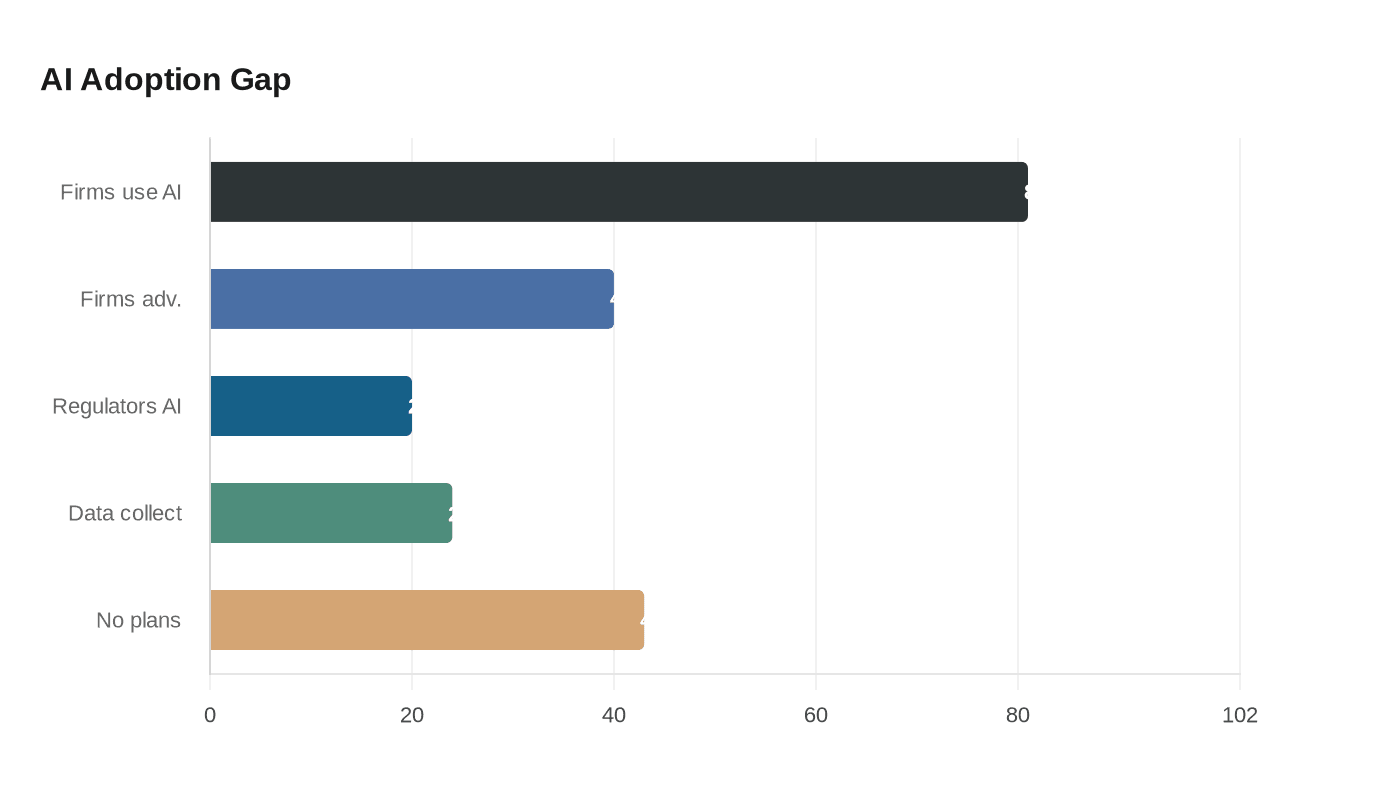

Financial firms are moving into artificial intelligence far faster than the agencies meant to police them, and the gap is already visible in the numbers. The Cambridge Centre for Alternative Finance’s 2026 Global AI in Financial Services Report found 81% of surveyed firms were using AI at some level, with 40% reporting advanced adoption, compared with just 20% of regulators. That divide matters because supervisors are being asked to monitor systems they do not yet fully understand, while the industry keeps layering AI into lending, trading, compliance and customer service.

The deeper problem is not only slower adoption inside the public sector. Only 24% of authorities surveyed said they collect data on industry AI adoption, and 43% had no plans to start within the next two years. That leaves major blind spots in model risk, market surveillance, consumer harm and compliance, precisely the areas where errors can cascade from a single bank to the broader financial system. The CCAF study, produced at Cambridge Judge Business School with partners including the Bank for International Settlements, the International Monetary Fund, the World Economic Forum, the Inter-American Development Bank, CGAP and the Arab Monetary Fund, gives the warning unusual policy weight.

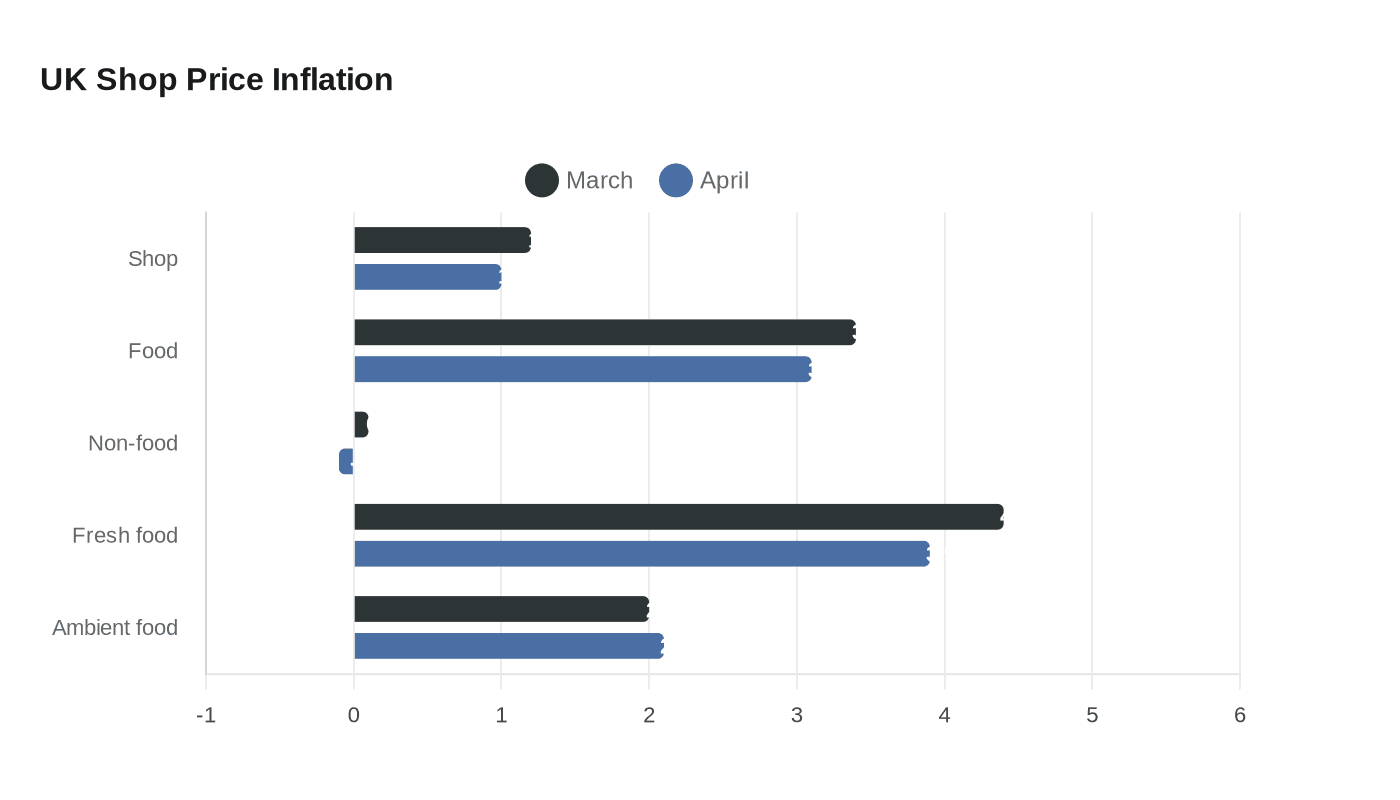

The regulatory lag is not new, but it is becoming harder to defend as adoption accelerates. The Bank of England and Financial Conduct Authority’s third AI and machine-learning survey in UK financial services, published on November 21, 2024, found 75% of firms were already using AI and another 10% planned to use it within three years. It also found foundation models accounted for 17% of AI use cases and that a third of AI use cases were third-party implementations, a reminder that many institutions are outsourcing critical decision-making tools to vendors they may not fully control.

That dependence heightens concerns already flagged by the Bank for International Settlements, which said most financial authorities had not issued AI-specific rules for firms and were still relying on existing frameworks. The BIS pointed to governance, expertise, data governance and third-party AI providers as areas that needed much more attention. The IMF has also warned that AI in securities markets could introduce data, performance and cybersecurity risks, while supervisory capacity remains uneven across advanced economies and emerging markets.

The pressure point is no longer hypothetical. Anthropic’s Claude Mythos Preview, described in its system card dated April 7, 2026, as the company’s most capable frontier model, is available only through a limited defensive cybersecurity program and has not been released generally. Anthropic’s Project Glasswing, launched the same day, brought in JPMorganChase, Microsoft, Google, Cisco, Amazon Web Services and Apple, and extended access to more than 40 additional organizations, alongside up to $100 million in usage credits and $4 million in donations to open-source security groups. That kind of firepower is moving into the financial sector now, while regulators are still struggling to measure what banks are actually deploying. The next failure may not come from AI alone, but from supervisors learning too late where it was embedded.

