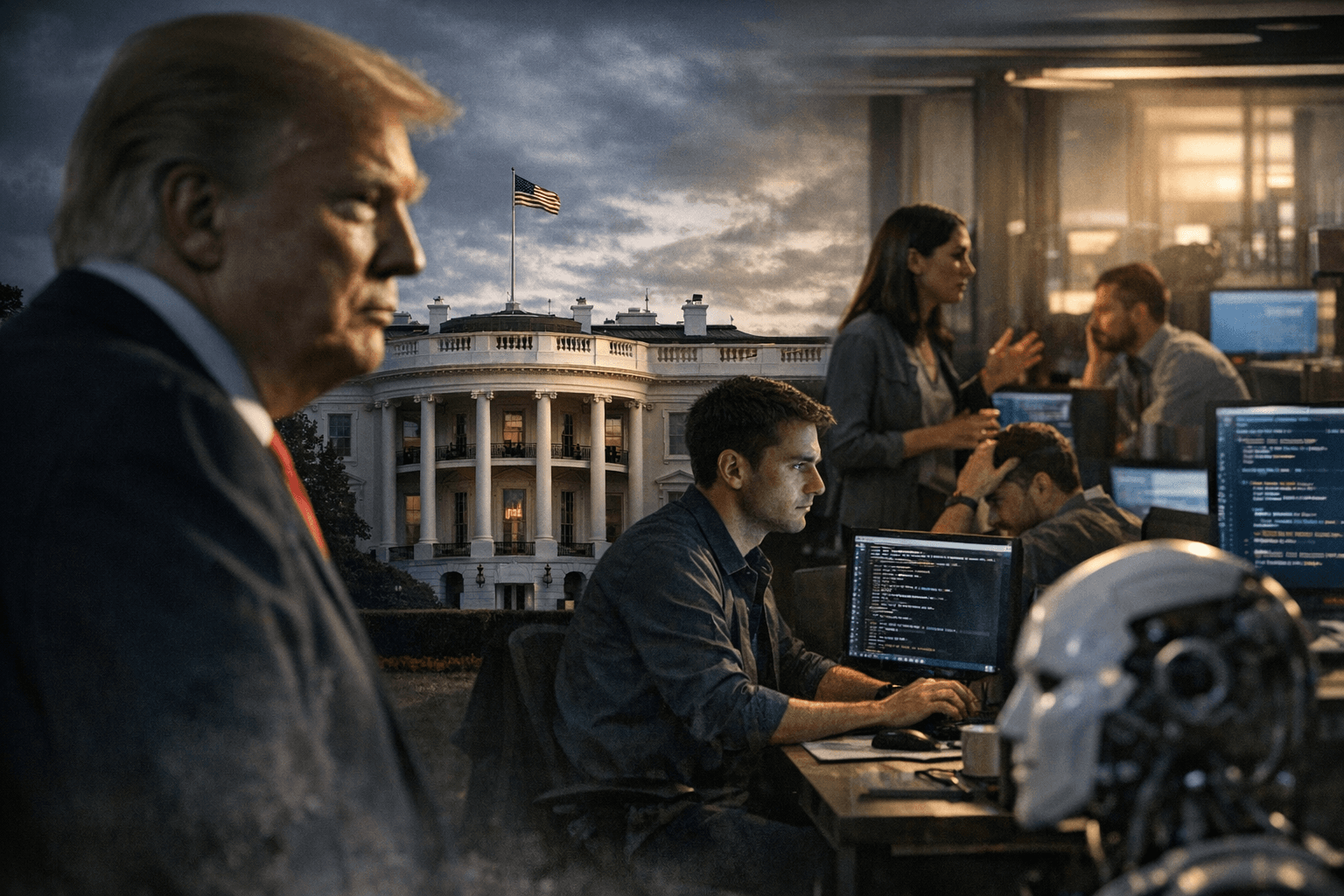

Trump administration bans Anthropic AI from federal systems, prompting Silicon Valley backlash

The White House ordered agencies to stop using Anthropic’s AI and threatened penalties, prompting engineers, investors and employees of rival firms to publicly back the startup, which has vowed to challenge any supply chain risk designation in court.

The White House ordered federal agencies to stop using Anthropic’s artificial intelligence technology and signaled potential penalties, setting off an unprecedented public clash with Silicon Valley in which tech workers and investors rallied behind the startup.

President Donald J. Trump framed the move on his social platform, saying “We don’t need it, we don’t want it, and will not do business with them again!” and declaring that “The United States of America will never allow a radical left, woke company to dictate how our great military fights and wins wars!” White House posts also told most agencies to halt use immediately while giving the Pentagon a six-month period to phase out tools already embedded in military platforms. That split left the scope of the ban, and any carve-outs for defense systems, ambiguous.

Anthropic, led by chief executive Dario Amodei, pushed back. Amodei has said the company does not want its AI to be used to surveil Americans or in autonomous weapons, warning those uses could “undermine, rather than defend, democratic values.” In a public statement, Anthropic said, “No amount of intimidation or punishment from the Department of War will change our position on mass domestic surveillance or fully autonomous weapons.” The company added that it would “challenge any supply chain risk designation in court,” signaling likely litigation if the administration presses formal penalties.

The confrontation followed months of private talks between Anthropic and Pentagon officials that collapsed into a public dispute this week when contract language and assurances became a flashpoint. Anthropic says it sought narrow guarantees that Claude, its flagship model, would not be repurposed for domestic mass surveillance or for fully autonomous weapons. The company contends new contract wording, while “framed as compromise,” included legal language that would permit those safeguards to be disregarded at will.

Silicon Valley’s reaction was swift and visible. More than 100 engineers at Google signed a petition urging the company to “refuse to comply” with Pentagon uses of AI in certain operations, and employees at Amazon, Google and Microsoft urged their leaders in an open letter to “hold the line” against military demands they view as overbroad. Open letters from groups of engineers warned that “The Pentagon is negotiating with Google and OpenAI to try to get them to agree to what Anthropic has refused” and that “They’re trying to divide each company with fear that the other will give in.”

Venture capitalists and prominent AI scientists also voiced support for Anthropic, marking a rare moment of broad industry solidarity with a small startup against the federal government.

The dispute also has competitive implications for suppliers to the Pentagon. The administration’s move may advantage rival offerings, including Elon Musk’s chatbot Grok, which the Pentagon plans to allow onto classified military networks. Musk posted on X that “Anthropic hates Western Civilization,” aligning publicly with the administration.

The showdown revives earlier tensions over tech companies’ work with the U.S. military, recalling employee protests in 2018 over Pentagon use of corporate AI. For now, how the conflict unfolds will depend on the exact text of the administration’s directive and the contested contract clauses. Anthropic’s vow to litigate and the broad employee resistance inside major tech firms suggest the dispute will move quickly from social media to courtrooms and procurement offices.
