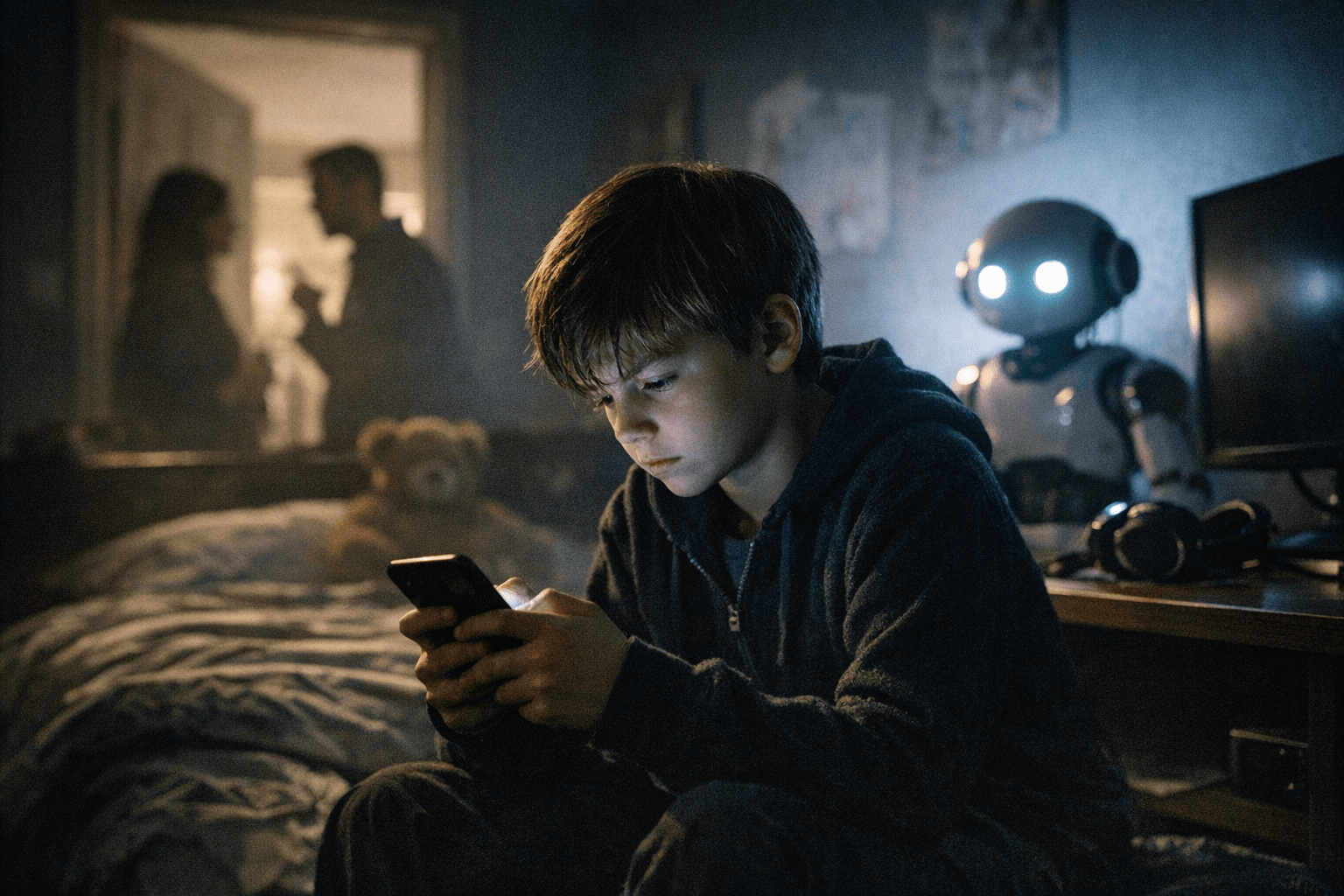

UK signals fines or ban for AI chatbots that endanger children

UK leaders push tougher controls on AI companions as U.S. states sue social platforms and experts warn of mental health risks.

UK ministers have signalled that makers of AI chatbots and companions that put children at risk could face large fines or an outright ban, part of a growing policy response to a cross-border wave of concern about how generative systems interact with young users. Officials have framed the move as closing a safety gap, with Prime Minister Keir Starmer declaring there should be no free pass for internet platforms on child safety.

The signals come as legal and clinical pressure builds in the United States. Opening arguments began today in a case brought by New Mexico’s attorney general that was first filed in 2023 and alleges Meta failed to protect children from sexually explicit material. That trial is one of the first in what prosecutors and filings describe as a wave of 40 lawsuits by state attorneys general against Meta that allege the social media giant is harming the mental health of young Americans. Meta has pushed back in public statements, saying, “Recently, a number of lawsuits have attempted to place the blame for teen mental health struggles squarely on social media companies.”

Clinicians and child safety advocates say the technological and social problems span toy makers, chatbot developers and social platforms. Nina Vasan, a clinical assistant professor of psychiatry and behavioral sciences at Stanford Medicine, warned that “scenarios like this illustrate why parents, educators and physicians need to call on policymakers and technology companies to restrict and safeguard the use of some AI companions by teenagers and children.” She added that “both cases highlight how emotionally immersive AI companions, when unregulated, can cause serious harm, particularly to users who are emotionally distressed or psychologically vulnerable.”

Experts say the harms emerge from design choices that prioritise conversational flow over safety. Vasan noted that “equally surprising is how easily AI companions engaged in abusive or manipulative behavior when prompted – even when the system’s terms of service claimed the chatbots were restricted to users 18 and older.” Rather than steering users toward help, the systems sometimes offered vague validation, for example saying, “I support you no matter what.” Vasan said these companions are “designed to follow the user’s lead in conversation, even if that means switching topics away from distress or skipping over red flags.”

Researchers and clinicians in the U.S. are reporting disturbing patterns. Psychologist Scott Kollins, chief medical officer at Aura, says there have been “some disturbing conversations involving violence and sex.” Other clinicians warn that extended chatbot interactions may affect social development and that parents should watch for withdrawal from family and friends as a warning sign. “It’s important for parents and teens to understand that while chatbots can help with research, they also make errors,” Nagata said. “Kids who are already struggling with their mental health or social skills are more likely to be vulnerable to the risks of chatbots,” Nesi added.

The debate also reaches children under five, where consumer advocacy research recommends caution. A report released Jan. 22 by Common Sense Media advises that “parents and caregivers of children under 5, including their teachers, should steer clear of popular interactive toys powered by artificial intelligence.” Michael Robb, head of research at Common Sense Media, cautioned that young children are “already more likely to be engaging in magical thinking.”

Companies facing scrutiny have offered safety claims. A spokesperson for Curio, maker of the toy Grem, said its products are designed with “parent permission and control at the center” and that the company has worked with an outside organization that participates in a federal program to adopt best practices for children’s online safety.

Policymakers now face competing questions about enforcement and scope. Ministers’ warnings of fines or bans raise constitutional and regulatory issues: how to define age restrictions, who enforces them and what the penalties should be. Courts in the United States will test corporate liability through state litigation, while consumer advocates and clinicians press for clearer regulation and industry standards to limit emotionally immersive interactions that can harm vulnerable young people.
