University of Zurich Debuts Event-Aided NeRF for Fast-Flying Drone Reconstruction

Pilots facing motion blur at high speed get a new tool: UZH's event-aided NeRF reconstructs sharp scenes from footage captured by drones flying up to 2 m/s, and its authors report a performance gain of over 50% on real-world data compared to state-of-the-art methods.

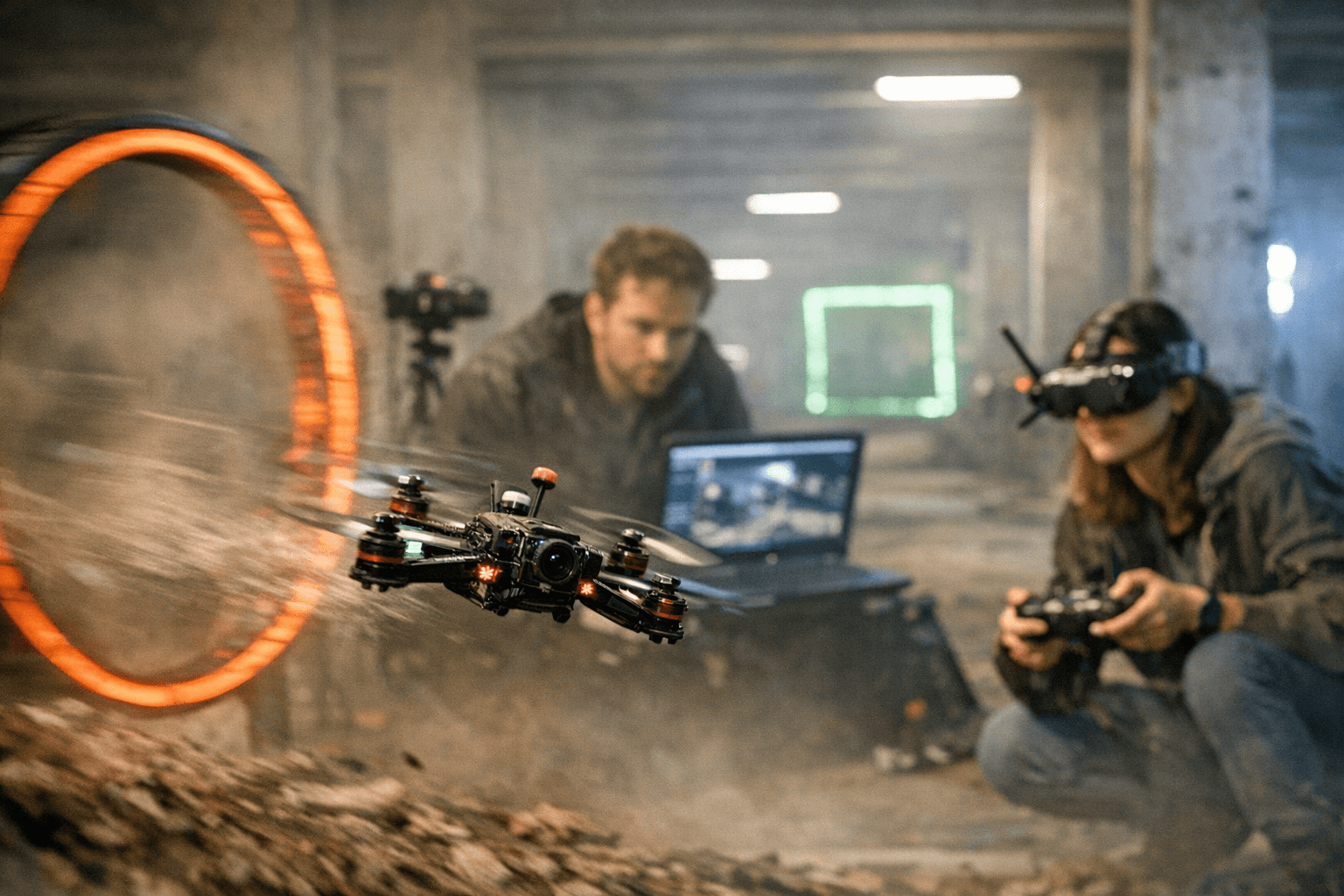

When an FPV pilot leans into an aggressive line and the camera feed smears, reconstruction and mapping pipelines tend to fail. University of Zurich researchers address that exact failure mode with a NeRF-based system that recovers sharp, photorealistic scene representations from motion-blurred RGB frames and asynchronous event streams for drones flying up to 2 m/s. The team calls the work Event-Aided Sharp Radiance Field Reconstruction for Fast-Flying Drones and says it is key for high-speed drone missions including racing.

The paper, published in IEEE Transactions on Robotics (T-RO), 2026, lists Rong Zou, Marco Cannici, and Davide Scaramuzza as authors, with Rong Zou and Marco Cannici noted as equal contributors. Institutional and funding credits in the project materials include UZH Department of Informatics, UZH Innovation Hub, Switzerland Innovation Park Zurich, NCCR Robotics, the European Research Council, AUTOASSESS, CSCS, PROPHESEE, iniVation, SynSense, and AlpsenTek.

The system’s architecture tightly fuses the two sensing modalities rather than processing them separately. The project text states, "We propose a unified event-aided NeRF framework tailored to agile aerial robots operating at high speeds. The method tightly integrates asynchronous event streams and motion-blurred image frames directly into radiance field optimization, while simultaneously refining noisy pose priors obtained from event-based visual–inertial odometry. This design enables sharp and geometrically consistent radiance field reconstruction even under aggressive drone maneuvers." The authors implement a shared, learnable camera trajectory module built on a continuous-time formulation. Because events and frames are both rendered from the same trajectory, each modality constrains the other, and the noisy pose priors are refined during optimization.
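To make the idea of a shared continuous-time trajectory more concrete, here is a minimal NumPy sketch. It is not the authors' code and does not reflect what is in the repository. Everything in it is an assumption made for illustration: the function names, the linear interpolation between trajectory knots, the position-only pose (rotation is omitted), the toy 1D `render_sharp` standing in for a radiance field, and the contrast threshold of 0.2. It uses two formation models that are common in the event-camera literature: a blurred frame modeled as the average of sharp renders over the exposure, and accumulated events modeled as a change in log intensity scaled by a contrast threshold.

```python
import numpy as np

def camera_position(t, knot_times, knot_positions):
    """Continuous-time pose lookup (assumed: linear interpolation between knots)."""
    t = np.atleast_1d(np.asarray(t, dtype=float))
    cols = [np.interp(t, knot_times, knot_positions[:, k])
            for k in range(knot_positions.shape[1])]
    return np.stack(cols, axis=-1)  # shape (len(t), dims)

def render_sharp(position, n_pixels=64):
    """Toy stand-in for a radiance field render: a 1D sinusoid shifted by camera x."""
    x = np.linspace(0.0, 1.0, n_pixels)
    return 0.5 + 0.4 * np.sin(2.0 * np.pi * (x + position[0]))

def synthesize_blurred_frame(t_open, t_close, knot_times, knot_positions, n_samples=16):
    """Blur model: average sharp renders at poses sampled across the exposure."""
    times = np.linspace(t_open, t_close, n_samples)
    positions = camera_position(times, knot_times, knot_positions)
    return np.mean([render_sharp(p) for p in positions], axis=0)

def predicted_log_change(t0, t1, knot_times, knot_positions, eps=1e-6):
    """Event model: log-intensity difference between two timestamps."""
    p0, p1 = camera_position([t0, t1], knot_times, knot_positions)
    return np.log(render_sharp(p1) + eps) - np.log(render_sharp(p0) + eps)

def combined_loss(blurred_obs, event_count_obs, t_open, t_close,
                  knot_times, knot_positions, contrast_threshold=0.2):
    """Both terms read poses from the same knots, so both constrain the trajectory."""
    frame_pred = synthesize_blurred_frame(t_open, t_close, knot_times, knot_positions)
    frame_term = np.mean((frame_pred - blurred_obs) ** 2)
    log_pred = predicted_log_change(t_open, t_close, knot_times, knot_positions)
    # Signed per-pixel event counts times the threshold approximate the log change.
    event_term = np.mean((log_pred - contrast_threshold * event_count_obs) ** 2)
    return frame_term + event_term

# Usage: observations generated from a "true" trajectory, scored against a noisy prior.
knot_times = np.array([0.0, 1.0])
true_knots = np.array([[0.0, 0.0, 0.0], [0.2, 0.0, 0.0]])
noisy_knots = np.array([[0.05, 0.0, 0.0], [0.3, 0.0, 0.0]])
blurred_obs = synthesize_blurred_frame(0.0, 1.0, knot_times, true_knots)
event_count_obs = predicted_log_change(0.0, 1.0, knot_times, true_knots) / 0.2
loss_true = combined_loss(blurred_obs, event_count_obs, 0.0, 1.0, knot_times, true_knots)
loss_noisy = combined_loss(blurred_obs, event_count_obs, 0.0, 1.0, knot_times, noisy_knots)
print(loss_true < loss_noisy)
```

In a real pipeline the knots would be learnable parameters updated by gradient descent together with the radiance field, which is how a noisy pose prior could be pulled toward the trajectory that explains both the blurred frames and the events.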

The release reports validation on both synthetic data and real-world drone sequences recorded at flight speeds up to 2 m/s. It also introduces a new dataset; the project description states, "To support reproducibility and benchmarking, we introduce the first drone dataset for radiance field reconstruction under fast motion, featuring synchronized motion-blurred RGB images and event data captured with a beamsplitter-based setup."

On outcomes, the team reports robust qualitative and quantitative gains: "We validate our method on synthetic data and on real sequences captured by a drone flying up to 2 m/s. Despite severe blur and noisy pose priors, our method preserves fine scene details and achieves a performance gain of over 50% on real-world data compared to state-of-the-art methods." The materials also claim the approach significantly outperforms existing NeRF-based deblurring baselines where conventional pipelines fail due to motion blur and pose drift.

Code and assets are available in the GitHub repository uzh-rpg/event-sharp-nerf-drones, which lists the folders assets, configs, data, networks, poses, and utils, along with files including README.md, environment.yml, options.py, and run_nerf.py. The project recommends using the miniforge conda distribution with the provided environment.yml to install dependencies. With that stack and the new beamsplitter dataset, UZH frames this work as a practical step toward sharper reconstruction and more reliable mapping for agile aerial robots and racing scenarios where every frame of clarity matters.
