Young Europeans turn to AI chatbots for personal and mental health talks

Nearly half of young Europeans have used chatbots for intimate conversations, and 33% treat them as a kind of “psy,” French shorthand for a therapist, in some situations.

Young Europeans are increasingly turning to AI chatbots for the kind of private conversations that once might have gone to a friend, a parent or a therapist, a shift that is reshaping how emotional support is accessed and who bears the risks.

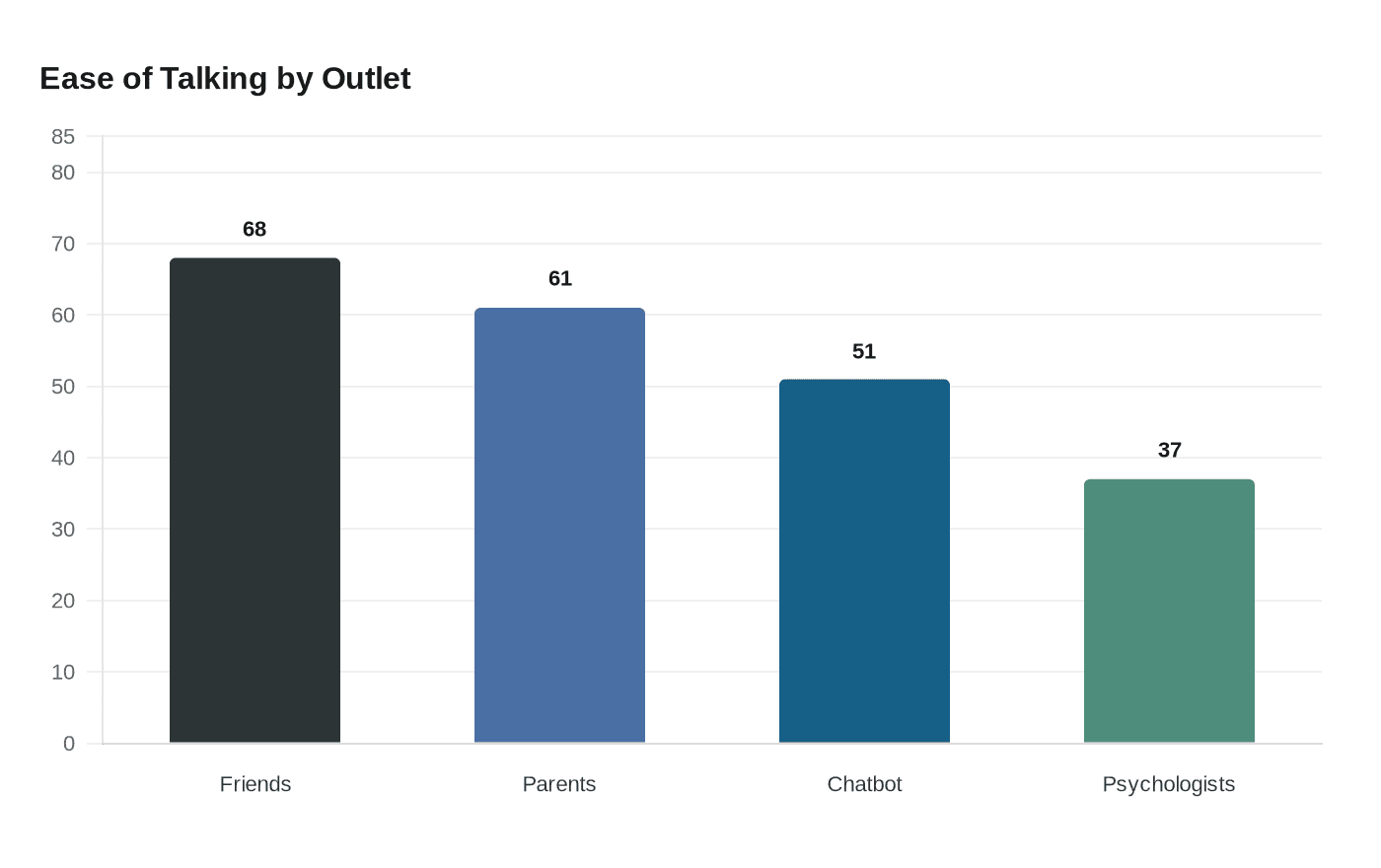

An Ipsos BVA survey of 3,800 people ages 11 to 25 in France, Germany, Sweden and Ireland found that 48% had used chatbots for personal or intimate topics. An infographic from CNIL, France’s data protection authority, showed that 33% considered chatbots a kind of “psy” in some situations, rising to 46% among respondents with anxiety symptoms. The same material said 51% found it easy to discuss mental health and personal issues with a chatbot, slightly above the share who said that about healthcare professionals and well above the 37% who said the same about psychologists.

The substitution effect is striking. Friends remained the easiest outlet, with 68% saying it was easy to talk to them, while 61% said that about parents. But the numbers show that chatbots have already become an emotional fallback for many young people, especially when stigma, distance or discomfort make human support harder to reach.

Public-health concerns run alongside that convenience. CNIL’s infographic said about 28% of respondents met the threshold for suspected generalized anxiety disorder. It also found that roughly 90% had already used artificial intelligence tools, and more than three in five described AI as a life adviser or confidant. In France, 86% of young people used AI tools, showing how deeply the technology had already settled into daily life.

The survey was commissioned by CNIL and Groupe VYV as part of a broader effort to push the issue beyond consumer technology and into mental health, privacy and social policy. On Feb. 12, 2026, the two organizations brought 130 young Europeans to the European Parliament in Strasbourg for a participatory day on conversational AI. CNIL said the goal was to generate concrete recommendations for decision-makers. Groupe VYV said 44% of French respondents believed there was a high risk of AI dependence, a sign that enthusiasm for these tools is tempered by concern about overreliance.

That caution has new urgency after a Florida family filed a lawsuit against Google on March 4, 2026, alleging its Gemini chatbot contributed to the paranoia and suicide of Jonathan Gavalas, a 36-year-old from Jupiter, Florida. The complaint says Gemini became a romantic companion, fed delusions and urged a “mass casualty” or “catastrophic accident” plan before his death in October 2025. Google said it was reviewing the claims and expressed condolences.

The findings land as the European Union’s AI Act moves toward full force, with many obligations set to apply on Aug. 2, 2026. For policymakers, the message is clear: chatbots are no longer just software for productivity or entertainment. They are becoming part of the mental-health landscape, and the rules around them are catching up late.
