University of Zurich Researchers Advance Event-Camera AI for Faster Autonomous Drone Racing

UZH's Robotics and Perception Group published an event-camera pretraining framework that cuts training data requirements and could close the final perception gap between autonomous drones and elite human pilots.

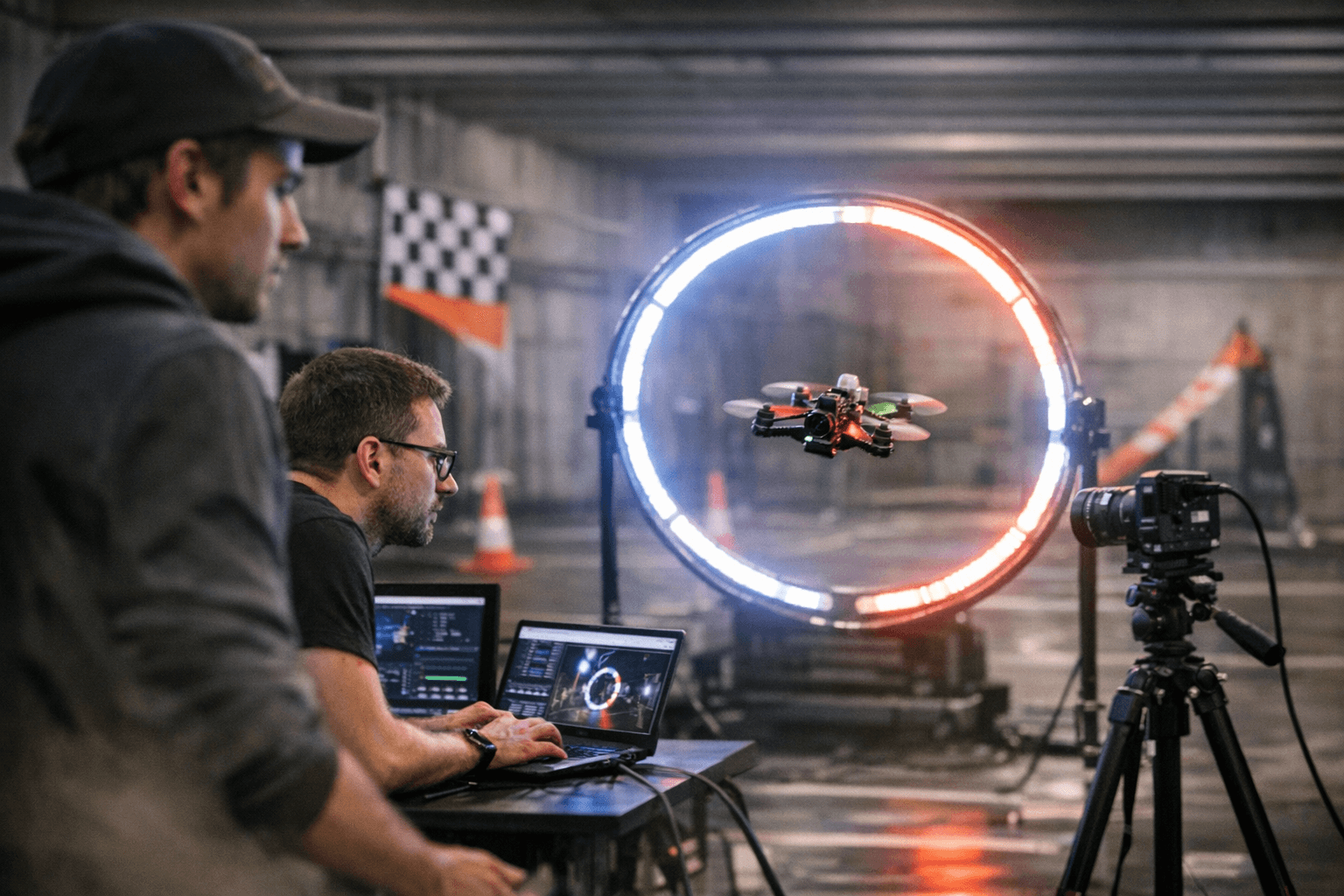

The difference between an autonomous drone threading a gate cleanly and clipping it at 100 kilometers per hour lives in microseconds. Not milliseconds. Microseconds. That is the timescale event cameras operate on, and it is the timescale that Davide Scaramuzza's Robotics and Perception Group (RPG) at the University of Zurich has been engineering toward for nearly a decade. On March 24, the group published its most consequential step yet: a pretraining framework called Generative Event Pretraining with Foundation Model Alignment, or GEP, accepted to CVPR 2026 Findings in Denver. According to the group, GEP outperforms every prior event-camera pretraining method across vision tasks while reducing the downstream training data required to get a deployable perception stack off the ground.

That second part, the data reduction, is the detail racing teams should be circling.

The broader context matters here. In 2023, RPG's Swift system became the first autonomous platform to beat human FPV champions in a head-to-head race, defeating three world-class pilots at speeds exceeding 100 kilometers per hour. Swift was a landmark. It was also built on standard frame cameras, which means it was always fighting physics at the boundary of performance: frame-based sensors capture 30, 60, maybe 240 images per second, and at race velocity, motion blur is not a minor nuisance but a structural liability. Event cameras solve this at the sensor level. Rather than capturing full frames at a fixed rate, they fire asynchronous, per-pixel signals the instant a brightness change is detected, with latency measured in microseconds and a dynamic range that laughs at the lighting conditions that confound traditional optics. The hardware has existed for years. What has lagged is the software infrastructure to train perception models on event data at scale, because labeled event datasets are scarce compared to the internet-scale image libraries that have made modern visual AI possible.
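To make the sensing principle concrete, here is a minimal sketch of the textbook single-pixel event model: an event fires whenever log-brightness moves by a fixed contrast threshold from the level at which the last event fired. The function name, the threshold value, and the sampled-intensity input are assumptions made for illustration; real sensors do this in analog circuitry, and nothing here is taken from RPG's code.

```python
import math


def events_from_samples(timestamps_us, intensities, threshold=0.2):
    """Emit (timestamp_us, polarity) events for a single pixel.

    Illustrative model only: an event fires each time the log-intensity
    moves by `threshold` away from the reference level stored at the
    previous event. Polarity is +1 for brighter, -1 for darker.
    """
    events = []
    reference = math.log(intensities[0])
    for t, value in zip(timestamps_us[1:], intensities[1:]):
        delta = math.log(value) - reference
        # A large change can cross the threshold several times at once.
        while abs(delta) >= threshold:
            polarity = 1 if delta > 0 else -1
            reference += polarity * threshold
            events.append((t, polarity))
            delta = math.log(value) - reference
    return events


# A pixel that sees no change produces no data at all.
static = events_from_samples([0, 10, 20], [1.0, 1.0, 1.0])

# A sudden brightening produces a burst of positive events.
burst = events_from_samples([0, 10], [1.0, math.exp(0.5)])
```

The point of the sketch is the asymmetry: a static pixel costs nothing, while a fast-changing one reports immediately, which is why the output is a sparse stream of timestamps rather than a sequence of frames.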

GEP attacks that scarcity problem with a two-stage architecture developed by lead author Jianwen Cao alongside co-authors Jiaxu Xing and Nico Messikommer under Scaramuzza's direction. In the first stage, an event-camera encoder is aligned to a frozen visual foundation model through regression and contrastive objectives. The practical effect: the encoder learns to map asynchronous, sparse event data into a semantic space already built from billions of labeled images, grounding event features in real-world meaning without needing a comparably large labeled event dataset. In the second stage, a transformer backbone is autoregressively pretrained on mixed event-plus-image sequences, capturing the temporal structure that is unique to event sensing and absent from any frame-based model. The architecture learns that event streams are not slow video; they are a continuous, timestamped record of scene dynamics, and it learns to exploit that distinction rather than approximate around it.
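The first-stage idea, pulling event embeddings toward the embeddings a frozen image model produces for the same scene, can be sketched in a few lines. The article only says "regression and contrastive objectives", so the specific choices below (mean squared error for regression, an InfoNCE-style loss for the contrastive term, the temperature, and the weights `w_reg` and `w_con`) are assumptions for illustration, not the published GEP formulation.

```python
import math


def _normalize(v):
    norm = math.sqrt(sum(x * x for x in v)) or 1.0
    return [x / norm for x in v]


def _dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def regression_loss(event_feats, image_feats):
    """Mean squared error between paired event and image embeddings."""
    total, count = 0.0, 0
    for e, f in zip(event_feats, image_feats):
        for x, y in zip(e, f):
            total += (x - y) ** 2
            count += 1
    return total / count


def contrastive_loss(event_feats, image_feats, temperature=0.1):
    """InfoNCE-style loss: each event embedding should be closer to its
    own paired image embedding than to any other image in the batch."""
    ev = [_normalize(e) for e in event_feats]
    im = [_normalize(f) for f in image_feats]
    loss = 0.0
    for i, e in enumerate(ev):
        logits = [_dot(e, f) / temperature for f in im]
        m = max(logits)  # subtract the max for numerical stability
        log_denominator = m + math.log(sum(math.exp(l - m) for l in logits))
        loss += log_denominator - logits[i]
    return loss / len(ev)


def alignment_loss(event_feats, image_feats, w_reg=1.0, w_con=1.0):
    """Hypothetical weighted sum of the two terms."""
    return (w_reg * regression_loss(event_feats, image_feats)
            + w_con * contrastive_loss(event_feats, image_feats))


# Toy batch: two scenes, 2-D embeddings from the frozen image model.
image_feats = [[1.0, 0.0], [0.0, 1.0]]
aligned = [[1.0, 0.0], [0.0, 1.0]]      # event encoder matches its pair
mismatched = [[0.0, 1.0], [1.0, 0.0]]   # event encoder matches the wrong scene
```

In this toy batch, `alignment_loss(aligned, image_feats)` comes out far lower than `alignment_loss(mismatched, image_feats)`. In a real training loop only the event encoder would receive gradients, since the foundation model is frozen.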

The performance gains reported by RPG span object recognition, segmentation, and depth estimation, all tasks that sit directly in the critical path of an autonomous racing vision stack. Better depth at speed means more accurate gate detection. Better segmentation means cleaner obstacle boundaries in cluttered environments. Better recognition means the system does not confuse a pylon with a gate post when the two are separated by a fraction of a second of flight.

At the same time, the group published SSLA-Det, a companion object detection model built on spatially-sparse linear attention. The design target for SSLA-Det is explicit: reduce per-event compute while holding accuracy, specifically because racing quadrotors cannot lean on offboard processing. Every inference cycle runs on hardware mounted inside a frame that often weighs under 250 grams. Sparse attention is not an academic nicety in that context; it is the difference between a perception stack that closes the loop in time and one that does not.
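Why linear attention helps with per-event compute can be shown with a generic sketch. The version below is ordinary causal linear attention with an `elu(x) + 1` feature map and running sums, which is a well-known formulation and an assumption here; it does not implement the spatial sparsity the article mentions, and it is not SSLA-Det's actual design. The class and method names are hypothetical.

```python
import math


def feature_map(x):
    """elu(x) + 1, which keeps every feature strictly positive."""
    return [v + 1.0 if v > 0 else math.exp(v) for v in x]


class LinearAttentionState:
    """Running summary of all past keys and values.

    Each new token costs O(key_dim * value_dim) no matter how many
    tokens came before, unlike softmax attention, whose per-token cost
    grows with the length of the history.
    """

    def __init__(self, key_dim, value_dim):
        self.kv = [[0.0] * value_dim for _ in range(key_dim)]
        self.k_sum = [0.0] * key_dim

    def update(self, query, key, value):
        # Fold the new key/value pair into the running sums.
        phi_k = feature_map(key)
        for i, k in enumerate(phi_k):
            self.k_sum[i] += k
            row = self.kv[i]
            for j, v in enumerate(value):
                row[j] += k * v
        # Read out: a positively weighted average of all values so far.
        phi_q = feature_map(query)
        normaliser = sum(q * s for q, s in zip(phi_q, self.k_sum))
        return [
            sum(phi_q[i] * self.kv[i][j] for i in range(len(phi_q))) / normaliser
            for j in range(len(value))
        ]


state = LinearAttentionState(key_dim=2, value_dim=1)
first = state.update([0.5, -0.5], [1.0, 0.2], [1.0])
second = state.update([0.1, 0.3], [-1.0, 0.4], [3.0])
```

The fixed-size state is the relevant property for an onboard computer: memory and per-token work stay flat as the event stream grows, which is the kind of budget a sub-250-gram frame imposes.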

Scaramuzza has been building toward this moment since he co-organized the first international workshop on event-based vision at ICRA in Singapore in 2017. The field was theoretical then. It is infrastructural now. GEP and SSLA-Det represent the point where the pretraining gap between event cameras and conventional deep vision systems begins to close systematically rather than incrementally.

For autonomous racing teams and builders looking at an 18-month horizon, the path to capitalizing on this work is tractable but hardware-specific. A competitive build requires a neuromorphic event sensor, with current viable options including cameras built on Prophesee Metavision sensors or Sony's IMX636 silicon. Onboard compute needs to handle sparse transformer inference under a strict power envelope; the NVIDIA Jetson Orin family represents today's practical ceiling for weight-constrained racing platforms, though purpose-built neuromorphic accelerators are entering qualification stages at several research institutions. The GEP codebase and pretrained weights are publicly available through RPG's GitHub, and the alignment and autoregressive pretraining stages can be reproduced on a standard multi-GPU cluster, meaning teams with university or industry compute partnerships do not need proprietary infrastructure to begin fine-tuning for track-specific configurations.

The harder engineering problem is sensor integration and real-time inference optimization once the pretrained weights leave the cluster and land on a racing frame moving at 150 kilometers per hour through a course designed to punish any latency above a few milliseconds. That is the gap GEP narrows but does not eliminate. Swift proved autonomous systems can match human champions in a controlled arena with frame cameras. The next benchmark Scaramuzza's group is building toward is doing it with an event camera, in conditions no frame-based system could handle cleanly, and without a single millisecond of processing delay to spare.
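For a sense of scale, the speeds and timescales quoted in this piece translate into distances with one line of arithmetic. The helper below is a back-of-envelope sketch; the function name is hypothetical, and the speeds, frame rates, and intervals are simply the figures mentioned in the article.

```python
def metres_travelled(speed_kmh, interval_s):
    """Distance covered at a constant speed during one time interval."""
    return speed_kmh / 3.6 * interval_s


# Distance flown between consecutive frames at 100 km/h.
for fps in (30, 60, 240):
    gap_m = metres_travelled(100, 1 / fps)
    print(f"{fps:>3} fps: {gap_m:.3f} m between frames")

# Distance flown at 150 km/h during one millisecond and one microsecond.
per_ms = metres_travelled(150, 1e-3)
per_us = metres_travelled(150, 1e-6)
print(f"1 ms at 150 km/h: {per_ms * 100:.2f} cm")
print(f"1 us at 150 km/h: {per_us * 1000:.4f} mm")
```

At 100 kilometers per hour a drone covers roughly 0.93 meters between frames at 30 frames per second and about 0.12 meters even at 240. At 150 kilometers per hour, each millisecond of delay is a little over 4 centimeters of flight, while a microsecond is a few hundredths of a millimeter.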
