68 Million AI Crawler Visits Signal New Visibility Rules for Brands

AI crawler traffic is now measurable at real scale, and the sites winning visibility are the ones built to be read by machines, updated often, and easy to instrument.

The scale of the shift

Sixty-eight million AI crawler visits have turned visibility from a theory into a working business metric. Search Engine Journal’s April 20, 2026 coverage shows that AI systems are already fetching and evaluating web content at a volume agencies can no longer ignore, and one summary of the underlying dataset puts the crawl universe at 858,457 sites hosted on Duda.

The most useful takeaway is not the exact crawler split, but the signal behind it. One summary of the same study says OpenAI accounted for 81 percent of AI crawl traffic, which underscores how concentrated this new discovery layer still is. When one ecosystem drives that much activity, the question changes from “should you care?” to “how quickly can you make your sites legible to it?”

What the data says about visible sites

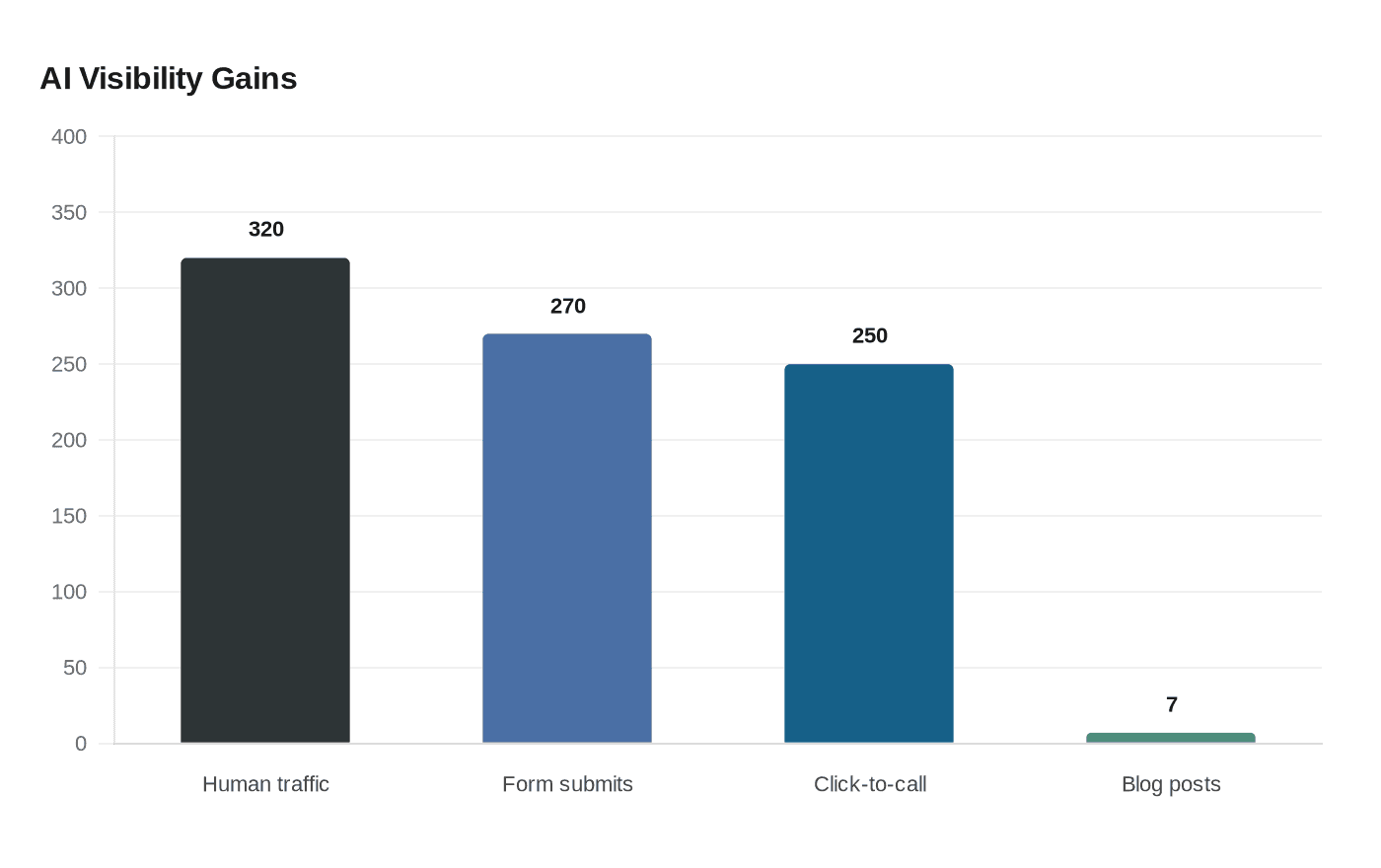

Duda’s April 16 announcement pushes the story beyond raw crawl counts and into performance. Based on more than 850,000 websites and 69 million AI crawler visits, Duda says AI-crawled sites generated 320% more human traffic than non-crawled sites, along with 270% more form submissions and 250% more click-to-call events.
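The headline percentages are easy to misread. If "X% more" is read literally as an increase over the non-crawled baseline (an assumption; the announcement as summarized here does not say whether the comparison uses per-site averages, medians, or totals), each figure converts to a multiple like this:

```latex
\text{crawled} = \left(1 + \frac{p}{100}\right) \times \text{non-crawled}
\quad\Rightarrow\quad
p = 320:\ 4.2\times, \qquad p = 270:\ 3.7\times, \qquad p = 250:\ 3.5\times
```

On that reading, 320% more human traffic means a little over four times as much, not three times as much.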

That matters because it reframes AI visibility as a demand-generation issue, not just a brand-awareness one. If crawlers tend to visit sites that already convert better, then the sites that are easiest for AI systems to understand may also be the ones most likely to become useful traffic sources for clients. In other words, crawler activity is starting to behave like an early indicator of commercial value.

Which site traits correlate with crawl activity

The study points to a clear pattern: pages that are well organized, semantically clear, and regularly updated are more likely to attract crawler attention. Duda says blogs, local schema, Google Business Profile synchronization, dynamic pages, and larger page counts were all associated with higher crawl activity. One summary adds that each blog post was associated with a 7% increase in crawler visits.

That combination tells you what AI systems seem to reward. Blogs expand topical coverage, local schema clarifies entities and business details, Google Business Profile synchronization keeps location data aligned, and dynamic pages can expose more timely content at scale. A site with a thin structure and stale copy may still rank in some cases, but it gives crawlers far less to parse, connect, and reuse.
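To make "local schema clarifies entities and business details" concrete, here is a minimal sketch that renders a schema.org `LocalBusiness` object as a JSON-LD script tag. The output keys (`@context`, `@type`, `name`, `url`, `telephone`, `address`/`PostalAddress`, `areaServed`) are standard schema.org vocabulary; the input dictionary keys, the function name `local_business_jsonld`, and the sample business are hypothetical. A more specific `LocalBusiness` subtype may suit some clients better.

```python
import json


def local_business_jsonld(business: dict) -> str:
    """Render a schema.org LocalBusiness object as a JSON-LD <script> tag.

    The input keys (name, url, phone, street, ...) are hypothetical; the
    output keys are standard schema.org LocalBusiness/PostalAddress properties.
    """
    data = {
        "@context": "https://schema.org",
        "@type": "LocalBusiness",
        "name": business["name"],
        "url": business["url"],
        "telephone": business["phone"],
        "address": {
            "@type": "PostalAddress",
            "streetAddress": business["street"],
            "addressLocality": business["city"],
            "addressRegion": business["region"],
            "postalCode": business["postal_code"],
            "addressCountry": business["country"],
        },
        # areaServed accepts plain text as well as Place objects; text is used here.
        "areaServed": business.get("service_areas", []),
    }
    body = json.dumps(data, indent=2, ensure_ascii=False)
    return f'<script type="application/ld+json">\n{body}\n</script>'


if __name__ == "__main__":
    # Hypothetical business details, for illustration only.
    example = {
        "name": "Example Plumbing Co.",
        "url": "https://example.com",
        "phone": "+1-555-0100",
        "street": "123 Main St",
        "city": "Springfield",
        "region": "IL",
        "postal_code": "62701",
        "country": "US",
        "service_areas": ["Springfield", "Chatham"],
    }
    print(local_business_jsonld(example))
```

Generating the markup from one source record, rather than hand-editing it per page, also makes it easier to keep the name, address, and phone number identical to what the business shows elsewhere.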

For agencies, this means content architecture is no longer a back-office issue. It is part of the visibility product. If a service page, location page, or blog post is hard to map semantically, then the site is asking both search engines and AI systems to do extra work to interpret it, and that extra work can cost the page crawler attention.

How to manage crawlers without flying blind

OpenAI’s crawler documentation makes the operational side of this shift more concrete. The company publicly distinguishes between crawlers such as OAI-SearchBot, which it uses to surface sites in ChatGPT search, and GPTBot, which collects content that may be used to train its models, and it says webmasters can manage access for each one separately through robots.txt. That gives agencies a practical control point: you can choose how much access different bots get instead of treating all automated traffic as the same thing.
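A per-bot robots.txt policy is easy to sanity-check before it ships. The sketch below uses Python's standard `urllib.robotparser` to parse a policy string and test which agents may fetch which URLs. The policy itself (allow OAI-SearchBot, block GPTBot, keep every bot out of a hypothetical `/admin/` path) is an illustrative choice, not a recommendation. One caveat: `urllib.robotparser` applies the first matching rule in file order rather than the longest-match rule in RFC 9309, so the rules are ordered here so that both interpretations agree.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical client policy: OpenAI's search crawler may index the site,
# the training crawler may not, and no bot may fetch /admin/.
# Within each group, the more specific Disallow comes before the broad Allow.
ROBOTS_TXT = """\
User-agent: OAI-SearchBot
Disallow: /admin/
Allow: /

User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /admin/
"""


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text directly, without fetching it over the network."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


if __name__ == "__main__":
    rp = build_parser(ROBOTS_TXT)
    for agent in ("OAI-SearchBot", "GPTBot", "SomeOtherBot"):
        for url in ("https://example.com/services/", "https://example.com/admin/"):
            print(f"{agent:14} {url:32} allowed={rp.can_fetch(agent, url)}")
```

Note that a named group such as `OAI-SearchBot` does not inherit the `*` group's rules, which is why `/admin/` is repeated inside it.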

The tracking side matters just as much. OpenAI’s help material says publishers who allow OAI-SearchBot can track referral traffic from ChatGPT search results by watching for utm_source=chatgpt.com. That turns AI discovery into something you can measure, which is critical if you want to report on it alongside organic search, paid media, and conversion data.
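As a minimal sketch of that measurement, assuming you can export the landing-page URLs (with query strings intact) from your analytics tool, the function below counts ChatGPT-referred landings per page by looking for `utm_source=chatgpt.com`. The function names and sample URLs are hypothetical; only the `utm_source=chatgpt.com` convention comes from OpenAI's help material.

```python
from collections import Counter
from urllib.parse import parse_qs, urlparse


def is_chatgpt_referral(landing_url: str) -> bool:
    """True if the landing URL carries utm_source=chatgpt.com."""
    query = parse_qs(urlparse(landing_url).query)
    return "chatgpt.com" in query.get("utm_source", [])


def chatgpt_referrals_by_page(landing_urls: list[str]) -> Counter:
    """Count ChatGPT-referred landings per path, ignoring the query string."""
    return Counter(
        urlparse(url).path for url in landing_urls if is_chatgpt_referral(url)
    )


if __name__ == "__main__":
    # Hypothetical landing-page URLs from an analytics export.
    landings = [
        "https://example.com/services/?utm_source=chatgpt.com",
        "https://example.com/services/?utm_source=google&utm_medium=cpc",
        "https://example.com/blog/pipe-repair/?utm_source=chatgpt.com",
        "https://example.com/services/?utm_source=chatgpt.com",
        "https://example.com/contact/",
    ]
    print(chatgpt_referrals_by_page(landings))
```

Grouping by path rather than by full URL keeps different parameter combinations for the same page in one bucket.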

There is also a new layer to watch. OpenAI’s crawler docs reportedly added OAI-AdsBot in April 2026 for pages submitted as ChatGPT ads. That signals a more operationalized ecosystem, where discovery, referral, and ad submission are starting to sit inside the same workflow. For agencies, that means crawler policy is no longer just a defensive settings page. It is part of channel strategy.
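Once several OpenAI agents are in play, it helps to count their visits separately in server logs. The sketch below tallies requests by user-agent token. GPTBot, OAI-SearchBot, and ChatGPT-User are tokens OpenAI documents; OAI-AdsBot is included on the assumption that the reported April 2026 addition uses that token, so confirm it against OpenAI's current docs. The sample user-agent strings are simplified and hypothetical. User-agent headers can be spoofed, so production reporting should also check requests against the IP ranges OpenAI publishes for its crawlers.

```python
from collections import Counter
from typing import Optional

# Documented OpenAI tokens, plus OAI-AdsBot (assumed from reports; verify).
OPENAI_AGENT_TOKENS = ("OAI-SearchBot", "GPTBot", "ChatGPT-User", "OAI-AdsBot")


def classify_agent(user_agent: str) -> Optional[str]:
    """Return the first known OpenAI token found in a User-Agent header, if any."""
    lowered = user_agent.lower()
    for token in OPENAI_AGENT_TOKENS:
        if token.lower() in lowered:
            return token
    return None


def count_openai_hits(user_agents: list[str]) -> Counter:
    """Tally requests per OpenAI token; non-OpenAI traffic is skipped."""
    counts: Counter = Counter()
    for ua in user_agents:
        token = classify_agent(ua)
        if token is not None:
            counts[token] += 1
    return counts


if __name__ == "__main__":
    # Simplified, hypothetical User-Agent values from an access log.
    sample = [
        "Mozilla/5.0 (compatible; GPTBot/1.0)",
        "Mozilla/5.0 (compatible; OAI-SearchBot/1.0)",
        "Mozilla/5.0 (compatible; OAI-SearchBot/1.0)",
        "Mozilla/5.0 (Windows NT 10.0; Win64; x64)",
    ]
    print(count_openai_hits(sample))
```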

The playbook agencies can use this quarter

The practical move is to audit every important client site as both a human destination and a machine-readable asset. Start with the pages that drive revenue, then ask whether each one clearly defines the business, the offer, and the location or entity behind it. If a page needs a human to infer what it does, it is probably costing you AI visibility as well.
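One way to make that audit repeatable is to check whether a page's structured data actually names the business and its location. The sketch below extracts every JSON-LD block with Python's standard `html.parser` and reports which required keys are missing. The helper names and the default required keys (`name`, `address`) are hypothetical choices; it also only inspects top-level objects, so markup that nests entities inside `@graph` would need extra handling.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collect the text of every <script type="application/ld+json"> element."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self.blocks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self.blocks.append("")

    def handle_endtag(self, tag):
        if tag == "script":
            self._in_jsonld = False

    def handle_data(self, data):
        # Script content may arrive in several chunks, so append rather than assign.
        if self._in_jsonld:
            self.blocks[-1] += data


def audit_entity_markup(html: str, required=("name", "address")) -> list[str]:
    """Return the required keys missing from the page's JSON-LD (empty list = pass)."""
    parser = JsonLdExtractor()
    parser.feed(html)
    parser.close()
    found = set()
    for block in parser.blocks:
        try:
            data = json.loads(block)
        except json.JSONDecodeError:
            continue  # Malformed JSON-LD is treated as absent.
        items = data if isinstance(data, list) else [data]
        for item in items:
            if isinstance(item, dict):
                found.update(item.keys())
    return [key for key in required if key not in found]
```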

From there, tighten the format around the signals the study highlighted:

- Make sure blogs are active, useful, and tied to core topics, not treated as filler.

- Add or clean up local schema so business details, service areas, and entity relationships are explicit.

- Keep Google Business Profile data synchronized with the site so location signals do not drift.

- Expand dynamic pages where they improve freshness, coverage, or structured access to useful information.

- Review canonical signals and internal linking so crawlers can resolve the preferred version of each page without confusion.
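For the last item on that list, a simple automated check is whether each page declares exactly one canonical URL. The sketch below, built on Python's standard `html.parser` and `urllib.parse.urljoin`, resolves relative `rel="canonical"` links against the page URL and flags pages with none or with conflicting values. The function and class names are hypothetical, and it does not check HTTP `Link` headers or sitemap consistency, which are other places canonical signals can come from.

```python
from html.parser import HTMLParser
from typing import Optional
from urllib.parse import urljoin


class CanonicalFinder(HTMLParser):
    """Record the href of every <link rel="canonical"> on a page."""

    def __init__(self):
        super().__init__()
        self.canonicals: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        attributes = dict(attrs)
        rels = (attributes.get("rel") or "").lower().split()
        if "canonical" in rels and attributes.get("href"):
            self.canonicals.append(attributes["href"])


def resolve_canonical(page_url: str, html: str) -> tuple[Optional[str], list[str]]:
    """Return (absolute canonical URL or None, list of problems found)."""
    finder = CanonicalFinder()
    finder.feed(html)
    finder.close()
    # Resolve relative hrefs so "/a/" and "https://host/a/" count as one target.
    targets = sorted({urljoin(page_url, href) for href in finder.canonicals})
    if not targets:
        return None, ["no canonical link"]
    if len(targets) > 1:
        return None, ["conflicting canonicals: " + ", ".join(targets)]
    return targets[0], []
```

Usage: run it over the revenue pages first, for example `resolve_canonical("https://example.com/services/?ref=nav", html)`, and review anything that returns a problem.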

Reporting needs to change too. If you only show rankings, you are missing the story the crawl data is telling. Agencies now need dashboards that connect crawler visits, human traffic, form submissions, click-to-call events, and ChatGPT referral traffic into one view. That is how you prove whether machine visibility is turning into demand.
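A minimal sketch of that combined view, assuming each metric is already available as per-page counts from whichever tool tracks it (server logs for crawler visits, analytics for sessions and ChatGPT referrals, a call-tracking tool for click-to-call), merges them into one row per URL path. The `PageReport` fields and the `build_report` function are hypothetical names. Putting the numbers side by side shows where crawl activity and demand coincide; on its own it does not show that one causes the other.

```python
from dataclasses import dataclass, fields


@dataclass
class PageReport:
    """One dashboard row per URL path; field names are illustrative."""
    path: str
    crawler_visits: int = 0
    human_sessions: int = 0
    form_submissions: int = 0
    click_to_call: int = 0
    chatgpt_referrals: int = 0


METRICS = {f.name for f in fields(PageReport)} - {"path"}


def build_report(sources: dict[str, dict[str, int]]) -> list[PageReport]:
    """Merge {metric: {path: count}} exports into one row per path."""
    rows: dict[str, PageReport] = {}
    for metric, counts in sources.items():
        if metric not in METRICS:
            raise ValueError(f"unknown metric: {metric}")
        for path, count in counts.items():
            row = rows.setdefault(path, PageReport(path=path))
            setattr(row, metric, getattr(row, metric) + count)
    # Busiest pages by human sessions first, then by crawler visits.
    return sorted(rows.values(), key=lambda r: (-r.human_sessions, -r.crawler_visits))
```

Keeping every metric keyed by the same path is the design choice that matters here; if analytics and server logs normalize URLs differently (trailing slashes, query strings), normalize them before the merge.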

The terminology around this space may keep shifting, from SEO to AI search visibility to answer engine optimization (AEO) and generative engine optimization (GEO), but the job is becoming clearer by the month. The sites that win are not just the ones with the best headlines or the biggest keyword maps. They are the ones that are easiest for AI systems to read, trust, and route into real business outcomes.
