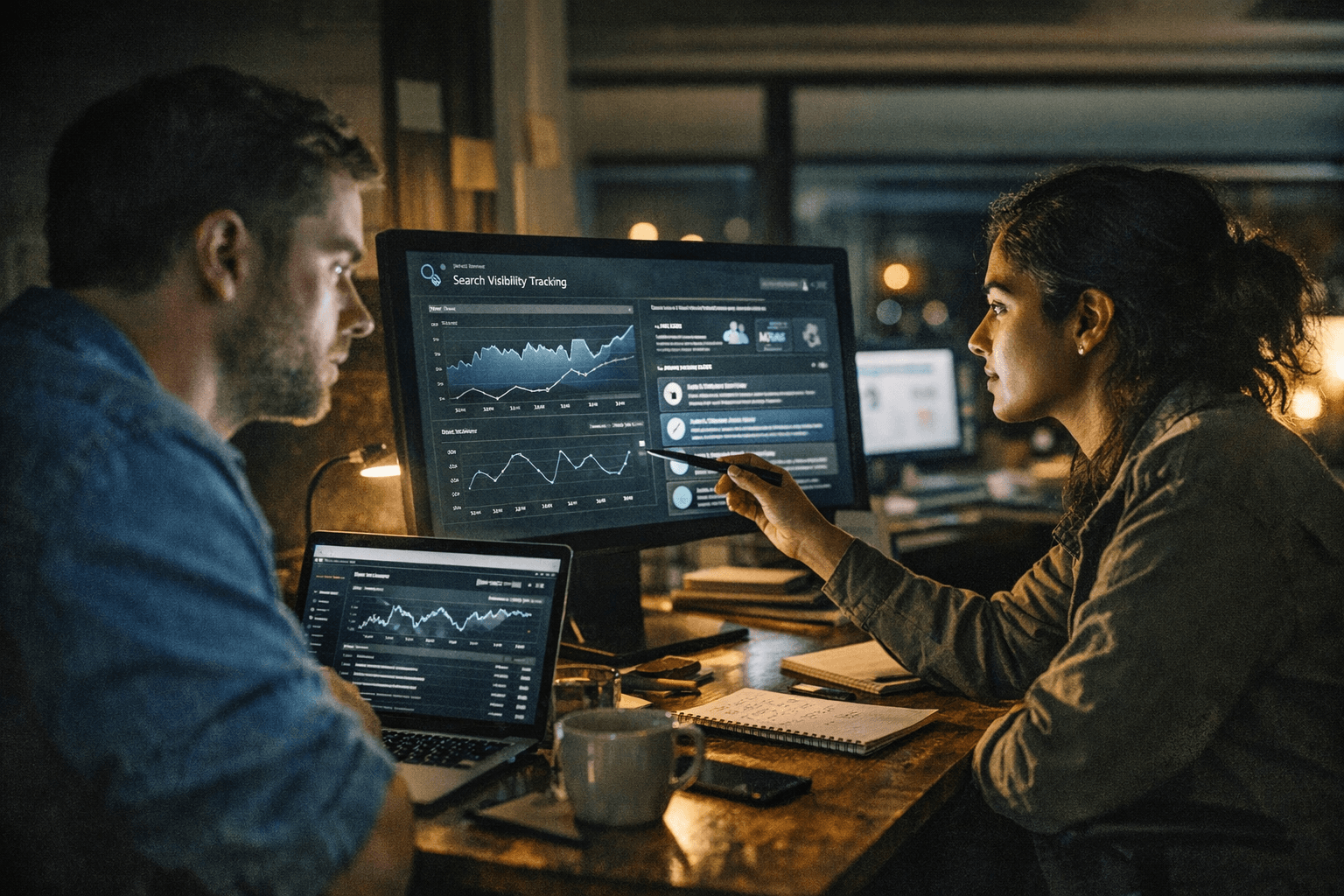

Agencies Build Affordable AI Search Visibility Tracking Without Enterprise Tools

A weekend-built tracker shows agencies how to turn AI search visibility into a low-cost, client-ready retainer instead of an enterprise software bill.

Agencies are finding a new product hiding inside a simple tracker

AI search visibility is quickly becoming one of those reporting problems clients ask about before the industry has settled on a standard answer. Julian Hooks’ Search Engine Land guide takes that pressure point and turns it into a practical agency play: build a repeatable, client-facing tracker for under $100 a month instead of paying for an enterprise stack that can run $300 to $500 monthly or more.

That cost gap is the real story. The point is not just to save money on software, but to package a clearer service. A small team can use a lightweight workflow to show how a brand appears across AI-powered surfaces, compare it with competitors, and produce a monthly deliverable that feels more strategic than a rank tracker and more affordable than a full enterprise platform.

Why the old SEO playbook is not enough anymore

Traditional rank tracking was built for a search results page that is now only part of the picture. Google has pushed AI Overviews into the U.S. market and introduced AI Mode as an early experiment in Search Labs using a custom version of Gemini 2.0. Google’s own help pages say AI Mode is opt-in and that AI responses may include mistakes, which is exactly why this space is so messy and so valuable for agencies trying to make sense of it.

That inconsistency creates a reporting opportunity. If the surface itself changes, clients need someone to interpret not just whether they appear, but how they appear, where they appear, and whether the answer is accurate enough to help or hurt the brand. A tracker built for that purpose becomes more than an internal curiosity. It becomes a recurring service.

The minimum viable toolset is the product

The guide’s most useful insight is that the tracker did not start with enterprise software. It started with a specific need that no tool met at the right price, then got built over a weekend by talking to an AI agent in plain English. That is a useful model for agencies because it shows how no-code and low-code workflows can fill a market gap before the commercial tools fully catch up.

The tracker was built across five surfaces: ChatGPT via API, Claude via API, Gemini via API, Google AI Mode, and Google AI Overviews. That mix matters because clients do not care about one isolated model in a vacuum. They care about what the consumer actually sees when the answer is generated across multiple systems, each with its own behavior, sourcing patterns, and quirks.
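One way to picture the collection step is as a run plan that pairs every tracked prompt with every surface. The sketch below is illustrative only: the function name `build_run_plan`, the dictionary layout, and the sample prompts (including the brand "Acme Payroll") are assumptions, not details from the guide, and it does not call any of the services.

```python
from itertools import product

# The five surfaces named in the guide.
SURFACES = [
    "ChatGPT via API",
    "Claude via API",
    "Gemini via API",
    "Google AI Mode",
    "Google AI Overviews",
]


def build_run_plan(prompts, surfaces=SURFACES):
    """Return one job per (prompt, surface) pair (hypothetical helper)."""
    return [{"prompt": p, "surface": s} for p, s in product(prompts, surfaces)]


# Hypothetical client prompts, for illustration only.
plan = build_run_plan([
    "best payroll software for small agencies",
    "Acme Payroll pricing",
])
print(len(plan))  # 2 prompts x 5 surfaces = 10 jobs
```

Keeping the plan as plain data makes it easy to see how the workload grows as prompts are added, before any API calls or manual checks happen.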

For agencies, the minimum viable stack is enough when it answers the questions that drive retainers:

- Is the brand named at all?

- Is the answer accurate?

- Is pricing correct?

- Is the result actually actionable?

- Are the citations credible?

Those five questions are the foundation of the report, and they are much easier to sell than a vague promise to "watch AI."

A five-point rubric gives the work consistency

The guide’s custom five-point rubric is what turns a demo into a methodology. Brand-name inclusion tells you whether the brand is visible in the answer at all. Accuracy and pricing correctness show whether the AI is representing the company honestly. Actionability measures whether the answer helps a searcher move forward. Citation quality tells you whether the answer is grounded enough to trust.
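A rubric like this can be recorded as a small structured record per answer. The sketch below assumes each criterion is scored pass/fail and summed to a 0 to 5 total; the guide does not specify its scoring scale, so the binary scoring, the class name `RubricScore`, and the field names are all assumptions.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class RubricScore:
    """One scored AI answer; each criterion is pass (True) or fail (False)."""
    brand_named: bool         # Is the brand named at all?
    accurate: bool            # Is the answer accurate?
    pricing_correct: bool     # Is pricing correct?
    actionable: bool          # Does the answer help a searcher move forward?
    citations_credible: bool  # Are the citations credible?

    def total(self) -> int:
        """Count the criteria that passed (0 to 5)."""
        return sum(bool(getattr(self, f.name)) for f in fields(self))


# Hypothetical result: brand named, accurate, actionable, but wrong
# pricing and weak citations.
score = RubricScore(
    brand_named=True,
    accurate=True,
    pricing_correct=False,
    actionable=True,
    citations_credible=False,
)
print(score.total())  # 3
```

A fixed record like this keeps every reviewer answering the same five questions, which is what makes scores comparable across surfaces and months.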

That structure is important because agencies do not win on raw data alone. They win by standardizing interpretation. A repeatable rubric lets teams compare results month over month, identify shifts across surfaces, and explain why one competitor suddenly looks more present in AI responses than another. It also gives account managers a language clients can understand without needing to know how the model underneath works.
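The month-over-month comparison described here reduces to a per-surface difference between two sets of scores. The sketch below assumes each surface has an average rubric score out of 5 for each month; the function name `month_over_month` and all sample numbers are hypothetical.

```python
def month_over_month(previous: dict, current: dict) -> dict:
    """Return the score change per surface present in both months."""
    return {
        surface: round(current[surface] - previous[surface], 2)
        for surface in current
        if surface in previous
    }


# Hypothetical average rubric scores (0 to 5) for one client.
last_month = {"ChatGPT": 3.0, "Claude": 4.0, "Gemini": 2.5}
this_month = {"ChatGPT": 4.0, "Claude": 4.0, "Gemini": 2.0}

deltas = month_over_month(last_month, this_month)
for surface, change in deltas.items():
    print(f"{surface}: {change:+.1f}")
```

Surfaces that appear in only one of the two months are skipped rather than guessed at, so a newly added surface shows up in the report only once it has a baseline.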

How to turn the tracker into a packaged retainer

The business case is not simply, “We built it cheap.” The better pitch is, “We can now report on AI search visibility in a way that is tailored to your category, your competitors, and the questions your buyers ask.” That framing makes the tracker feel like a service layer, not a spreadsheet.

A strong retainer add-on can include:

- monthly visibility snapshots across the five surfaces

- competitor benchmarking for named queries and category terms

- accuracy checks for brand details, especially pricing and product positioning

- commentary on citation quality and source patterns

- a short action plan for improving visibility where the answers are weakest

That is the kind of output clients will pay for because it moves beyond vanity metrics. It shows whether the brand is being recognized, represented correctly, and surfaced in places where buying decisions are increasingly influenced.

The market is already moving, but it is still fragmented

The broader tool market is catching up in pieces. Semrush has launched an AI Visibility Index, which signals that AI search visibility is moving from experimental curiosity to a measurable category. Perplexity’s API platform now advertises real-time web search, domain filtering, multi-query search, and content extraction, all features that make custom monitoring workflows more practical.

At the same time, the infrastructure under these workflows is becoming easier to use. OpenAI’s pricing page lists GPT-5.4 at $2.50 per million input tokens and $15.00 per million output tokens. Anthropic says Claude Opus 4.6 starts at $5 per million input tokens and $25 per million output tokens. OpenAI also documents structured outputs, function calling, and the Responses API, including support for text and image inputs plus built-in tools and custom function calls. For an agency trying to automate collection, scoring, and reporting, those are the building blocks that make a weekend-built system plausible instead of theoretical.
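To see why per-token pricing keeps a tracker like this cheap, a rough cost estimate helps. The sketch below uses the OpenAI rates quoted above ($2.50 and $15.00 per million input and output tokens); the workload figures (50 prompts, four runs a month, 200 input and 600 output tokens per call) are assumptions chosen for illustration, and the function name `monthly_api_cost` is hypothetical.

```python
# Rates quoted in the article, in USD per million tokens.
INPUT_RATE_USD = 2.50
OUTPUT_RATE_USD = 15.00


def monthly_api_cost(queries, runs_per_month, input_tokens, output_tokens,
                     input_rate=INPUT_RATE_USD, output_rate=OUTPUT_RATE_USD):
    """Estimate the monthly spend for one model at per-million-token rates."""
    calls = queries * runs_per_month
    input_cost = calls * input_tokens / 1_000_000 * input_rate
    output_cost = calls * output_tokens / 1_000_000 * output_rate
    return input_cost + output_cost


# Assumed workload: 50 prompts, run weekly, short prompts and medium answers.
estimate = monthly_api_cost(queries=50, runs_per_month=4,
                            input_tokens=200, output_tokens=600)
print(f"${estimate:.2f}")  # $1.90
```

Under these assumptions, one model costs about $1.90 a month for one client. The estimate covers a single API only; the other APIs and whatever is used to capture Google AI Mode and AI Overviews would add to the total.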

The agencies that move first will have a cleaner renewal story

AI search measurement is no longer a side experiment for teams with extra budget. It is becoming part of the core question clients ask: how visible are we when the answer is generated, not just when it is ranked? Agencies that can answer that clearly, affordably, and repeatedly will have a stronger story at renewal time than teams still presenting keyword tables as if nothing has changed.

That is why the under-$100 tracker matters. It is not a toy and it is not just an internal hack. It is the shape of a new service package, one that helps agencies protect margin, differentiate their reporting, and sell a more useful version of visibility while the market is still defining what measurement should look like.
