AI search feeds on its own output, SEO industry fuels the loop

AI search is now feeding on the same low-value pages agencies mass-produce. The fix is stricter originality standards, better sourcing, and proof that machines can't fake.

The loop that turns shortcuts into search fuel

The most troubling part of AI search is not a single hallucination. It is the feedback loop Pedro Dias described in his April 22, 2026, Search Engine Journal piece: synthetic content enters the retrieval layer, gets summarized by answer engines, and comes back into the ecosystem looking like fresh fact. That is the AI slop loop, and it turns content shortcuts into systemwide contamination.

Dias uses Lily Ray’s example of Perplexity confidently inventing a non-existent Google core update to show how easily bad information can be laundered into something that sounds authoritative. Ray, founder of Algorythmic and vice president of SEO and AI Search at Amsive, has become one of the most visible voices documenting how AI search can misstate search-news facts and then cite thin, AI-written agency content as support. The lesson for agencies is blunt: if the web gets flooded with templated, low-originality pages, the systems that crawl, summarize, and cite that web will have less trustworthy material to work with.

Google has already drawn a line

Google’s own guidance leaves little ambiguity about what it wants to reward. Its ranking systems are designed to prioritize helpful, reliable, people-first content created to benefit users, not content built to manipulate rankings. Google also defines scaled content abuse as generating many pages primarily to manipulate search rankings rather than help users, and its Search Central guidance explicitly calls out content created with little to no effort, little to no originality, and little to no added value.

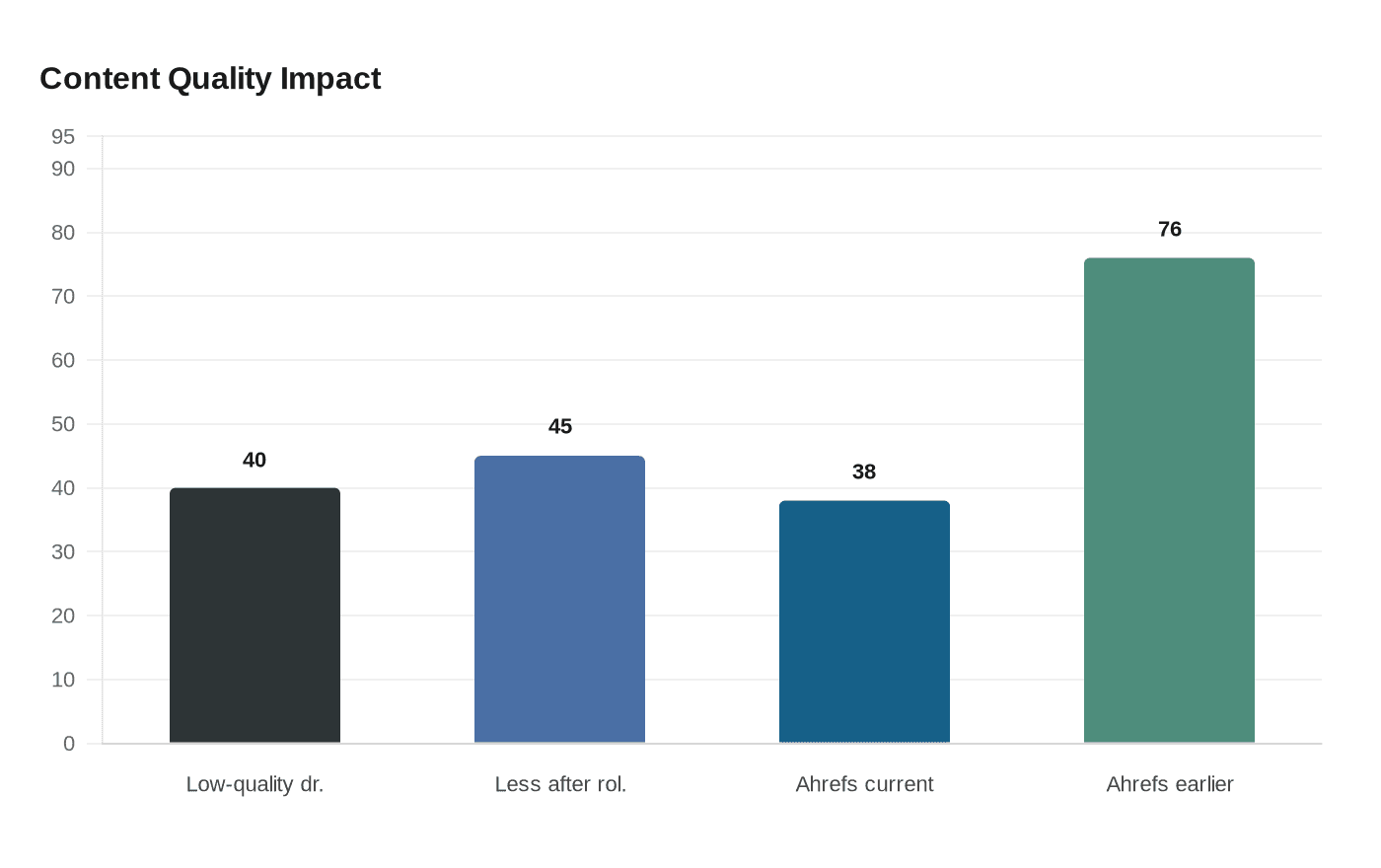

That matters because the industry has not been arguing about quality in the abstract. Google said in March 2024 that its spam and core ranking changes were expected to reduce low-quality, unoriginal content in search results by 40%, then revised that figure to 45% after the rollout completed on April 19, 2024. The direction of travel was already clear before AI search became the main event: mass-produced pages were losing ground, and the penalty for thin content was becoming more visible.

AI Overviews changed the visibility game

The shift from blue links to AI answers made the quality problem harder to ignore. Google launched AI Overviews to everyone in the United States at Google I/O in May 2024, saying people had already used the feature billions of times in Search Labs. Google later expanded generative AI in Search to more than 120 countries and territories, pushing AI-generated summaries deeper into everyday search behavior.

Google’s current Search documentation says AI features like AI Overviews and AI Mode can help users find websites, and it tells site owners to think carefully about how to approach inclusion in those experiences. That is the key change for agencies. Visibility is no longer just about ranking in the classic results. It is about being part of the source ecosystem that AI systems select, summarize, and present back to users.

The citation map is not the old ranking map

Ahrefs has given this shift hard numbers. In an analysis of 863,000 keywords and 4 million AI Overview URLs, only 38% of cited pages also appeared in the top 10 organic results for the same query. In an earlier study, that figure had been 76%. The drop shows that AI Overview citations are not simply a mirror of classic ranking behavior.

That has two implications. First, source quality and brand authority matter in a broader way than many SEO teams expected. Second, content originality matters even more, because AI systems can pull from outside the familiar top-ranking set and still produce an answer that looks coherent. If your work is generic, derivative, and easy to blend into the background, it is easier to overlook in both human search results and AI-generated summaries.
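The 38% figure is a per-citation ratio: of all URLs cited in AI Overviews, what share also ranked in the top 10 organic results for the same query? The sketch below shows one way a team could compute that ratio on its own tracked keywords. It is a minimal illustration, not Ahrefs' methodology, which this piece does not describe; the input structure, the URL normalization rules, and the names `normalize` and `citation_overlap` are all hypothetical.

```python
from urllib.parse import urlsplit


def normalize(url: str) -> str:
    """Reduce a URL to host + path so trivial variants compare equal.

    Assumption: scheme, leading "www.", query string, fragment, and
    trailing slash are ignored. Real studies may normalize differently.
    """
    parts = urlsplit(url)
    host = parts.netloc.lower()
    if host.startswith("www."):
        host = host[4:]
    return host + parts.path.rstrip("/")


def citation_overlap(serps: dict[str, dict[str, list[str]]]) -> float:
    """Share of AI Overview citations that also rank in the top 10 organic results.

    Hypothetical input: {query: {"organic": [...ranked URLs...],
                                 "citations": [...AI Overview URLs...]}}
    """
    cited_total = 0
    cited_in_top10 = 0
    for data in serps.values():
        # Compare each citation only against the top 10 for the same query.
        top10 = {normalize(u) for u in data["organic"][:10]}
        for url in data["citations"]:
            cited_total += 1
            if normalize(url) in top10:
                cited_in_top10 += 1
    return cited_in_top10 / cited_total if cited_total else 0.0


# Hypothetical sample: one of the two citations also ranks organically.
sample = {
    "core update": {
        "organic": ["https://example.com/a", "https://example.org/b"],
        "citations": ["https://www.example.com/a/", "https://blog.example.net/c"],
    },
}
print(citation_overlap(sample))  # 0.5
```

Counting per citation rather than per query means keywords with many cited sources weigh more heavily; a per-query average would be an equally reasonable design choice, and the two can give different numbers on the same data.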

The human quality filter is still there

Search Engine Land reported in April 2025 that Google quality raters were being told to treat automated or AI-generated main content as potentially deserving a Lowest rating. That is a useful reminder that Google's evaluation framework still depends on human judgment, not just models and metrics. Pages that feel machine-produced can earn that Lowest rating not because they use AI, but because they offer little value, little effort, and little evidence of original work.

Dias’ warning becomes sharper in that context. AI search is not only vulnerable to hallucination. It becomes more dangerous when the surrounding content ecosystem is already filled with derivative material that sounds plausible enough to be recycled. If answer engines are drawing from pages that were built to imitate information rather than produce it, the entire retrieval chain gets noisier.

What agencies need to change now

The practical response is not to stop using AI. It is to stop confusing scale with substance. Agencies that want durable visibility need editorial standards that protect the information supply chain, because the supply chain is now part of search strategy.

A useful internal checklist looks like this:

- Pass the originality test. If a page can be recreated from a couple of competing articles, it is probably too thin to matter.

- Build around first-party value. Original reporting, proprietary data, interviews, and expert commentary are harder to synthesize and easier to trust.

- Treat AI as a drafting tool, not a truth engine. Use it to organize, accelerate, and surface ideas, but verify every factual claim before publication.

- Keep proof close to the work. Methodology, data sources, screenshots, and named expertise make content easier for editors, readers, and machines to trust.

- Publish less noise. A smaller number of differentiated pages will do more for long-term search trust than a flood of recycled summaries.

These are not abstract ideals. They are defenses against a market where search systems increasingly reward material that can survive scrutiny. The more agencies rely on generic production, the more they contribute to the very contamination that weakens AI search.

The new measure of durable visibility

The big change in Dias' argument is that the danger is no longer only reputational. It is structural. AI search increasingly draws on content shaped by the SEO industry, and when that content is thin, the feedback loop degrades the ecosystem everyone depends on.

That is why brand trust and editorial standards matter more now, not less. The agencies that win this phase will not be the ones that publish the fastest. They will be the ones that make the web worth retrieving.
