AI Search Visibility Is Far More Selective Than Google Local Results

AI search isn’t just another SEO channel. The real problem is measurement: answers shift, attribution blurs, and Google-era visibility does not carry over cleanly.

The measurement problem starts with the first surprise: AI assistants do not select businesses the way Google’s local results do. A business that looks visible in a local 3-pack can still vanish when a chatbot is asked for a recommendation. Trustmary’s synthesis of recent studies makes that gap hard to ignore, especially SOCi’s finding that AI platforms recommend only a small fraction of the locations it analyzed.

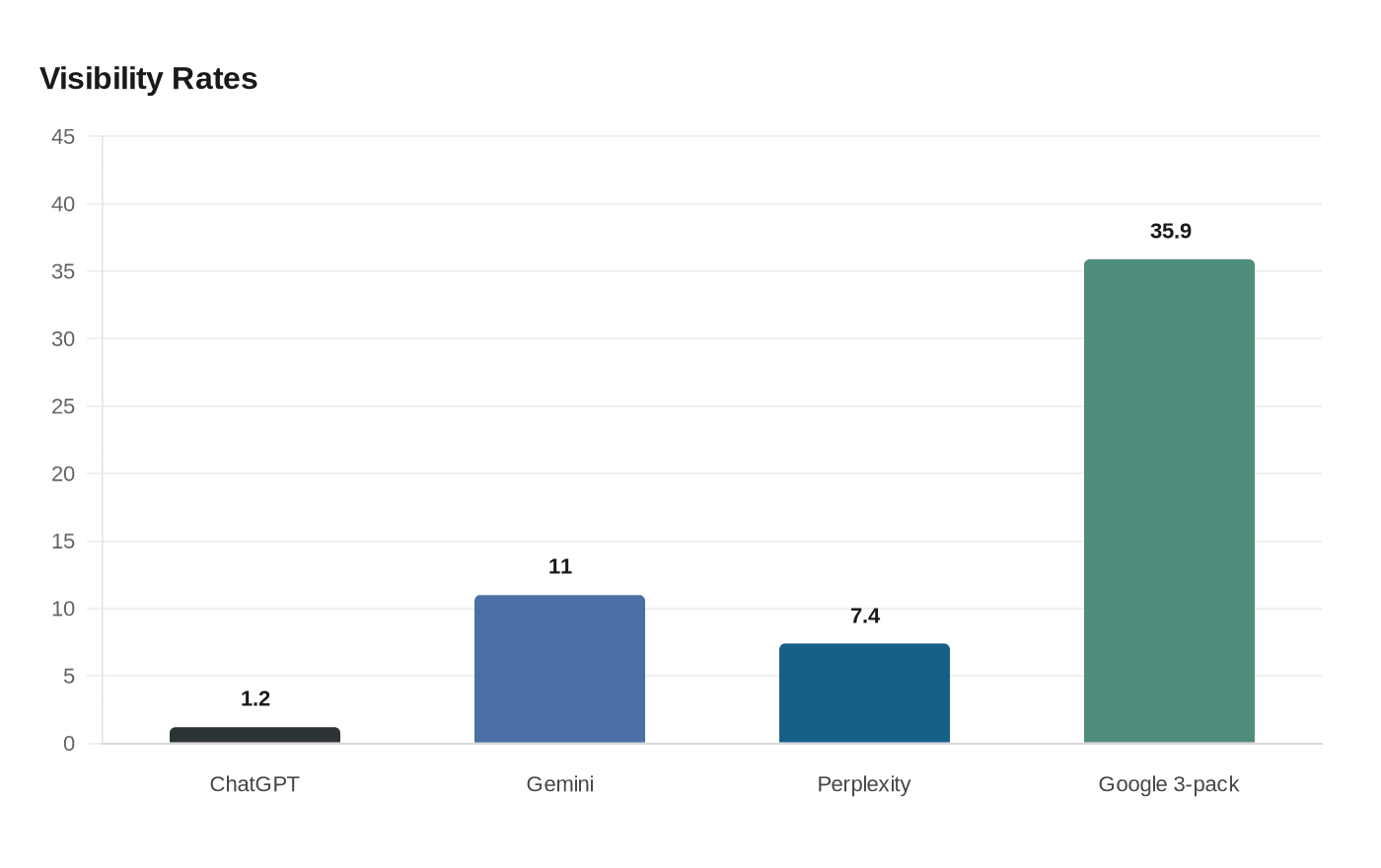

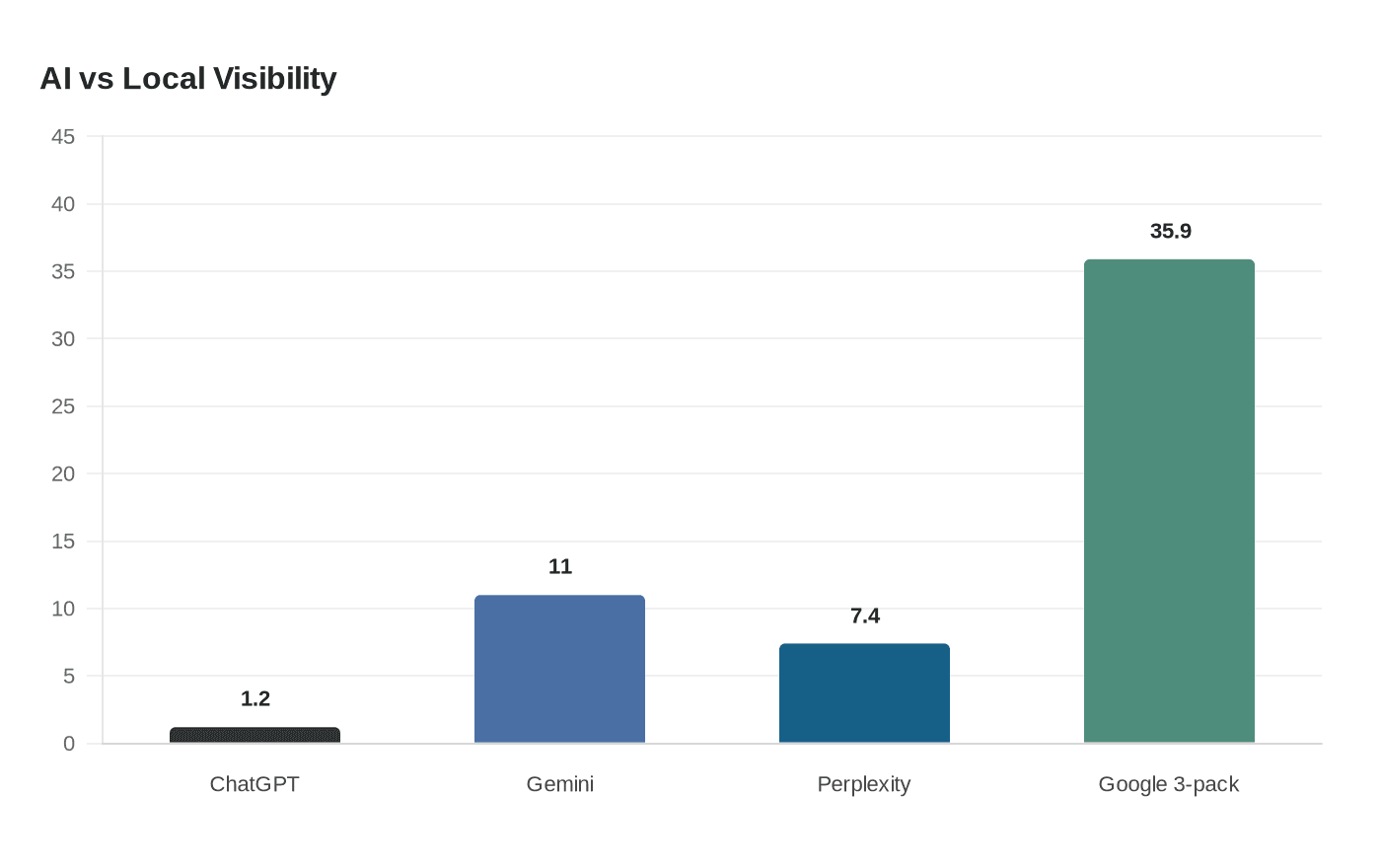

SOCi analyzed more than 350,000 locations across 2,751 multi-location brands and found that ChatGPT recommended just 1.2% of those locations, Gemini 11%, and Perplexity 7.4%. In the same comparison, Google’s local 3-pack showed a 35.9% appearance rate. By those figures, a location was roughly 3 times (Gemini) to 30 times (ChatGPT) less likely to be recommended by an AI platform than to appear in the local 3-pack.
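The multipliers follow directly from the reported rates. A minimal sketch of the arithmetic, using only the percentages quoted above (the variable and function names are illustrative and do not come from SOCi):

```python
# Appearance rates reported by SOCi, in percent.
local_3_pack = 35.9
ai_rates = {"ChatGPT": 1.2, "Gemini": 11.0, "Perplexity": 7.4}


def visibility_gap(baseline, rate):
    """How many times less likely an AI recommendation is than the baseline."""
    return baseline / rate


# Round to one decimal place for reporting.
gaps = {
    name: round(visibility_gap(local_3_pack, rate), 1)
    for name, rate in ai_rates.items()
}

for name, gap in gaps.items():
    print(f"{name}: {gap}x less likely than the local 3-pack")
```

The ratios come out at about 29.9 for ChatGPT, 4.9 for Perplexity, and 3.3 for Gemini, which is where the "3 to 30 times" range comes from.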

Google-era visibility does not automatically translate into AI-era selection. That is the part brands keep underestimating. SOCi said only 45% of the top retail brands in traditional local search overlapped with the brands most often recommended by AI, which means more than half of the names rising in Google’s local ecosystem were not the same names floating to the top in AI answers.

The pattern suggests that answer engines are filtering harder than search engines. SOCi reported that the average rating of ChatGPT-recommended locations was 4.3 stars, with Gemini at 3.9 and Perplexity at 4.1, a strong hint that reputation signals matter more than many marketers assumed. Weak or incomplete local signals can knock a brand out of contention entirely, even if that brand still performs well in classic map-pack visibility.

That is why AI visibility feels like a measurement problem, not just a ranking problem. Traditional SEO gives you a familiar ladder: positions, pages, impressions, clicks. AI answers are messier. They are curated, probabilistic, and often opaque, which means the same brand can appear one moment and disappear the next without any obvious pattern in the interface.

SparkToro’s work made that instability impossible to dismiss. Rand Fishkin and Patrick O’Donnell asked 600 volunteers to run 12 prompts through ChatGPT, Claude, and Google AI a combined 2,961 times. The same list of brand recommendations appeared in fewer than 1% of runs, and the same list in the same order showed up only about 1 in 1,000 times.

That kind of variation changes the entire measurement model. If a result is this inconsistent, a single rank reading tells you little about durable visibility. SparkToro’s warning was blunt in spirit: the new AI tracking market already draws more than $100 million a year in estimated spending, yet static rank tracking can end up measuring noise instead of signal.
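One way to put a number on that instability is to compare every pair of runs for the same prompt and count how often the recommended brands match, with and without regard to order. The sketch below is an assumption about how such a check could be done. It is not SparkToro’s published method, and `list_consistency` and the sample runs are hypothetical.

```python
from itertools import combinations


def list_consistency(runs):
    """Share of run pairs that return the same brands, and the same order.

    runs: list of ordered brand lists, one per run of the same prompt.
    """
    pairs = list(combinations(runs, 2))
    if not pairs:
        # Fewer than two runs: nothing to compare.
        return {"same_set": None, "same_order": None}
    same_set = sum(1 for a, b in pairs if set(a) == set(b))
    same_order = sum(1 for a, b in pairs if list(a) == list(b))
    return {
        "same_set": same_set / len(pairs),
        "same_order": same_order / len(pairs),
    }


# Hypothetical answers from four runs of one prompt.
sample_runs = [
    ["A", "B", "C"],
    ["B", "A", "C"],
    ["A", "B", "C"],
    ["A", "D"],
]
consistency = list_consistency(sample_runs)
print(consistency)
```

In this toy sample, half of the run pairs name the same brands, but only one pair in six names them in the same order. A low score on either measure suggests that a single rank reading is mostly noise.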

The practical takeaway is to stop asking only, “What rank am I?” and start asking, “How often do I appear, and under what conditions?” Trustmary’s framing points in that direction, and the two studies together explain why. AI search is less like a fixed results page and more like a recommendation system that repeatedly re-sorts the available options.

For brands trying to diagnose why they are invisible, the first step is a local-signal audit. Look at the basics that AI systems seem to reward: review strength, star rating, and the completeness of your location data. SOCi’s numbers suggest that even a small reputation gap can matter, because the engines are not treating every plausible option as equally eligible.

A practical checklist for diagnosing AI invisibility starts with repetition, not assumptions.

- Re-run the same prompts many times. SparkToro’s findings show that one run is not enough, because the answers can swing dramatically from run to run.

- Count appearance rate, not just position. If your brand shows up in 3 out of 20 runs, that tells you more than a one-time appearance in a favorable slot.

- Compare engine by engine. ChatGPT, Gemini, Perplexity, Claude, and Google AI are not behaving the same way, so visibility has to be measured separately.

- Audit rating quality and consistency across locations. SOCi’s average recommended ratings clustered in the high 3s and low 4s, which makes star quality a real gatekeeper, not a cosmetic detail.

- Check whether your strongest Google local performers are also your strongest AI performers. The 45% retail overlap shows that the two worlds are only partially aligned.

- Watch what sources and signals keep getting cited. Trustmary’s conclusion was that businesses need to monitor not only whether they appear, but what seems to improve inclusion.
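The first three checklist items can be sketched as a small tally over logged runs. This assumes you already have a log of (engine, ordered list of recommended brands) pairs, collected by whatever means you use. The function name, the data shape, and the sample brands are all hypothetical.

```python
from collections import defaultdict


def appearance_stats(runs, brand):
    """Per-engine appearance rate and mean position for one brand.

    runs: iterable of (engine, ordered list of recommended brand names).
    """
    totals = defaultdict(int)
    hits = defaultdict(int)
    positions = defaultdict(list)
    target = brand.casefold()

    for engine, recommended in runs:
        totals[engine] += 1
        names = [name.casefold() for name in recommended]
        if target in names:
            hits[engine] += 1
            positions[engine].append(names.index(target) + 1)  # 1-based slot

    stats = {}
    for engine, total in totals.items():
        seen = positions[engine]
        stats[engine] = {
            "runs": total,
            "appearance_rate": hits[engine] / total,
            # Mean position only covers runs where the brand appeared.
            "mean_position": sum(seen) / len(seen) if seen else None,
        }
    return stats


# Hypothetical log: the same prompt repeated on two engines.
sample_log = [
    ("chatgpt", ["Bright Smiles", "Acme Dental"]),
    ("chatgpt", ["Bright Smiles", "City Dental"]),
    ("chatgpt", ["Acme Dental"]),
    ("chatgpt", ["City Dental"]),
    ("gemini", ["Acme Dental", "Bright Smiles"]),
    ("gemini", ["City Dental"]),
]
stats = appearance_stats(sample_log, "Acme Dental")
for engine, row in stats.items():
    print(engine, row)
```

Keeping the tally separate for each engine, and reporting position only alongside appearance rate, reflects the checklist’s point that a favorable slot in one run says little on its own. With real data, the number of runs per prompt would need to be far larger than in this toy log.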

The deeper lesson is that AI search is selective where Google’s local results are broad. Google can be generous enough to surface a large share of viable local businesses. AI answer engines appear to choose from a much narrower field, then redraw that field over and over again. That makes visibility harder to earn, harder to prove, and much harder to explain with old SEO habits.

For marketers, the immediate shift is mental as much as tactical. Stop treating AI answers like a new version of page-one rankings. Treat them like a volatile recommendation layer where reputation, completeness, and repeatability all shape whether a brand gets named at all. The businesses that learn to measure that uncertainty will be the ones best positioned for the next phase of search.
