Brands Race to Win Visibility in ChatGPT and Gemini Search Results

Brands are learning that AI answers can make them visible or make them disappear entirely. The new race is not just to rank, but to be named, cited, and tracked inside chat search.

The new search race is not about blue links

The old SEO playbook is getting a new layer, and it is moving fast. Brands are now chasing visibility inside ChatGPT, Gemini, Claude, Perplexity, and Grok, because the answer itself is becoming the destination. Amplitude says AI search adoption has doubled in the past year, and that shift is pushing marketers toward a new kind of software: tools built to measure, optimize, and attribute visibility inside AI-generated responses.

That is the big change underneath the product launches. In 2025, Google expanded AI Overviews globally to more than 200 countries and territories in more than 40 languages. OpenAI launched ChatGPT search in October 2024 to connect users with “original, high-quality content from the web,” and in March 2025 Anthropic added web search to Claude for paid users in the United States. Chat-based search is no longer a novelty. It is becoming part of the everyday discovery stack.

A new category is forming around AI-answer visibility

The market is starting to split into three jobs that traditional search tools never handled cleanly. One layer measures whether a brand appears in AI answers at all. Another tries to improve the odds of being named or cited. A third ties those mentions to business outcomes, so visibility does not remain a vanity metric.

That is why the current crop of products matters. Meridian claims to be the first AI ranking engine, which places it closest to the measurement end of the spectrum. Amplitude’s AI Visibility tool leans into observability and outcome tracking. Lapis, backed by Y Combinator, is built to watch AI responses in real time and adjust website content accordingly. The category is not one product with one use case. It is a new martech stack forming in layers.

Meridian, Amplitude, and Lapis solve different problems

Meridian: measurement

Meridian’s pitch is the simplest and, in some ways, the most familiar to anyone who has lived through the rise of SEO dashboards. As a self-described AI ranking engine, its core promise is measurement: where does a brand show up inside AI answers, and how does that position change over time? That makes Meridian the closest analog to rank tracking in classic search, translated for a conversational interface.

That matters because AI visibility is not binary in practice. A brand can be named once, cited indirectly, or replaced by a competitor entirely. A tool that measures ranking inside answers gives teams a baseline, but it does not by itself explain why the model chose that mention, or how to improve the odds on the next query.

Amplitude: measurement plus attribution

Amplitude’s AI Visibility tool goes further. The company says it shows where a brand appears across major AI platforms, how often competitors are recommended instead, and how AI-generated visits connect to conversions, retention, or revenue. That last piece is the tell. Amplitude is not just saying, “Did the model mention us?” It is asking, “Did that mention matter to the business?”

The company has been aggressive about framing the problem. Its launch materials say that if a brand is not listed in AI answers, it can become effectively invisible to high-intent customers. Amplitude also cites Gartner language about a growing “LLM visibility gap” and the need for specialized observability as generative AI moves into mission-critical production systems. In other words, AI search is no longer being treated as a marketing curiosity. It is being treated as infrastructure.

The adoption numbers show the appetite. Amplitude said more than 27,000 brands had used the free tool, and that customers created more than 70,000 AI Visibility reports in the three months before its February 5, 2026, update. That is not a niche experiment. That is a flood.

Lapis: optimization

Lapis takes the most hands-on approach of the three. Y Combinator’s launch post says the product supports ChatGPT, Claude, Perplexity, Gemini, and Grok, and that it processes over 650 million tokens a day to understand responses for customers. Its own framing is blunt: “helps you rank on AI Search Engines.” The practical implication is that Lapis is not just reporting on visibility; it is trying to shape that visibility in real time by dynamically adjusting website content.

That makes Lapis the optimization layer of the category. If Meridian tells you where you stand, and Amplitude tells you whether that standing is worth money, Lapis is trying to move the answer in your direction. It is a more interventionist model, and one that reflects how unstable AI responses can be from platform to platform.

Why the category is taking off now

One reason these tools are arriving at the same time is that the models do not source information the same way. Yext analyzed more than 6.8 million citations across 1.6 million responses from Gemini, ChatGPT, and Perplexity, and found different weighting patterns by model. Gemini citations were heavily weighted toward brand-owned websites, with 52.15 percent coming from owned sites. ChatGPT relied far more on third-party sources, with 48.73 percent of citations coming from sites such as Yelp, TripAdvisor, and MapQuest.

That difference changes the job for marketers. A brand cannot assume its owned content will dominate every model, and it cannot assume a single optimization tactic will carry across every answer engine. The new playbook has to be structured, consistent, and multi-channel. If the data is fragmented, the answer layer will be fragmented too.

The real gap is not just ranking, it is control

This is where the market gap becomes obvious. A brand needs to know three things at once:

- where it appears across ChatGPT, Gemini, Claude, Perplexity, and other AI surfaces

- why one model cites a brand-owned page while another prefers a third-party directory or review site

- whether that visibility leads to clicks, conversions, retention, or revenue

Amplitude is strongest on the last point. Lapis is pushing on the second by studying model responses and altering content in response to model behavior. Meridian, as a ranking engine, is the cleanest expression of the first. Together they sketch a category that is still taking shape, with room for more specialized tools and clearer standards.

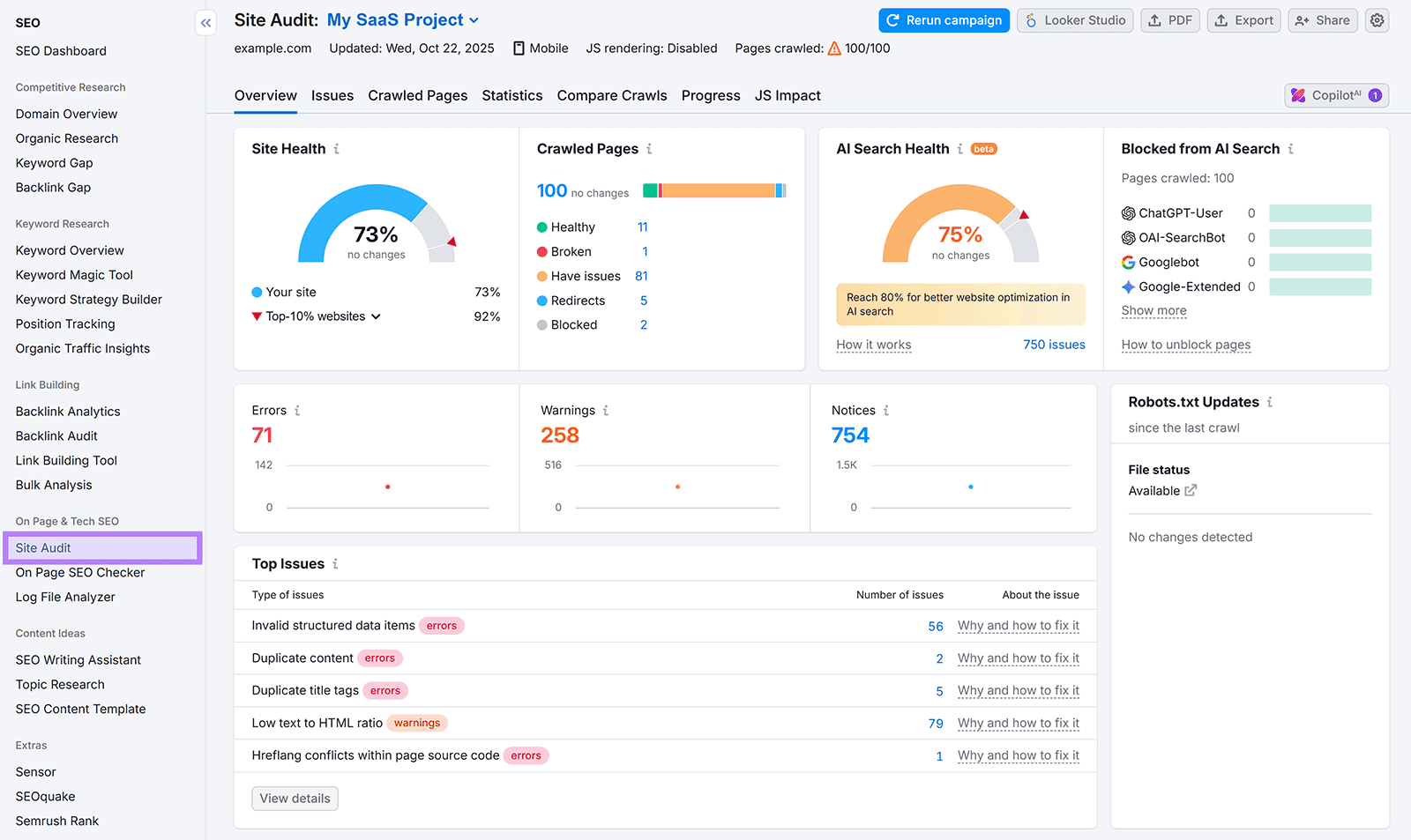

The broader market confirms that this is bigger than one launch. Semrush has already introduced an AI-search visibility framework, and multiple free AI visibility checkers have appeared as well. That crowding is a sign of demand, but it is also a sign that the real product-market fit has not settled yet. The winners will not be the tools that only say, “You are present.” They will be the ones that explain why you were chosen, how to change that choice, and whether it paid off.

Brands spent years optimizing for the click. Now they are learning to optimize for the answer.
