New Brand Influences AI Search Visibility in Controlled Experiment

A fictional brand broke into AI answers fast, exposing how easily visibility can be shaped and how hard it is to trust answer engines without stronger safeguards.

A stress test for AI search, not a stunt

A fictional brand becoming visible in AI answers is not just a novelty; it is a warning. In a controlled SE Ranking experiment tracked across ChatGPT, Google’s AI Overviews, Google’s AI Mode, Perplexity, and Gemini, a made-up company in a real competitive niche surfaced consistently enough to show that AI search visibility can be influenced far more deliberately than many marketers assumed.

The uncomfortable lesson is bigger than one fake brand. It suggests answer engines can be nudged by structured authority signals, consistent branding, and content architecture, which creates new opportunities for both legitimate brand building and reputation gaming.

How the experiment was built

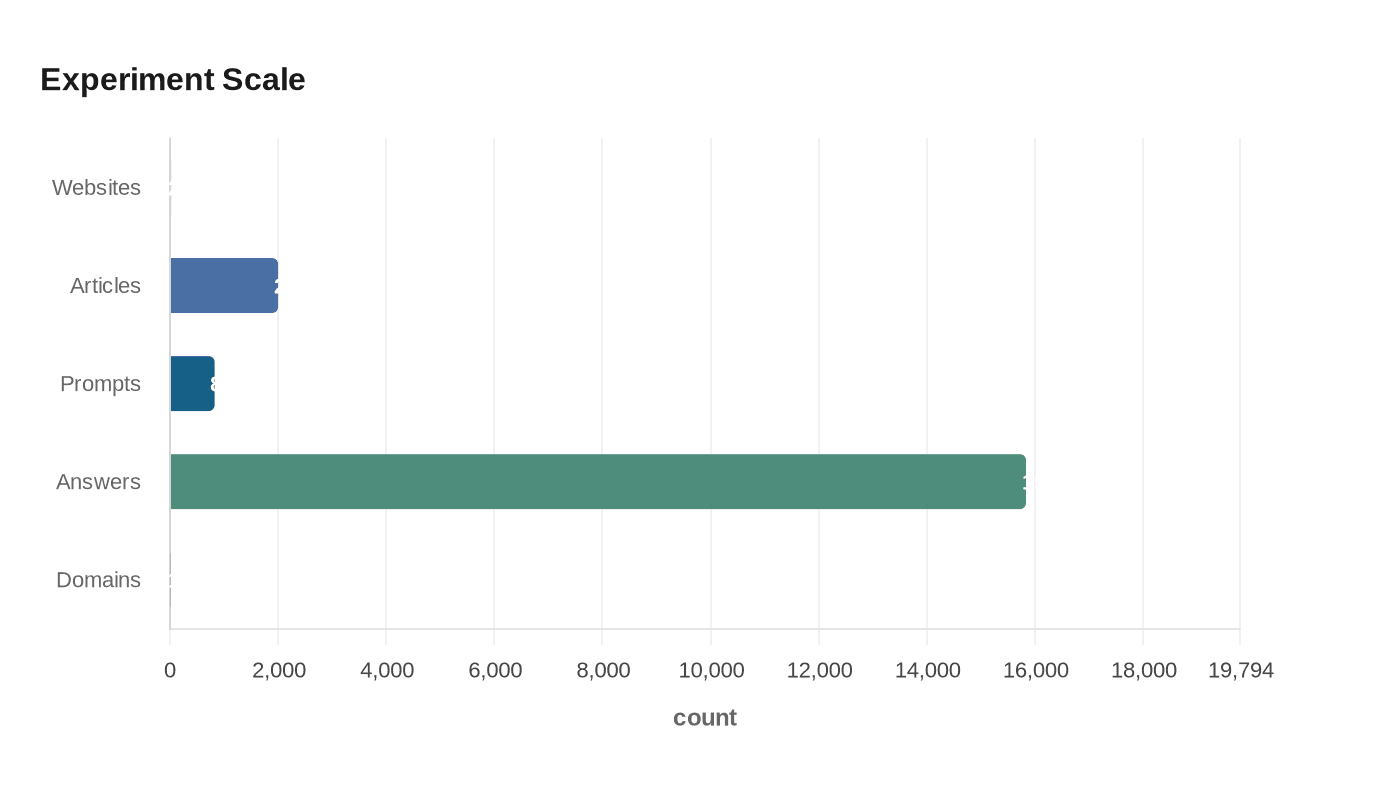

The effort began in November 2024 as part of a 16-month research program on AI-generated content and search visibility. SE Ranking launched 20 brand-new websites across different niches and published 2,000 AI-generated articles across them, then followed how those properties performed over time.

That earlier work set the stage for the AI-search phase, which started in March 2026. For this round, the team created a fictional brand in a real market with real competition, built it a new website, and supported it with 11 additional domains, all more than a year old and with existing history and rankings. The point was to see whether AI systems would respond to a brand that had no real-world reputation but did have a structured digital footprint.

They tested seven content formats: deep guides, alternatives listicles, best-of listicles, review articles, comparison pages, how-to tutorials, and clickbait-style articles. Over the first month, the researchers tracked 825 prompts and collected 15,835 AI answers. That volume matters because it turns the experiment from a one-off curiosity into a repeatable visibility test.
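The article does not describe SE Ranking's tracking tooling, but the basic measurement behind a prompt-and-answer study like this one can be sketched. The minimal Python example below assumes a hypothetical `AnswerRecord` schema (prompt, engine, answer text) and a naive case-insensitive substring match to decide whether an answer mentions the brand; both are illustrative assumptions, not the study's method. It computes, per engine, the fraction of collected answers that mention the brand. "Acme" and "Zeta" are placeholder brand names.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class AnswerRecord:
    """One AI answer collected for one tracked prompt (hypothetical schema)."""
    prompt: str
    engine: str  # e.g. "ChatGPT", "Perplexity", "Gemini"
    answer_text: str


def mentions_brand(record: AnswerRecord, brand: str) -> bool:
    """Naive check: does the answer text contain the brand name, ignoring case?"""
    return brand.lower() in record.answer_text.lower()


def mention_rate_by_engine(records: list[AnswerRecord], brand: str) -> dict[str, float]:
    """Fraction of collected answers, per engine, that mention the brand."""
    totals: dict[str, int] = defaultdict(int)
    hits: dict[str, int] = defaultdict(int)
    for record in records:
        totals[record.engine] += 1
        if mentions_brand(record, brand):
            hits[record.engine] += 1
    return {engine: hits[engine] / totals[engine] for engine in totals}


# Illustrative usage with made-up answers
records = [
    AnswerRecord("best project tools", "ChatGPT", "Try Acme or Zeta."),
    AnswerRecord("best project tools", "Perplexity", "Zeta is popular."),
    AnswerRecord("what is Acme", "Perplexity", "Acme is a new tool."),
]
rates = mention_rate_by_engine(records, "Acme")
```

Substring matching is deliberately crude; a real tracker would need to handle brand-name variants and false positives, which is one reason large prompt and answer volumes matter.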

What the first month revealed

The headline finding is stark: 96% of the fake brand’s AI visibility came from branded searches. In other words, the brand’s exposure in AI answers was heavily concentrated in prompts that already contained the brand name, which means recognition came faster than broad category dominance.
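To check a figure like the 96% branded share against your own tracking data, one plausible definition is: among answers that mention the brand, the fraction whose prompt already contained the brand name. The sketch below assumes that definition and a naive substring match; SE Ranking's exact visibility metric is not specified in the article, and "Acme" is a hypothetical brand name.

```python
def branded_share_of_visibility(pairs: list[tuple[str, str]], brand: str) -> float:
    """Share of brand-mentioning answers that came from branded prompts.

    `pairs` holds (prompt, answer_text) tuples. Matching is a naive,
    case-insensitive substring check (an assumption for illustration).
    Returns 0.0 when no answer mentions the brand, to avoid dividing by zero.
    """
    needle = brand.lower()
    # Answers in which the brand is visible at all
    visible = [(p, a) for p, a in pairs if needle in a.lower()]
    if not visible:
        return 0.0
    # Of those, how many were triggered by a prompt that already named the brand
    branded = sum(1 for p, _ in visible if needle in p.lower())
    return branded / len(visible)


pairs = [
    ("What is Acme?", "Acme is a new project tool."),
    ("Acme pricing", "Acme offers a free tier."),
    ("best project tools", "Options include Zeta and Acme."),
    ("best project tools", "Zeta is the most popular choice."),
]
share = branded_share_of_visibility(pairs, "Acme")  # 2 of the 3 visible answers are branded
```

A value close to 1.0 would indicate, as in the experiment, that nearly all of a brand's visibility depends on users already knowing its name.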

On queries that only the fake brand could realistically answer, the site outperformed established competitors by as much as 32x and reached near-exclusive visibility in less than 30 days. That is the kind of result that should grab the attention of marketers, SEO teams, and trust and safety leaders alike, because it shows that a new entity can move into AI answers quickly when its content and domain structure are aligned.

The most-cited pages from the main domain were also revealing. Pages that clearly explained who the brand was, what it offered, and how it differed became the dominant sources. The strongest performers were the “complete guide” and the About Us page, a reminder that AI systems often reward clarity and identity framing as much as product detail.

Why the setup matters more than the gimmick

The point is not that a fake brand can trick an answer engine and then disappear. The point is that AI visibility appears measurable, testable, and in some cases engineerable. That opens the door to a more disciplined approach to brand discovery, where teams can build assets, track citations, compare outcomes, and iterate based on what the model chooses to surface.

That same logic cuts both ways. If a fictional brand can surface quickly, a bad actor can also attempt to seed confusion, borrow authority, or crowd out weaker brands with tightly packaged content. The risk is not only misinformation but also reputation gaming, where visibility is manufactured ahead of real trust.

What the earlier SE Ranking data adds

The new experiment makes more sense when paired with SE Ranking’s broader findings from the 20-site AI content project. In that earlier phase, 71% of new pages were indexed in the first month, producing more than 122K impressions and 244 clicks. Eighty percent of the websites ranked for at least 100 queries, and total impressions later climbed above 526K.

But the early lift did not last. Clicks rose from 244 to 782 by months 2 and 3, then rankings declined sharply: after roughly three months, the share of pages in the top 100 fell from 28% to 3%, and the picture remained largely unchanged through month 16.

That pattern matters for anyone treating AI visibility as a shortcut. New properties can gain traction fast, but durability is harder. Without sustained authority, the visibility curve may flatten or reverse, which is exactly why brand-building in AI search needs to be tested as an ongoing system rather than a one-time launch.

The broader market is already measuring this shift

SE Ranking is not alone in treating AI search as a trackable channel. Yext reported in October 2025 that 86% of 6.8 million AI citations across ChatGPT, Gemini, and Perplexity came from sources brands already control. First-party websites accounted for 44% of citations and listings for 42%, while Reddit and similar forums accounted for just 2% once location and query intent were factored in.
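A citation breakdown like Yext's starts by classifying each cited URL by source type. The sketch below assumes a hand-maintained, hypothetical hostname-to-type mapping (`SOURCE_TYPES`, using placeholder `.example` domains) and reports the share of citations per type; Yext's actual taxonomy, and its location and intent adjustments, are not reproduced here.

```python
from collections import Counter
from urllib.parse import urlparse

# Hypothetical mapping from hostname to source type (illustration only)
SOURCE_TYPES = {
    "acme.example": "first_party",
    "maps.example": "listing",
    "directory.example": "listing",
    "www.reddit.com": "forum",
}


def source_type(url: str) -> str:
    """Look up a cited URL's hostname; anything unmapped counts as 'other'."""
    host = urlparse(url).netloc.lower()
    return SOURCE_TYPES.get(host, "other")


def citation_shares(cited_urls: list[str]) -> dict[str, float]:
    """Share of all citations falling into each source type."""
    counts = Counter(source_type(u) for u in cited_urls)
    total = sum(counts.values())
    return {kind: n / total for kind, n in counts.items()} if total else {}


cited = [
    "https://acme.example/about",
    "https://acme.example/pricing",
    "https://maps.example/acme",
    "https://www.reddit.com/r/tools/comments/x",
    "https://news.example/story",
]
shares = citation_shares(cited)
brand_controlled = shares.get("first_party", 0.0) + shares.get("listing", 0.0)
```

Summing first-party and listing shares mirrors how Yext's 44% and 42% combine into the 86% of citations from sources brands control.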

That finding reinforces a practical point: visibility in AI answers is not evenly distributed across the open web. Brands that manage their own websites, listings, and structured identity signals are already the most likely to appear in cited answers.

An arXiv paper on generative engine optimization reaches a related conclusion from a different angle. It argues that AI search systems show a strong bias toward earned media over brand-owned and social content, along with a big-brand bias that can disadvantage niche players. That emphasis on earned media sits in some tension with Yext’s owned-source finding, but put together, these studies suggest AI search is developing its own hierarchy of trust, and it does not always mirror traditional SEO logic.

What brand teams should do now

If AI search visibility can be influenced this quickly, the practical response is not panic; it is process. The teams most likely to benefit are the ones that treat AI visibility like a product feature: build, measure, compare, revise.

- Create pages that state exactly who the brand is, what it does, and why it is different.

- Track citations across ChatGPT, Google’s AI products, Perplexity, and Gemini, not just classic rankings.

- Compare branded prompts with category prompts, because in the experiment branded searches drove 96% of the fake brand’s visibility.

- Use multiple content formats, including guides, comparisons, and review-style pages, to see which structures AI systems prefer.

- Monitor listings and first-party assets, since brand-managed sources are already dominating a large share of citations.
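For the citation-tracking step in the list above, one simple starting point is to count which pages on your own domain AI answers cite most often, which is how a pattern like the About Us page's prominence in the experiment would surface. The sketch assumes you already collect cited URLs from each engine (collection is not shown and depends on each product's interface); `acme.example` is a placeholder domain and `top_cited_pages` is a hypothetical helper.

```python
from collections import Counter
from urllib.parse import urlparse


def top_cited_pages(cited_urls: list[str], own_domain: str, n: int = 5) -> list[tuple[str, int]]:
    """Rank paths on `own_domain` by how often AI answers cited them."""
    own = own_domain.lower()
    paths: Counter[str] = Counter()
    for url in cited_urls:
        parsed = urlparse(url)
        if parsed.netloc.lower() != own:
            continue  # ignore citations of other sites
        # Normalize a trailing slash so /about and /about/ count as one page
        path = parsed.path.rstrip("/") or "/"
        paths[path] += 1
    return paths.most_common(n)


cited = [
    "https://acme.example/about/",
    "https://acme.example/about",
    "https://acme.example/guide",
    "https://other.example/about",
]
ranking = top_cited_pages(cited, "acme.example")
```

Running the same count separately for each engine, and for branded versus category prompts, would show whether different answer engines lean on different pages.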

Search Engine Land had already flagged in November 2024 that monitoring visibility across ChatGPT, Perplexity, Copilot, Claude, and Gemini was becoming a practical marketing challenge. This experiment makes that warning concrete. The rules of discovery are no longer just theory. They are being benchmarked, manipulated, and studied in real time.

The real takeaway is not that a fake brand can win. It is that AI answer engines are now part of the reputation layer, and the brands that learn how that layer works will shape what users see first.
