Semrush says AI agents now search, compare, and act on websites

AI agents are becoming the new visitors, and Semrush says visibility now depends on whether they can crawl, understand, and complete tasks on your site.

The new SEO brief is no longer just “can AI mention us?”

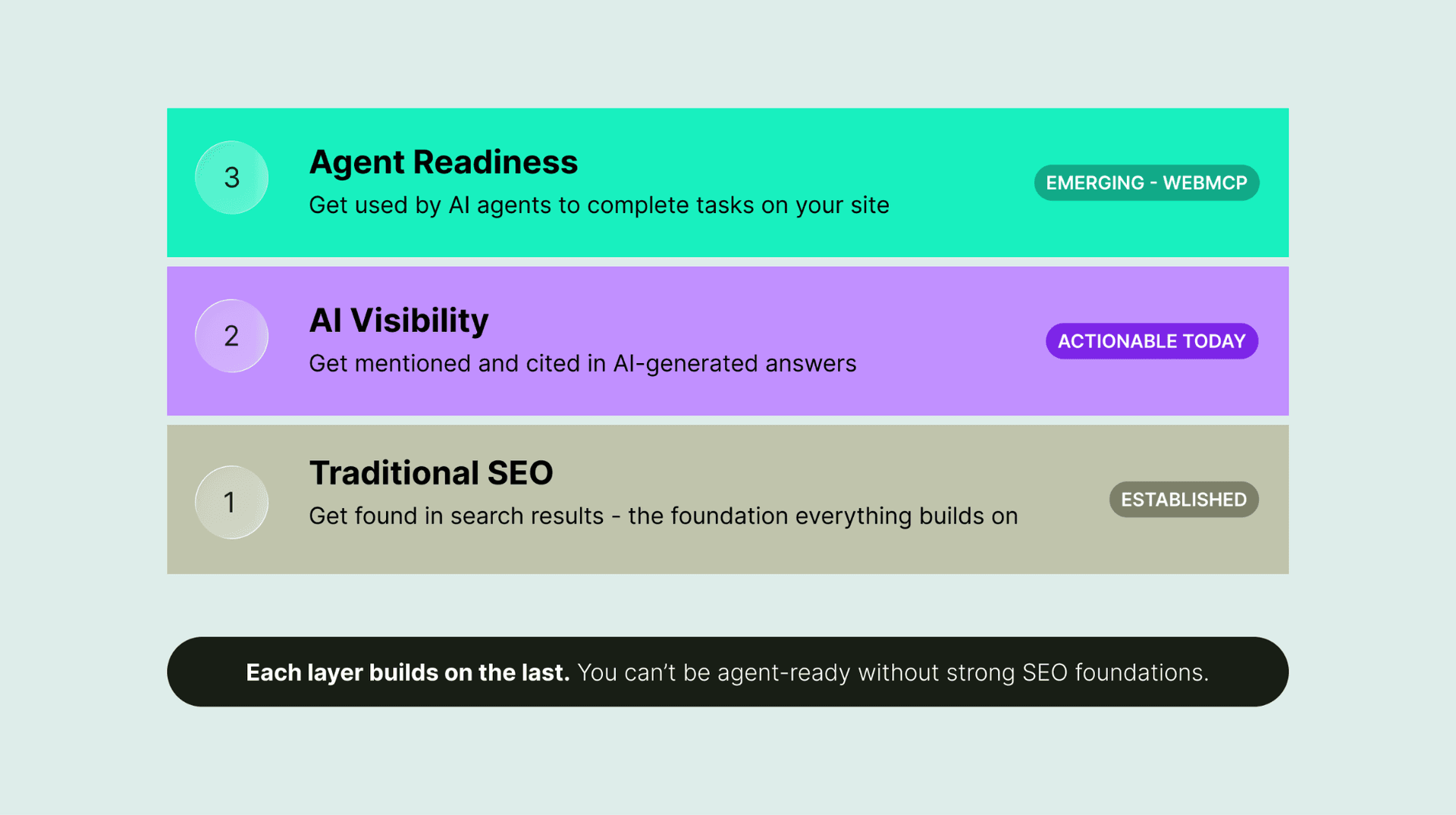

Semrush’s agentic search guidance pushes the field one step further: AI systems are not only answering questions, they are beginning to search, compare, and act on a user’s behalf. That changes the job for every brand with a website. The winning page is no longer just the one that earns a citation in an AI response; it is the one an agent can actually land on, interpret, and use to finish a task.

Semrush calls that test agentic readiness. In practical terms, a site is agent-ready if an AI agent can reach it, understand the content, and complete a useful action such as checking pricing, submitting a form, or making a purchase. That is a more demanding standard than classic AI visibility, because a page now has to behave like an interface, not just a document.

What changes when agents do the searching

Traditional SEO has always cared about crawlability, indexability, and clear page structure. Agentic search keeps those rules, then adds a new layer: the machine has to be able to do something useful with what it finds. A page that looks fine to a human but hides core details behind heavy JavaScript, inconsistent markup, or blocked resources can still fail in an AI-driven workflow.

Semrush’s point is blunt: the same signals that help a brand appear in AI-generated answers also affect whether an agent can use the site successfully. That means brands should stop treating AI search and technical SEO as separate tracks. If the page is not easy to parse, the agent may never reach the decision point where it can compare products, read a form, or complete a transaction.

Start with crawlability, not just content quality

The first tactical move is to reduce obstacles between an AI crawler and the page content. Semrush recommends cutting back on JavaScript-heavy elements, making content easier to parse, and auditing for crawler access. That is not a cosmetic adjustment; it is the foundation for whether an agent can understand what the page offers.

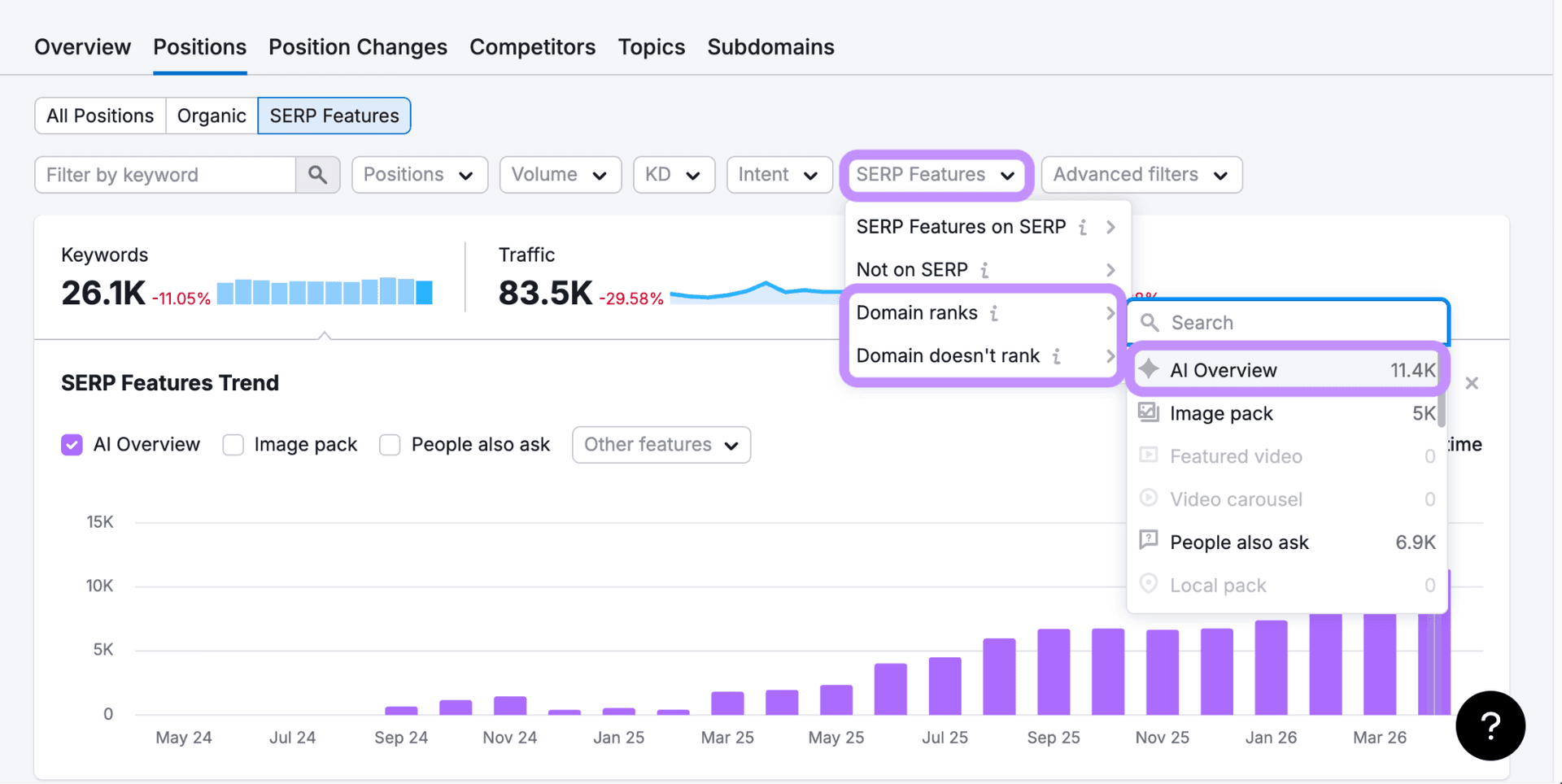

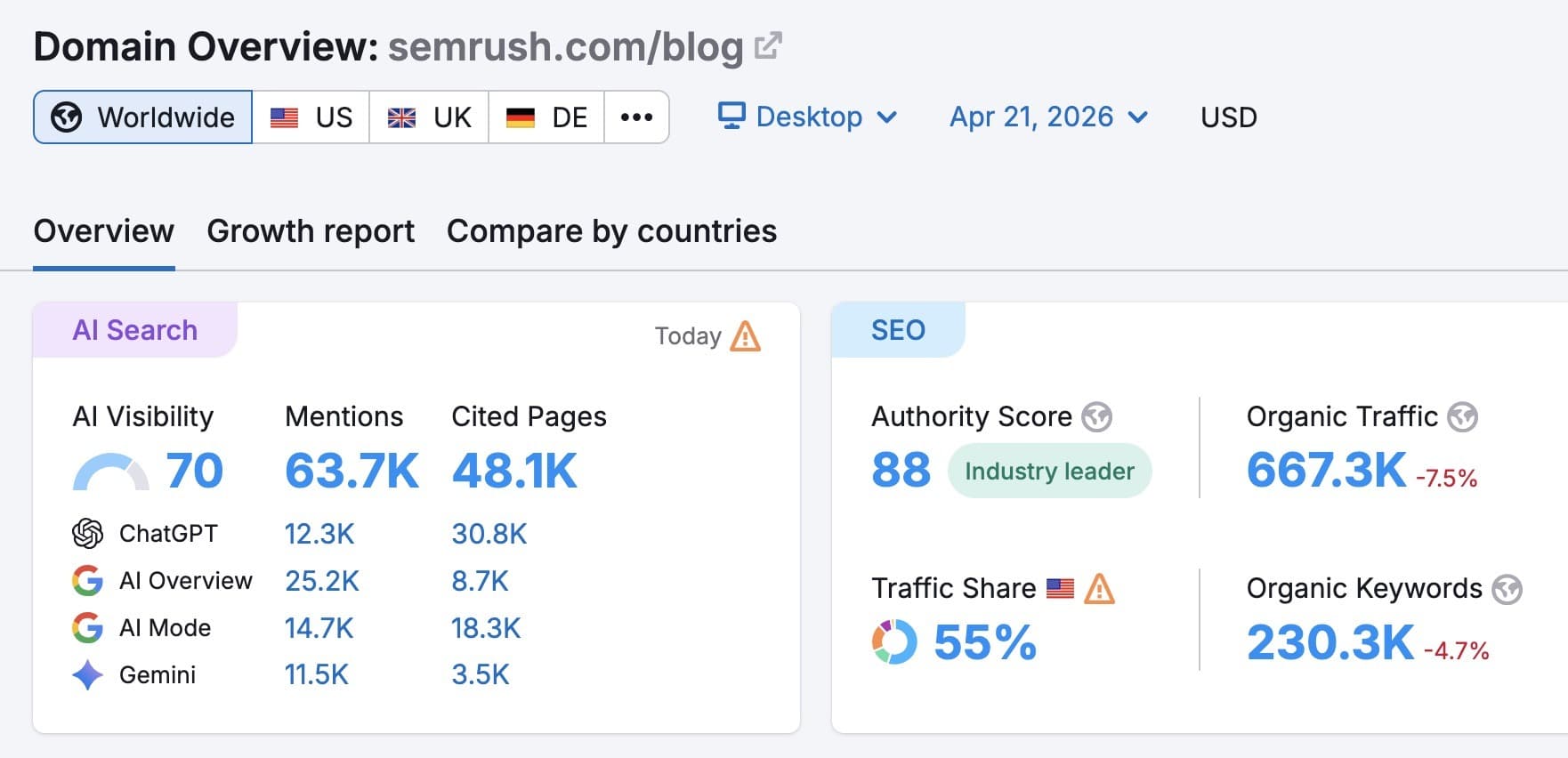

Semrush’s Site Audit now gives teams an AI Search Health score to measure whether a site is optimized for AI-driven visibility. The score looks at whether AI bots can crawl content, whether key AI-visibility elements such as structured data and llms.txt are present, and whether technical blockers stand in the way. Semrush also introduced a dedicated AI Search category in Site Audit on July 1, 2025, signaling that AI-facing optimization is now a distinct workflow, not a side note.

What to inspect first

- Reduce reliance on client-side rendering for essential content

- Expose pricing, availability, product specs, and form labels in the raw HTML where possible

- Use structured data to make entities, offers, and actions easier for machines to interpret

- Check for blocked scripts, disallowed paths, and anything that prevents bots from reaching key pages

- Add or validate llms.txt where it makes sense for your site architecture
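One way to spot-check the second item is to look at the HTML a server returns before any JavaScript runs. The sketch below is only an illustration, not a Semrush feature: the helper name `missing_from_raw_html` and the sample phrases are hypothetical, and it assumes you already have the raw response body as a string.

```python
from html.parser import HTMLParser


class _TextExtractor(HTMLParser):
    """Collects text nodes, skipping the contents of <script> and <style>."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.chunks.append(data)


def missing_from_raw_html(html, required_phrases):
    """Return the phrases that do NOT appear in the text of the unrendered HTML."""
    parser = _TextExtractor()
    parser.feed(html)
    parser.close()
    # Normalize whitespace and case so the comparison is forgiving.
    text = " ".join(" ".join(parser.chunks).split()).lower()
    return [phrase for phrase in required_phrases if phrase.lower() not in text]


# Hypothetical page: the product name is in the markup, the price is injected by a script.
sample_html = (
    "<html><body><h1>Acme Widget</h1><div id='price'></div>"
    "<script>render('$49.00')</script></body></html>"
)
print(missing_from_raw_html(sample_html, ["Acme Widget", "$49.00"]))
```

A phrase that shows up in the returned list is content a non-rendering crawler would likely never see, which makes it a candidate for server-side rendering.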

Use the right Semrush checks before the agent hits your site

Semrush’s workflow is designed to show where AI-readiness breaks down. The Blocked from AI Search widget surfaces crawler restrictions, while the Issues tab can flag problems such as broken anchor text or pages missing llms.txt. The Log File Analyzer then lets teams inspect real bot behavior instead of guessing from theory.

That last step matters because agentic search is about actual behavior, not just policy. A site may look permissive on paper yet still be difficult for bots to traverse in practice. Log analysis reveals whether important pages are being visited, whether bots are stalling on redirects or scripts, and whether the site is delivering the content an agent needs to complete a task.
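For teams without access to a log analysis tool, the same idea can be approximated with a few lines of scripting. This is a minimal sketch that assumes access logs in the common "combined" format; the function name and the sample log lines are made up for illustration, and the user-agent tokens are the ones Semrush lists as worth tracking.

```python
import re
from collections import Counter, defaultdict

# Tokens to look for inside the User-Agent header.
AI_BOT_TOKENS = ("GPTBot", "ChatGPT-User", "OAI-SearchBot", "ClaudeBot")

# Matches the request, status, size, referrer, and user-agent fields
# of a combined-format access log line.
LOG_LINE = re.compile(
    r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)


def summarize_ai_bot_hits(lines):
    """Count (path, status) pairs per AI bot; non-bot and unparsable lines are ignored."""
    summary = defaultdict(Counter)
    for line in lines:
        match = LOG_LINE.search(line)
        if not match:
            continue
        agent = match.group("agent").lower()
        bot = next((t for t in AI_BOT_TOKENS if t.lower() in agent), None)
        if bot is None:
            continue
        summary[bot][(match.group("path"), int(match.group("status")))] += 1
    return {bot: dict(counts) for bot, counts in summary.items()}


# Illustrative log lines; the user-agent strings are simplified, not official values.
sample_log = [
    '203.0.113.5 - - [01/Jul/2025:10:00:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '203.0.113.6 - - [01/Jul/2025:10:00:01 +0000] "GET /checkout HTTP/1.1" 301 0 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '198.51.100.7 - - [01/Jul/2025:10:00:02 +0000] "GET / HTTP/1.1" 200 1024 "-" "Mozilla/5.0 (Windows NT 10.0)"',
]
print(summarize_ai_bot_hits(sample_log))
```

Clusters of 3xx, 4xx, or 5xx responses on pricing, comparison, or checkout paths are the kind of stall the paragraph above describes.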

Know which bots matter now

The user-agent list has become part of the playbook. Semrush names the user agents that site owners should track: GPTBot, ChatGPT-User, OAI-SearchBot, and ClaudeBot. Those are not abstract labels for the technical team to file away. They are the identifiers that determine whether a site can be discovered, read, and, in some cases, used by AI systems that increasingly act as intermediaries.

OpenAI says it uses web crawlers and user agents such as GPTBot and OAI-SearchBot, and that site owners can manage access through robots.txt. OpenAI’s Help Center says publishers may need to update robots.txt so OAI-SearchBot can access pages. It also notes that if a disallowed page is discovered through other means, ChatGPT Atlas may still surface the link and page title unless the page is noindexed. That makes robots.txt necessary, but not sufficient, for controlling how your content appears in AI-driven experiences: keeping a page out entirely also takes a noindex directive.
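A quick way to see what a robots.txt file says to each of those user agents is Python's standard-library `urllib.robotparser`. The sketch below is an assumption-laden illustration: the helper name `ai_bot_access`, the sample rules, and the example URL are invented, and the result reflects how the Python parser reads the file, which may not match every crawler's own interpretation.

```python
from urllib.robotparser import RobotFileParser

# User agents named in Semrush's guidance.
AI_USER_AGENTS = ["GPTBot", "ChatGPT-User", "OAI-SearchBot", "ClaudeBot"]


def ai_bot_access(robots_txt, url, user_agents=AI_USER_AGENTS):
    """Map each user agent to True/False: may it fetch `url` under these rules?"""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in user_agents}


# Hypothetical rules: GPTBot is blocked site-wide, everyone else only from /private/.
sample_rules = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /private/
"""
print(ai_bot_access(sample_rules, "https://example.com/pricing"))
```

Running the same check against key URLs (pricing, product, checkout) shows at a glance which agents are shut out before they ever reach the content.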

Anthropic’s system cards draw a similar line around crawler behavior. Claude’s crawler follows robots.txt instructions, and Anthropic says it does not access password-protected pages or pages requiring sign-in or CAPTCHA verification. For publishers, the message is clear: if a flow depends on barriers a human can clear manually, an agent may stop cold.

Think in tasks, not just traffic

This is where ecommerce brands, software vendors, and any company with a comparison or checkout flow need to shift their thinking. Agentic search is not only about winning awareness at the top of the funnel. It is about whether an AI system can compare vendors, verify details, and complete a transaction without getting lost in the interface.

OpenAI’s GPT Actions docs point toward that future by showing how ChatGPT can invoke third-party actions so users can ask in natural language and receive structured results back. Anthropic’s latest Claude system cards underline the same direction with strong agentic-task and computer-use capabilities. The site owner’s job is to make sure the website is ready for those machine-mediated journeys: clear product data, stable forms, straightforward navigation, and markup that exposes meaning instead of hiding it.

The page-level decisions that matter most

- Put critical answers in visible, machine-readable HTML

- Use schema for products, offers, reviews, organization details, and action-oriented pages

- Avoid burying essential pricing or inventory behind scripts or modal-only interfaces

- Make forms concise, labeled, and resilient enough for automated completion

- Keep internal links descriptive so agents can move from comparison to conversion without guesswork
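To make the schema item concrete, here is a minimal sketch of a schema.org `Product` with a nested `Offer`, rendered as a JSON-LD script tag. The function name, product details, and URL are hypothetical; which properties a given search or AI system actually reads is not something the source specifies, so treat the field selection as an assumption.

```python
import json


def product_jsonld(name, sku, price, currency, in_stock, url):
    """Build a JSON-LD <script> tag describing a product and its offer."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "sku": sku,
        "offers": {
            "@type": "Offer",
            "url": url,
            "price": f"{price:.2f}",
            "priceCurrency": currency,
            "availability": (
                "https://schema.org/InStock"
                if in_stock
                else "https://schema.org/OutOfStock"
            ),
        },
    }
    # Escape "</" so a stray value cannot close the script tag early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


print(product_jsonld("Acme Widget", "AW-1", 49, "USD", True, "https://example.com/widget"))
```

Emitting the tag server-side keeps price and availability in the raw HTML, in line with the first and third items in the list.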

llms.txt and robots.txt are becoming complementary controls

The /llms.txt proposal, maintained at llmstxt.org, has emerged as a community effort to standardize a markdown file that helps LLMs use websites at inference time. It does not replace robots.txt, but it reflects the same broader shift: site owners want a clearer way to tell machine systems what matters, what is allowed, and how content should be consumed.
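As a rough illustration of the format the proposal describes (an H1 title, a blockquote summary, then H2 sections containing lists of links with optional notes), the sketch below assembles such a file from plain data. The function name, site name, and URLs are placeholders, and the proposal is a community draft, so the layout may change.

```python
def build_llms_txt(site_name, summary, sections):
    """Assemble llms.txt-style markdown.

    `sections` maps a heading to a list of (title, url, note) tuples;
    an empty note is omitted from the output.
    """
    lines = [f"# {site_name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for title, url, note in links:
            entry = f"- [{title}]({url})"
            if note:
                entry += f": {note}"
            lines.append(entry)
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


# Placeholder content for a hypothetical site.
print(
    build_llms_txt(
        "Acme",
        "Widgets for teams.",
        {
            "Docs": [
                ("Pricing", "https://example.com/pricing.md", "Plans and prices"),
                ("FAQ", "https://example.com/faq.md", ""),
            ]
        },
    )
)
```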

That shift is already visible in Google’s own AI ecosystem. Google says AI Overviews are now available in more than 200 countries and territories and in more than 40 languages, which shows how quickly AI-mediated discovery has moved from experiment to default behavior at global scale. When AI systems are this embedded in search experiences, page architecture and machine readability become business decisions, not technical afterthoughts.

The tactical playbook from here

The next SEO shift is not about abandoning content quality. It is about pairing content quality with interface quality. Brands that want to stay visible when AI agents choose sources and vendors on a user’s behalf need pages that are understandable, navigable, and task-completable from the first crawl to the final click.

Semrush’s framework makes that concrete. Audit for access, strip away friction, validate structured data, watch the bot logs, and treat agent readiness as a live operational requirement. In a market where AI systems increasingly do the searching, comparing, and acting, the sites that win will be the ones built to be used, not merely read.
