Monday.com Builds MCP to Turn Figma Designs Into Production Code

monday.com’s new MCP shows why AI only helps when it respects design rules. The real leverage shifts to system design, QA judgment, and workflow control.

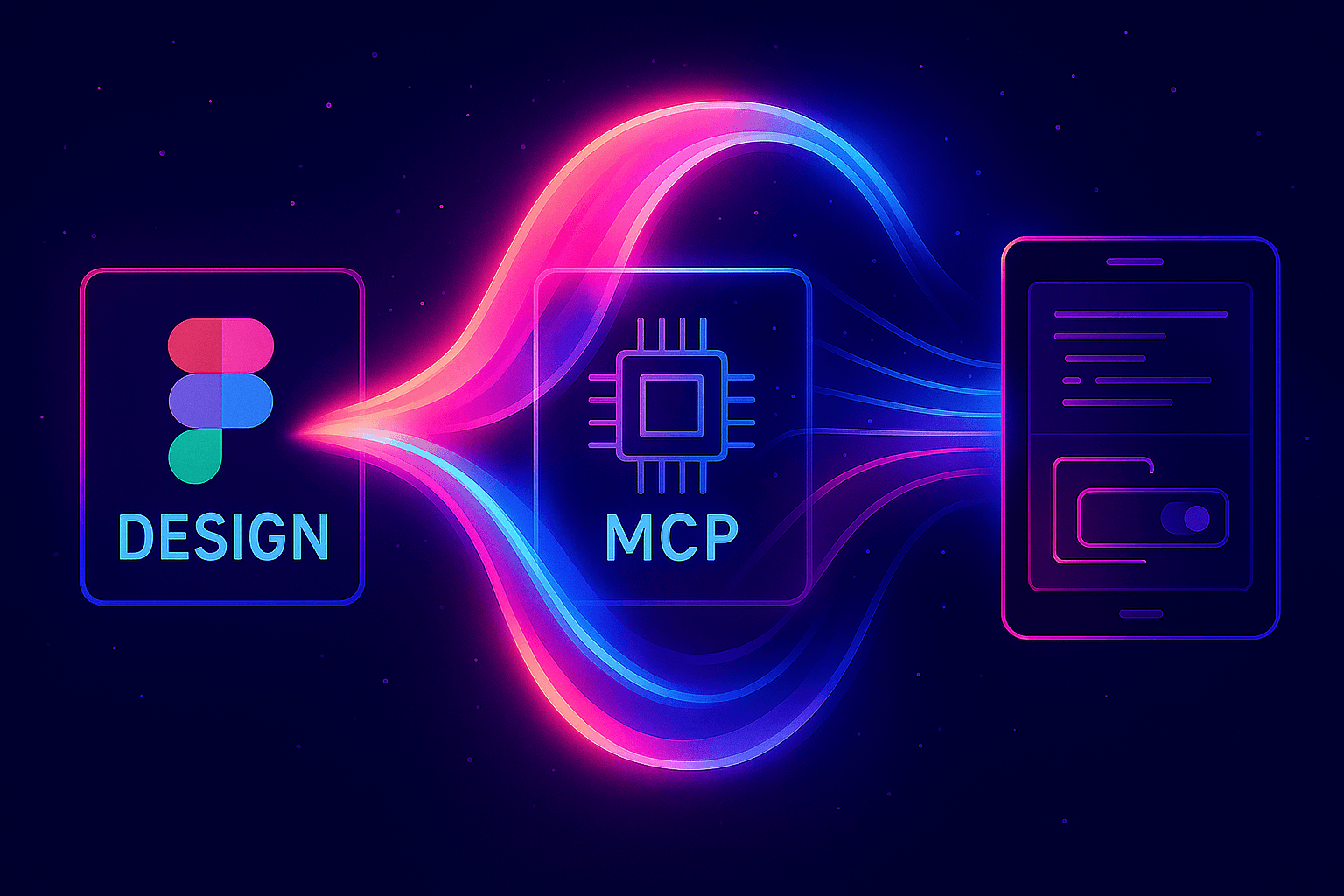

From pixel mockup to governed code

monday.com is no longer treating AI as a shortcut from Figma to finished UI. In its latest engineering write-up, the company lays out a more disciplined view of frontend automation: the model should not just invent code that looks right; it should produce code that fits the company’s design system, accessibility rules, and component contracts.

That matters inside monday because the hard part is not generating more lines of code. The hard part is making sure those lines survive review, reuse, and scale on a platform with a large customer base and a sprawling internal architecture.

The failed shortcut

The team tested a simple workflow first: paste a Figma link into Cursor and run it through the Figma MCP. The output looked plausible at a glance, but it failed the standards that actually keep a design system coherent. It hard-coded colors, overrode typography, and fell back to manual CSS instead of using the components the company already trusts.

That failure is the key lesson. The model was not short on capability; it was short on context. It did not know which components existed, which props were valid, which tokens had to be used, or which accessibility rules were non-negotiable. In other words, the problem was not “AI cannot code.” The problem was “AI cannot safely guess the rules of your frontend.”

For product leaders, that distinction is important. A fast-looking prototype can still create slower teams if engineers have to clean up token drift, rework inaccessible patterns, and replace one-off styling with the real system later. monday’s experience shows how easily AI can increase output while lowering code quality if the guardrails are vague.
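Some of the failures described above, such as hard-coded colors, can be caught mechanically before review. The following is a minimal sketch of such a check, not monday’s tooling: the function name `findHardCodedColors`, the `Violation` shape, and the CSS variable name in the usage note are all hypothetical, and the regular expression is deliberately crude (it would also flag a short hex-like ID selector such as `#add`).

```typescript
// Hypothetical lint pass: flag raw color literals that should be design tokens.
// Assumption: tokens are referenced as CSS custom properties, e.g. var(--some-token).

interface Violation {
  line: number;   // 1-based line number in the generated source
  value: string;  // the raw literal that was found
  reason: string;
}

// Matches hex colors (#rgb, #rgba, #rrggbb, #rrggbbaa) and rgb()/rgba()/hsl()/hsla() calls.
const RAW_COLOR =
  /#(?:[0-9a-fA-F]{8}|[0-9a-fA-F]{6}|[0-9a-fA-F]{3,4})\b|\b(?:rgb|hsl)a?\([^)]*\)/g;

function findHardCodedColors(source: string): Violation[] {
  const violations: Violation[] = [];
  source.split("\n").forEach((text, index) => {
    // With a global regex, match() returns every match on the line, or null.
    const matches = text.match(RAW_COLOR) || [];
    for (const value of matches) {
      violations.push({
        line: index + 1,
        value,
        reason: "raw color literal; use a design token instead",
      });
    }
  });
  return violations;
}
```

Run against a generated stylesheet containing `color: #323338;` and `background: var(--primary-background-color);`, this sketch would report the first declaration and leave the second alone. A real check would also need to cover typography overrides and one-off CSS, which this example does not attempt.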

Why the design-system MCP changes the workflow

monday’s answer was to build a design-system MCP that exposes deterministic, structured tools to the model. Instead of asking the agent to infer the platform from a prompt or a screenshot, the company gives it machine-readable knowledge about what the system allows and what it forbids.

That structured layer does three things at once:

- It tells the agent which components exist and how they can be composed.

- It defines which props are valid, so the code generator cannot improvise unsupported behavior.

- It enforces design tokens and accessibility rules, so the output stays inside the company’s standards.
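To make the three points above concrete, here is a minimal sketch of what machine-readable design-system knowledge could look like, along with a deterministic validator an agent’s output could be checked against. Everything in it is an assumption for illustration: the `registry` contents, the `ComponentSpec` and `Usage` types, and the `validateUsage` function are hypothetical and are not taken from monday’s MCP or from Vibe’s actual API.

```typescript
// Hypothetical registry: which components exist, which props they accept,
// and which children they may contain. Names and values are illustrative only.

interface PropSpec {
  required: boolean;
  allowed?: readonly string[]; // if present, the prop value must be one of these
}

interface ComponentSpec {
  props: Record<string, PropSpec>;
  allowedChildren: readonly string[];
}

const registry: Record<string, ComponentSpec> = {
  Button: {
    props: {
      kind: { required: false, allowed: ["primary", "secondary", "tertiary"] },
      ariaLabel: { required: true }, // stands in for an accessibility rule
    },
    allowedChildren: ["Icon", "Text"],
  },
  Icon: { props: { name: { required: true } }, allowedChildren: [] },
  Text: { props: {}, allowedChildren: [] },
};

interface Usage {
  component: string;
  props: Record<string, string>;
  children?: Usage[];
}

// Own-property check, so keys like "constructor" are not mistaken for entries.
function has(obj: object, key: string): boolean {
  return Object.prototype.hasOwnProperty.call(obj, key);
}

// Returns a list of rule violations; an empty list means the usage is valid.
function validateUsage(usage: Usage): string[] {
  if (!has(registry, usage.component)) {
    return [`unknown component: ${usage.component}`];
  }
  const spec = registry[usage.component];
  const errors: string[] = [];

  for (const name of Object.keys(usage.props)) {
    if (!has(spec.props, name)) {
      errors.push(`${usage.component}: unsupported prop "${name}"`);
      continue;
    }
    const allowed = spec.props[name].allowed;
    const value = usage.props[name];
    if (allowed && allowed.indexOf(value) === -1) {
      errors.push(`${usage.component}: invalid value "${value}" for prop "${name}"`);
    }
  }
  for (const name of Object.keys(spec.props)) {
    if (spec.props[name].required && !has(usage.props, name)) {
      errors.push(`${usage.component}: missing required prop "${name}"`);
    }
  }
  for (const child of usage.children || []) {
    if (spec.allowedChildren.indexOf(child.component) === -1) {
      errors.push(`${usage.component}: cannot contain ${child.component}`);
    }
    errors.push(...validateUsage(child));
  }
  return errors;
}
```

In this sketch, a `Button` with `kind: "danger"`, an extra `color` prop, and no `ariaLabel` would produce three errors, while an unregistered component is rejected outright. The point of the design is that the answer comes from a lookup rather than from the model’s guess.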

This is a different model of AI adoption than the “type a prompt and hope” approach that still dominates many teams. monday is treating the AI agent like a junior contributor that needs a source of truth, not like a magical designer-engineer hybrid that can intuit policy from aesthetics.

For engineering managers, that shifts where value sits. The leverage is less in writing raw UI code and more in designing the system the model can safely operate inside. The work becomes setting constraints, validating edge cases, and deciding when a generated result is acceptable versus when it needs human revision.

How this fits monday’s wider AI strategy

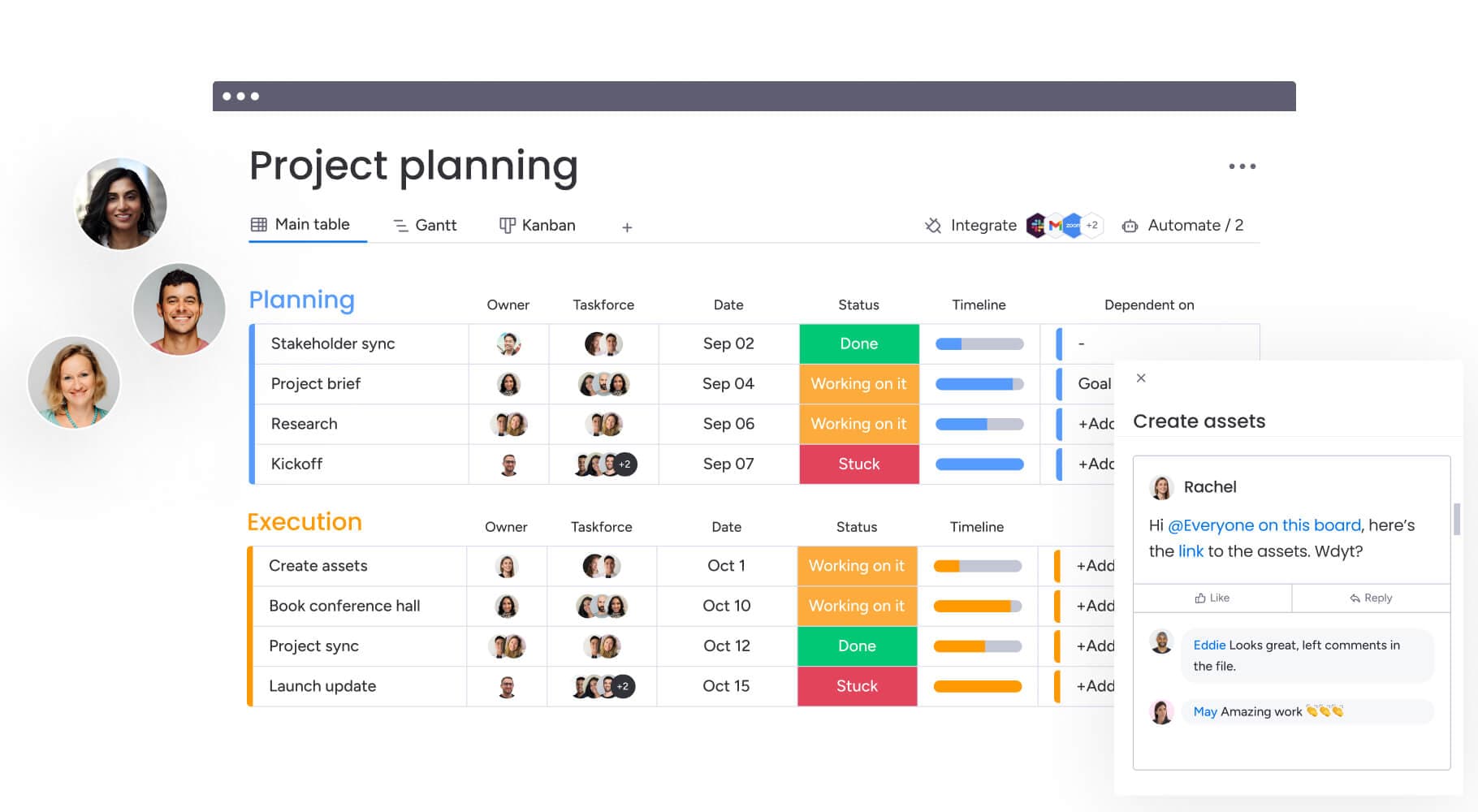

The Figma-to-code workflow is not an isolated experiment. monday said in 2025 that its AI strategy rested on three pillars: AI Blocks, Product Power-ups, and the Digital Workforce. That framing signaled a company-wide effort to make AI useful for non-technical users and enterprise teams without forcing organizations to add a lot more headcount or process overhead.

By July 2025, that strategy had hardened into product names that showed up across the platform: monday magic, monday vibe, and monday sidekick. The message was not just that monday was adding AI features. It was that the company was recasting itself around work execution, with AI stitched into the flow of making, deciding, and coordinating.

The financial backdrop helps explain why. monday said fiscal 2025 revenue reached $1.232 billion, up 27% year over year, and that customers with more than $50,000 in ARR represented 41% of total ARR. It also said monday vibe became the fastest product in company history to pass $1 million in ARR. Those numbers suggest that the company’s AI bets are not decorative; they are being built for a customer base large enough to reward repeatable workflows, not just flashy demos.

Why Vibe is central to the story

The design-system push makes even more sense when you look at Vibe, monday’s React component library and design-guidelines system (not to be confused with the monday vibe product mentioned above). The company says Vibe serves hundreds of microfrontends across multiple products, as well as external consumers who use it as an open-source library. That means every bad AI-generated decision can ripple far beyond one screen.

A design system at that scale is not a style preference. It is infrastructure. When AI skips it, the cleanup cost is not just visual inconsistency. It is broken patterns, accessibility gaps, and the kind of technical debt that slows down every future release.

In a separate engineering post, monday described a Vibe v3 release that had to work across hundreds of microfrontends. That is the reality behind the glossy product surface: one system choice can affect a huge share of the company’s frontend estate. AI that ignores that reality creates more work than it removes.

What this means for product, design, and engineering leaders

The career lesson is straightforward. The premium is moving away from people who can only produce screens or snippets, and toward people who can define systems that machines can follow. That means stronger demand for designers who understand component governance, engineers who can write reliable contracts, and product leaders who know how to translate workflow rules into constraints.

The skill stack that matters most now looks like this:

- System design, not just screen design.

- QA judgment, especially around accessibility, token use, and component reuse.

- Workflow orchestration, so AI output can be reviewed and repeated without collapsing standards.

- Cross-functional fluency, because the best guardrails sit between design, frontend engineering, and product operations.

monday’s approach is also a quiet rebuke to the idea that AI should replace review. It cannot. If anything, it increases the value of reviewers who know what “good” looks like at the system level and can tell the difference between something that is merely generated and something that is actually shippable.

The larger story here is not that monday found a clever way to turn Figma into code. It is that the company is redefining frontend work around machine-readable rules, not intuition. That is where AI becomes durable: not when it feels magical, but when it makes design-to-code reliable, reviewable, and repeatable.
