monday.com guide turns A/B testing into a decision framework

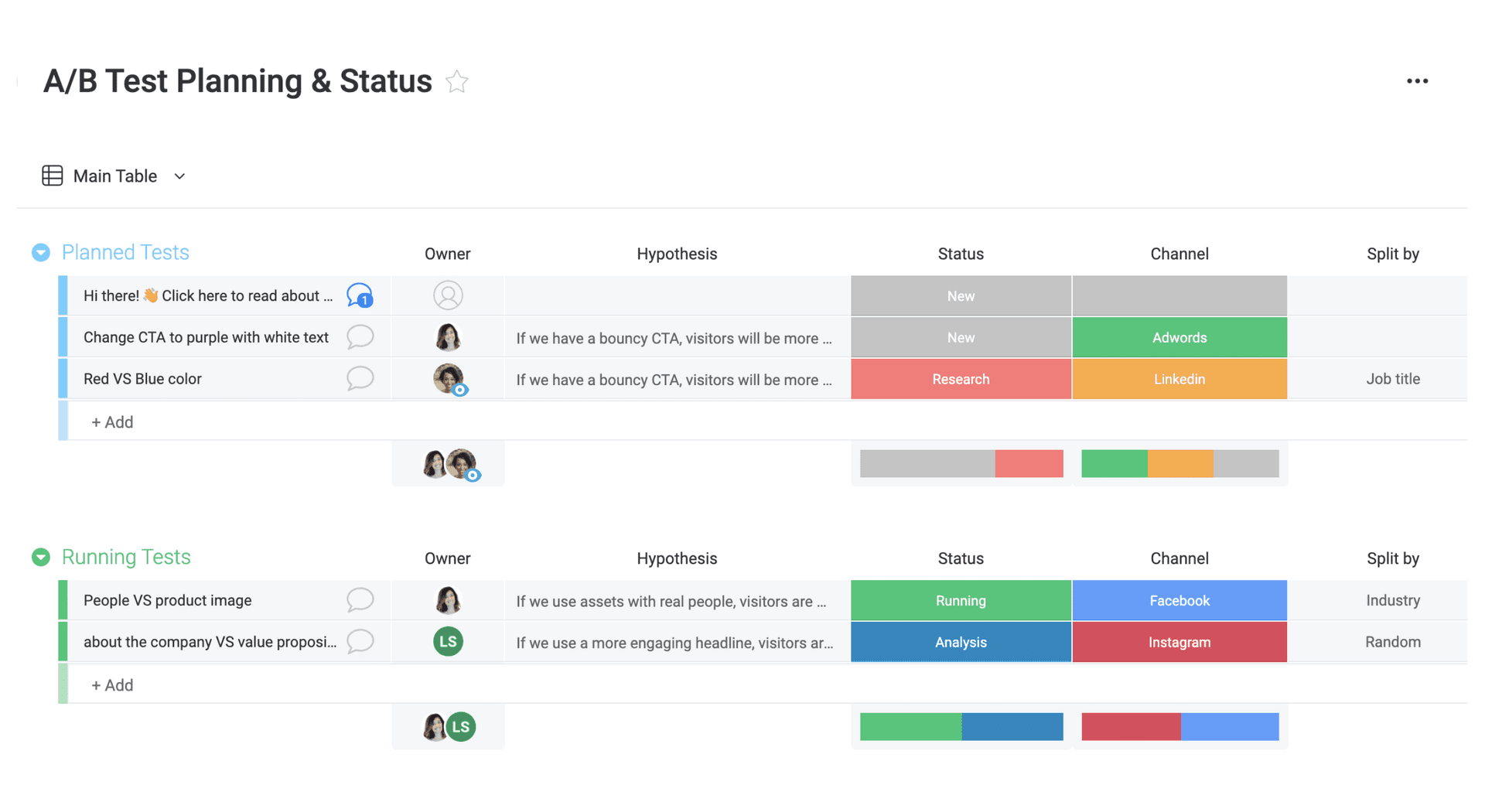

A/B testing only works when teams treat it like a shared operating system: one hypothesis, one metric, and a habit of learning instead of debating.

A/B testing as a workplace habit, not a one-off tactic

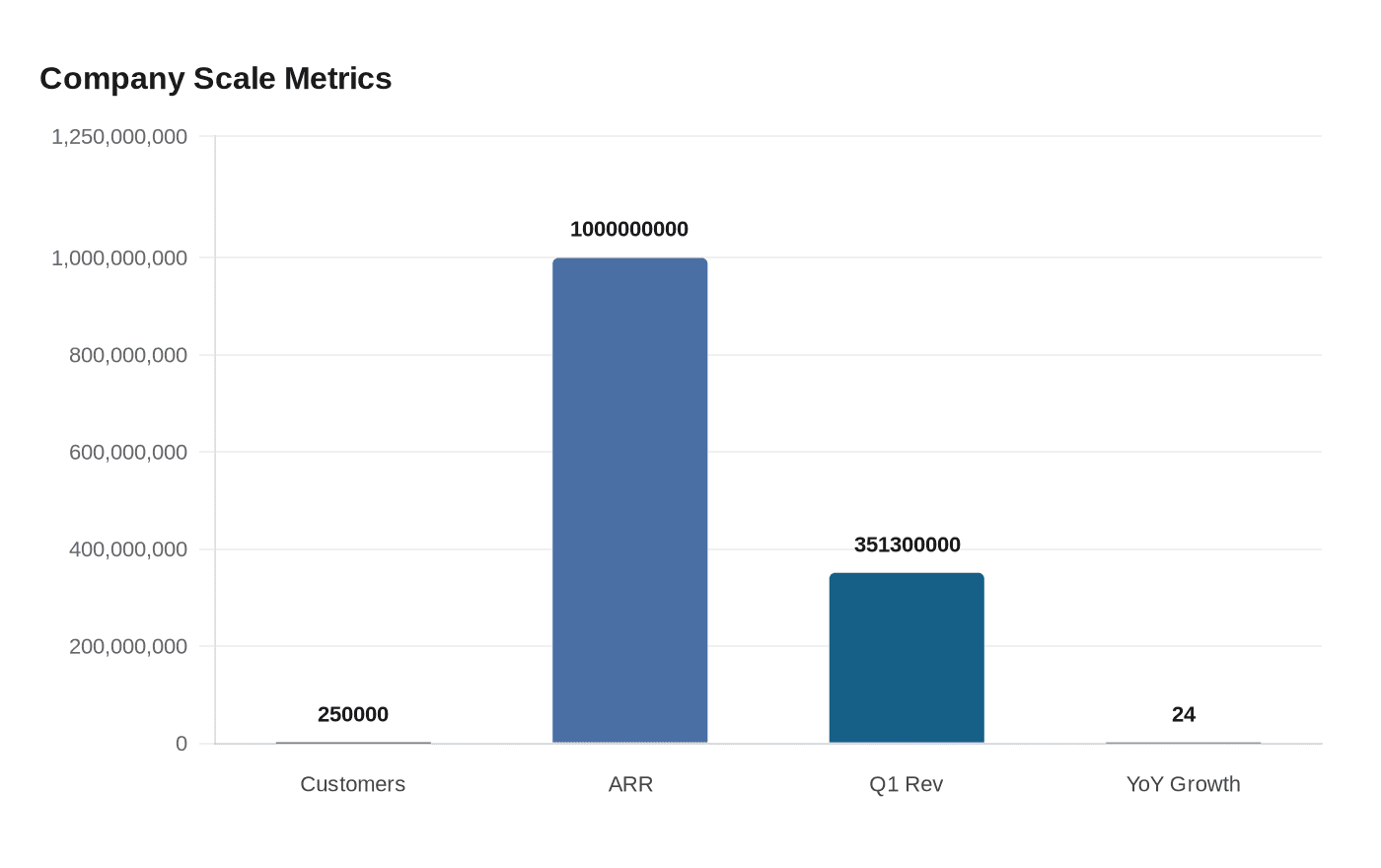

The fastest way to settle a hallway argument about a headline is not to argue harder. It is to test it cleanly, measure what changes, and decide from there. That is the practical lesson running through monday.com’s A/B testing guidance. It fits a company that now says more than 250,000 customers worldwide use its platform, that crossed $1 billion in annual recurring revenue in August 2024, and that posted $351.3 million in first-quarter 2026 revenue, up 24% year over year.

At that scale, experimentation is not just a marketing trick. It is a way for product, growth, sales, and engineering teams to work from the same evidence instead of separate instincts. monday.com’s own framing is simple: split an audience, show version A to one group and version B to another, then measure which performs better against a specific goal. The value is not in the test itself. The value is in the way the test changes how teams make decisions together.
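
The split described above can be sketched in a few lines. This is a minimal illustration, not monday.com's implementation: the function name `assign_variant`, the experiment key, and the choice of a SHA-256 hash are all assumptions made for the example. The point is that assignment is deterministic, so the same user always sees the same version.

```python
import hashlib


def assign_variant(user_id: str, experiment: str, variants=("A", "B")) -> str:
    """Deterministically map a user to one variant of one experiment.

    Hypothetical helper: hashing the experiment name together with the
    user id keeps assignments stable across visits and independent
    between experiments.
    """
    key = f"{experiment}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    bucket = int(digest, 16) % len(variants)
    return variants[bucket]


if __name__ == "__main__":
    # The same user always lands in the same group for a given experiment.
    print(assign_variant("user-42", "headline-test"))
```

Hashing instead of drawing a random number on every visit means no assignment table has to be stored, and a returning user never flips between versions mid-test.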

Start with the decision, not the opinion

Good experimentation begins with a question that matters to the business. If the real issue is whether a new onboarding flow improves activation, or whether pricing page copy lifts conversion, the test should be built around that decision from the start. monday.com’s guidance stresses building hypotheses from real user behavior rather than assumptions, which is a useful correction for teams that too often begin with a senior opinion and reverse-engineer a test around it.

That shift matters because A/B testing works best when everyone agrees on what success looks like before the experiment launches. Product managers can use it to compare onboarding flows, pricing page copy, feature placements, or call-to-action labels. Sales and revenue teams can apply the same logic to demos, campaigns, and nurture flows. The method is consistent across functions: define the change, define the metric, and decide in advance what result would justify a rollout.
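
One way to make "decide in advance" concrete is to write the rollout rule down as code before the test starts. The sketch below is an assumption-laden example, not something taken from monday.com's guide: `ExperimentPlan`, `decide`, `min_lift`, and `alpha` are hypothetical names, and the two-proportion z-test is simply one common way to compare conversion rates.

```python
from dataclasses import dataclass
from statistics import NormalDist


@dataclass(frozen=True)
class ExperimentPlan:
    """Agreed before launch (all field names are illustrative)."""
    change: str            # what differs between A and B
    metric: str            # the single success metric
    min_lift: float        # smallest absolute lift that justifies a rollout
    alpha: float = 0.05    # significance threshold


def decide(plan: ExperimentPlan, conv_a: int, n_a: int, conv_b: int, n_b: int) -> str:
    """Apply the pre-agreed rule using a two-sided two-proportion z-test."""
    if n_a <= 0 or n_b <= 0:
        raise ValueError("both groups need at least one user")
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = (pooled * (1 - pooled) * (1 / n_a + 1 / n_b)) ** 0.5
    if std_err == 0:
        return "keep A"  # no variation in the data, nothing to conclude
    z = (rate_b - rate_a) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    lift = rate_b - rate_a
    if p_value < plan.alpha and lift >= plan.min_lift:
        return "roll out B"
    return "keep A"


if __name__ == "__main__":
    plan = ExperimentPlan("new CTA label", "signup conversion", min_lift=0.005)
    print(decide(plan, conv_a=500, n_a=10_000, conv_b=600, n_b=10_000))
```

Because the threshold and the minimum lift are fixed in the plan, nobody can redefine success after seeing the numbers.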

What to test first

Not every surface deserves an experiment. monday.com’s guidance points to high-traffic, high-value pages first, because small gains there can create real revenue impact. That means homepages, checkout flows, and key email subject lines should usually outrank low-traffic corners of the site where the signal is weaker and the business payoff is smaller.

This is also where discipline matters most. Test one variable at a time so the result is interpretable. If the headline, button color, and page layout all change together, you may get a winner, but you will not know why it won. Run the test long enough to cover normal business cycles as well, such as a full week of weekday and weekend traffic, because early spikes can be misleading if they capture a temporary pattern rather than a stable behavior change.
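
How long is "long enough" can be estimated before launch. The following sketch uses the standard normal-approximation sample-size formula for comparing two proportions, then rounds the run time up to whole weeks so each weekday is covered equally. The function names, the 5% significance level, and the 80% power target are assumptions chosen for illustration, not figures from monday.com's guidance.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(baseline: float, min_lift: float,
                            alpha: float = 0.05, power: float = 0.8) -> int:
    """Approximate users needed per variant to detect an absolute lift.

    Uses n = (z_alpha + z_power)^2 * (p1(1-p1) + p2(1-p2)) / (p2 - p1)^2.
    """
    p1 = baseline
    p2 = baseline + min_lift
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_power = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_power) ** 2 * variance / min_lift ** 2)


def weeks_to_run(n_per_variant: int, daily_visitors: int, variants: int = 2) -> int:
    """Round the duration up to full weeks to cover a normal weekly cycle."""
    days = math.ceil(n_per_variant * variants / daily_visitors)
    return max(1, math.ceil(days / 7))


if __name__ == "__main__":
    n = sample_size_per_variant(baseline=0.05, min_lift=0.01)
    print(n, weeks_to_run(n, daily_visitors=2000))
```

Halving the lift you want to detect roughly quadruples the required sample, which is one reason low-traffic pages make poor test candidates.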

The metric has to be unambiguous

Nielsen Norman Group treats A/B testing as an efficient method for continuous design improvement, but it comes with a warning that should be posted above every experiment board: the metric has to be unambiguous. If teams cannot agree on the success measure, the test becomes an argument in spreadsheet form. If they can agree, the result becomes a shared answer.

That clarity is especially important because A/B testing can encourage short-term thinking. A page that wins on clicks might not produce better customer quality, better retention, or better satisfaction over time. Nielsen Norman Group’s advice is to combine A/B tests with qualitative research, which is the right antidote to false certainty. Numbers can show what changed. Interviews, observation, and user feedback help explain why it changed, and whether the change actually improves the experience.

Where the work breaks down inside a company

For engineers, the hardest part is often not the hypothesis. It is the plumbing. A test only helps if variants are flagged cleanly, events are collected reliably, and analytics stay readable while the experiment runs. Once the instrumentation is messy, teams end up debating whether the product changed or the tracking changed, which defeats the point.
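
A common sanity check on that plumbing is a sample ratio mismatch test: if a 50/50 split was intended but the logged group sizes differ by more than chance allows, the assignment or the tracking is probably broken. The sketch below is an illustration under assumptions, not a method named in the source. The function name, the 0.001 alert threshold, and the chi-square goodness-of-fit test are choices made for this example.

```python
import math


def sample_ratio_mismatch(n_a: int, n_b: int, expected_share_a: float = 0.5,
                          threshold: float = 0.001):
    """Return (mismatch_detected, p_value) for observed group sizes.

    Chi-square goodness-of-fit test with one degree of freedom; for 1 df
    the survival function equals erfc(sqrt(chi2 / 2)).
    """
    total = n_a + n_b
    if total == 0:
        raise ValueError("no users recorded")
    expected_a = total * expected_share_a
    expected_b = total - expected_a
    chi2 = ((n_a - expected_a) ** 2 / expected_a
            + (n_b - expected_b) ** 2 / expected_b)
    p_value = math.erfc(math.sqrt(chi2 / 2))
    return p_value < threshold, p_value


if __name__ == "__main__":
    # 5,000 vs 4,500 users is far outside what a fair 50/50 split produces.
    print(sample_ratio_mismatch(5000, 4500))
```

When the check fires, the results should be set aside until the cause is found, since any measured lift may simply reflect which users went missing.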

That is why experimentation should be treated as part of the product system rather than a side project. In a platform business like monday.com, where the product now brings people, workflows, and AI agents together on one flexible system, measurement has to be boringly dependable. The more teams launch, iterate, and extend the product, the more they need a repeatable way to separate a real improvement from a noisy one.

Why this matters for product, growth, and sales teams

A/B testing becomes powerful when it changes behavior across functions. Product teams stop defending ideas purely on taste. Marketing teams stop optimizing for vanity metrics that do not connect to downstream results. Sales teams stop guessing which message, flow, or offer resonates and start learning from conversion data tied to a known goal.

That is why the best experimentation cultures document more than winners and losers. They record the hypothesis, the audience, the metric, the duration, and the result, then they decide what gets scaled. Over time, that creates an internal memory of what works for monday.com’s users and customers, which is more valuable than any one test. It also prevents teams from treating a single successful experiment as universal truth.
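
The record described above maps naturally onto a small, fixed schema. This is a hypothetical sketch: `ExperimentRecord`, its field names, and the JSON-lines log are assumptions for illustration, and a real team would likely store the same fields in whatever tracker it already uses.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class ExperimentRecord:
    """One entry in the experiment log (field names are illustrative)."""
    hypothesis: str
    audience: str
    metric: str
    duration_days: int
    result: str
    decision: str  # what gets scaled, if anything


def append_record(record: ExperimentRecord, log: list) -> None:
    """Serialize the record as one JSON line and add it to the log."""
    log.append(json.dumps(asdict(record), sort_keys=True))


if __name__ == "__main__":
    log = []
    append_record(ExperimentRecord(
        hypothesis="A shorter headline raises signups",
        audience="new visitors",
        metric="signup conversion",
        duration_days=14,
        result="no significant difference",
        decision="keep current headline",
    ), log)
    print(log[0])
```

Requiring every field, including the losing and inconclusive results, is what turns a pile of tests into a searchable memory.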

The practice is already mainstream

The reason this discipline matters is that the market has already moved. VWO says about 77% of firms globally conduct A/B testing on their websites, and about 59% use it in email marketing campaigns. Optimizely’s examples show how broad the practice has become, with common use cases that include homepage elements, category pages, mobile pages, and search-result optimization.

That ubiquity is the real signal. A/B testing is no longer a niche optimization tactic reserved for a handful of growth teams. It has become a basic operating habit for organizations that want to improve performance without guessing, especially when a small lift on a homepage, email subject line, or checkout flow can ripple into materially better revenue.

For monday.com workers, the lesson is clear. The company’s scale, its revenue pressure, and its broad customer base make experimentation too important to leave to instinct. The teams that will get the most out of it are the ones that treat every test as a decision framework, not a debate, and build a culture where the answer is not who argued best, but what the evidence actually shows.
