Google Gemini 3 Deep Think Turns Sketches Into 3D-Printable Models

Google's Gemini 3 Deep Think now converts rough sketches into STL-ready 3D models that users can refine through conversational edits. The feature is available today to Google AI Ultra subscribers.

Google rolled out a major upgrade to Gemini 3 Deep Think that converts rough sketches, 2D images, and photos of physical objects directly into print-ready 3D model files, with no CAD software required. The updated mode is live today for Google AI Ultra subscribers through the Gemini app, with early API access open to researchers, engineers, and enterprises who express interest through the Gemini API.

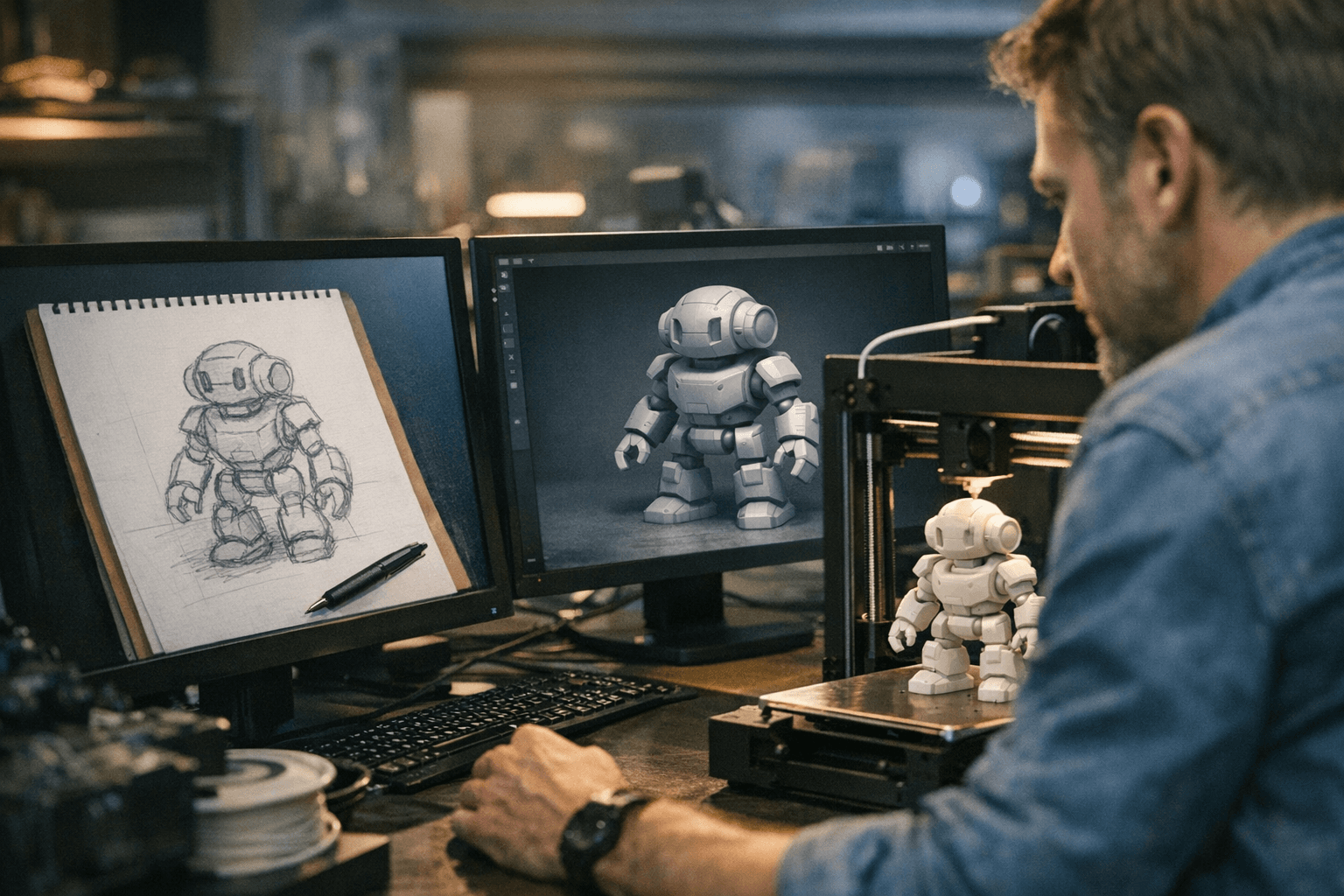

"With the updated Deep Think, you can turn a sketch into a 3D-printable reality," Google stated in its official announcement. "Deep Think analyzes the drawing, models the complex shape and generates a file to create the physical object with 3D printing." The company called the release "a major upgrade to Gemini 3 Deep Think, our specialized reasoning mode, built to push the frontier of intelligence and solve modern challenges across science, research, and engineering."

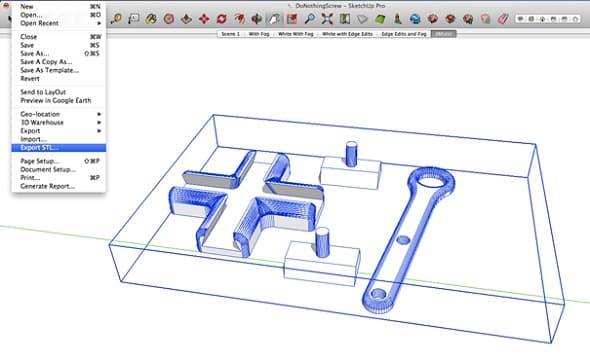

The workflow addresses one of the most persistent friction points in desktop fabrication: getting from idea to printable file without proficiency in CAD, physics-based modeling, or simulation software. Deep Think handles that translation through multimodal reasoning, then lets users iterate on the result through plain conversational prompts rather than manual geometry edits. Ask it to thicken a wall, extend a flange, or hollow a section, and it updates the model accordingly.

MIT engineering professor Markus Buehler put that capability to a practical test, posting on X that he gave Deep Think an image of a 3D spider web and asked it to generate an interactive design tool. The system returned a full design suite covering procedural control, simulation, and optimization, complete with STL export. Buehler then used that output to engineer new metamaterials and a spider-web-inspired bridge, printed the design, and validated its structural integrity with a load test run using an NVIDIA DGX Spark. He called the result "a glimpse of a future where images go in and fabrication-ready designs come out."

Google says it developed the Deep Think upgrade in close partnership with scientists and researchers, specifically to handle messy, open-ended problems with no single correct answer. That kind of work has historically kept AI reasoning tools confined to abstract benchmarks rather than real fabrication pipelines. Beyond 3D printing, Google is positioning the same upgrade for broader scientific and engineering interpretation tasks.

The Gemini API integration marks the first time Deep Think has been offered through an API, which opens the door for product developers and research labs to embed the sketch-to-model pipeline directly into their own tooling. Google noted that bringing Deep Think "to researchers and practitioners where they need it most" starts with the Gemini API, implying that other surfaces will follow.

Key technical questions remain open: supported file formats beyond STL, whether generated meshes are automatically checked for watertightness, whether the system suggests print settings or support structures, and what dimensional accuracy tolerances users should expect for engineering-grade parts. Google's own blog carries the caveat that "generative AI is experimental," a reminder that Buehler's spider-web bridge, while a striking proof of concept, represents a single demonstrated workflow rather than a validated production pipeline. For anyone sitting on a stack of hand-drawn part concepts, the case for grabbing an Ultra subscription and running a test just got considerably more compelling.
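Until Google documents whether Deep Think's meshes are checked for watertightness, users can run a basic screen on an exported file themselves. The Python sketch below assumes an ASCII STL export (binary STL is not handled) and tests one necessary condition for a closed mesh: every triangle edge must be shared by exactly two facets. Passing it does not rule out flipped normals or self-intersections, so it is a quick sanity check rather than a full validation. The function names and the 6-decimal rounding tolerance are illustrative assumptions, not part of any Google tool.

```python
from collections import Counter


def parse_ascii_stl(text: str) -> list:
    """Return facets from ASCII STL text as (v0, v1, v2) tuples of (x, y, z).

    Binary STL files are not handled by this sketch.
    """
    triangles = []
    current = []
    for line in text.splitlines():
        parts = line.split()
        # Only "vertex x y z" lines carry geometry; facet/loop keywords are skipped.
        if len(parts) == 4 and parts[0] == "vertex":
            current.append(tuple(float(p) for p in parts[1:]))
            if len(current) == 3:
                triangles.append(tuple(current))
                current = []
    return triangles


def is_edge_watertight(triangles: list, ndigits: int = 6) -> bool:
    """Check that every undirected edge is shared by exactly two facets.

    This is a necessary condition for a closed (watertight) mesh, not a
    sufficient one: orientation and self-intersections are not checked.
    """
    edge_counts = Counter()
    for tri in triangles:
        # STL repeats each vertex per facet, so round coordinates to match
        # copies of the same point that differ only by float noise.
        keys = [tuple(round(c, ndigits) for c in v) for v in tri]
        for i in range(3):
            a, b = keys[i], keys[(i + 1) % 3]
            if a == b:
                return False  # degenerate facet with a zero-length edge
            edge_counts[frozenset((a, b))] += 1
    # An empty mesh is not considered watertight.
    return bool(edge_counts) and all(n == 2 for n in edge_counts.values())
```

For a file saved as, say, `part.stl` (a hypothetical name), `is_edge_watertight(parse_ascii_stl(open("part.stl").read()))` returns `False` if any edge borders an open hole or is shared by more than two facets.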
