LLM Multi-Agent System Repairs 3D Printing Defects in Real Time

A Carnegie Mellon multi-agent LLM system monitors printers layer by layer, diagnoses defects, and issues API commands to fix errors in real time. Parts printed under the controller showed a 5.06× gain in peak load capacity.

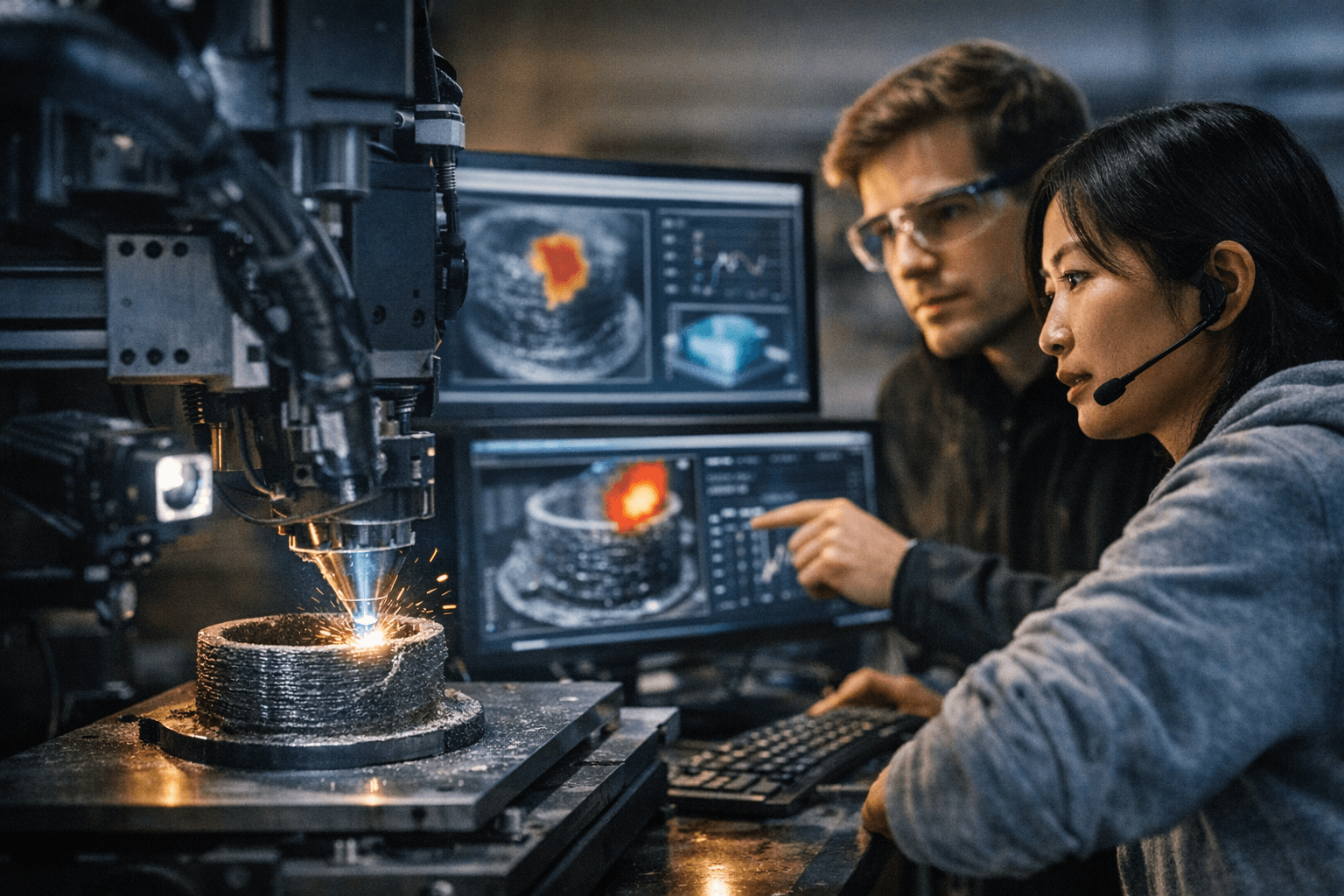

A research team led by Amir Barati Farimani at Carnegie Mellon built an LLM-based multi-agent controller that inspects a 3D print after each layer, diagnoses defects from images and machine-state data, and issues corrective commands so that errors are fixed in subsequent layers instead of the part being scrapped. The team says the system produced parts with “significantly enhanced structural integrity,” reporting a 5.06× increase in peak load capacity for prints run under the controller.

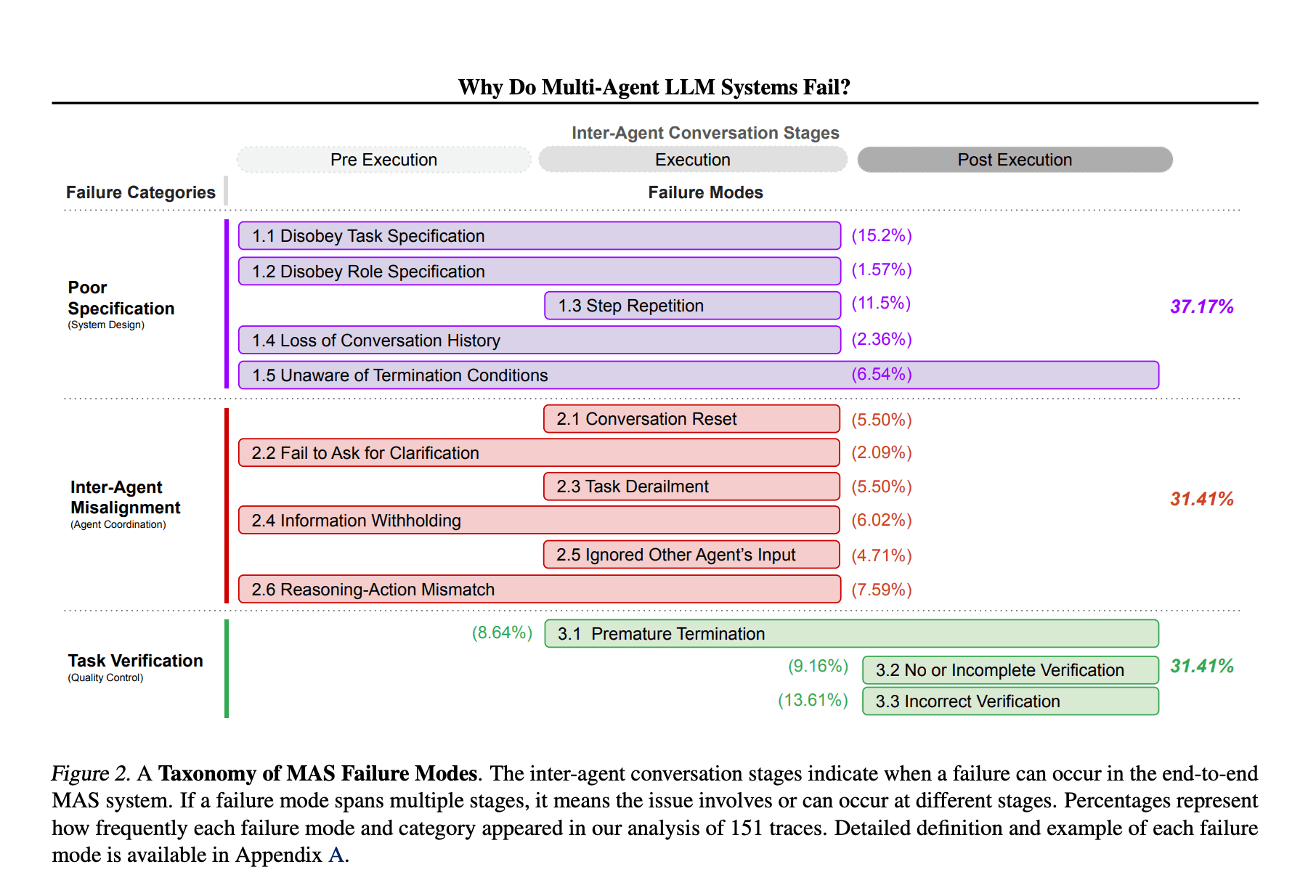

The system runs as a continuous loop. After each completed layer, top and front cameras capture images and feed them to a vision-language agent that flags visual problems such as inconsistent extrusion, stringing, oozing, warping, poor layer adhesion, and blobs and zits. Planner agents then inspect printer-state variables (temperature, material flow rate, and other sensor readings) to diagnose root causes and draft corrective actions. A solution planner refines the fix into explicit steps, and an executor converts that plan into machine-readable API commands that adjust printer settings in real time. A supervising LLM coordinates the modules and sequences the loop so that each agent reports back before the next layer proceeds. As the team put it, “We needed a model to identify 3D printing errors, explain them in plain language, and autonomously correct the problem in real time.” The researchers add that “This allows the system to operate in a coordinated yet modular fashion, mirroring the orchestral model of distributed expertise under centralized guidance.”
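The loop described above can be sketched in a few lines of Python. This is a minimal illustration, not the team's code: every name here (`PrinterState`, `Command`, `vision_agent`, `planner_agent`, `executor_agent`, `supervise`) is hypothetical, the agents are rule-based stand-ins for LLM calls, each "image" is a plain text tag, and the 5-unit adjustments and 190 °C floor are assumed values chosen only so the example runs.

```python
from dataclasses import dataclass


@dataclass
class PrinterState:
    nozzle_temp_c: float
    flow_rate_pct: float


@dataclass(frozen=True)
class Command:
    setting: str   # name of the printer setting to change
    value: float   # new absolute value for that setting


def vision_agent(images):
    """Stand-in for the vision-language agent: return sorted defect labels.

    Each 'image' is a text tag so the sketch runs without a model.
    """
    known = {"stringing", "under_extrusion", "warping"}
    return sorted(set(images.values()) & known)


def planner_agent(defects, state):
    """Stand-in planner: combine defects and machine state into relative fixes."""
    plan = []
    # Assumed rule: cool the nozzle for stringing, but not below 190 C.
    if "stringing" in defects and state.nozzle_temp_c - 5.0 >= 190.0:
        plan.append(("nozzle_temp_c", -5.0))
    # Assumed rule: push more material when extrusion looks thin.
    if "under_extrusion" in defects:
        plan.append(("flow_rate_pct", 5.0))
    return plan


def executor_agent(plan, state):
    """Turn relative adjustments into absolute, machine-readable commands."""
    return [Command(name, getattr(state, name) + delta) for name, delta in plan]


def apply_commands(commands, state):
    """Pretend printer API: write each commanded value into the state."""
    for cmd in commands:
        setattr(state, cmd.setting, cmd.value)


def supervise(layers, state):
    """Supervisor: run every agent in order for each layer before moving on."""
    log = []
    for images in layers:
        defects = vision_agent(images)
        plan = planner_agent(defects, state)
        commands = executor_agent(plan, state)
        apply_commands(commands, state)
        log.append(commands)
    return log


if __name__ == "__main__":
    state = PrinterState(nozzle_temp_c=215.0, flow_rate_pct=100.0)
    layers = [
        {"top": "clean", "front": "clean"},
        {"top": "stringing", "front": "clean"},
        {"top": "under_extrusion", "front": "stringing"},
    ]
    for number, commands in enumerate(supervise(layers, state), start=1):
        print(number, commands)
    print(state)
```

Keeping each agent as a separate function mirrors the modular design the researchers describe: any stand-in could be swapped for a model call without changing the supervisor's sequencing.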

Methodologically, the team says the approach required no domain-specific LLM fine-tuning. Instead it used in-context learning, self-prompting, and iterative prompt-reason refinement with structured prompts; reporting indicates the experiment used a base ChatGPT-4o model combined with those prompts. The framework was tested on two different 3D printers with distinct sensor setups to evaluate generalizability and was pitted head-to-head against 14 additive-manufacturing experts. The LLM agents “consistently identified major failure modes with high accuracy” and in several cases recognized emerging errors earlier than the human experts, enabling corrective intervention before defects compounded.
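To make the idea of in-context learning with structured prompts concrete, here is a rough sketch of how a few-shot prompt might be assembled without any fine-tuning. It is an illustration under stated assumptions, not the team's prompts: the wording of `SYSTEM_PROMPT`, the single worked example in `FEW_SHOT`, and the `build_messages` helper are all hypothetical, text descriptions stand in for camera images, and the role/content dictionaries follow the general shape many chat-style LLM APIs accept. No model is called.

```python
import json

# Hypothetical instruction asking for a fixed, machine-parsable reply format.
SYSTEM_PROMPT = (
    "You inspect one 3D-printed layer. Reply only with JSON of the form "
    '{"defects": [...], "explanation": "..."}.'
)

# Hypothetical worked example shown to the model as context (in-context learning).
FEW_SHOT = [
    (
        "Thin wisps of plastic stretch between two towers.",
        {"defects": ["stringing"], "explanation": "Filament oozed during travel moves."},
    ),
]


def build_messages(observation, printer_state):
    """Assemble system prompt, worked examples, and the new query in order."""
    messages = [{"role": "system", "content": SYSTEM_PROMPT}]
    for description, answer in FEW_SHOT:
        messages.append({"role": "user", "content": description})
        messages.append({"role": "assistant", "content": json.dumps(answer)})
    query = f"{observation}\nPrinter state: {json.dumps(printer_state, sort_keys=True)}"
    messages.append({"role": "user", "content": query})
    return messages


if __name__ == "__main__":
    prompt = build_messages(
        "Small raised bumps along the outer wall.",
        {"nozzle_temp_c": 215, "flow_rate_pct": 100},
    )
    for message in prompt:
        print(message["role"], "->", message["content"])
```

Because the examples live in the prompt rather than in model weights, adapting to a new printer or defect type would mean editing `FEW_SHOT`, not retraining.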

The work is not flawless. An arXiv excerpt documents a misclassification example: the LLM identified blobs and zits in layers 10 and 15 but sometimes labeled related defects as stringing or oozing in other layers, suggesting that its labels do not always generalize consistently across subtle variants of a defect. The ScienceDirect entry notes code availability, but the repository link was not included in the excerpts available for review.

The practical value is immediate for bench-top makers and small shops: fewer ruined prints, less material waste, and less time spent babysitting a build. As Amir Barati Farimani put it, “The future is adaptive.” Wider adoption will depend on accessible integrations (camera mounts, API-capable firmware, and published code and prompts) and on further validation of the 5.06× structural claim through full methods and statistics. For now, expect smarter monitoring and fewer instances of plastic spaghetti on the build plate as this line of research moves from lab tests toward workshop pilots.
