RTK compresses command output, saving tokens for AI coding tools

RTK cuts noisy command output before Claude Code rereads it, and that can save a shocking amount of context over a day of AI-assisted coding. Its single-binary, zero-dependency Rust design makes it feel like infrastructure, not another toy plugin.

RTK has a brutally practical job: strip the junk out of shell output before Claude Code burns tokens rereading it. If you live inside AI coding tools all day, that matters quickly, because the model does not just see your latest command; it carries all the earlier output forward in the conversation history.

Why RTK exists

The core problem is not exotic. Terminal output is usually way more verbose than the model needs. Progress spinners, ANSI color codes, repeated status lines, and noisy logs all get dragged into the conversation history, where they keep charging you again and again on later turns. That is the “re-read tax” in practice: every extra line you leave in the transcript becomes part of the context the model has to parse repeatedly.
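To make the re-read tax concrete, here is a back-of-the-envelope Rust sketch. It assumes the full transcript is re-sent on every turn with no compaction or eviction, and it ignores prompt caching, which can lower the price of repeated tokens but does not shrink the context. `Chunk` and `reread_tax` are hypothetical names, not part of RTK.

```rust
/// One chunk of noisy command output that lands in the transcript.
struct Chunk {
    /// Turn on which the output was added (1-based).
    added_at: u32,
    /// Token count of the noisy part of the output.
    noise_tokens: u64,
}

/// Total noise tokens the model processes across `total_turns`, assuming
/// the whole transcript is re-sent on every turn and nothing is evicted.
fn reread_tax(chunks: &[Chunk], total_turns: u32) -> u64 {
    chunks
        .iter()
        .filter(|c| c.added_at >= 1 && c.added_at <= total_turns)
        .map(|c| {
            // The chunk stays in context from its own turn through the last one.
            let turns_present = u64::from(total_turns - c.added_at + 1);
            c.noise_tokens * turns_present
        })
        .sum()
}

fn main() {
    // Hypothetical 20-turn session: 500 noisy tokens from a build on
    // turn 1 and 300 from a test run on turn 5.
    let chunks = [
        Chunk { added_at: 1, noise_tokens: 500 },
        Chunk { added_at: 5, noise_tokens: 300 },
    ];
    let tax = reread_tax(&chunks, 20);
    println!("noise tokens re-read over the session: {tax}");
}
```

For the sample session this comes to 14,800 noise tokens processed, even though only 800 noisy tokens were ever produced.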

Claude Code’s own guidance makes the stakes explicit. Its docs say the context window is the most important resource to manage, and that performance degrades as it fills. That is exactly the pain RTK targets: it sits between the shell and the agent, intercepts command output, and compresses it before it ever reaches the context window.

How RTK actually works

RTK is not just clipping text at random. Its pipeline is built to preserve the useful part of the output while stripping the waste. First comes smart filtering, which removes things like ANSI codes and progress spinners. Then grouping collapses repeated patterns, deduplication removes redundant lines, and truncation keeps the signal while dropping the tail end of the noise.
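RTK’s actual filters are not reproduced here; what follows is a minimal, standard-library-only Rust sketch of the four stages in the order described above. The spinner characters, the digit-masking rule that decides which lines count as “repeated patterns,” the run threshold of three, and all function names are illustrative assumptions.

```rust
use std::collections::HashSet;

/// Remove ANSI escapes: CSI sequences such as "\x1b[31m" and
/// two-character escapes such as "\x1b7".
fn strip_ansi(s: &str) -> String {
    let mut out = String::with_capacity(s.len());
    let mut chars = s.chars();
    while let Some(c) = chars.next() {
        if c != '\x1b' {
            out.push(c);
        } else if chars.next() == Some('[') {
            // CSI: skip parameter bytes up to the final byte in '@'..='~'.
            for n in chars.by_ref() {
                if ('@'..='~').contains(&n) {
                    break;
                }
            }
        }
        // For other escapes, the one character after ESC is already consumed.
    }
    out
}

/// Stage 1, filtering: strip ANSI codes, keep only the final redraw of
/// carriage-return progress lines, drop blank and spinner-only lines.
fn filter(raw: &str) -> Vec<String> {
    raw.lines()
        .map(|line| {
            let clean = strip_ansi(line);
            let last = clean.trim_end_matches('\r').rsplit('\r').next().unwrap_or("");
            last.trim_end().to_string()
        })
        .filter(|l| !l.is_empty() && !l.trim().chars().all(|c| "|/-\\".contains(c)))
        .collect()
}

/// A line's "shape": every ASCII digit replaced by '#', so that
/// "Downloaded 1/3" and "Downloaded 2/3" compare equal.
fn shape(line: &str) -> String {
    line.chars().map(|c| if c.is_ascii_digit() { '#' } else { c }).collect()
}

/// Stage 2, grouping: collapse each run of three or more consecutive
/// same-shape lines into its last line plus a count.
fn group(lines: Vec<String>) -> Vec<String> {
    let mut out = Vec::new();
    let mut i = 0;
    while i < lines.len() {
        let s = shape(&lines[i]);
        let mut j = i + 1;
        while j < lines.len() && shape(&lines[j]) == s {
            j += 1;
        }
        if j - i >= 3 {
            out.push(format!("{} (x{} similar lines)", lines[j - 1], j - i));
        } else {
            out.extend_from_slice(&lines[i..j]);
        }
        i = j;
    }
    out
}

/// Stage 3, deduplication: drop any line that was already emitted.
fn dedupe(lines: Vec<String>) -> Vec<String> {
    let mut seen = HashSet::new();
    lines.into_iter().filter(|l| seen.insert(l.clone())).collect()
}

/// Stage 4, truncation: keep the first `max` lines and say how many were cut.
fn truncate(mut lines: Vec<String>, max: usize) -> Vec<String> {
    if lines.len() > max {
        let cut = lines.len() - max;
        lines.truncate(max);
        lines.push(format!("... ({cut} more lines omitted)"));
    }
    lines
}

/// Run the four stages in order: filter, group, dedupe, truncate.
fn compress(raw: &str, max: usize) -> String {
    truncate(dedupe(group(filter(raw))), max).join("\n")
}

fn main() {
    let raw = "\x1b[32mCompiling\x1b[0m app v0.1.0\n\
               Downloaded 1/3\nDownloaded 2/3\nDownloaded 3/3\n|\n\
               warning: unused variable\nwarning: unused variable\n\
               spinning\rdone\n";
    println!("{}", compress(raw, 10));
}
```

Eight raw lines come out as four: the color codes and the spinner line disappear, the three download counters collapse into one, and the repeated warning appears once. Keeping the last line of a run, rather than the first, preserves the final state of a progress counter.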

That matters because most command output is not information-dense from a model’s point of view. A build log, a package install, or a test run often repeats itself in ways a human would skim past in half a second. RTK turns that into a tighter payload, which means less token burn and less chance that the model gets distracted by irrelevant churn.

The architecture choice is part of the appeal too. RTK is a single Rust binary with zero dependencies, which is a very Rust way to solve the problem: small, direct, and easy to drop into a workflow without dragging in a framework. In this corner of the AI toolchain, that lightweight feel is not cosmetic. It is the difference between something you trust every day and something you only try once.

Why Claude Code makes RTK especially relevant

Claude Code is built around the idea that context management is central, not incidental. Its docs also describe hooks as user-defined shell commands, HTTP endpoints, or LLM prompts that run automatically at specific points in the lifecycle. That creates the opening RTK needs: a place to intercept commands and rewrite them before execution.

The key detail is that PreToolUse hooks run before Claude Code’s permission prompt. That lets RTK work transparently: it rewrites each command before it runs, so the output that comes back to the agent is already compressed. Instead of asking you to change how you use the tool, it quietly plugs into the part of the system where the friction actually happens.
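The rewrite step a PreToolUse hook makes possible can be sketched in a few lines of Rust. Claude Code passes the pending tool call to a hook as JSON on standard input; to stay dependency-free, this sketch skips the JSON handling and shows only the routing decision. The `FILTERED` allowlist, the `rewrite` function, and the assumption that the proxy is invoked as `rtk <original command>` are illustrative, not RTK’s actual hook logic.

```rust
/// Programs this sketch assumes have a compression filter.
/// The list is hypothetical; RTK's real coverage differs.
const FILTERED: &[&str] = &["git", "gh", "grep", "go", "cargo", "ls", "aws"];

/// Return the rewritten command if it should go through the proxy,
/// or `None` to let it run unchanged.
fn rewrite(command: &str) -> Option<String> {
    let trimmed = command.trim_start();
    // Only the first word is inspected; empty commands are left alone.
    let program = trimmed.split_whitespace().next()?;
    if program == "rtk" || !FILTERED.contains(&program) {
        return None;
    }
    // Assumes the proxy is invoked as `rtk <original command>`.
    Some(format!("rtk {trimmed}"))
}

fn main() {
    for cmd in ["git status", "rtk git status", "make build", "  cargo test"] {
        match rewrite(cmd) {
            Some(new) => println!("{cmd:?} -> {new:?}"),
            None => println!("{cmd:?} unchanged"),
        }
    }
}
```

Because the check runs before the command executes, anything the sketch does not recognize simply runs unmodified, which is the safe default for a transparent proxy.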

Claude Code’s .claude directory setup reinforces the same point. It reads instructions, settings, skills, subagents, and memory from both the project directory and ~/.claude. In other words, this is already a product that expects workflow-level customization. RTK fits naturally into that model because it behaves like infrastructure, not a novelty wrapper.

The numbers that make it worth paying attention to

The claims around RTK are strong, but the real test is whether the savings show up in actual use. RTK’s official site says it works with Claude Code, Cursor, Gemini CLI, Aider, Codex, Windsurf, and Cline, so it is useful across a broader agent stack rather than in one narrow setup. Its marketing copy claims an average of 89% token savings, while the GitHub README more conservatively cites reductions of 60% to 90% for common development operations.

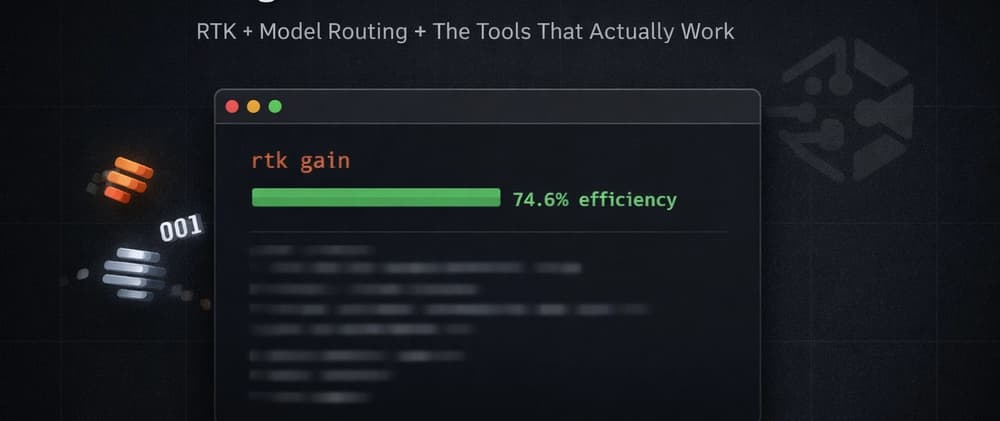

A concrete example makes that easier to believe. In one Blazor project, 80 RTK commands took in 152K tokens of raw command output and passed on 39K, saving 113.6K tokens at 74.6% efficiency. That is not a theoretical micro-optimization. It is the kind of reduction you feel when a workday stops being a parade of bloated command transcripts.
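The Blazor figures can be checked with simple arithmetic. The sketch below reads “input” as the token count of the raw command output and “output” as what actually reached the model, which is what the numbers imply (152K minus 39K is roughly 113K); that interpretation and the `Gain` type are assumptions, not RTK’s own reporting code.

```rust
/// Token accounting for a batch of proxied commands. Assumption: `raw`
/// is the unfiltered output size and `sent` is what reached the model.
struct Gain {
    raw: u64,
    sent: u64,
}

impl Gain {
    /// Tokens that never reached the model.
    fn saved(&self) -> u64 {
        self.raw.saturating_sub(self.sent)
    }

    /// Saved tokens as a percentage of the raw output.
    fn efficiency_pct(&self) -> f64 {
        if self.raw == 0 {
            return 0.0;
        }
        self.saved() as f64 / self.raw as f64 * 100.0
    }
}

fn main() {
    // Rounded figures from the Blazor example: ~152K in, ~39K out.
    let g = Gain { raw: 152_000, sent: 39_000 };
    println!("saved {} tokens ({:.1}%)", g.saved(), g.efficiency_pct());
}
```

With the rounded inputs it reports 113,000 tokens saved at about 74.3%, within rounding of the published 113.6K and 74.6%.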

The best part is that these savings compound. If a tool trims one output by a few hundred tokens, that sounds minor. If it does it dozens of times across a project session, the context window stays cleaner, the model has less irrelevant history to reread, and the next prompt is more likely to land on the real problem instead of slogging through leftovers.

What the latest RTK release adds

RTK v0.35.0 landed on April 6, 2026, and it is a good example of why this kind of tooling feels more like living infrastructure than a one-off utility. The release expanded AWS CLI filters from 8 to 25 subcommands, a sign that the project is still broadening its command coverage. It also shipped fixes across git, gh, grep, go, hook checking, and process cleanup.

That mix matters because it shows the product is being hardened around real workflow edges, not just padded with flashy features. If you are routing commands through a proxy that touches everyday developer tools, correctness and cleanup matter just as much as compression. A broken filter or sloppy process handling would erase a lot of the gain.
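The point about correctness and cleanup can be illustrated with a small Rust sketch of a proxy’s run step. The source does not describe how RTK spawns or reaps processes, so everything here is an assumption: `run_proxied` and `Proxied` are hypothetical names, the dedupe-only `compress` stands in for real filters, and stdout and stderr are concatenated rather than interleaved. What it does show are two properties a proxy must not get wrong: the child is always waited on (`Command::output` blocks until the process exits and collects its output), and the exit code is passed through unchanged so a failing build still looks like a failure to the agent.

```rust
use std::collections::HashSet;
use std::io;
use std::process::Command;

/// What the hypothetical proxy hands back to the agent.
struct Proxied {
    /// Compressed output (stdout followed by stderr).
    text: String,
    /// The child's exit code, unchanged; `None` if it was killed by a signal.
    code: Option<i32>,
}

/// Stand-in compression step: drop exact duplicate lines.
fn compress(raw: &str) -> String {
    let mut seen = HashSet::new();
    raw.lines().filter(|l| seen.insert(*l)).collect::<Vec<_>>().join("\n")
}

/// Run `program` with `args`, wait for it to exit, and compress its output.
/// `Command::output` blocks until the child exits, so it is always reaped.
fn run_proxied(program: &str, args: &[&str]) -> io::Result<Proxied> {
    let out = Command::new(program).args(args).output()?;
    let mut raw = String::from_utf8_lossy(&out.stdout).into_owned();
    if !raw.is_empty() && !raw.ends_with('\n') {
        raw.push('\n'); // keep the last stdout line separate from stderr
    }
    raw.push_str(&String::from_utf8_lossy(&out.stderr));
    Ok(Proxied { text: compress(&raw), code: out.status.code() })
}

fn main() -> io::Result<()> {
    let p = run_proxied("sh", &["-c", "printf 'ok\\nok\\nfail\\n'; exit 3"])?;
    println!("{}", p.text);
    // A real proxy would now call std::process::exit with this code
    // so the agent still sees the failure.
    println!("exit code: {:?}", p.code);
    Ok(())
}
```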

Why Rust readers should care

RTK is interesting because it points to a wider shift in AI-assisted development. The next wave of useful tooling is not always going to be a bigger model or a clever prompt. Sometimes it will be a small Rust binary that quietly makes the whole stack cheaper, cleaner, and less annoying to use.

That is the line between genuinely useful workflow infrastructure and forgettable add-ons that only feel clever for a day. RTK reduces tokens, fits cleanly into Claude Code’s hook system, supports multiple coding tools, and stays lightweight enough to disappear into the background. For anyone spending real time inside AI coding tools, that combination is exactly the kind of plumbing that ends up mattering most.
