Transformer Co-Author Launches IronClaw, a Rust-Based Secure AI Agent Runtime

Illia Polosukhin, a Transformer paper co-author, rebuilt OpenClaw in Rust as IronClaw, using WASM sandboxes and AES-256-GCM vaults to keep API keys away from LLMs.

Illia Polosukhin, one of the original co-authors of the Transformer paper, has released IronClaw, a ground-up Rust rewrite of the OpenClaw AI agent runtime built specifically to close the security holes that let autonomous agents leak passwords and API keys to language models and across networks. The project lives at github.com/nearai/ironclaw and is drawing attention across AI and Rust communities alike.

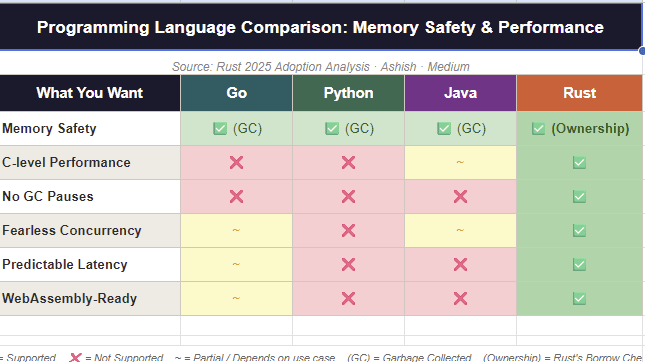

OpenClaw's core problem, as Polosukhin diagnosed it, was that agent runtimes operated without meaningful isolation or credential protection, leaving sensitive secrets exposed to the models they served. IronClaw addresses this with what its documentation describes as a four-layer defense-in-depth architecture. The first layer is Rust itself: the language's memory-safety guarantees eliminate an entire class of low-level vulnerabilities in safe code, such as buffer overflows and use-after-free bugs, before any other defensive measure comes into play.
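To make that first layer concrete, here is a minimal sketch, not taken from IronClaw's source, of the kind of bug safe Rust rules out. It assumes a tool returns a length-prefixed message whose declared length may exceed the bytes actually received, the pattern behind over-read bugs such as Heartbleed. The helper name `read_length_prefixed` is hypothetical.

```rust
/// Hypothetical helper (not from IronClaw): reads a one-byte length prefix
/// followed by that many payload bytes from an untrusted buffer.
fn read_length_prefixed(buf: &[u8]) -> Option<&[u8]> {
    // `split_first` returns None on an empty buffer instead of reading past it.
    let (&len, rest) = buf.split_first()?;
    // `get` is bounds-checked: a declared length larger than the data
    // yields None rather than exposing adjacent memory.
    rest.get(..len as usize)
}

fn main() {
    let honest = [3u8, b'a', b'b', b'c'];
    let lying = [200u8, b'h', b'i'];
    let empty: [u8; 0] = [];

    assert_eq!(read_length_prefixed(&honest), Some(&b"abc"[..]));
    // The lying prefix claims 200 bytes but only 2 follow: rejected, not over-read.
    assert_eq!(read_length_prefixed(&lying), None);
    assert_eq!(read_length_prefixed(&empty), None);
    println!("over-read rejected");
}
```

In C, the equivalent `memcpy(out, buf + 1, len)` would happily copy 200 bytes of whatever sits in memory after the buffer; in safe Rust, the out-of-range read is not expressible without an explicit `unsafe` block.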

The second layer introduces WASM sandbox isolation. Every third-party tool and every piece of AI-generated code runs in its own isolated WebAssembly sandbox, so even a malicious tool's blast radius is confined to that sandbox.

The third layer is an encrypted credential vault: all API keys and passwords are stored with AES-256-GCM encryption, and each credential is bound to a policy rule that restricts its use to a single specified domain. The practical consequence is that the language model never sees the credentials at all, so a prompt injection cannot trick the model into revealing a secret it was never given.
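The domain binding in the vault layer can be illustrated with the sketch below. It is built on assumptions rather than IronClaw's actual API: the model is assumed to emit only an opaque placeholder such as `{{secret:github_token}}`, and the runtime swaps in the real value only when the outgoing request's host matches the credential's bound domain. The `Vault`, `Credential`, `InjectError` and `inject` names and the placeholder syntax are all hypothetical. AES-256-GCM encryption at rest is deliberately omitted because it would require an external crate; values sit in plain memory here.

```rust
use std::collections::HashMap;

/// Hypothetical vault entry: a secret plus the single host it may be sent to.
struct Credential {
    value: String, // a real vault would hold AES-256-GCM ciphertext, not plaintext
    allowed_host: String,
}

#[derive(Debug, PartialEq)]
enum InjectError {
    UnknownSecret(String),
    DomainNotAllowed { secret: String, host: String },
}

/// Hypothetical vault; not IronClaw's API.
struct Vault {
    entries: HashMap<String, Credential>,
}

impl Vault {
    fn new() -> Self {
        Vault { entries: HashMap::new() }
    }

    fn store(&mut self, name: &str, value: &str, allowed_host: &str) {
        let cred = Credential { value: value.to_string(), allowed_host: allowed_host.to_string() };
        self.entries.insert(name.to_string(), cred);
    }

    /// Replaces each `{{secret:NAME}}` placeholder in model-generated text with
    /// the real secret, but only if `host` exactly matches the bound domain.
    /// The model only ever handles the placeholder, never the value.
    fn inject(&self, template: &str, host: &str) -> Result<String, InjectError> {
        const OPEN: &str = "{{secret:";
        let mut out = String::new();
        let mut rest = template;
        while let Some(start) = rest.find(OPEN) {
            out.push_str(&rest[..start]);
            let after = &rest[start + OPEN.len()..];
            let end = match after.find("}}") {
                Some(end) => end,
                None => {
                    // Unterminated placeholder: pass it through unchanged.
                    out.push_str(&rest[start..]);
                    return Ok(out);
                }
            };
            let name = &after[..end];
            let cred = self
                .entries
                .get(name)
                .ok_or_else(|| InjectError::UnknownSecret(name.to_string()))?;
            // Exact match, so "api.github.com.attacker.example" does not pass.
            if cred.allowed_host != host {
                return Err(InjectError::DomainNotAllowed {
                    secret: name.to_string(),
                    host: host.to_string(),
                });
            }
            out.push_str(&cred.value);
            rest = &after[end + 2..];
        }
        out.push_str(rest);
        Ok(out)
    }
}

fn main() {
    let mut vault = Vault::new();
    vault.store("github_token", "ghp_example123", "api.github.com");

    // What the model produces: a placeholder, not the token itself.
    let header = "Bearer {{secret:github_token}}";

    assert_eq!(vault.inject(header, "api.github.com"), Ok("Bearer ghp_example123".to_string()));
    // A prompt-injected request to some other host is refused.
    assert!(matches!(
        vault.inject(header, "attacker.example"),
        Err(InjectError::DomainNotAllowed { .. })
    ));
    println!("policy enforced");
}
```

The substitution happens at the network boundary, after the model has finished generating, which is what lets the model stay ignorant of the secret. Exact host comparison is a conservative choice; a production policy would likely also pin the scheme and port.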

IronClaw sits inside NEAR Protocol's broader "User-Owned AI" vision, where the runtime serves as the trusted execution layer for autonomous agents operating on behalf of users. NEAR has paired it with supporting infrastructure including an AI cloud platform and a decentralized GPU market. On market.near.ai, developers can register specialized agents that accumulate reputation over time and receive progressively higher-value tasks as that reputation grows. A contributor identified in coverage as "Brother Pineapple" has extended that ecosystem further, building a market specifically for agents to hire other agents.

The security framing is pointed. One Chinese-language tech article asked bluntly: "How many 'lobsters' are running naked on the Internet?", using "lobsters" for OpenClaw-style agents (a nod to the project's claw branding) and "running naked" as slang for running unprotected. The question captures the practical stakes for any team deploying autonomous agents against real APIs today: without runtime-level isolation and credential policy enforcement, those agents are one injected prompt away from exposing production credentials. IronClaw's combination of Rust's ownership model, WebAssembly containment, and AES-256-GCM-encrypted, policy-bound vaults is arguably the most architecturally complete public response to that problem released so far.
