Adaption launches AutoScientist to automate model fine-tuning

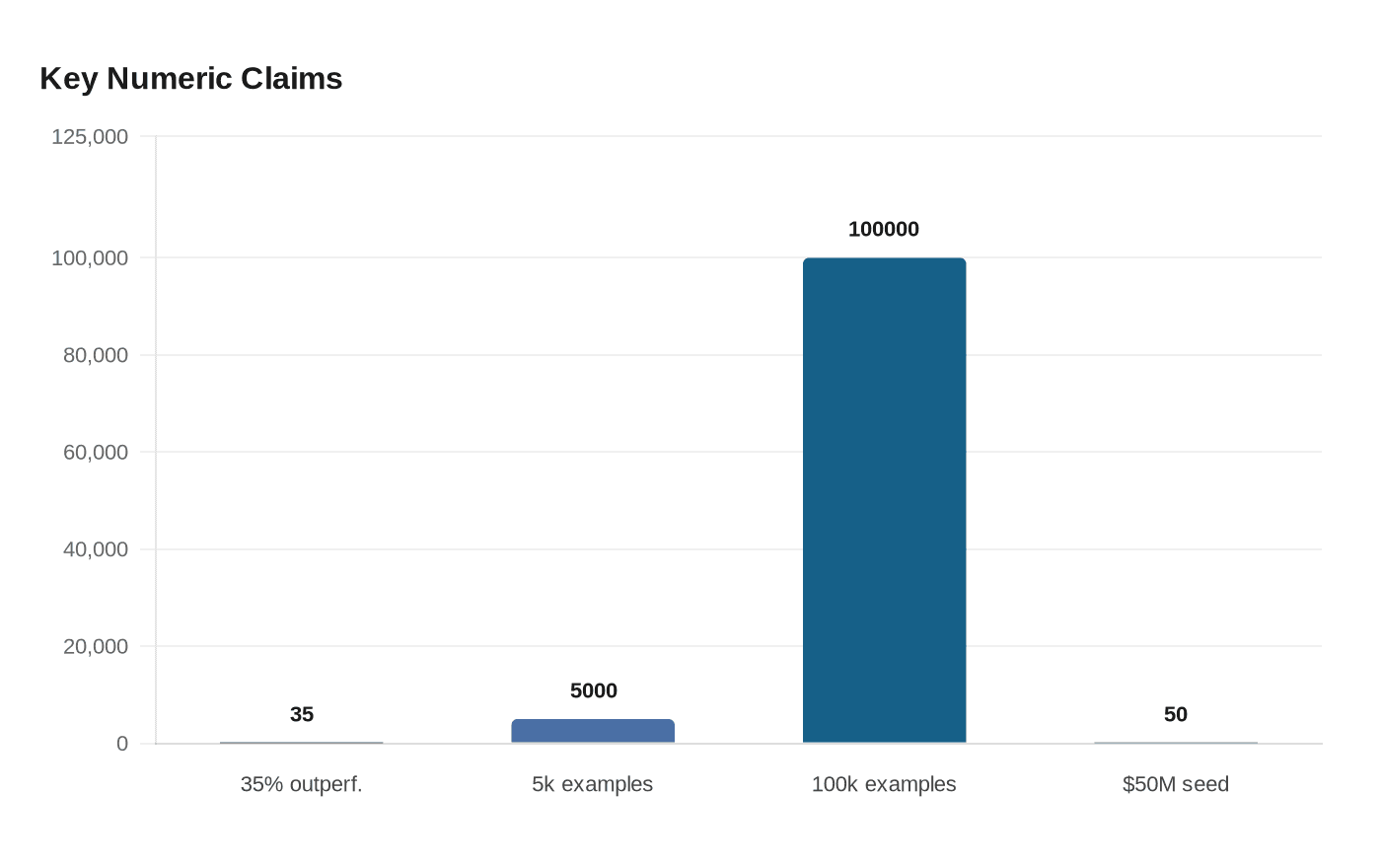

Adaption says AutoScientist can cut fine-tuning from weeks to an afternoon, using automated data and training loops that it claims outperform human-configured setups by 35%.

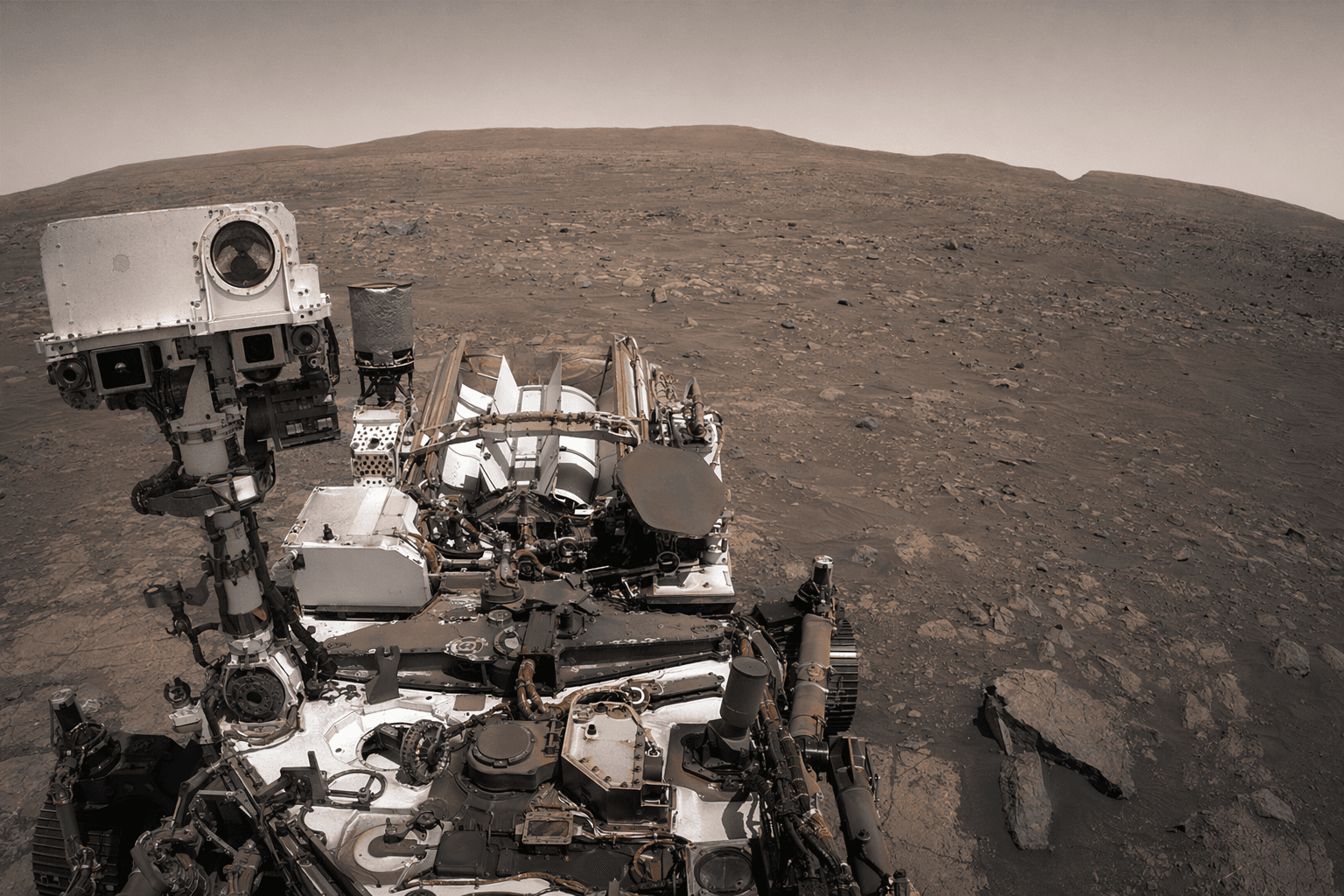

Adaption is betting that the hardest part of custom AI is no longer model scale, but the grind of getting a model to behave the right way. Its new AutoScientist tool, announced May 13, 2026, is designed to automate the full research loop behind model training and alignment, co-optimizing data and training recipes until a model converges on the target behavior.

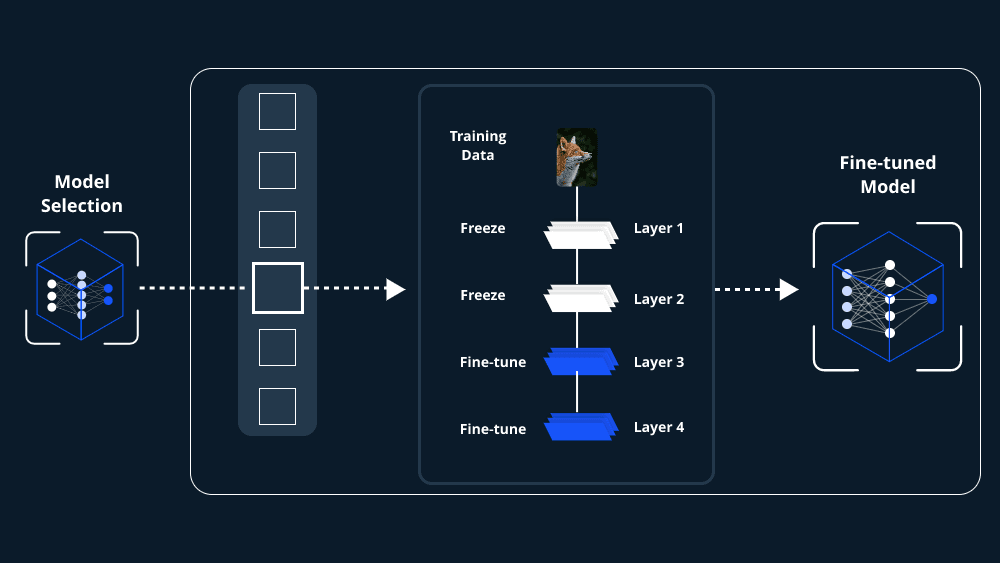

In operational terms, AutoScientist takes over the repetitive work that usually sits between an idea and a usable fine-tuned model. Adaption says the system is built to help developers move from concept to an adapted model in an afternoon rather than weeks, while addressing familiar failure modes such as catastrophic forgetting, overfitting on small or low-quality datasets, and conflicting training signals. The company says the tool is meant to make model adaptation more accessible for non-technical users and enterprises that want domain language support, structured output, latency and cost savings, or models tuned for proprietary workflows.

The claim is ambitious, but it also points to where human oversight still matters. Teams still have to define the target behavior, choose what data belongs in the training set, and decide whether the resulting model is fit for a real deployment. AutoScientist automates the search process around those decisions, not the business judgment behind them. That distinction matters for companies that want speed without surrendering control over quality, accuracy, or internal policy requirements.

Adaption says AutoScientist outperformed human-configured training by an average of 35 percent across all runs, with gains on datasets ranging from 5,000 to 100,000 examples. Those results come from in-house, domain-specialized evaluations, so the benchmark is a useful signal of the company’s approach but remains tightly tied to Adaption’s own testing environment.

The launch extends Adaption’s earlier Adaptive Data product and follows its April 30 partnership with Together AI, which made Together Fine-Tuning natively available inside Adaption’s workflow. Together said the integration supports LoRA and full fine-tuning, including large open models above 100 billion parameters, with cost estimates before training, ETA tracking during runs, and export to Hugging Face Hub.

Founded by Sara Hooker, a former Cohere research leader and Google DeepMind veteran, and Sudip Roy, who also worked at Cohere and Google DeepMind, Adaption has been pitching itself as a challenge to the industry’s scale-first logic. The San Francisco company raised $50 million in seed funding on February 4, led by Emergence Capital Partners with backing from Mozilla Ventures, Fifty Years, Threshold Ventures, Alpha Intelligence Capital, E14 Fund, and Neo. Emergence Capital said “scale alone is no longer enough,” and argued that frontier-model training costs have risen about 2.4 times per year since 2016, with some future runs projected to top $1 billion by 2027.

For enterprises weighing whether to build custom models at all, AutoScientist is a direct argument that adaptation can be faster, cheaper, and less technical than frontier training. Whether that promise holds outside Adaption’s own evaluations will determine how far the tool can move model fine-tuning beyond the frontier labs.
