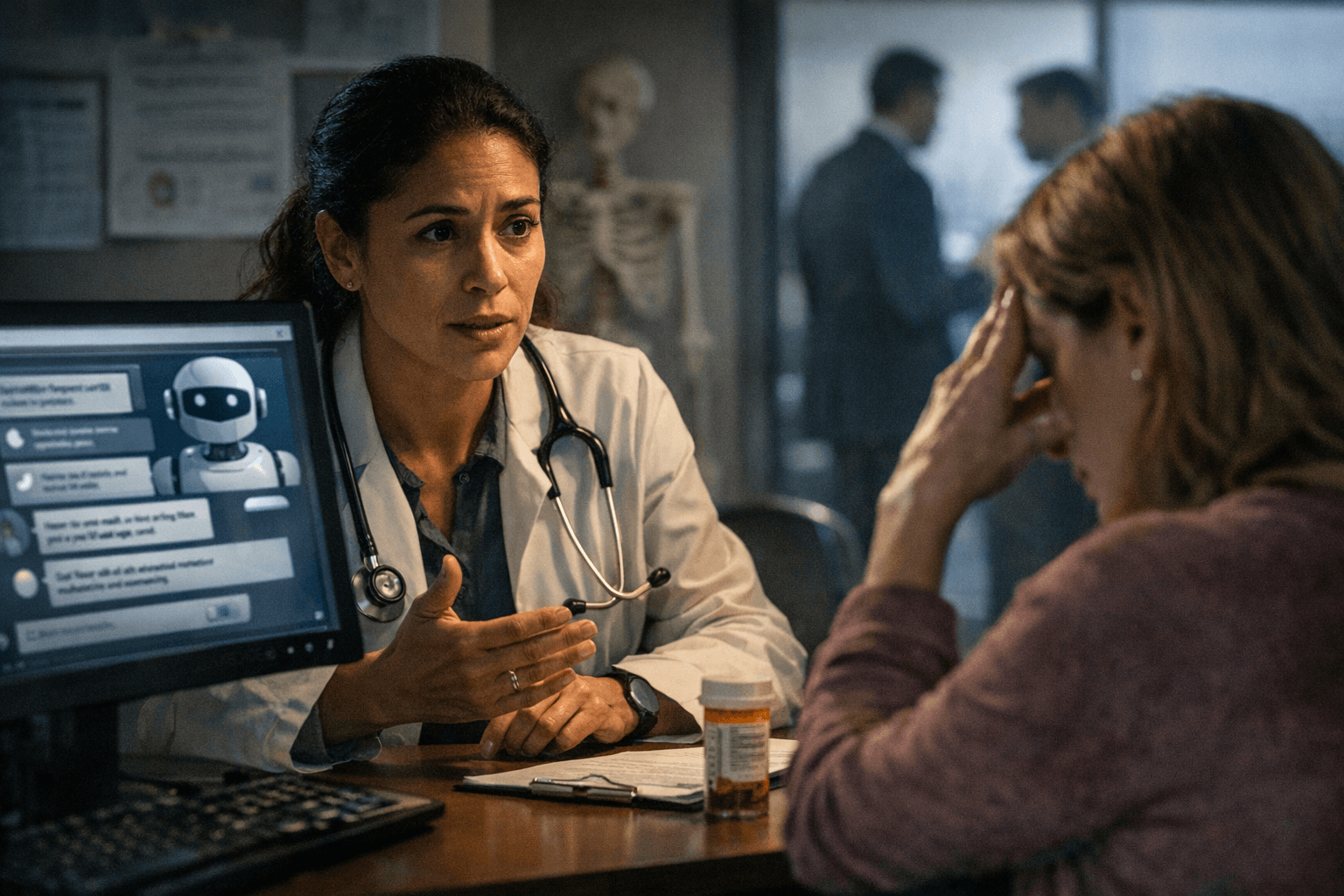

AI health chatbots spark debate over safety, trust, and regulation

Chatbots can speed up routine health help, but a wrong answer can delay care or cause harm. Doctors and regulators are racing to set guardrails as the tools move closer to patients.

A chatbot can feel like a shortcut when it helps decode a symptom, a lab result, or a medical bill. It becomes a liability when fluency disguises a missed warning sign, a made-up fact, or advice that pushes real care out of reach. That is the risk calculus now facing ordinary patients like Abi, whose experience reflects a broader split in what consumer-facing AI can do safely, and what it cannot.

When a chatbot helps, and when it hurts

The safest use cases are narrow and practical: summarizing paperwork, translating terminology, organizing questions for a doctor, or helping someone understand what a clinician already said. The danger starts when a chatbot drifts into diagnosis, treatment, or urgent triage without enough context. A safer answer says what it cannot know, asks follow-up questions, and points to in-person care when red flags appear. A flawed answer sounds certain, skips the screening questions, and offers a home remedy or diagnosis that may be wrong. A 2026 Nature study found that publicly available chatbots produced problematic responses in 21.6% to 43.2% of answers, with unsafe responses ranging from 5% to 13%, and concluded that millions of patients could be receiving unsafe medical advice.

That gap matters because the problem is not limited to one company or one model. The Nature study tested four public chatbots (Claude, Gemini, GPT-4o, and Llama) on 222 patient questions, for 888 total responses on primary care topics including internal medicine, women’s health, and pediatrics. The same paper says 43 million patients in the United States ask chatbots medical questions at least once a month, which means even a modest error rate can scale into a public health problem.
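To make the scale concrete, here is a back-of-the-envelope sketch using only the figures reported above. It rests on two loud assumptions that the study does not make: that each of the 43 million monthly users asks exactly one question a month, and that the 5% to 13% unsafe rate measured on a fixed test set carries over to real-world questions. The function names are illustrative, not drawn from the paper.

```python
# Figures as reported in the article.
CHATBOTS = 4
QUESTIONS = 222
MONTHLY_US_USERS = 43_000_000
UNSAFE_PCT_LOW, UNSAFE_PCT_HIGH = 5, 13  # unsafe responses, percent


def total_responses(chatbots: int, questions: int) -> int:
    """Each chatbot answers every question once."""
    return chatbots * questions


def unsafe_answers(users: int, unsafe_pct: int, questions_per_user: int = 1) -> int:
    """Rough count of unsafe answers per month.

    ASSUMPTION (hypothetical, not from the study): every user asks
    `questions_per_user` questions and the test-set unsafe rate applies
    unchanged to those questions. Integer math keeps the result exact.
    """
    return users * questions_per_user * unsafe_pct // 100


if __name__ == "__main__":
    print(total_responses(CHATBOTS, QUESTIONS))
    low = unsafe_answers(MONTHLY_US_USERS, UNSAFE_PCT_LOW)
    high = unsafe_answers(MONTHLY_US_USERS, UNSAFE_PCT_HIGH)
    print(f"{low:,} to {high:,} unsafe answers per month under these assumptions")
```

Under those assumptions, the range works out to roughly 2.2 million to 5.6 million unsafe answers a month. The real number could be higher or lower, since actual usage and question mix are unknown.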

Why regulators are drawing a line

The World Health Organization warned in May 2023 that caution should be exercised with AI-generated large language model tools to protect human well-being, safety, autonomy, and public health. In January 2024, it followed with guidance on large multimodal models for health care, focusing on ethics and governance and offering more than 40 recommendations for governments, technology companies, and providers. The message from Geneva was clear: health AI needs rules, not just speed.

The Centers for Disease Control and Prevention is making a similar institutional turn. Its FY2026 to FY2030 AI strategy centers on governance, public trust, and safe adoption, and says the agency will use AI to accelerate disease detection, reduce operational burden, and support public health workflows. Its public guidance on generative AI says the tools can fit open-ended tasks like writing, summarizing, translation, and analysis, while emphasizing transparency, security, and human oversight. That distinction is important: public health agencies see value in AI, but they are also putting guardrails around how it is used.

Why the evidence remains mixed

The research record is not a simple verdict of good or bad. Stanford Medicine reported on February 5, 2025, that chatbots alone outperformed doctors on some nuanced clinical decisions, but that doctors who were given AI support improved their own accuracy. That finding points to a crucial policy distinction: patient-facing advice and clinician-assist tools are not the same product, and they should not be judged by the same standard.

The best evaluation frameworks also go beyond raw accuracy. A 2024 Nature paper argued that healthcare chatbot testing should include hallucinations, up-to-dateness, empathy, personalization, relevance, latency, trust-building, ethics, user comprehension, and emotional support. In other words, a chatbot that sounds caring but is wrong can still be dangerous, and a chatbot that is correct but unreadable may still fail the patient. A separate JAMA Network Open review of 137 health-advice studies found that 99.3% of them assessed closed-source models and did not provide enough information to identify the model, which underscores how little transparency the evidence base often has.

OpenAI’s health push raises the stakes

OpenAI entered the space directly on January 7, 2026, with ChatGPT Health, a dedicated health experience designed to support, not replace, medical care. The company says users can optionally connect medical records and wellness apps so responses are grounded in personal health data, and it says the feature is designed for everyday questions rather than diagnosis or treatment. OpenAI also says its healthcare product includes citations so clinicians can verify the evidence, and that health conversations are separated from model training.

That move blurs a boundary that used to be easier to defend. A general-purpose chatbot is now being recast as a health companion, while regulators are simultaneously warning that safety, governance, and oversight must come first. The tension is not abstract. If consumers start treating a polished answer as a medical judgment, the cost of a bad recommendation can be measured in delayed appointments, missed diagnoses, and preventable harm.

Guardrails to use right now

- Use chatbots for preparation, not diagnosis: ask them to summarize records, explain terms, or draft questions for a clinician. That fits the CDC’s view of generative AI as a tool for open-ended writing, summarizing, translation, and analysis.

- Treat any urgent symptom as a medical problem first. If the issue is chest pain, trouble breathing, fainting, heavy bleeding, severe allergic reaction, or a sudden neurologic change, get real-world care immediately rather than waiting for a chatbot to reason it out. That caution follows directly from the unsafe-response patterns identified in the Nature study.

- Prefer tools that show their sources and make uncertainty explicit. OpenAI says its healthcare product includes citations for verification, and WHO’s guidance on health AI stresses ethics and governance, not blind trust.

- Be wary of medical advice that sounds personalized but lacks context. The 2024 Nature framework says evaluation should account for personalization, relevance, and up-to-dateness, because a confident answer can still be stale, generic, or incomplete.

The real question is no longer whether health chatbots exist. It is whether the public can use them without mistaking convenience for care. Until oversight, transparency, and clinical safeguards catch up, the most responsible answer is to treat them as aides, not authorities.
