AMD Unveils Helios Rack and Previews MI455X to Challenge Nvidia

At CES 2026 in Las Vegas, AMD used CEO Dr. Lisa Su’s keynote to introduce Helios, a liquid-cooled, rack-scale platform built around new MI455X accelerators and EPYC Venice CPUs. The announcement positions AMD as a direct competitor to Nvidia in large-scale AI training infrastructure and sketches a broader Instinct roadmap that could reshape data center choices for AI builders.

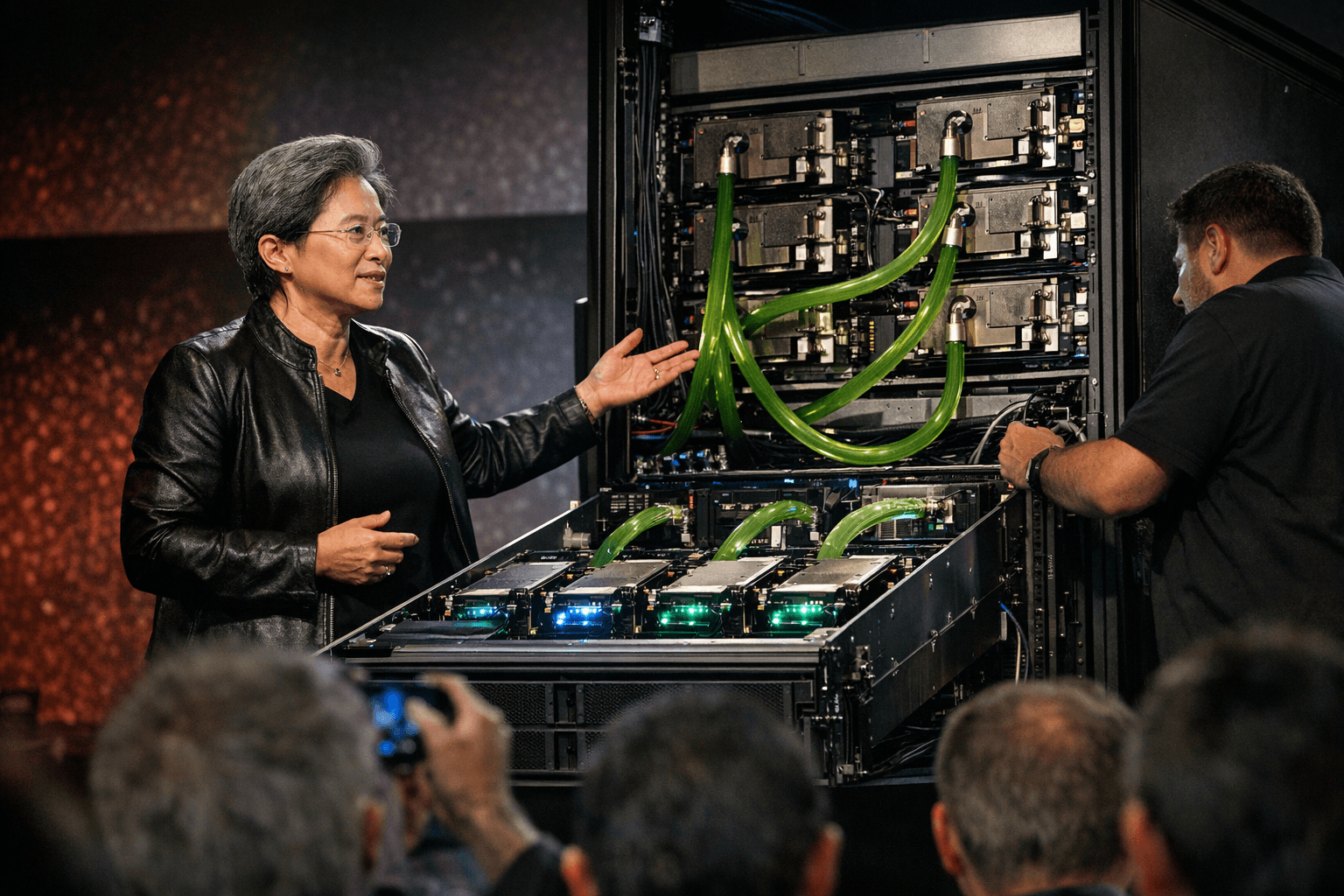

AMD set out an aggressive push into data-center AI hardware during Dr. Lisa Su’s keynote at CES 2026 on Jan. 6, presenting Helios as a rack-scale "blueprint for yotta-scale infrastructure" and calling the system the "world’s best AI rack." The company displayed an MI455X accelerator onstage and previewed a family of Instinct products that AMD says will address both cloud and on-premises AI needs.

Helios is described as a liquid-cooled, ROCm-integrated rack designed for high-bandwidth, energy-efficient training of very large models. At the component level, AMD paired the new MI455X accelerators with EPYC "Venice" CPUs, marketed onstage as Zen 6 processors. Each Helios compute tray was shown holding four MI455X GPUs paired with a single EPYC Venice CPU, and racks scale their GPU count by adding trays. AMD said a rack supports up to 72 MI455X accelerators, which at four per tray implies 18 compute trays, matching the accelerator count of rivals' recent high-density racks.

Networking and data-processing tasks in the Helios concept are handled by AMD Pensando components. Keynote and follow-up materials named Pensando Vulcano and Salina parts in different contexts, so early descriptions did not agree on exactly which networking parts a rack includes. AMD emphasized unified software through its open ROCm ecosystem to manage acceleration, CPU tasks, and networking.

Performance claims for Helios were ambitious. AMD asserted that a fully configured rack can deliver as much as three AI exaflops of throughput, and positioned the platform as optimized for bandwidth and power efficiency to support training of trillion-parameter models. Executives framed Helios as an early step toward "yotta-scale" ambitions, a term AMD used to describe compute orders of magnitude beyond current zetta-scale deployments.

The keynote included appearances by OpenAI President Greg Brockman and AI researcher Dr. Fei-Fei Li, underscoring AMD’s intent to align its hardware roadmap with demanding model-development workflows. AMD also reiterated product plans for the MI440X for enterprise on-premises deployments and teased future MI500-series Instinct GPUs, with a 2027 timeframe cited in some post-show materials.

Analysts see the move as a direct bid to challenge Nvidia’s dominance in rack-scale AI systems. Alexander Harrowell, principal analyst for advanced computing at Omdia, described AMD’s approach as a parallel strategy that offers both rack blueprints and support for traditional air-cooled GPUs and OEM server partners. The Helios announcement follows AMD’s acquisition of ZT Systems and signals a full-stack posture spanning compute, CPU, DPU/NIC and software.

Key questions remain. AMD did not provide final shipping dates, validated per-rack performance numbers, or a single confirmed parts list for Pensando networking. Some reports also characterized the MI455X and Venice chips as 2nm devices, a detail not formally confirmed during the keynote. For now, Helios stands as a preview of AMD’s intent to give AI builders alternatives to incumbent rack architectures and to push energy and bandwidth efficiency as central competitive levers.
