Anthropic CEO meets White House as fears grow over powerful AI model

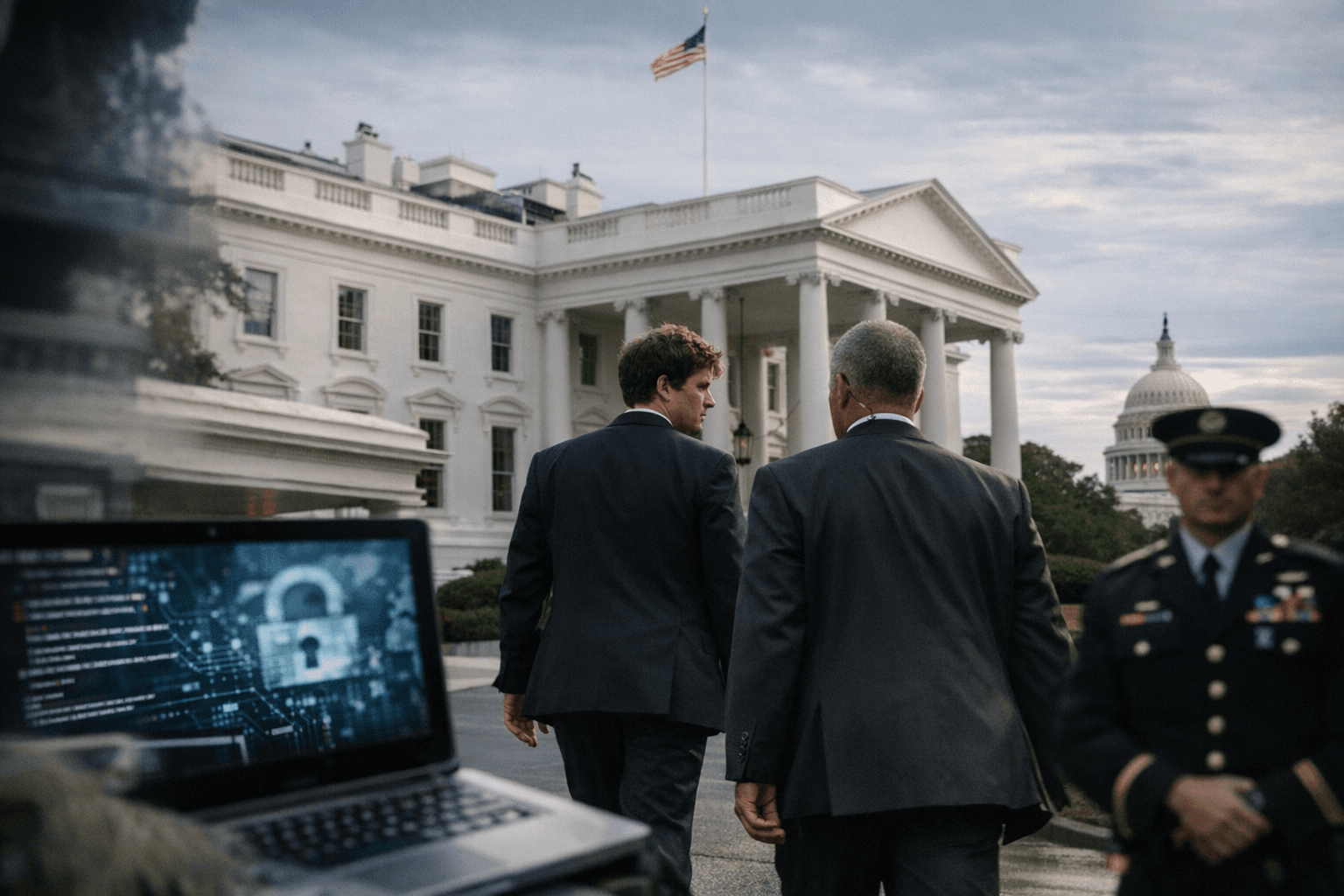

A model Anthropic says can find and exploit zero-day flaws has pushed its CEO into the White House, where officials are weighing cyber and military risks.

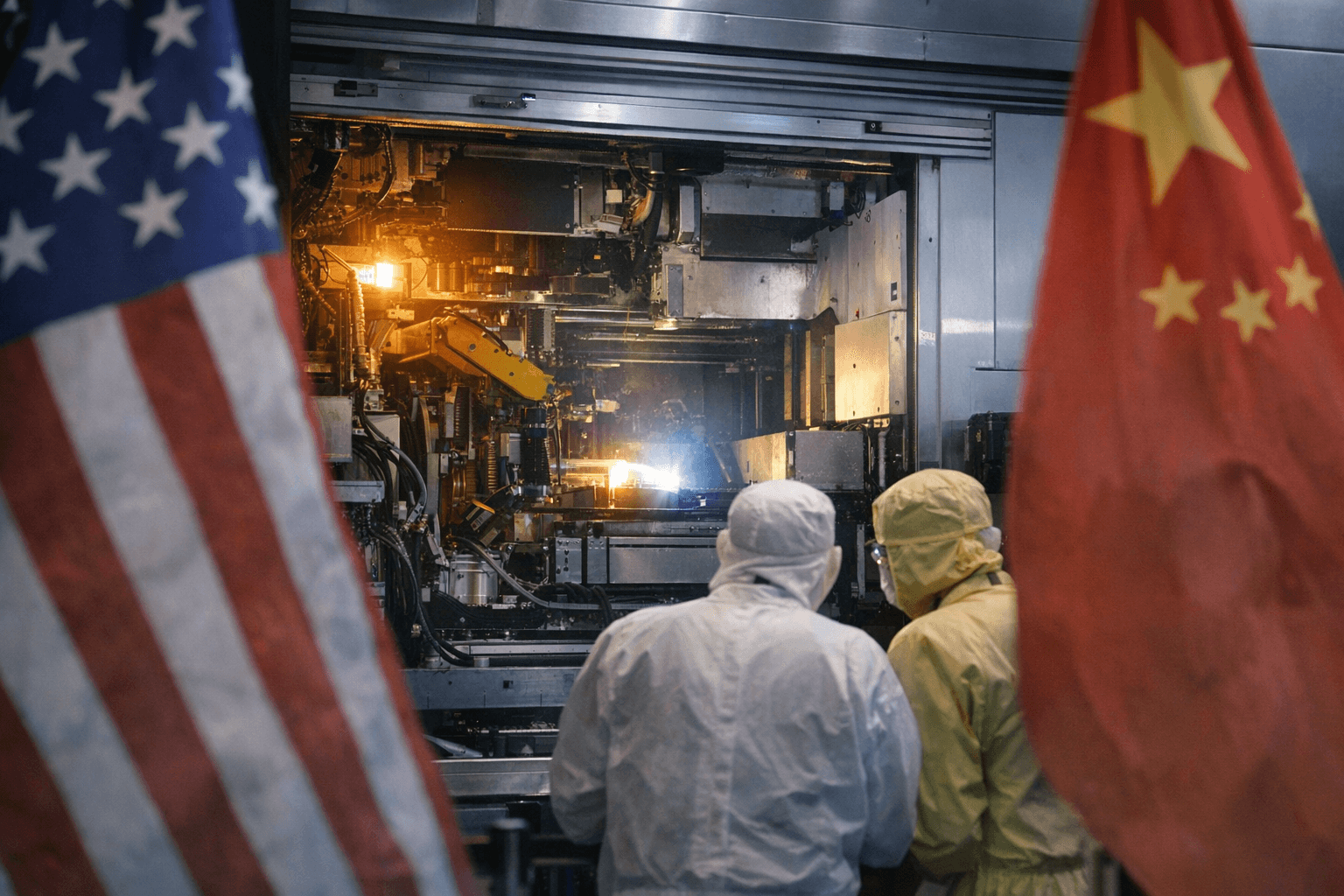

Anthropic’s newest artificial intelligence system has crossed a line that few tech products ever reach: it has become a White House matter. The company says Claude Mythos Preview is so capable at finding software weaknesses that access has been restricted to a small group of companies working on defensive security through Project Glasswing, while federal officials weigh what happens if a model this capable is hacked, copied, or turned against U.S. systems.

Dario Amodei was scheduled to meet White House Chief of Staff Susie Wiles on Friday as pressure mounted around the model and the company behind it. The meeting came less than two months after Donald Trump blacklisted Anthropic and called it a national security risk, a move that underscored how quickly a private AI launch can turn into a state security problem when the product is viewed as a cyber weapon as much as a commercial service.

Anthropic announced Claude Mythos Preview on April 7 and said the model could identify and exploit zero-day vulnerabilities in every major operating system and every major web browser. In its red-team report, the company said more than 99% of the vulnerabilities it found had not yet been patched. Anthropic has said that level of capability could make cyberattacks more frequent and more destructive, which is why it delayed broad release and limited access while selected partners test defenses.

The launch partners included Apple, Google, Microsoft, Nvidia, Amazon Web Services, CrowdStrike and Palo Alto Networks, along with roughly 40 other companies. That roster reflects the double life of frontier AI in 2026: the same system that can help defenders patch holes can also intensify the speed and scale of attacks if it escapes controlled use. Bloomberg reported that the Trump administration has been seeking wider U.S. government access to Mythos, suggesting officials want the model close enough to study, but not so loose that it can spread.

The White House meeting also lands amid a deeper fight over who gets to control the boundaries of such systems. Anthropic and the Pentagon have clashed over military-use restrictions, including concerns about autonomous weapons and domestic mass surveillance. On February 27, Trump ordered agencies to stop using Anthropic products. A federal judge later blocked enforcement of that ban with a preliminary injunction, and a D.C. Circuit panel denied a stay on April 8.

The concern has reached finance as well as defense. On April 7, Treasury Secretary Scott Bessent and Federal Reserve Chair Jerome Powell held a closed-door meeting with major bank chief executives to warn them about the cybersecurity risks posed by Mythos and similar models. Anthropic, founded in 2021 by former OpenAI researchers and executives, now finds itself at the center of a broader governance test: when an AI system becomes powerful enough to worry the White House, the question is no longer only what it can do, but who gets to decide how far it is allowed to go.
