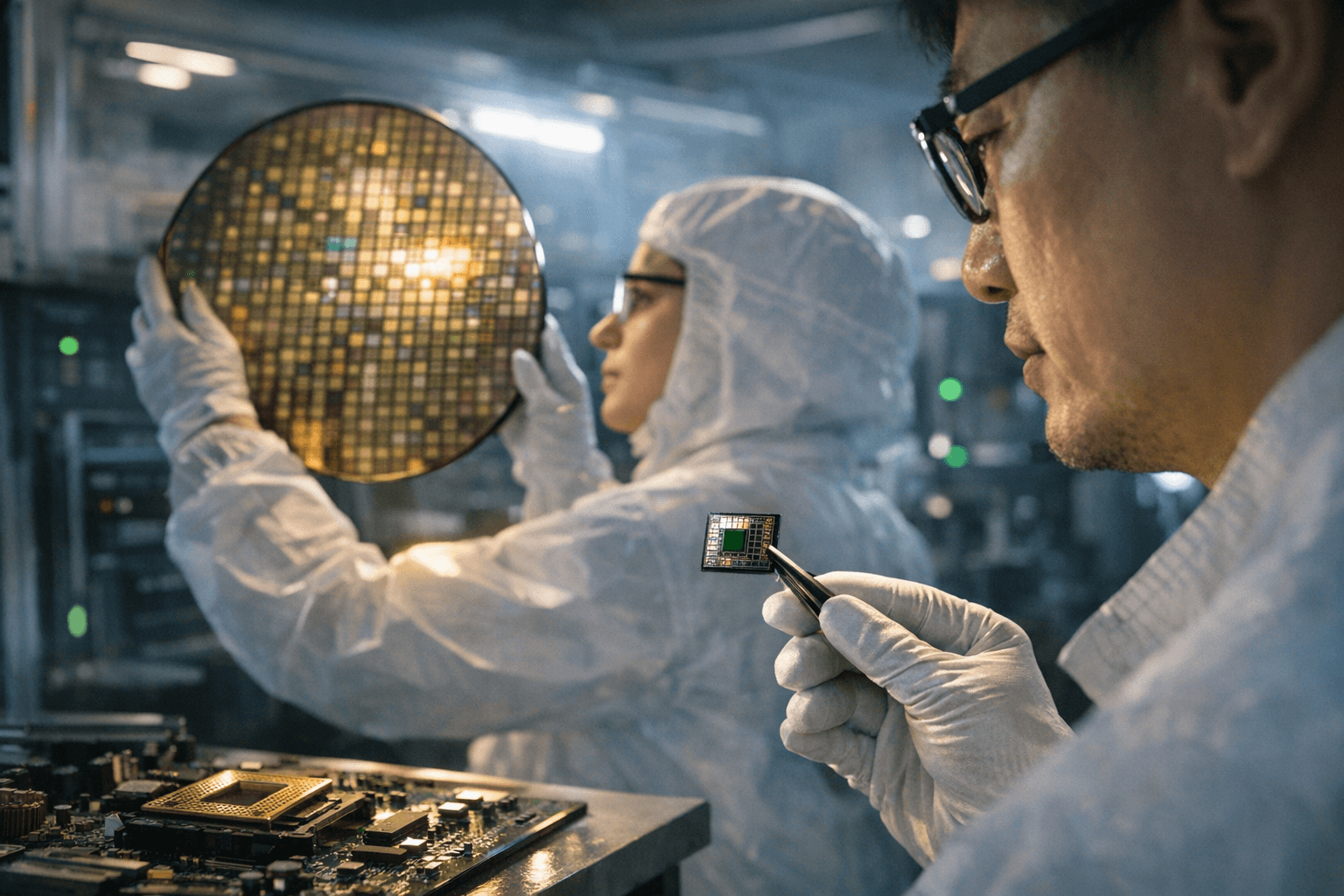

Anthropic Explores Building Custom AI Chips to Reduce Reliance on Outside Suppliers

Anthropic is weighing whether to design its own AI chips, a move that could cost hundreds of millions of dollars and reshape how the Claude maker secures computing power.

Anthropic, the San Francisco-based company behind the Claude family of AI models, has been weighing whether to design its own custom AI chips rather than continue relying on third-party suppliers, according to three industry sources familiar with the matter. A company spokesperson declined to comment on the discussions.

The plans, sources said, are "in early stages." No engineering team has been formally committed, no final design selected, and the company may still decide to continue purchasing chips from external vendors rather than developing hardware in-house.

The timing reflects converging supply pressures and financial momentum. Demand for advanced AI accelerators has outstripped supply as generative models have grown more complex and computationally intensive. Anthropic's revenue run-rate surged in 2026, strengthening the case for securing more predictable, cost-efficient compute at scale rather than competing in an increasingly tight market for processors.

Designing competitive AI silicon is neither fast nor cheap. Industry estimates put development costs in the hundreds of millions of dollars, and when software co-design, fabrication ramp, testing, and ecosystem tooling are included, the total investment can exceed one billion dollars. The effort requires deep expertise in both hardware architecture and semiconductor manufacturing, two capabilities Anthropic has not publicly built out.

The company would not be entering unexplored territory. In recent years, several of the world's largest technology firms have moved toward custom silicon to cut per-inference costs and reduce dependence on dominant chip suppliers. Alphabet designs its own Tensor Processing Units, Amazon has deployed its Graviton processors alongside Trainium and Inferentia AI chips for internal workloads, and Meta has invested heavily in proprietary accelerator hardware. For each of these companies, custom silicon enabled proprietary performance tuning and, over time, lower operating costs per model query.

For Anthropic, owning more of the compute stack could mean tighter control over pricing and product differentiation as Claude's commercial footprint expands. The tradeoff is substantial capital exposure and long lead times; advanced chip architectures typically take years to move from initial design through foundry production to deployment at scale. Any custom silicon program would also compete for a limited pool of chip design engineers and foundry capacity already stretched thin across the industry.

The broader consequences run in two directions. More AI companies pursuing custom silicon could intensify competition for foundry slots and hardware talent, potentially deepening near-term scarcity even as longer-term investment in chip supply grows. Whether Anthropic ultimately commits or continues buying externally, the reported exploration signals how central the chip question has become for any AI lab serious about scaling.

This article was produced by Prism’s automated news system from verified source data, official records, and press releases, then run through automated quality and moderation checks before publishing. The system is built and supervised by the people who set the standards it runs under.
