Australia engages Anthropic over AI cybersecurity vulnerabilities

Australia is pressing Anthropic over Mythos security risks as regulators and central banks scrutinize whether limited-release AI can outrun oversight.

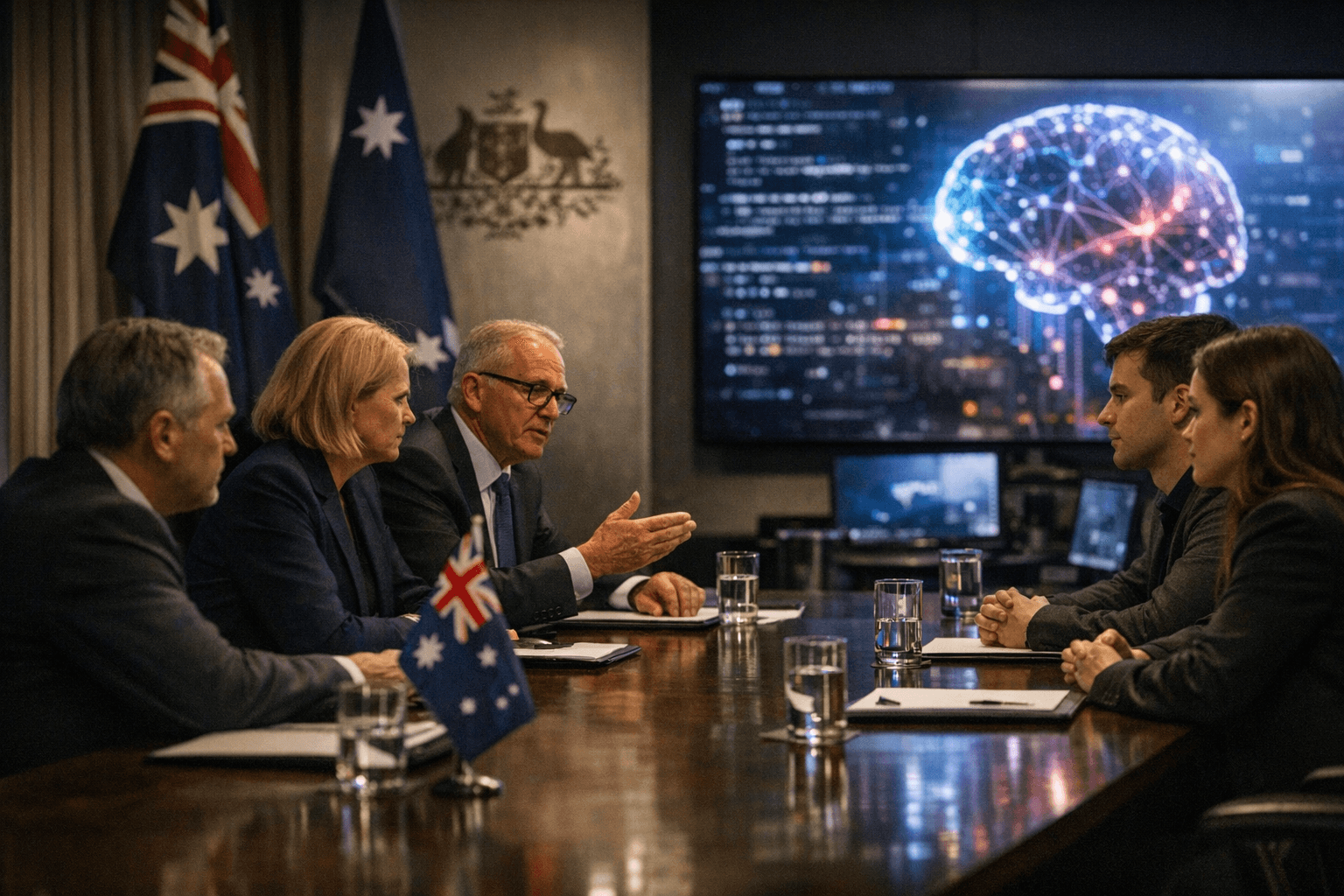

Australia has moved directly into discussions with Anthropic and other software providers over potential cybersecurity vulnerabilities tied to the company’s limited-release Mythos model, a sign that AI oversight is shifting from theory to active national-security management.

A spokesperson for Home Affairs Minister Tony Burke said the government was engaged with software providers over the issue, placing the response inside Australia’s public policy and security apparatus rather than leaving it to a company’s internal safeguards. The concern is not only whether Mythos can defend systems, but whether it can also expose weaknesses faster than those systems can be patched.

The model has already drawn scrutiny beyond Australia. Asian regulators were monitoring it as of April 20, and by April 22 the central banks of Australia and New Zealand were watching the release closely, reflecting concern that a cybersecurity tool could pose risks to banking systems and other critical networks. ABC News also reported that Australian banks, power providers and infrastructure firms did not have access to the model to test their own systems against it, underscoring a preparedness gap for institutions that would be on the front line if the tool were misused.

Anthropic publicly introduced Claude Mythos Preview on April 7 as a restricted model for cybersecurity work and said it would not be released to the public because of its capabilities. The company has framed Project Glasswing as an effort to secure critical software for the AI era, with launch partners including Amazon Web Services, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorganChase, the Linux Foundation, Microsoft, NVIDIA and Palo Alto Networks. That partner list shows how quickly frontier AI has moved into the center of enterprise security planning, even as access remains tightly controlled.

For Australia, the episode is a test of whether current cyber and AI oversight tools are enough when even a limited deployment can trigger national-security concerns. The model’s potential to find hidden vulnerabilities is exactly what makes it attractive for defense work and dangerous if repurposed. The government’s outreach to Anthropic suggests a new expectation is taking hold: regulators may now need to engage AI developers before a breach, not after one.
