Chinese Tech Firms Move AI Model Training Overseas, Seek Nvidia Chips

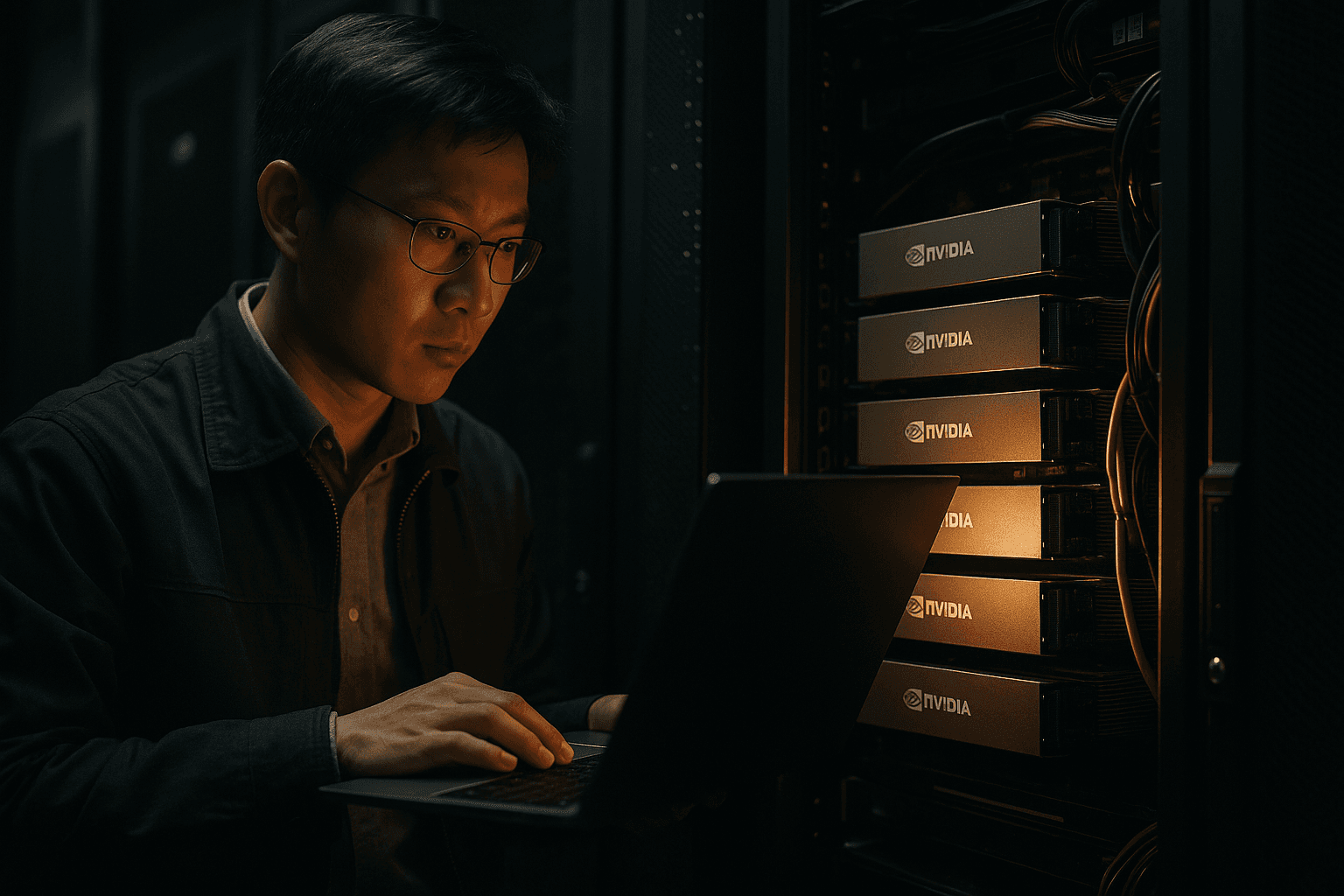

Major Chinese technology companies moved the most compute-intensive stages of large AI model training to foreign data centers to gain access to Nvidia accelerators that are harder to procure inside China. The shift, reported by the Financial Times and cited by Reuters, has immediate market consequences for global cloud demand and longer-run implications for China’s domestic chip and AI ecosystem.

Top Chinese technology firms began shifting large-scale model training operations overseas after encountering limits on access to the most advanced Nvidia accelerators, according to Financial Times reporting cited by Reuters on November 27. Companies moved resource-intensive workloads to foreign data centers where H100 and Blackwell-class GPUs are available, allowing them to continue developing large models while remaining within the constraints of U.S. export controls.

The technical calculus driving the move is straightforward. Training modern large language models and other generative systems requires clusters of high-performance accelerators running for extended periods. Nvidia’s H100 and the later Blackwell class are among the few chips that deliver the throughput and software ecosystem needed for state-of-the-art model development. When those chips are constrained in one market, firms respond by sourcing capacity where the hardware is accessible, often leasing capacity from third-country cloud providers or colocating in overseas facilities.

Commercially, the pattern amounts to a reallocation of computing demand across regions. International cloud providers and data center operators that can supply racks populated with Nvidia accelerators are positioned to capture additional revenue from the Chinese market without selling chips directly into China. For cloud operators, the effect is higher utilization of premium GPU inventory and an uplift in specialized services for model training. For Chinese firms, the approach preserves their ability to scale models quickly, but at higher operational cost and with greater logistical complexity.

The policy implications are significant. U.S. export controls aim to limit Beijing’s access to advanced AI compute, but shifting training abroad introduces a circumvention vector that regulators may find hard to close without broadening the scope of controls to include services and third-party intermediaries. The dynamic also sharpens the strategic trade-offs for China’s tech policymakers, who must weigh the short-term benefits of offshore compute against the longer-term goal of technological self-sufficiency.

Domestically, the move could accelerate investment in Chinese AI chips and data center capacity. Beijing has already signaled support for building an indigenous semiconductor supply chain and expanding local cloud infrastructure. In the medium term, increased overseas training may open white-space opportunities for Chinese vendors to develop alternatives, but replicating the software stacks and large-scale manufacturing capacity built around Nvidia’s ecosystem will take time and capital.

Economically, the pattern reflects a broader trend toward fragmentation of global technology supply chains. In the near term, expect stronger demand for GPU-equipped capacity in Southeast Asia, Europe and North America as Chinese model builders seek compliant venues. Over the longer term, the contest between access to global compute and national industrial policy will shape where models are trained, who profits from cloud services, and how quickly China closes its gap in high-performance AI hardware. Training cutting-edge models can require thousands of accelerators and run into millions of dollars in cloud costs, which makes control over high-end chips a central strategic prize for firms and governments alike.

This article was produced by Prism’s automated news system from verified source data, official records, and press releases, then run through automated quality and moderation checks before publishing. The system is built and supervised by the people who set the standards it runs under.
