Deep Learning Tool Predicts ICU Infections While Explaining Its Reasoning

A UNC and University of Florida team built an ICU infection predictor that shows its work, but the authors say prospective validation across diverse hospitals is required before bedside deployment.

The black-box problem in clinical AI has a new challenger. Researchers from the University of North Carolina and the University of Florida have published a study in PLOS ONE presenting a deep-learning framework that not only predicts postoperative infections in intensive care patients but also reports, using formal statistical tests, which clinical signals drive its predictions.

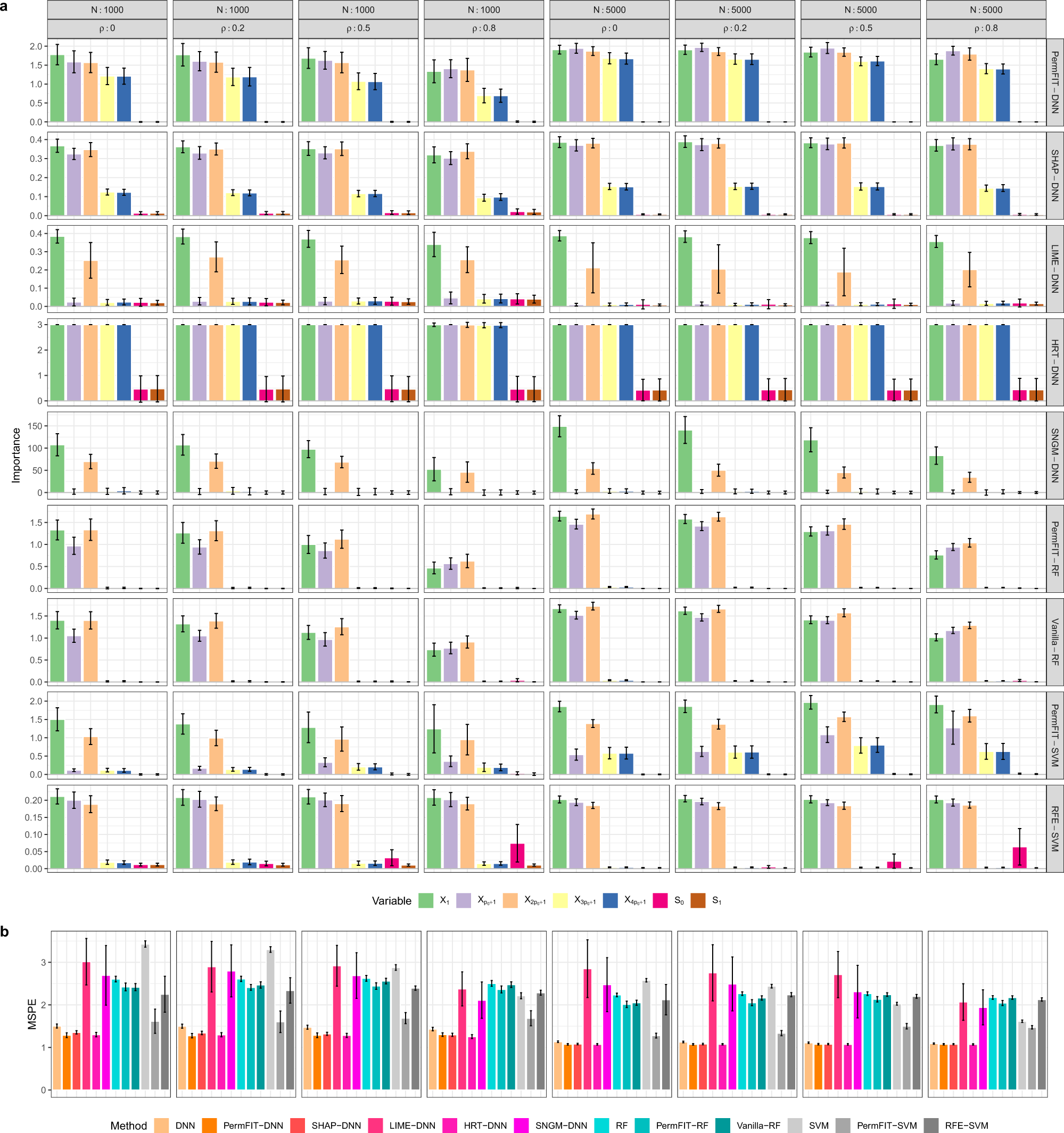

The framework pairs a deep neural network with a method called PermFIT, a permutation-based hypothesis testing approach that assigns explicit p-values and confidence estimates to every candidate predictor in a patient's electronic health record. The result is a ranked list of variables, including particular lab trends, vital signs, perioperative events and medication indicators, that a clinician can inspect before acting on the model's output. As the authors write, "PermFIT rigorously evaluates the impact of each feature on postoperative infection risk." The team argues that using those test results for feature selection can "further enhance" predictive performance, rather than simply explaining an already-trained model after the fact.
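To make the idea concrete, here is a minimal Python sketch of permutation-based importance testing. It is not the authors' PermFIT code and leaves out details of the published method. The toy model, the synthetic data and names such as `permutation_importance_test` are assumptions made purely for illustration. The sketch shuffles one feature at a time, measures how much each row's squared error grows, and uses a normal approximation to turn the average increase into a one-sided p-value.

```python
import math
import random


def permutation_importance_test(predict, X, y, feature, rng):
    """Return (mean loss increase, one-sided p-value) for one feature."""
    n = len(X)
    # Shuffle the chosen column across rows; other columns stay intact.
    shuffled = [row[feature] for row in X]
    rng.shuffle(shuffled)

    diffs = []
    for row, target, value in zip(X, y, shuffled):
        permuted = list(row)
        permuted[feature] = value
        base_loss = (predict(row) - target) ** 2
        perm_loss = (predict(permuted) - target) ** 2
        diffs.append(perm_loss - base_loss)

    mean = sum(diffs) / n
    var = sum((d - mean) ** 2 for d in diffs) / (n - 1)
    if var == 0.0:
        # Shuffling changed nothing, so there is no evidence of importance.
        return mean, 1.0
    z = mean / math.sqrt(var / n)
    # Upper-tail probability of a standard normal (illustrative approximation).
    p_value = 0.5 * math.erfc(z / math.sqrt(2))
    return mean, p_value


# Hypothetical data: three features, but only feature 0 affects the outcome.
rng = random.Random(0)
X = [[rng.gauss(0, 1) for _ in range(3)] for _ in range(500)]
y = [2.0 * row[0] + rng.gauss(0, 0.5) for row in X]


def toy_model(row):
    # Stand-in for a trained network; it only looks at feature 0.
    return 2.0 * row[0]


results = {j: permutation_importance_test(toy_model, X, y, j, rng) for j in range(3)}
for j, (gain, p) in results.items():
    print(f"feature {j}: mean loss increase={gain:.3f}, p={p:.3g}")
```

In this toy setup only feature 0 feeds the model, so it is the only one that should come out with a small p-value; the other two leave the predictions unchanged when shuffled.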

The team, whose listed authors include Wu, Luria, Xiao, Tighe and Zou, trained their model on MIMIC-III, a large de-identified electronic health record dataset drawn from critical-care settings and widely used as a benchmark in clinical machine-learning research. Feeding only the statistically significant features back into the deep neural network, they found the streamlined model matched or improved upon black-box architectures across receiver-operating-characteristic metrics, precision, recall and calibration measures.
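For readers unfamiliar with the metrics named above, the following Python sketch computes ROC AUC, precision and recall on a handful of made-up scores. The labels, scores and the 0.5 alert threshold are hypothetical and have no connection to the study's results; calibration is left out for brevity.

```python
def roc_auc(labels, scores):
    """Chance that a random positive case outranks a random negative (ties count half)."""
    pos = [s for l, s in zip(labels, scores) if l == 1]
    neg = [s for l, s in zip(labels, scores) if l == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def precision_recall(labels, scores, threshold):
    """Precision and recall when every score at or above the threshold raises an alert."""
    tp = sum(1 for l, s in zip(labels, scores) if s >= threshold and l == 1)
    fp = sum(1 for l, s in zip(labels, scores) if s >= threshold and l == 0)
    fn = sum(1 for l, s in zip(labels, scores) if s < threshold and l == 1)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


# Hypothetical patients: 1 = developed an infection, 0 = did not.
labels = [1, 1, 1, 0, 0, 0, 0, 0]
scores = [0.9, 0.8, 0.4, 0.7, 0.3, 0.2, 0.1, 0.05]

auc = roc_auc(labels, scores)
precision, recall = precision_recall(labels, scores, threshold=0.5)
print(f"AUC={auc:.3f} precision={precision:.3f} recall={recall:.3f}")
```

AUC summarizes ranking quality across all thresholds, while precision and recall depend on the specific alert threshold chosen, which is why the paper's comparison reports more than one measure.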

But the methodology's reliance on MIMIC-III also marks the study's clearest limitation. The database, though comprehensive, reflects care delivered in the critical care units of a single institution, Beth Israel Deaconess Medical Center in Boston. The authors acknowledge the framework requires prospective validation across multiple hospital systems and diverse patient populations before it could inform real bedside decisions. They also flag the open question of whether model-guided interventions actually change patient outcomes, a gap between statistical performance and clinical effect that a single retrospective dataset cannot close.

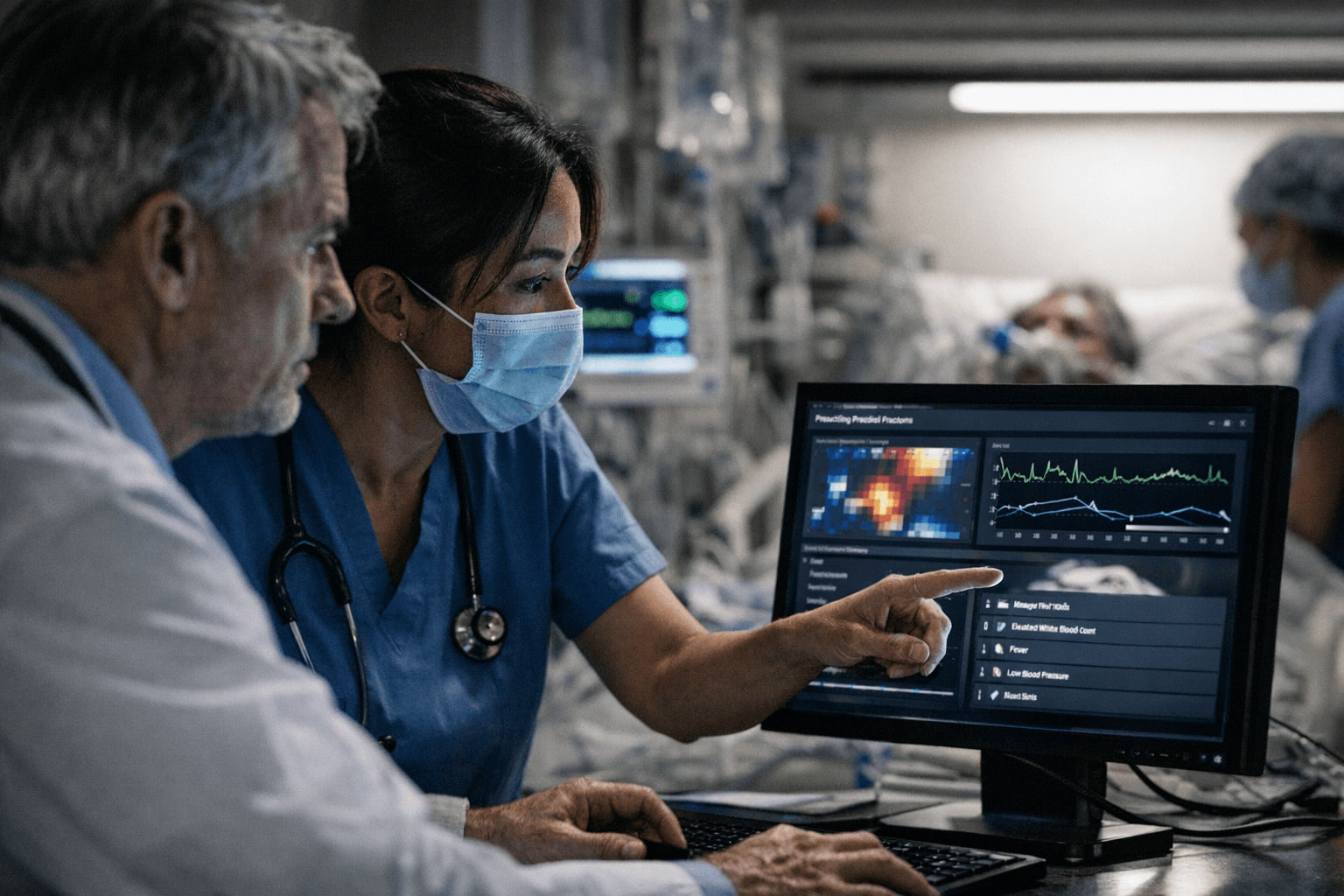

That gap is precisely where trust is won or lost in ICU settings. Alarm fatigue is already a documented crisis in intensive care, where nurses and physicians routinely silence monitors that have cried wolf too often. An interpretable risk model that generates false positives with explicit statistical confidence is marginally more defensible than an opaque one, but it still adds cognitive load in an environment where attention is the scarcest resource. For the framework to earn adoption without worsening that burden, external validation would need to demonstrate not just strong ROC curves but clinically acceptable false-alarm rates across demographically and institutionally varied populations.
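A back-of-the-envelope calculation shows why ROC performance alone does not settle the false-alarm question. The sensitivity, specificity and infection prevalence below are hypothetical round numbers chosen for illustration, not figures from the study.

```python
def positive_predictive_value(sensitivity, specificity, prevalence):
    """Share of alerts that correspond to a true infection (Bayes' rule)."""
    true_alerts = sensitivity * prevalence
    false_alerts = (1 - specificity) * (1 - prevalence)
    return true_alerts / (true_alerts + false_alerts)


# Assumed values: a model that looks strong on paper, applied where infections are rare.
sensitivity = 0.90
specificity = 0.90
prevalence = 0.05

ppv = positive_predictive_value(sensitivity, specificity, prevalence)
false_alerts_per_1000 = (1 - specificity) * (1 - prevalence) * 1000
print(f"PPV={ppv:.2f}, false alerts per 1,000 patients={false_alerts_per_1000:.0f}")
```

Under these assumed numbers, roughly one alert in three would correspond to a true infection, which is the kind of burden external validation would need to quantify at each site.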

The researchers released their code alongside the paper, consistent with PLOS ONE's open-science standards, and the full peer-review history is publicly available. The paper was received in March 2025 and accepted March 25, 2026, reflecting more than a year of peer review. If prospective evaluation follows, the framework's interpretable feature lists could eventually support antibiotic stewardship decisions, targeted infection surveillance and earlier intervention for high-risk postoperative patients. Whether those statistical promises hold when the training data's assumptions meet the next hospital's patient mix is the question no benchmark dataset can answer in advance.
