Google, Marvell reportedly team up on new AI chips for TPUs

Google is reportedly weighing two new chips with Marvell: a memory processing unit and a TPU built for inference. The aim is to lower AI costs and reduce dependence on Nvidia.

Google is reportedly exploring two new custom AI chips with Marvell Technology, a move that would deepen its push to control more of the hardware behind its AI services and reduce its reliance on outside chipmakers. The work would be split across two pieces of silicon: a memory processing unit designed to work alongside Google’s tensor processing units (TPUs), and a new TPU built specifically for running trained AI models, or inference.

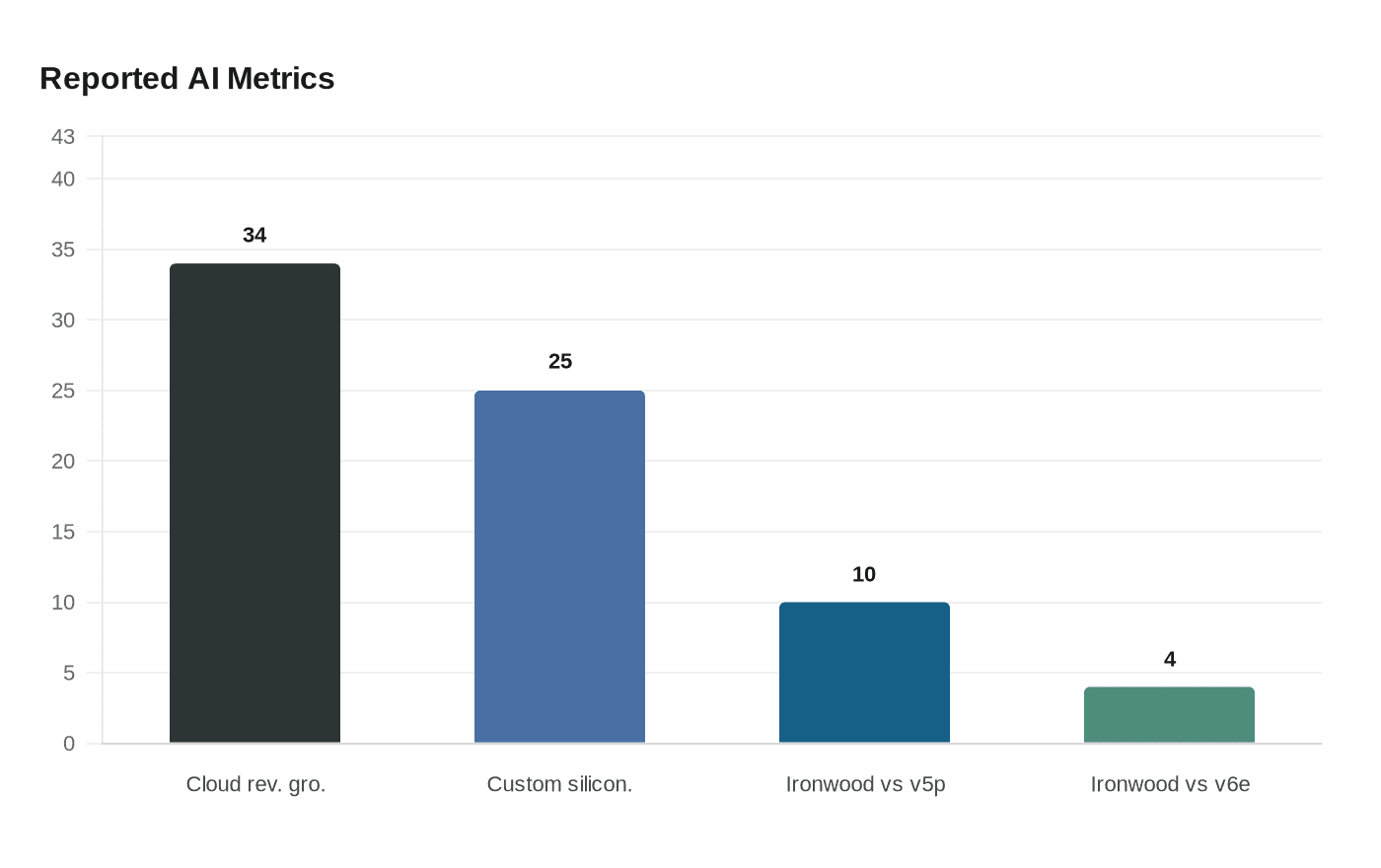

The timing fits Google’s broader effort to prove that its AI spending can translate into durable cloud revenue. Alphabet said Google Cloud revenue rose 34% to about $15.2 billion in the third quarter of 2025, a result that underscored how much demand is flowing into AI infrastructure and services.

Google has already been positioning its TPUs as a faster, cheaper route for inference, the stage at which a trained model serves answers to users at scale. In April 2025, the company introduced Ironwood, its seventh-generation TPU and the first Google TPU designed specifically for inference. Google Cloud described Ironwood as built for high-volume, low-latency AI inference and model serving, and later said it delivers about 10 times the peak performance of TPU v5p and more than four times the per-chip performance of TPU v6e on both training and inference workloads.

That focus matters because inference is becoming a profit test for the AI industry: unlike training, its cost recurs with every request a model answers. Google Cloud has described TPU v5e as a cost-efficient inference chip, while Ironwood has been presented as Google’s most energy-efficient custom silicon to date. If Google can lower inference costs and deliver faster model responses, it strengthens the case for TPUs as a real alternative to Nvidia’s dominant GPUs, especially as hyperscalers seek more control over pricing, supply and performance.

Marvell brings a different set of strengths to that race. The chip designer has publicly cast custom AI infrastructure as a major growth opportunity, saying in 2025 that custom silicon could account for roughly 25% of the accelerated-compute market by 2028. It also showed off 2nm silicon for next-generation AI and cloud infrastructure this year, signaling that it wants a larger role in the next wave of data-center hardware. A memory processing unit would fit neatly into that strategy, because moving data in and out of memory, rather than raw arithmetic, often limits how fast AI systems can run.

People familiar with the talks said the companies hoped to finalize the memory processing unit design as soon as next year before sending it to test production, a sign the project was still early but moving forward. Neither Google nor Marvell responded immediately to requests for comment. If the effort advances, it would mark another step in the AI infrastructure arms race, where the companies best able to tailor chips to their own workloads may gain the sharpest edge on cost, speed and independence.
