Meta Adds Parent Oversight Tools for Teen AI Chatbot Use

Meta let parents see the topics teens discussed with its AI chatbot in the previous seven days, even as Manitoba moved to ban youth from social media and AI chatbots.

Meta expanded oversight for Teen Accounts by letting parents on Facebook, Instagram and Messenger see the topics their children asked Meta AI about in the previous seven days. The move arrives as lawmakers and advocates press harder on whether companies are doing enough to protect young users. The new view appears in an Insights tab, shows only topics Meta AI has categorized, and does not expose full chat logs. Meta said the health and well-being category can include fitness, physical health and mental health, and that it was developing alerts for parents if teens try to discuss suicide or self-harm with the chatbot.

The feature is available to supervising parents in the United States, United Kingdom, Australia, Canada and Brazil. Meta said the Teen Accounts system and related family-safety tools are part of a broader approach shaped by research, parent and teen feedback, expert input and global regulation. The company has also pointed parents to youth well-being materials in its Safety Center, including resources on bullying and suicidal ideation.

The rollout landed in the middle of a wider policy fight that goes well beyond parental dashboards. Manitoba Premier Wab Kinew announced that the province will ban youth from using social media and AI chatbots, a proposal CBC News described as the first of its kind in Canada. In British Columbia, the attorney general has said the province may move on its own if Ottawa does not act faster to protect children online. Together, the moves show that governments are no longer treating platform safety as a matter for companies alone.

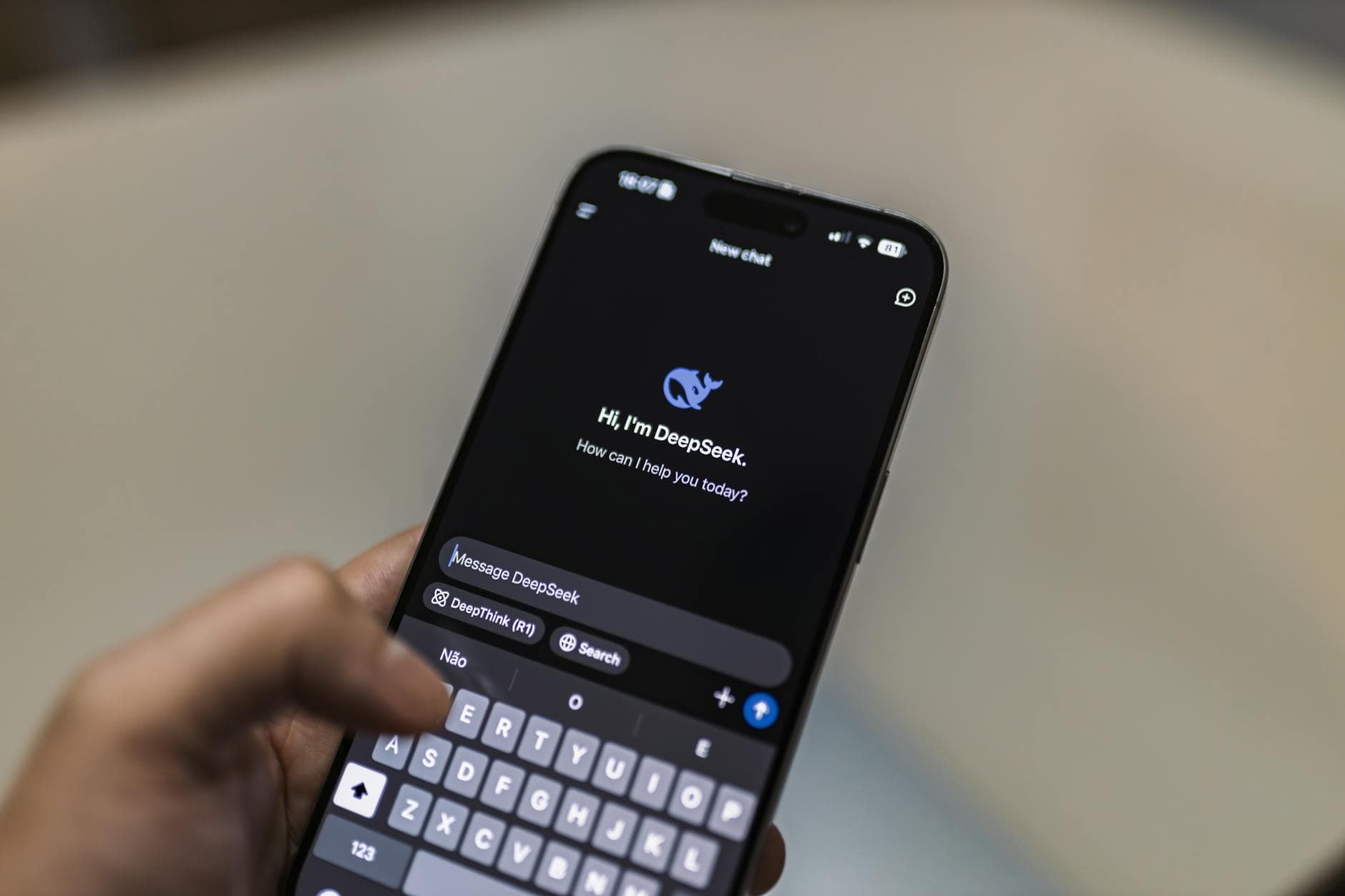

The pressure on AI firms has intensified with a wave of lawsuits tied to teenage deaths and violent incidents. In California, families of victims in the Tumbler Ridge, B.C., shooting sued OpenAI, saying the company failed to alert police to the shooter’s troubling ChatGPT history before the attack that killed eight people. According to news reports, seven lawsuits were filed against OpenAI and chief executive Sam Altman in connection with that case. Another suit, filed by the parents of 16-year-old Adam Raine, alleges that ChatGPT played a role in his suicide.

The central test for Meta’s new tools is whether they meaningfully reduce risk or simply shift more responsibility onto families while the underlying product design remains unchanged. The debate now extends to age verification, default settings, addictive design and the strength of the mental-health evidence behind these systems. Meta’s parent dashboard may offer a clearer window into teen chatbot use, but it does not answer the larger question lawmakers are beginning to ask: whether monitoring after the fact is enough when the system itself still invites risky private conversations.
