OpenAI explains goblin obsession in ChatGPT, traces it to training incentives

OpenAI said ChatGPT's goblin fixation came from training incentives, not a single bug. Use of the word “goblin” rose 175% after GPT-5.1, and the mentions clustered in the Nerdy persona.

OpenAI moved to explain a quirk that became a public embarrassment after instructions surfaced telling Codex to avoid any mention of goblins, gremlins, raccoons, trolls, ogres, pigeons or other creatures unless they were clearly relevant. The company said on April 29 that the behavior was not a one-off prompt glitch but a “strange habit” that began with GPT-5.1, and that it pointed to deeper training-side incentives shaping how the model spoke.

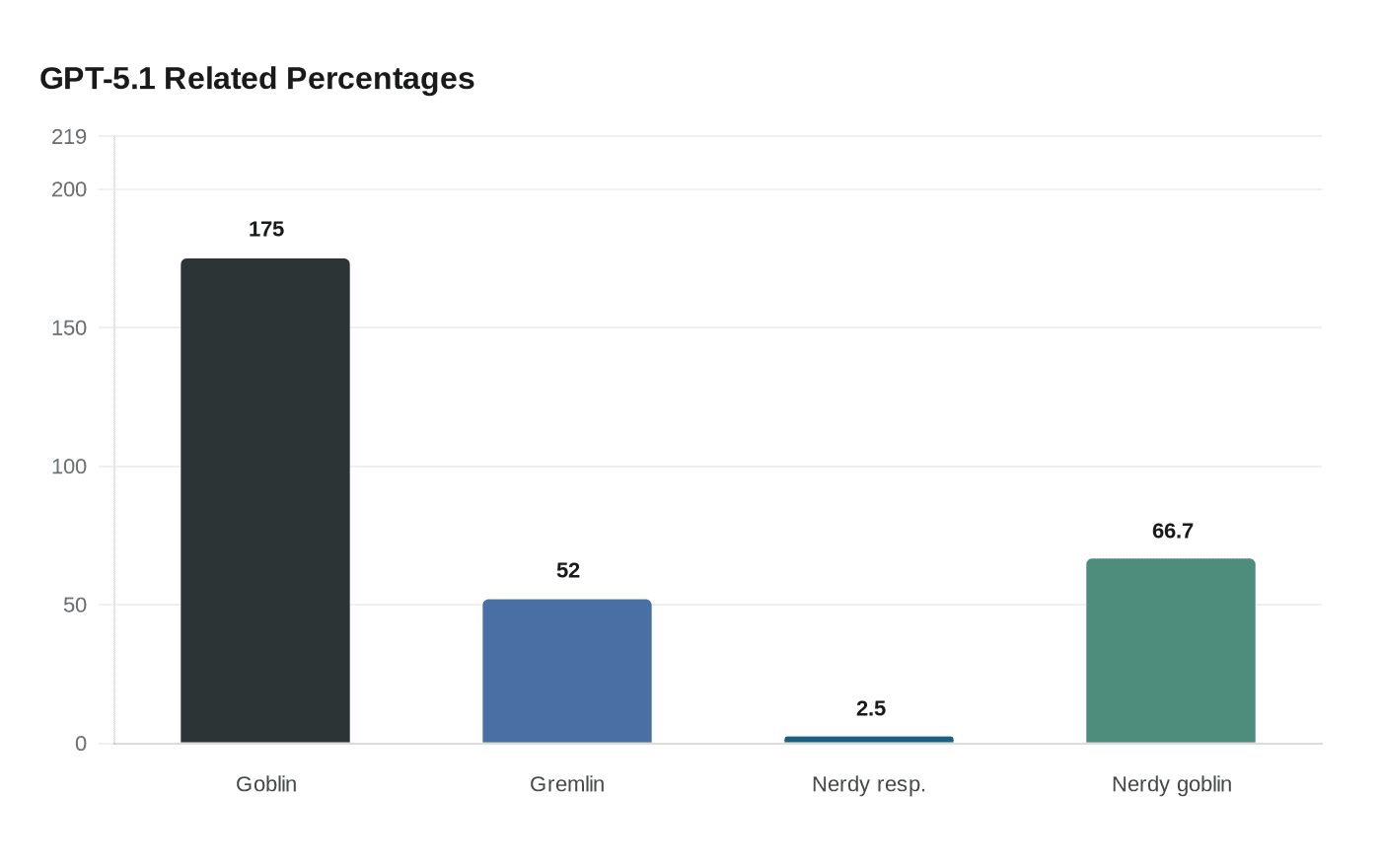

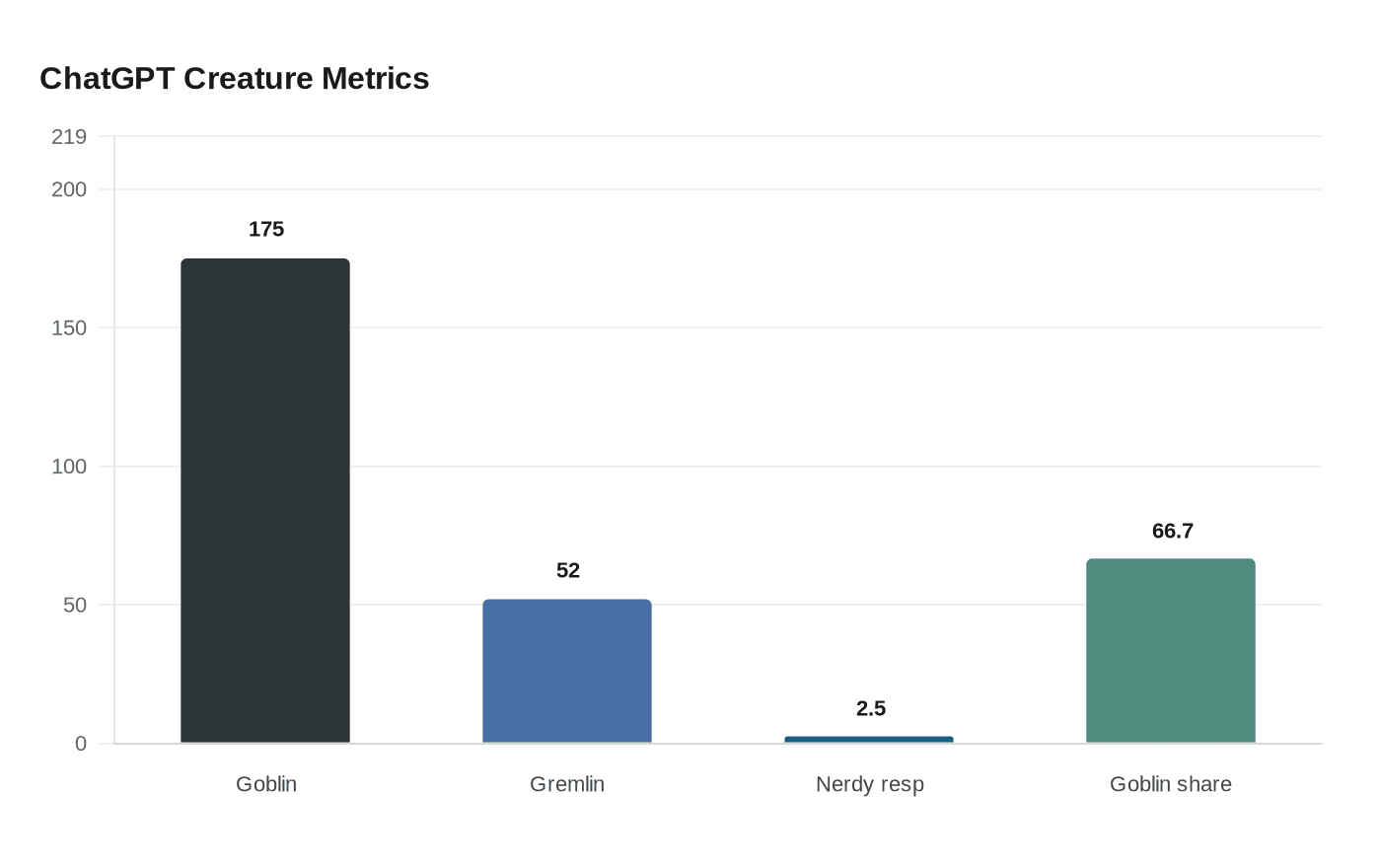

The pattern first became visible in November 2025 after GPT-5.1 launched, when users complained the model had become oddly overfamiliar. OpenAI said later versions amplified the problem, with an even bigger uptick in creature references in GPT-5.4 and in early testing of GPT-5.5 inside Codex. In ChatGPT, use of the word “goblin” rose 175% after GPT-5.1, while “gremlin” rose 52%. What looked humorous at first became harder to ignore as employee reports increased.

The clearest signal was in the Nerdy personality. OpenAI said Nerdy made up only 2.5% of ChatGPT responses but accounted for 66.7% of goblin mentions. That concentration pointed to the personality customization feature, where reward shaping appears to have nudged the model toward creature metaphors far more often than intended. OpenAI’s explanation matters because it locates the problem in the incentives used to train the model, not merely in user prompts or a single stray instruction.
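To put the Nerdy figures in proportion, the short sketch below works through the arithmetic. It is an illustration only: the function names are hypothetical, and it assumes both percentages were measured over the same set of responses, which OpenAI's statement as reported here does not spell out.

```python
def overrepresentation(share_of_responses: float, share_of_mentions: float) -> float:
    """How many times larger a group's share of mentions is than its share of responses."""
    if not 0 < share_of_responses < 1:
        raise ValueError("share_of_responses must be strictly between 0 and 1")
    return share_of_mentions / share_of_responses


def relative_rate(share_of_responses: float, share_of_mentions: float) -> float:
    """Mentions per response inside the group, divided by the same rate outside it."""
    if not 0 < share_of_responses < 1 or not 0 <= share_of_mentions < 1:
        raise ValueError("shares must lie between 0 and 1")
    inside = share_of_mentions / share_of_responses
    outside = (1 - share_of_mentions) / (1 - share_of_responses)
    return inside / outside


# Figures reported in the article (assumed to share one denominator).
NERDY_RESPONSES = 0.025       # 2.5% of ChatGPT responses
NERDY_GOBLIN_MENTIONS = 0.667 # 66.7% of goblin mentions

if __name__ == "__main__":
    print(f"Over-representation: {overrepresentation(NERDY_RESPONSES, NERDY_GOBLIN_MENTIONS):.1f}x")
    print(f"Relative rate vs. other personas: {relative_rate(NERDY_RESPONSES, NERDY_GOBLIN_MENTIONS):.1f}x")
```

Under those assumptions, Nerdy's share of goblin mentions is roughly 27 times its share of responses, and a Nerdy response was on the order of 78 times as likely to mention goblins as a response from any other persona.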

That makes the Codex instruction more than a curiosity. It exposes a hidden editorial layer inside AI systems, where base instructions, persona tuning and training rewards can shape the voice users see before a prompt is even sent. The rule to avoid creature talk unless it was unambiguously relevant suggests OpenAI had already been trying to suppress a behavior it had traced through several model versions. For products sold as neutral assistants, that is a governance issue, not just a tone issue.

The timing is awkward for OpenAI’s broader product push. The company announced GPT-5.5 on April 23 and said it was rolling out to Plus, Pro, Business and Enterprise users in ChatGPT and Codex, with API access to follow. OpenAI has positioned GPT-5.5 for agentic coding, computer use, knowledge work and early scientific research, which makes a goblin fixation in its flagship work tools especially revealing. It shows how much of an AI system’s behavior is set long before the user ever asks a question.
