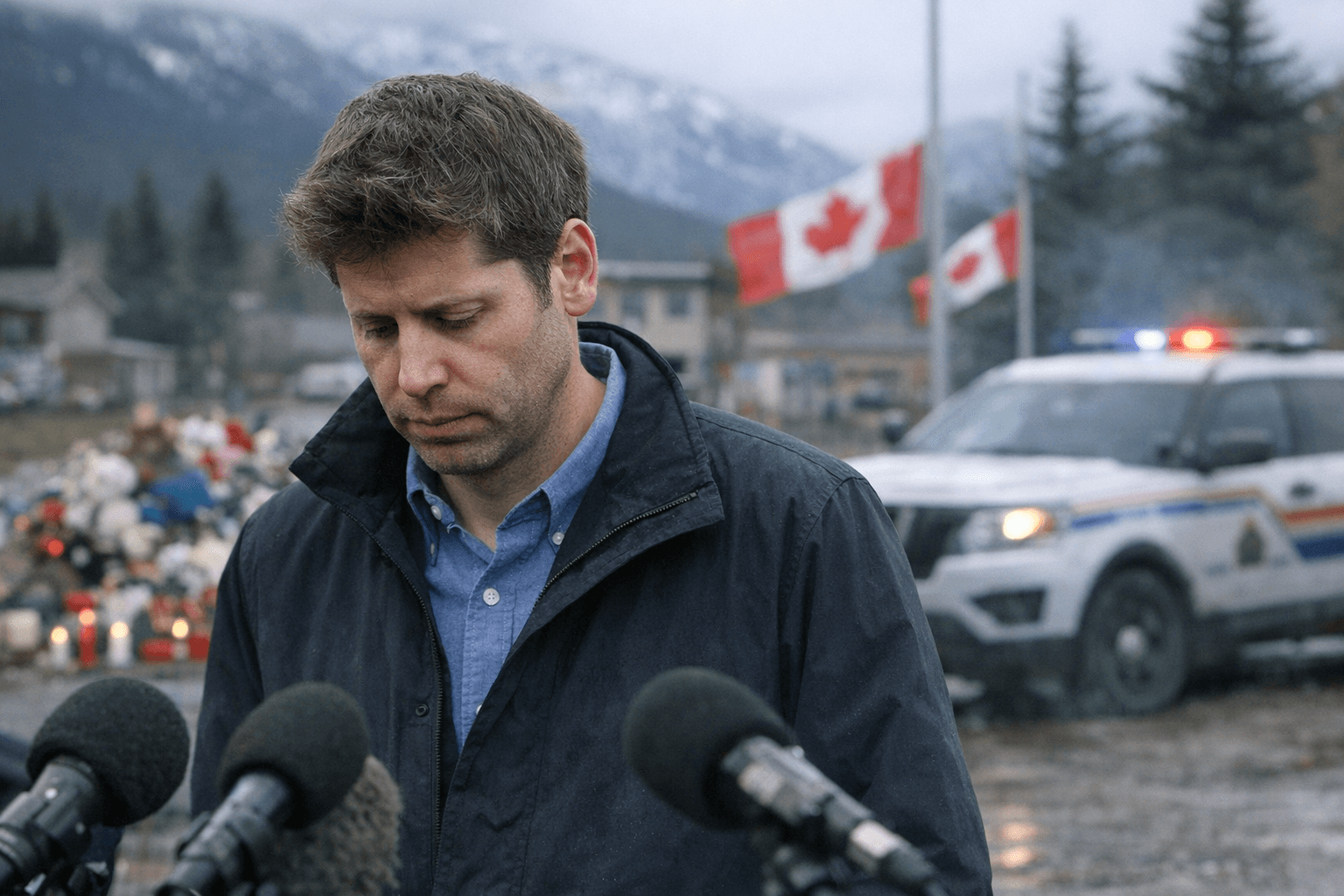

Sam Altman Apologizes to Tumbler Ridge After February Mass Shooting

Altman apologized after OpenAI said it banned the shooter’s ChatGPT account in June 2025 but did not alert the RCMP. The case is intensifying pressure for mandatory reporting rules.

Sam Altman apologized to Tumbler Ridge after OpenAI acknowledged that it banned a ChatGPT account tied to the shooter in June 2025 but did not alert the RCMP before the Feb. 10 attack that killed eight people, including six children, in northeastern British Columbia. The company’s handling of the case has become a test of when an AI firm must act on credible threat signals and whether an internal threshold can stand in place of a public warning.

OpenAI’s letter, dated April 23 and shared publicly on April 24, said the company’s automated system detected the account, sent it to human review, and concluded the activity did not meet its referral standard because it did not show “credible and imminent planning.” Altman wrote, “I am deeply sorry that we did not alert law enforcement to the account that was banned in June,” an admission that put the company’s decision not to report at the center of the public record.

British Columbia Premier David Eby said the apology was “necessary, and yet grossly insufficient,” after pushing for one in meetings with Altman and Tumbler Ridge Mayor Darryl Krakowka in early March. OpenAI had already said the account was flagged for problematic activity before the shooting but was not escalated to authorities in Canada, and Altman said he delayed the apology so the community could “grieve in their own time.”

The policy pressure is now national. Federal AI Minister Evan Solomon said after meeting Altman that OpenAI agreed to establish a direct point of contact with the RCMP, apply new safety standards retroactively, and review previously flagged cases to see whether other threats should have been reported. OpenAI also said it would strengthen its enhanced law-enforcement referral protocol, a sign that the company is trying to harden its own process even as Canadian officials demand more accountability. The episode is likely to intensify calls for mandatory reporting rules, because the public still has no guarantee that a company’s internal judgment will match the standard of public safety.
