Semrush says brands must optimize sites for AI agents, not just searchers

Brands now have to pass an AI agent’s test: can it understand the site, compare options, and finish the task without friction?

Agentic readiness is the new site-quality test

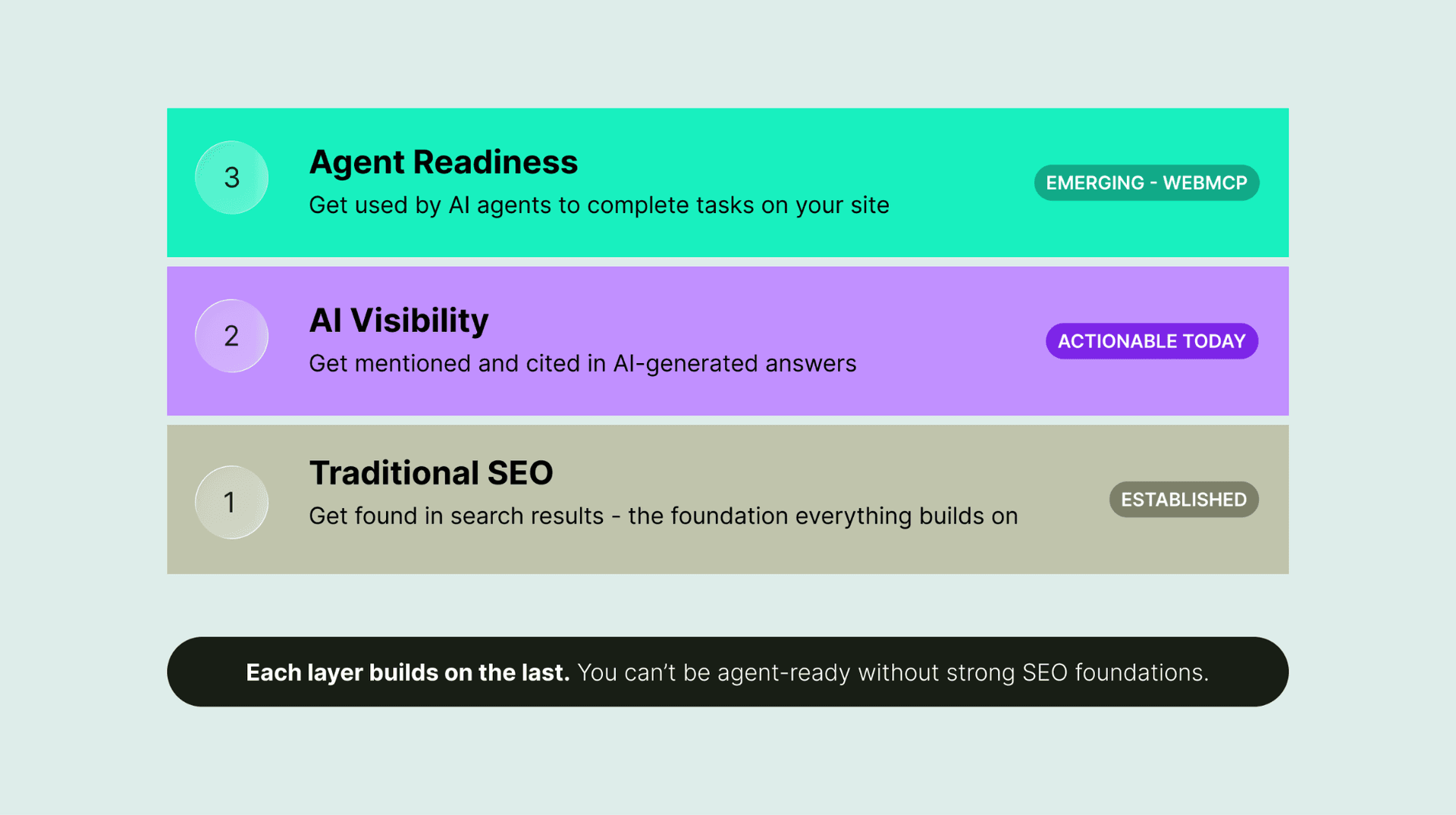

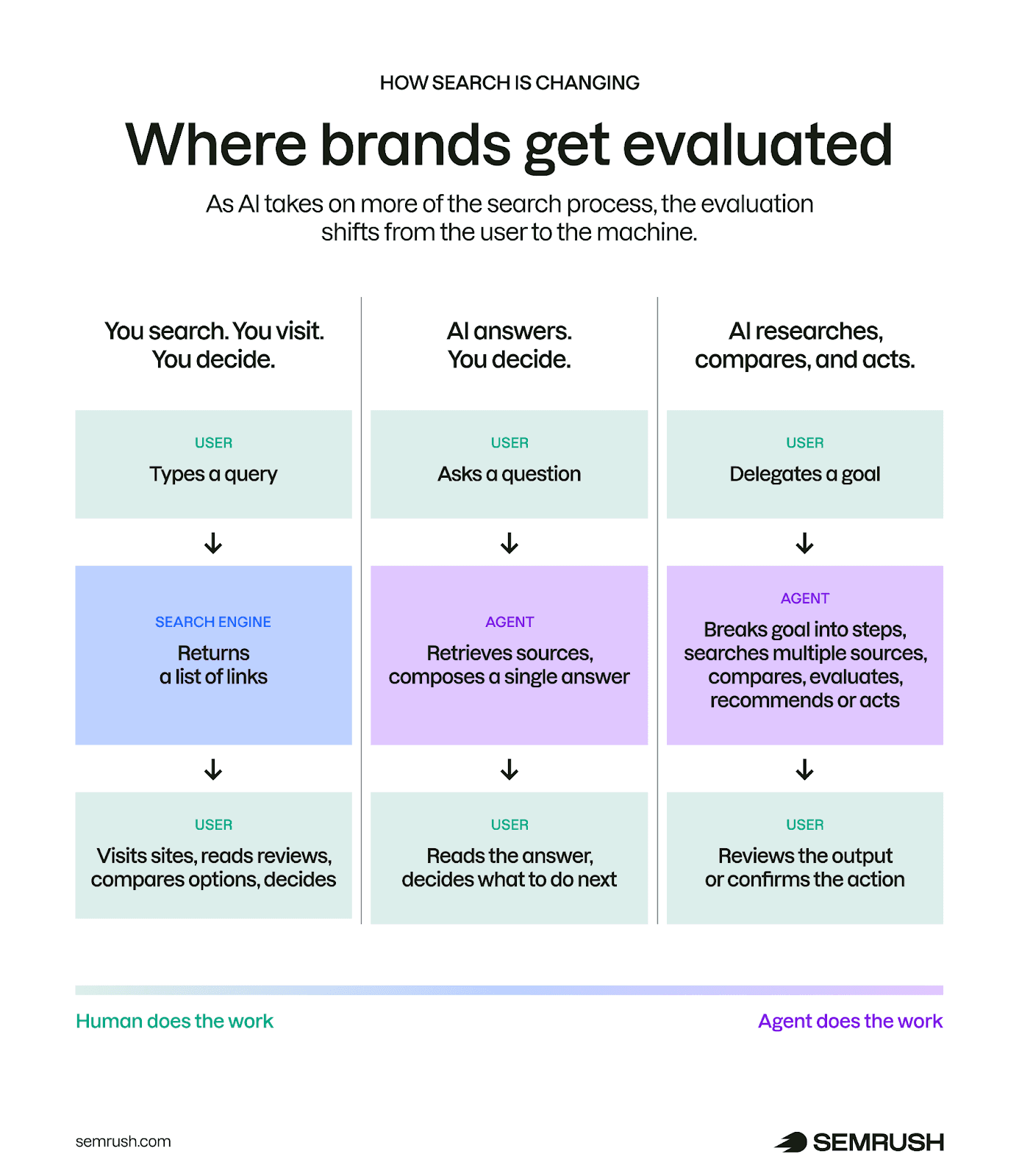

The shift Semrush is pushing is bigger than visibility. A site is no longer being judged only on whether it can win a click; it is being judged on whether an AI agent can land on it, understand it, compare it with alternatives, and complete a workflow on behalf of a user. That changes the work from classic search optimization into something closer to machine usability, where forms, pricing, product detail, and page structure all have to make sense to a system that is trying to act.

Semrush frames agentic search as AI that retrieves, evaluates, and acts on information for users. In practical terms, that can mean consolidating findings, filling out forms, or even making purchases. The company’s broader “agentic web” language pushes the same idea further: the web becomes infrastructure for delegation, where agents do not just summarize information but move through the journey themselves. That is the heart of agentic readiness, and it is why agencies now have a fresh way to tie technical SEO to a business outcome.

What an AI agent needs from a site

The simplest way to think about agentic readiness is to ask three questions. Can the agent reach the content? Can it understand what matters? Can it complete the task? If the answer is no at any step, the site may still be visible to people, but it is not fully usable by the systems that are increasingly doing the comparison work.

Semrush ties that readiness to everyday site elements, not futuristic speculation. Reducing JavaScript obstacles matters because heavy client-side rendering can make pages harder for crawlers and agents to interpret. Clean content matters because an agent needs text it can parse quickly. And workflow friction matters because a page that hides pricing, buries forms, or forces a login wall can stop an agent before it reaches the action the user actually wants.

That is what makes this more than another SEO buzzword. The winning site is not just answerable; it is operable.
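One way to test the JavaScript concern is to look at a page the way a client that does not execute scripts would. The Python sketch below is our illustration, not a Semrush tool: it parses raw HTML, ignores `<script>` and `<style>` contents, and reports which required terms never appear in the server-rendered text. The `missing_without_js` helper and the sample pages are hypothetical, and real agents differ in how much JavaScript they run.

```python
from html.parser import HTMLParser


class VisibleTextParser(HTMLParser):
    """Collects text from raw HTML, skipping <script> and <style> contents."""

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())


def missing_without_js(raw_html: str, required_terms: list[str]) -> list[str]:
    """Return the required terms that do not appear in the server-rendered text."""
    parser = VisibleTextParser()
    parser.feed(raw_html)
    parser.close()
    text = " ".join(parser.chunks).lower()
    return [term for term in required_terms if term.lower() not in text]


# Hypothetical pages: one renders pricing on the server, one injects it with JavaScript.
server_rendered = "<html><body><h1>Pro plan</h1><p>Price: $49 per month</p></body></html>"
client_rendered = (
    "<html><body><div id='app'></div>"
    "<script>document.getElementById('app').innerText = 'Pro plan, $49';</script>"
    "</body></html>"
)

print(missing_without_js(server_rendered, ["Pro plan", "$49"]))
print(missing_without_js(client_rendered, ["Pro plan", "$49"]))
```

The second page shows the pricing to a browser user, yet both terms are missing from the raw HTML, which is the gap a script-averse agent would hit.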

Build the checklist around task completion

For agencies, the most useful move is to turn the concept into a readiness audit that mirrors how a human buyer behaves, then ask whether an AI agent can do the same thing without hitting a wall.

A practical checklist starts with:

- Can the agent access the page without being blocked by robots.txt?

- Can it read the page without depending on heavy JavaScript execution?

- Can it identify core information, such as pricing, product details, and service terms?

- Can it move to the next step, such as a form, checkout, or booking flow?

- Can it trust what it is seeing because the page has structured data, clear navigation, and consistent internal linking?
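The first checklist item can be approximated with Python’s standard-library `urllib.robotparser`. In this sketch the robots.txt content, the URLs, and the agent list are assumptions for illustration. GPTBot, ClaudeBot, and PerplexityBot are crawler names published by OpenAI, Anthropic, and Perplexity, but current tokens should be confirmed in each vendor’s documentation before an audit relies on them.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: GPTBot is kept out of /pricing, everyone else out of /admin.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /pricing

User-agent: *
Disallow: /admin
"""

# Illustrative AI crawler tokens and key pages; replace with the client's own list.
AI_AGENTS = ["GPTBot", "ClaudeBot", "PerplexityBot"]
KEY_PAGES = ["https://example.com/pricing", "https://example.com/contact"]


def blocked_pages(robots_txt: str, agents: list[str], urls: list[str]) -> dict[str, list[str]]:
    """Map each user agent to the key URLs that robots.txt disallows for it."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {
        agent: [url for url in urls if not parser.can_fetch(agent, url)]
        for agent in agents
    }


for agent, urls in blocked_pages(ROBOTS_TXT, AI_AGENTS, KEY_PAGES).items():
    print(agent, "blocked from:", urls or "nothing")
```

A crawler that matches a named group (here GPTBot) follows that group’s rules instead of the `*` group, so a single line aimed at one bot can hide a pricing page from it while other agents still see it.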

Semrush’s guidance makes the point that these are no longer separate disciplines. Crawlability, crawlable navigation, internal linking, structured data and schema markup, and even support for llms.txt all belong in the same readiness conversation. The agency value lies in connecting those technical details to a business task, such as lead generation or ecommerce conversion, rather than treating them as isolated site fixes.
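llms.txt is a proposed convention, not a ratified standard, for a Markdown file at a site’s root that points language models to its most useful pages. The sketch below renders a file in the layout the proposal describes: an H1 with the site name, a blockquote summary, and H2 sections of link lists. The site name, URLs, and section names are hypothetical, and whether a given agent reads the file at all is an assumption to verify.

```python
from dataclasses import dataclass


@dataclass
class Link:
    """One entry in an llms.txt link list."""
    title: str
    url: str
    note: str = ""


def build_llms_txt(site_name: str, summary: str, sections: dict[str, list[Link]]) -> str:
    """Render Markdown in the llms.txt layout: H1 name, blockquote summary, H2 link lists."""
    lines = [f"# {site_name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines += [f"## {heading}", ""]
        for link in links:
            suffix = f": {link.note}" if link.note else ""
            lines.append(f"- [{link.title}]({link.url}){suffix}")
        lines.append("")
    return "\n".join(lines)


# Hypothetical site; list the pages an agent needs to compare options and act.
llms_txt = build_llms_txt(
    "Example Co",
    "Example Co sells project-management software for small teams.",
    {
        "Products": [Link("Pricing", "https://example.com/pricing", "plans and monthly prices")],
        "Actions": [Link("Book a demo", "https://example.com/demo")],
    },
)
print(llms_txt)
```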

Blocked bots, login walls, and JavaScript are now business problems

The old technical SEO issues have not gone away, but Semrush is recasting them as blockers to AI action. Its troubleshooting guidance says JavaScript-heavy sites can create problems for crawlers, robots.txt blocking can prevent audits from running, and landing pages hidden behind gateways or login walls may interfere with crawling. In other words, what used to be a technical inconvenience can now become a commercial blind spot if an agent cannot even see the offer.

That is a major shift for agency conversations with clients. A blocked pricing page is no longer just a crawl issue. A slow, script-heavy form is no longer just a performance note. Each one can interrupt the path from discovery to action, which is exactly the path AI agents are designed to travel. If the agent cannot move smoothly, the brand loses the chance to be compared, shortlisted, or purchased.

Machine-readable trust signals are part of the brief

Agentic readiness is not only about access. It is also about whether the site sends trustworthy signals in a form machines can use. Semrush says its AI Search Health score looks at crawlability, structured data, internal linking, and technical blockers, which is a useful way to translate trust into measurable site conditions. A page with clear schema, logical structure, and crawlable navigation is easier for an agent to interpret than a page that relies on visual context alone.
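As an example of turning “structured data” into a checkable site condition, the sketch below pulls JSON-LD blocks out of raw HTML and lists `Product` offers that lack `price`, `priceCurrency`, or `availability`, schema.org properties an agent comparing options would plausibly need. That field list is our assumption, not Semrush’s scoring rule, and for brevity the parser ignores `@graph` wrappers and `@type` arrays.

```python
import json
from html.parser import HTMLParser


class JsonLdParser(HTMLParser):
    """Collects the text of every <script type="application/ld+json"> element."""

    def __init__(self) -> None:
        super().__init__()
        self._in_jsonld = False
        self.blocks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self.blocks.append("")

    def handle_endtag(self, tag):
        if tag == "script":
            self._in_jsonld = False

    def handle_data(self, data):
        if self._in_jsonld:
            self.blocks[-1] += data


# Offer fields assumed (for this sketch) to matter when an agent compares products.
REQUIRED_OFFER_FIELDS = ("price", "priceCurrency", "availability")


def product_gaps(raw_html: str) -> list[str]:
    """Return 'product: offers.field' entries for each missing offer field."""
    parser = JsonLdParser()
    parser.feed(raw_html)
    parser.close()
    products = []
    for block in parser.blocks:
        data = json.loads(block)
        items = data if isinstance(data, list) else [data]
        products += [i for i in items if isinstance(i, dict) and i.get("@type") == "Product"]
    if not products:
        return ["no Product markup found"]
    gaps = []
    for product in products:
        offer = product.get("offers") or {}
        if isinstance(offer, list):  # use the first offer when several are listed
            offer = offer[0] if offer else {}
        for field in REQUIRED_OFFER_FIELDS:
            if field not in offer:
                gaps.append(f"{product.get('name', 'unnamed product')}: offers.{field}")
    return gaps


PAGE = """<html><head>
<script type="application/ld+json">
{"@context": "https://schema.org", "@type": "Product", "name": "Pro plan",
 "offers": {"@type": "Offer", "price": "49.00", "priceCurrency": "USD"}}
</script>
</head><body><h1>Pro plan</h1></body></html>"""

print(product_gaps(PAGE))
```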

That matters because AI systems are making judgments about which brands deserve to be surfaced, compared, and acted upon. If a site is messy, ambiguous, or incomplete, the agent has less confidence in what it is seeing. Agencies can treat that as a readiness issue and audit for gaps in markup, weak internal paths, inconsistent product information, and missing page-level signals that make a site easier to classify.
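“Weak internal paths” can be measured as click depth: how many links an agent must follow from the homepage to reach a page that matters. The breadth-first search sketch below runs over a hypothetical link graph; in practice the graph would come from a crawl, and which pages count as important is an assumption to set per client.

```python
from collections import deque


def click_depths(links: dict[str, list[str]], start: str) -> dict[str, int]:
    """Breadth-first search over internal links: minimum clicks to reach each page."""
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit in BFS order is the shortest path
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


# Hypothetical internal-link graph: page -> pages it links to.
site = {
    "/": ["/products", "/blog"],
    "/products": ["/products/pro"],
    "/products/pro": ["/pricing"],
    "/blog": [],
    "/pricing": ["/checkout"],
}
important = ["/pricing", "/checkout", "/contact"]

depths = click_depths(site, "/")
for page in important:
    print(page, depths.get(page, "unreachable"))
```

Pages that are deep or unreachable from the homepage, like `/contact` here, are the kind of gap an audit would flag for new internal links.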

Use Semrush’s tools to prove the problem

Semrush has turned this idea into productized diagnostics, which makes it easier to sell and operationalize. Site Audit now includes an AI Search Health widget that measures how optimized a site is for AI-driven visibility. It also includes a “Blocked from AI Search” check that flags pages inaccessible to AI user-agents through robots.txt. Those two features give agencies a concrete way to show where a site is ready and where it is not.

The company’s Log File Analyzer adds another layer by reporting on bot behavior beyond traditional search crawlers. Semrush says its logs now surface AI bots, such as those behind ChatGPT, Claude, and Perplexity, alongside classic crawler activity. In enterprise settings, Semrush says log-file coverage spans 30 bots in total: 20 search-engine bots and 10 AI bots. That makes bot analysis more than a diagnostic exercise; it becomes evidence that the site is already being visited by multiple automated systems with different goals.
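A rough version of that bot breakdown can be built from raw access logs. The sketch below assumes the Apache/Nginx combined log format and matches a short, hypothetical list of user-agent substrings; it is not how Semrush’s Log File Analyzer classifies bots. User-agent strings can also be spoofed, so production checks usually verify source IP ranges as well.

```python
import re
from collections import Counter

# Hypothetical user-agent substrings; real lists are longer and change over time.
AI_BOTS = ("GPTBot", "ChatGPT-User", "ClaudeBot", "PerplexityBot")
SEARCH_BOTS = ("Googlebot", "bingbot")

# Combined log format tail: "request" status size "referrer" "user agent".
LOG_LINE = re.compile(
    r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"$'
)


def classify(agent: str) -> str:
    """Label a user-agent string as 'ai', 'search', or 'other' by substring match."""
    lowered = agent.lower()
    if any(bot.lower() in lowered for bot in AI_BOTS):
        return "ai"
    if any(bot.lower() in lowered for bot in SEARCH_BOTS):
        return "search"
    return "other"


def summarize(lines: list[str]) -> Counter:
    """Count requests per (bot category, HTTP status), skipping unparseable lines."""
    hits: Counter = Counter()
    for line in lines:
        match = LOG_LINE.search(line.strip())
        if match:
            hits[(classify(match["agent"]), match["status"])] += 1
    return hits


SAMPLE_LOG = [
    '203.0.113.5 - - [10/Oct/2025:13:55:36 +0000] "GET /pricing HTTP/1.1" 200 512 "-" '
    '"Mozilla/5.0 (compatible; GPTBot/1.1; +https://openai.com/gptbot)"',
    '203.0.113.9 - - [10/Oct/2025:13:56:02 +0000] "GET /pricing HTTP/1.1" 403 128 "-" '
    '"Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '198.51.100.7 - - [10/Oct/2025:13:57:11 +0000] "GET / HTTP/1.1" 200 2048 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
]

for (category, status), count in sorted(summarize(SAMPLE_LOG).items()):
    print(category, status, count)
```

Splitting counts by status code makes a pattern like AI bots receiving 403s on a pricing page visible at a glance, which is the kind of evidence the next paragraph describes.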

For agency teams, that evidence is valuable in client meetings. It helps move the conversation from abstract concern about AI search to visible patterns in crawl behavior, blocked sections, and missing machine-facing signals. Once the data is in hand, the fix list becomes easier to prioritize.

Where agencies can turn this into a service line

The business opportunity is straightforward. A brand that is hard for AI agents to interpret may lose visibility in both search and the next wave of AI-assisted buying flows. That opens the door for a readiness package that blends technical SEO, content structure, and bot analysis into one client-facing offer. The work is especially compelling for ecommerce and B2B brands, where pricing, forms, and product detail are central to conversion.

The agencies that win here will be the ones that can explain the shift in plain language: this is no longer only about ranking for people, it is about being usable by systems that act for people. That means every audit should ask whether the site can be crawled, parsed, compared, and acted on. In the agentic era, that is the new standard of site quality, and it is already measurable enough to sell.
