Technical SEO Emerges as Foundation for Search and AI Visibility

Technical SEO is no longer just cleanup work. It is the layer that decides whether search engines and AI systems can actually find, read, and reuse a site’s content.

Technical SEO now sets the ceiling for everything else

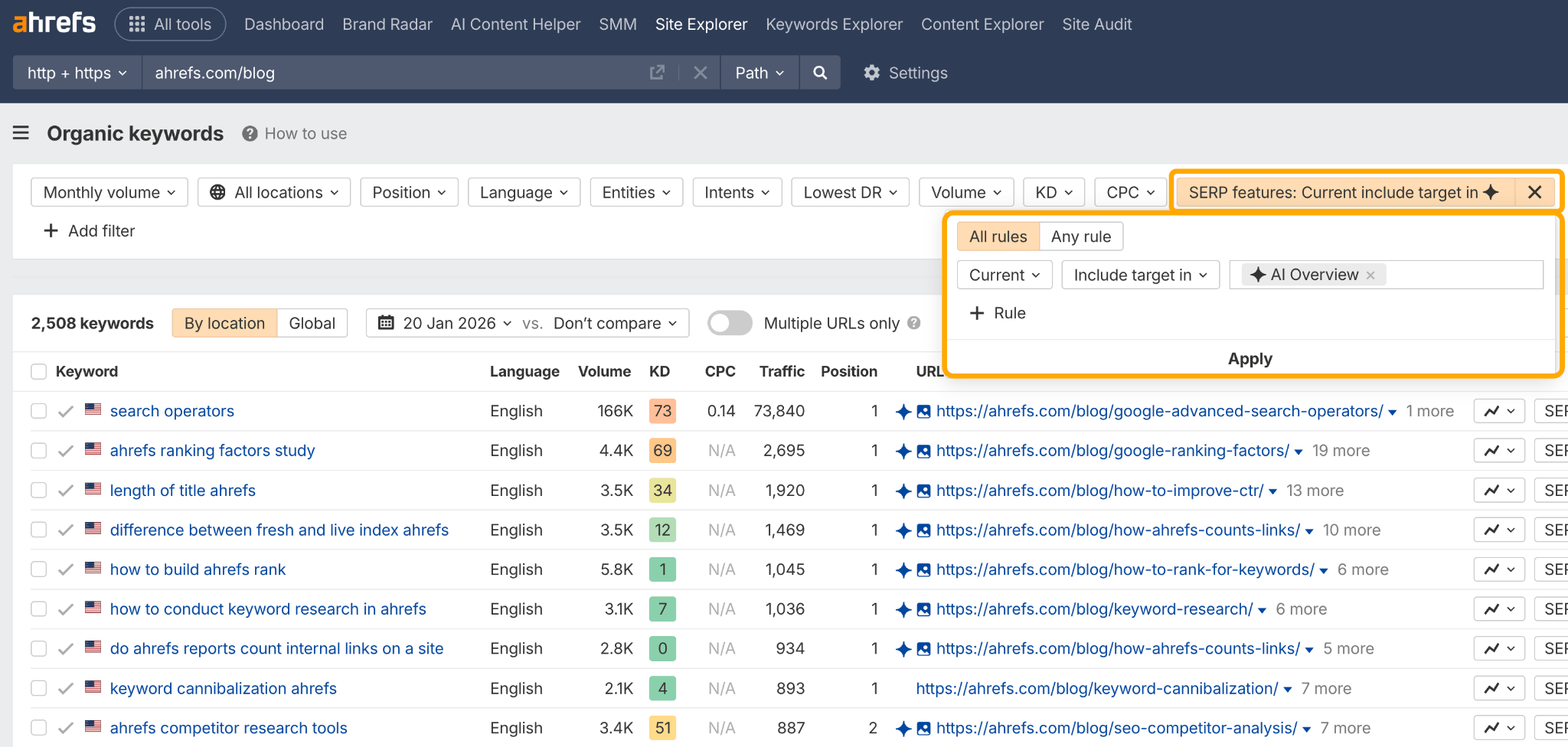

Technical SEO has moved from background maintenance to a direct growth lever. Semrush’s latest guidance frames it as the work of shaping a site’s infrastructure so search engines and AI systems can crawl, render, index, and cite content. The technical layer, in other words, now determines whether strong content ever gets a chance to perform.

That shift matters most for agencies and in-house teams that have spent years chasing volume. If a page cannot be discovered or understood, polished copy and fresh publishing schedules do not matter much. The infrastructure has to support visibility first, because technical problems can quietly block revenue before a ranking strategy ever has a chance to work.

Why crawlability and indexability are the real gatekeepers

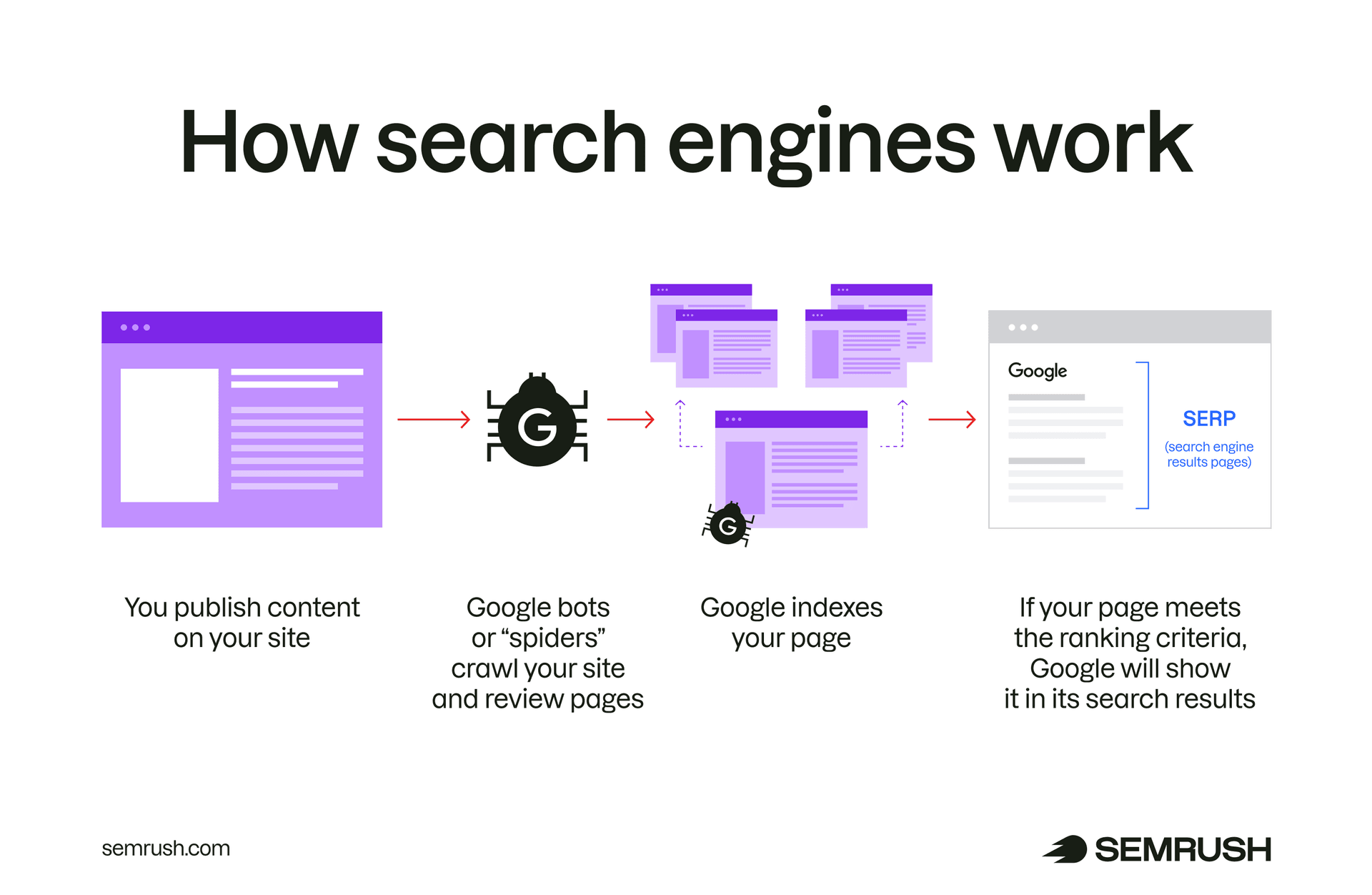

Google’s SEO Starter Guide makes the foundational point plainly: SEO helps search engines crawl, index, and understand content. That simple sequence still defines whether a page enters the search ecosystem at all, and it is now equally relevant to AI systems that rely on accessible, well-structured pages to produce answers.

Semrush’s framing broadens the audience for technical SEO. The same site health issues that frustrate Googlebot can also limit whether systems such as ChatGPT, Claude, and Gemini are able to interpret a page accurately enough to surface it in generated responses. In practice, crawlability and indexability are no longer just classic search concerns; they are visibility concerns across search and AI.
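One quick way to see the gatekeeping effect is to test a robots.txt file against more than one crawler. The sketch below uses Python's standard `urllib.robotparser`; the robots.txt content and the example.com URL are hypothetical, and `GPTBot` is used here as an example of an AI crawler user-agent token. It only covers robots.txt rules, not rendering or indexing.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, used only for illustration.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/

User-agent: GPTBot
Disallow: /
"""

def allowed_agents(robots_txt: str, url: str, agents: list[str]) -> dict[str, bool]:
    """Return, per user agent, whether robots.txt permits fetching the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}

result = allowed_agents(
    ROBOTS_TXT,
    "https://example.com/blog/post",
    ["Googlebot", "GPTBot"],
)
print(result)
```

With these rules the same page is open to Googlebot but closed to GPTBot, which is the kind of split that can go unnoticed when only classic search is monitored.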

How site structure turns into discoverability

A site’s architecture does more than organize pages for human visitors. Efficient internal linking and clean hierarchy help crawlers find important pages faster, especially on larger sites where every extra click or orphaned URL can slow discovery. That is why architecture, renderability, metadata, and URL hygiene sit at the center of modern technical SEO rather than at the edges.
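The "extra click" and "orphaned URL" ideas can be made concrete with a breadth-first search over an internal link graph. This is a minimal sketch, assuming the graph has already been collected by a crawler; the `LINKS` data and page paths are hypothetical.

```python
from collections import deque

# Hypothetical internal link graph: page -> pages it links to.
LINKS = {
    "/": ["/services", "/blog"],
    "/services": ["/services/seo"],
    "/blog": ["/blog/post-1"],
    "/services/seo": [],
    "/blog/post-1": ["/services/seo"],
    "/old-landing-page": ["/"],  # no other page links here
}

def click_depths(links: dict[str, list[str]], home: str = "/") -> dict[str, int]:
    """Breadth-first search from the homepage; depth is the minimum number of clicks."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths

depths = click_depths(LINKS)
# Pages the search never reached are orphans as seen from the homepage.
orphans = sorted(set(LINKS) - set(depths))
print(depths)
print(orphans)
```

Pages with a high depth, or pages that never appear in `depths`, are the ones a crawler following links is likely to find late or not at all.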

This is also where agency teams can protect the value of their content work. Strong pages do not help if they are buried behind weak navigation, inconsistent canonicals, broken rendering, or messy URL patterns. Technical fixes often become the prerequisite for every other SEO win, because they preserve equity across the site and reduce the chance that a ranking problem is actually an infrastructure problem in disguise.
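Inconsistent canonicals are one of the easier items on that list to check mechanically. The sketch below uses Python's standard `html.parser` to classify a page's canonical tags; the function name, the status labels, and the sample HTML are illustrative assumptions, and it looks only at `<link rel="canonical">` elements, not at HTTP headers.

```python
from html.parser import HTMLParser

class CanonicalParser(HTMLParser):
    """Collects every <link rel="canonical"> href found in a page."""

    def __init__(self) -> None:
        super().__init__()
        self.canonicals: list[str] = []

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        rel = (attr.get("rel") or "").lower().split()
        if tag == "link" and "canonical" in rel and attr.get("href"):
            self.canonicals.append(attr["href"])

def canonical_status(page_url: str, html: str) -> str:
    """Classify the canonical signal: missing, conflicting, self, or points elsewhere."""
    parser = CanonicalParser()
    parser.feed(html)
    found = set(parser.canonicals)
    if not found:
        return "missing"
    if len(found) > 1:
        return "conflicting"
    return "self" if found == {page_url} else "points elsewhere"

# Hypothetical page with two different canonical tags.
html = (
    '<head><link rel="canonical" href="https://example.com/a">'
    '<link rel="canonical" href="https://example.com/b"></head>'
)
print(canonical_status("https://example.com/a", html))
```

A "points elsewhere" result is not necessarily a problem; it simply flags pages where the declared canonical deserves a second look.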

The Google tools that expose what is happening

Google Search Console is one of the clearest ways to see how the search engine views a site. Its URL Inspection tool provides crawl, index, and serving information directly from Google’s index, which makes it far more useful than guessing why a page is missing, delayed, or underperforming.

Search Console also alerts owners when issues are detected, which is why it belongs in any ongoing technical workflow rather than only in crisis mode. If a page looks fine in a browser but is not being served the way it should be, URL Inspection can reveal whether the problem lives in crawl access, index status, or serving behavior. That distinction saves time and prevents teams from fixing the wrong layer.
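The crawl, index, and serving split can be sketched as a small triage function. The field names and values below (`robotsTxtState`, `pageFetchState`, `indexingState`, `verdict`) are assumed to follow the index-status result returned by the Search Console URL Inspection API and should be checked against the current API reference; the `triage` function and the sample data are hypothetical. Serving is treated here simply as the layer left over once crawl and index checks pass, which is a simplification.

```python
def triage(index_status: dict) -> str:
    """Name the layer that most likely needs attention first."""
    # Crawl access: robots.txt blocks the URL or the fetch itself failed.
    if index_status.get("robotsTxtState") == "DISALLOWED":
        return "crawl access"
    if index_status.get("pageFetchState", "SUCCESSFUL") != "SUCCESSFUL":
        return "crawl access"
    # Index status: the page was fetched but is excluded or not indexed.
    if index_status.get("indexingState", "INDEXING_ALLOWED") != "INDEXING_ALLOWED":
        return "index status"
    if index_status.get("verdict") != "PASS":
        return "index status"
    # Crawl and index look healthy, so serving is the remaining suspect.
    return "serving behavior"

# Hypothetical result, shaped like the assumed index-status object.
sample = {
    "verdict": "NEUTRAL",
    "robotsTxtState": "ALLOWED",
    "pageFetchState": "SUCCESSFUL",
    "indexingState": "BLOCKED_BY_META_TAG",
}
print(triage(sample))
```

Ordering the checks from crawl to index to serving mirrors the sequence in which a page moves through Google's systems, so the first failing layer is reported rather than a downstream symptom.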

Structured data is about understanding, not magic

Google Search Central also recommends structured data because it helps its systems understand content. That does not mean markup guarantees visibility, but it does create eligibility for rich results when the markup is valid and the page qualifies.

That nuance is important for agencies that treat schema as a shortcut. Structured data is best understood as a clarity tool, not a promise of enhanced appearance. It can help Google interpret what a page is about, which becomes more valuable when the same page also needs to be understood by AI systems that depend on clean signals and unambiguous page context.
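As an illustration of what "clean signals" look like in practice, the sketch below builds a JSON-LD `Article` snippet using schema.org vocabulary. The function name and sample values are hypothetical, and the property set is a bare minimum chosen for the example, not a statement of what Google requires for any particular rich result.

```python
import json

def article_jsonld(headline: str, author: str, date_published: str, url: str) -> str:
    """Return a <script> tag containing schema.org Article markup as JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "datePublished": date_published,
        "mainEntityOfPage": url,
    }
    # Escape "</" so a value can never close the script tag early;
    # "\/" is a valid JSON escape for "/".
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'

snippet = article_jsonld(
    "Technical SEO basics",        # hypothetical headline
    "Jane Doe",                    # hypothetical author
    "2025-05-01",
    "https://example.com/technical-seo",
)
print(snippet)
```

Generating the markup from the same data that renders the page helps keep the two in agreement, which matters because markup that contradicts visible content undermines the clarity it is meant to provide.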

When crawl budget actually matters

Google’s crawl-budget guidance adds an important scale check. Crawl-budget optimization is mainly for very large and frequently updated sites, not every website on the web. For sites that are not large or rapidly changing, keeping sitemaps updated and checking index coverage is usually enough.

That distinction keeps technical SEO grounded in business reality. Smaller sites often waste time chasing advanced crawl-efficiency tweaks when the bigger issue is basic discoverability, while very large sites can lose serious momentum if crawl resources are spent on low-value or repetitive URLs. The right strategy depends on scale, update frequency, and how quickly important pages need to be seen.
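For larger sites, one way to spot "repetitive URLs" is to group crawled URLs by a normalized form with content-neutral parameters removed. This is a minimal sketch using Python's standard `urllib.parse`; the `IGNORED_PARAMS` set is an assumption for a hypothetical site and would need to be adapted, since a parameter such as `sort` does change content on some sites.

```python
from collections import defaultdict
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumption: parameters that do not change page content on this hypothetical site.
IGNORED_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "sessionid", "sort"}

def normalize(url: str) -> str:
    """Drop ignored query parameters, sort the rest, and remove the fragment."""
    parts = urlsplit(url)
    kept = sorted(
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in IGNORED_PARAMS
    )
    return urlunsplit((parts.scheme, parts.netloc.lower(), parts.path, urlencode(kept), ""))

def duplicate_groups(urls: list[str]) -> dict[str, list[str]]:
    """Group URLs that collapse to the same normalized form."""
    groups = defaultdict(list)
    for url in urls:
        groups[normalize(url)].append(url)
    return {key: members for key, members in groups.items() if len(members) > 1}

crawled = [
    "https://example.com/shoes?sort=price",
    "https://example.com/shoes?utm_source=newsletter",
    "https://example.com/shoes",
    "https://example.com/hats?color=red",
]
print(duplicate_groups(crawled))
```

Each group with several members points to URL variants that may be competing for the same crawl attention as a single canonical page.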

Sitemaps and the Indexing API still have a role

Sitemaps remain one of the most practical ways to help Google discover and prioritize important pages, especially on fast-moving sites or sites with non-text content. They do not replace strong internal linking, but they reinforce it by giving crawlers a cleaner map of what matters most.
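A basic XML sitemap is simple enough to generate directly. The sketch below uses Python's standard `xml.etree.ElementTree` and the sitemaps.org 0.9 namespace; the function name and the example URLs and dates are hypothetical, and only the `loc` and `lastmod` elements are included.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"

def build_sitemap(pages: list[tuple[str, str]]) -> str:
    """Build a sitemap from (absolute URL, last-modified date as YYYY-MM-DD) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod
    # Prepend the declaration by hand so the output does not depend on locale.
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + ET.tostring(urlset, encoding="unicode")

sitemap_xml = build_sitemap([
    ("https://example.com/", "2025-05-01"),
    ("https://example.com/blog/technical-seo", "2025-05-02"),
])
print(sitemap_xml)
```

Regenerating the file whenever important pages are added or changed is what keeps the sitemap useful on fast-moving sites; a stale `lastmod` tells crawlers little.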

For short-lived pages, Google also recommends the Indexing API, though it currently supports only pages with job postings or livestream videos. That matters because some content types lose value quickly if they are discovered late. In those cases, faster submission and more deliberate technical handling can make the difference between being visible while the content is relevant and missing the window entirely.
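A notification to the Indexing API is a small JSON body sent to a publish endpoint. The sketch below only builds that body; the endpoint constant and the `URL_UPDATED` and `URL_DELETED` types reflect the Indexing API v3 as commonly documented and should be verified before use. The function name and job URL are hypothetical, and the authenticated HTTP request itself is deliberately left out.

```python
import json

# Endpoint as documented for the Indexing API v3; verify before use.
PUBLISH_ENDPOINT = "https://indexing.googleapis.com/v3/urlNotifications:publish"

def build_notification(url: str, removed: bool = False) -> str:
    """Return the JSON body for a publish call.

    Sending it requires an authorized request with service-account
    credentials, which is not shown here.
    """
    notification_type = "URL_DELETED" if removed else "URL_UPDATED"
    return json.dumps({"url": url, "type": notification_type})

# Hypothetical job posting that was just published, then later filled.
print(build_notification("https://example.com/jobs/seo-lead"))
print(build_notification("https://example.com/jobs/seo-lead", removed=True))
```

Sending the removal notice matters as much as the initial one, since an expired job posting that lingers in results is its own kind of visibility problem.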

The agency lesson: technical SEO protects revenue

This is why technical SEO deserves a different kind of attention inside agencies. It is not just a checklist item for developers or a cleanup phase after content launches. It is the layer that determines whether client assets can be crawled, indexed, understood, and reused at scale.

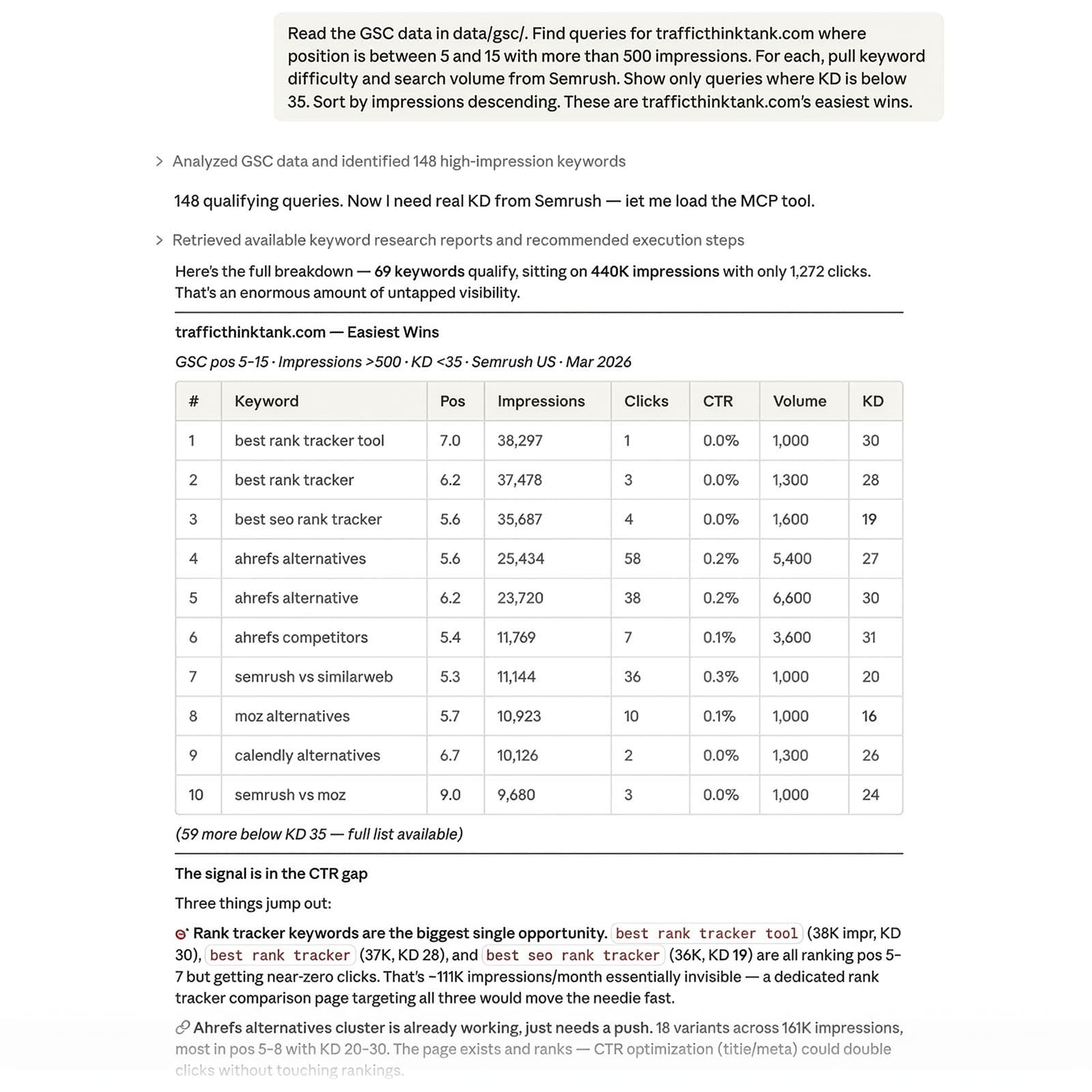

Search Engine Land’s May 2025 survey of 2,302 U.S. adults and 810 marketers underscores how much AI-related search behavior is already shaping discovery habits. As users move between classic search results and AI-generated answers, the same technical foundations keep showing up underneath both experiences. That makes site health more than a maintenance issue. It becomes a revenue safeguard.

- Clean architecture helps crawlers reach the right pages faster.

- Updated sitemaps support discovery on fast-moving sites.

- URL Inspection shows how Google sees crawl, index, and serving status.

- Structured data improves understanding; it makes a page eligible for rich results but does not guarantee them.

- The Indexing API is built for short-lived pages that need speed.

Technical SEO now sits at the point where content, infrastructure, and machine understanding meet. Agencies that treat it as the base layer, not the afterthought, are the ones most likely to preserve search performance and AI visibility as both systems keep evolving.
