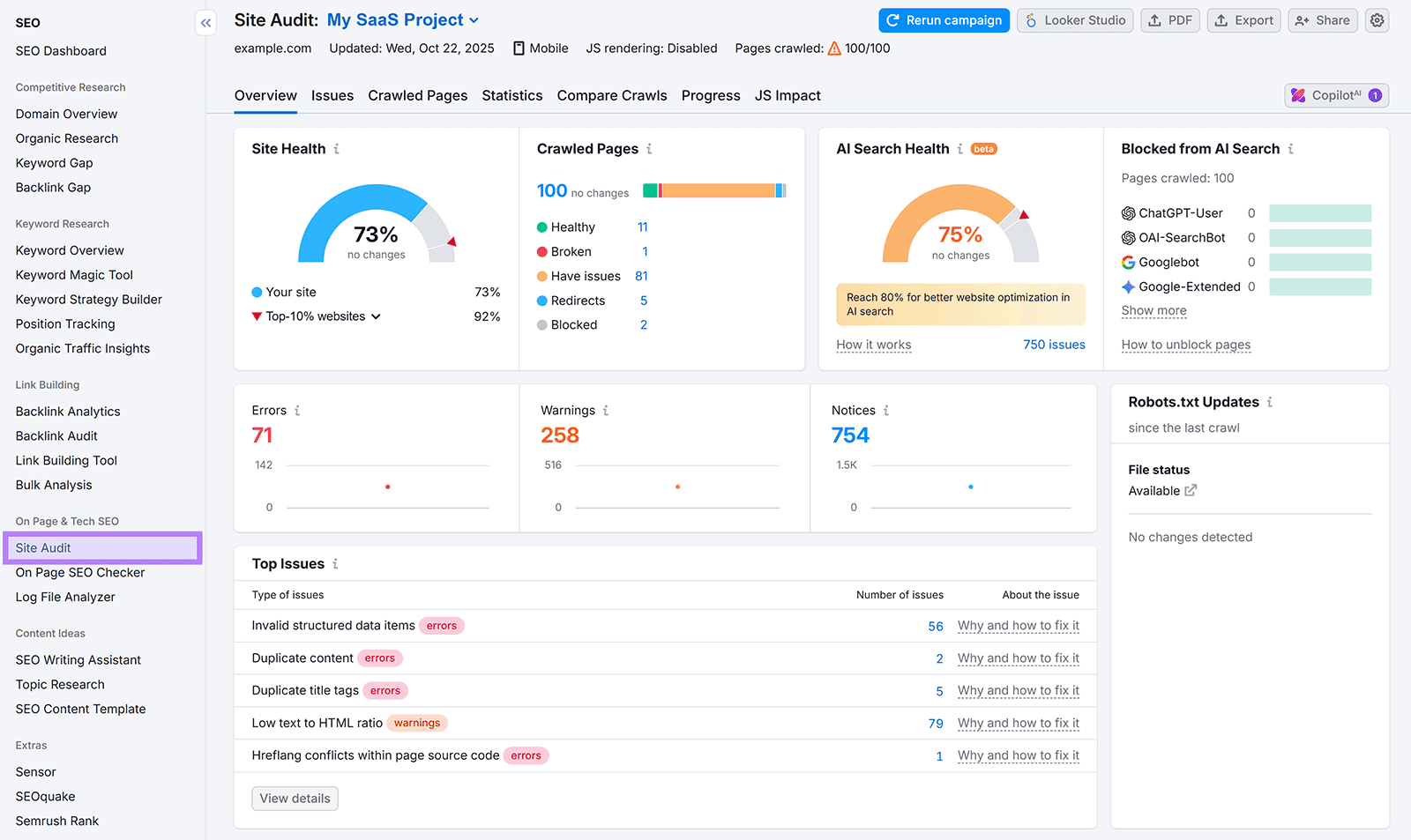

AI crawlers surge across 858,457 sites, driving rising LLM referrals

Duda’s scan of 858,457 sites and 69 million visits showed AI crawlers are fetching live content at scale, while LLM referrals jumped 72.7%.

AI crawlers are moving past novelty and into traffic math. Duda’s analysis of 858,457 websites and 69 million AI crawler visits showed LLM referrals climbing from 93,484 to 161,469, a 72.7% jump, with ChatGPT driving most of the growth.

The clearest pattern in the data was not just volume, but behavior. User fetch, the real-time retrieval used to answer prompts, accounted for 56.9% of all crawler activity, compared with 28.8% for training and 14.3% for discovery. ChatGPT alone was tied to about 39.8 million user-fetch visits, which means AI systems are not simply indexing pages for later use. They are pulling live material when people ask questions.

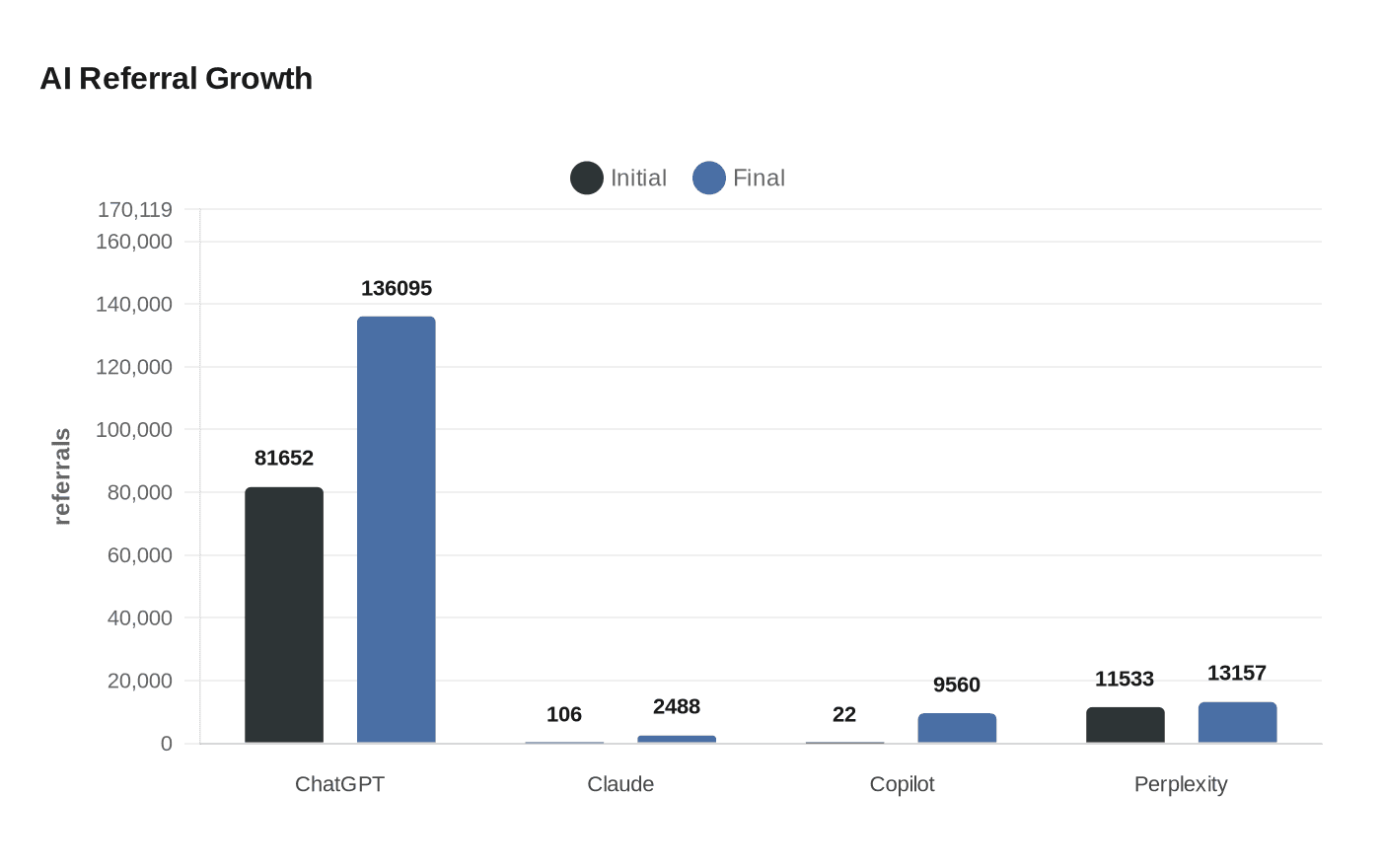
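For a site owner who wants to see the same split in their own logs, a rough sketch follows. It assumes the three OpenAI user-agent tokens (`GPTBot`, `ChatGPT-User`, `OAI-SearchBot`) line up with the training, user-fetch, and discovery buckets; that mapping is an illustration, not Duda's published methodology, and the sample user-agent strings are simplified and hypothetical.

```python
from collections import Counter

# Assumed mapping from user-agent token to crawl purpose. Fitting these
# tokens into the article's three buckets is an assumption of this sketch.
PURPOSE_BY_TOKEN = {
    "GPTBot": "training",
    "ChatGPT-User": "user_fetch",
    "OAI-SearchBot": "discovery",
}


def classify(user_agent: str) -> str:
    """Return the assumed purpose for a user-agent string, or 'other'."""
    ua = user_agent.lower()
    for token, purpose in PURPOSE_BY_TOKEN.items():
        if token.lower() in ua:
            return purpose
    return "other"


def purpose_shares(user_agents):
    """Return each purpose's share of requests as a percentage."""
    counts = Counter(classify(ua) for ua in user_agents)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {purpose: round(100 * n / total, 1) for purpose, n in counts.items()}


# Simplified, hypothetical user-agent strings standing in for log entries.
sample = ["ChatGPT-User/1.0", "ChatGPT-User/1.0", "GPTBot/1.0", "Mozilla/5.0"]
print(purpose_shares(sample))
```

In practice the input would come from the user-agent field of a server access log, and the token table would need entries for other vendors' crawlers.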

That shift showed up in the referral numbers, too. ChatGPT referrals rose from 81,652 to 136,095, up 66.7%. Claude jumped from 106 to 2,488, while Copilot surged from 22 to 9,560. Perplexity moved from 11,533 to 13,157, a 14.1% increase. A separate April 7 analysis from Search Engine Journal found ChatGPT-User making 3.6 times more requests than Googlebot in a 55-day sample across 69 websites. It is a stark sign that AI-assisted retrieval is already competing with traditional crawl behavior in some environments.
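The growth figures can be checked with a few lines of arithmetic. The sketch below uses only the before and after referral counts quoted in this article; the function name `percent_change` and the dictionary layout are illustrative choices.

```python
def percent_change(before: float, after: float) -> float:
    """Percentage change from `before` to `after`."""
    if before == 0:
        raise ValueError("percentage change is undefined when the baseline is zero")
    return (after - before) / before * 100


# Referral counts as quoted in the article: (before, after).
referrals = {
    "All LLMs": (93_484, 161_469),
    "ChatGPT": (81_652, 136_095),
    "Claude": (106, 2_488),
    "Copilot": (22, 9_560),
    "Perplexity": (11_533, 13_157),
}

for source, (before, after) in referrals.items():
    print(f"{source}: {percent_change(before, after):+.1f}%")
```

The percentages for Claude and Copilot come out very large because both start from baselines of roughly 100 referrals or fewer, which is worth keeping in mind when comparing them with ChatGPT's growth.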

For site owners, the practical lesson is blunt: content has to be easy for machines to read, segment, and reuse in the moment. Duda said AI-crawled sites generated 320% more human traffic, 270% more form submissions, and 250% more click-to-call events than non-crawled sites. It also found that sites with blogs, local schema, Google Business Profile synchronization, and dynamic pages were crawled 400% more than the median Duda site. Each blog post was associated with a 7% increase in crawler visits, and each additional page was associated with a 4% increase.

The technical side matters just as much. OpenAI’s documentation says GPTBot and OAI-SearchBot can be managed separately through robots.txt, and Anthropic says its crawlers are used for model development, web search, and retrieval at users’ direction. Duda has also started auto-generating llms.txt for published sites, a machine-readable guide meant to tell tools like ChatGPT, Claude, and Perplexity what a site is about in structured language.
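As an illustration of managing the two OpenAI crawlers separately, the sketch below defines a sample robots.txt and checks it with Python's standard-library parser. The policy shown (block `GPTBot`, allow `OAI-SearchBot`) and the `example.com` URL are assumptions chosen for the example, not a recommendation from OpenAI or Duda.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical policy: opt out of GPTBot while staying visible to
# OAI-SearchBot and to every other crawler.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: OAI-SearchBot
Allow: /

User-agent: *
Allow: /
"""


def allowed(agent: str, url: str = "https://example.com/blog/post") -> bool:
    """Return True if the sample robots.txt lets `agent` fetch `url`."""
    parser = RobotFileParser()
    parser.parse(ROBOTS_TXT.splitlines())
    return parser.can_fetch(agent, url)


for agent in ("GPTBot", "OAI-SearchBot", "Googlebot"):
    print(agent, "allowed" if allowed(agent) else "blocked")
```

Robots.txt is advisory, so a check like this confirms what the file says rather than what any given crawler actually does.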

The pattern across the data is hard to miss: AI visibility now depends on accessibility, structure, and freshness, not just ranking. The sites that are easiest to crawl are becoming the sites that are easiest to cite, surface, and send traffic back to.
