AI keyword research works only when powered by real search data

AI keyword research only pays off when the model can see real search data. Without that, it is guessing demand, intent, and difficulty instead of measuring them.

The chatbot is not the strategy

AI keyword research gets oversold when people treat prompt-writing like magic. A generic chatbot can brainstorm, but it does not know what people actually search, how often they search it, or how hard each term is to rank for. That is the whole difference between a clever answer and a useful one: if the model cannot see live search data, it is fabricating demand with confidence.

Ahrefs’ case is blunt on this point. Its workflow only becomes valuable when the AI assistant is connected to a real SEO database, either through Ahrefs’ own Agent A or through a direct MCP connection from assistants such as Claude. In that setup, AI stops being a talking head and starts acting like a research layer sitting on top of actual keyword metrics.

Why a data layer changes the job

The practical gain is not just faster brainstorming. Once the model can query live Ahrefs data, it can handle the parts of keyword research that usually eat up a morning: clustering related terms, classifying search intent, and working through repetitive filtering and analysis. That means the model is no longer guessing whether an idea has volume or whether a page should target informational or commercial intent.
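
To make the clustering and intent step concrete, here is a minimal Python sketch of rule-based intent labeling and head-term clustering. It is an assumption-laden stand-in. A connected assistant would reason over live Ahrefs data rather than fixed word lists. The modifier sets, the `classify_intent` and `cluster_by_head_term` helpers, and the sample keywords are all hypothetical.

```python
from collections import defaultdict

# HYPOTHETICAL modifier lists for illustration; not an Ahrefs taxonomy.
COMMERCIAL_MODIFIERS = {"best", "top", "vs", "review", "reviews", "pricing", "buy"}
INFORMATIONAL_MODIFIERS = {"how", "what", "why", "guide", "tutorial", "examples"}


def classify_intent(keyword: str) -> str:
    """Label a keyword by its modifier words; commercial modifiers take precedence."""
    words = set(keyword.lower().split())
    if words & COMMERCIAL_MODIFIERS:
        return "commercial"
    if words & INFORMATIONAL_MODIFIERS:
        return "informational"
    return "unclassified"


def cluster_by_head_term(keywords: list[str], head_terms: list[str]) -> dict[str, list[str]]:
    """Group each keyword under the first head term it contains, else under 'other'."""
    clusters: dict[str, list[str]] = defaultdict(list)
    for kw in keywords:
        lowered = kw.lower()
        head = next((h for h in head_terms if h in lowered), "other")
        clusters[head].append(kw)
    return dict(clusters)


keywords = [
    "best keyword research tools",
    "how to do keyword research",
    "seo tools vs spreadsheets",
    "keyword difficulty",
]
for kw in keywords:
    print(kw, "->", classify_intent(kw))
print(cluster_by_head_term(keywords, ["keyword research", "keyword difficulty"]))
```

Rules like these break on anything ambiguous. That fragility is part of why handing this step to a model that can see real metrics is attractive. The sketch only shows the shape of the output.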

Ahrefs’ own MCP use cases go beyond idea generation. The connected workflow can surface keyword metrics such as search volume, traffic potential, growth trend, and keyword difficulty without forcing you to stitch together separate tools by hand. That matters because keyword research is not just about finding phrases. It is about knowing which phrases justify a dedicated piece of content, which deserve only a support article, and which are too competitive to chase right now.
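
The decision described above can be sketched as a small triage function: does a phrase earn its own page, a support article, or a pass? This assumes metrics shaped like the ones listed. The `KeywordMetrics` fields, the thresholds, and the sample numbers are hypothetical and made up for illustration. They are not values returned by Ahrefs.

```python
from dataclasses import dataclass


@dataclass
class KeywordMetrics:
    keyword: str
    volume: int             # monthly search volume
    traffic_potential: int  # estimated organic traffic of the top-ranking page
    difficulty: int         # keyword difficulty score, 0-100
    growth: float           # HYPOTHETICAL trend field: fractional change in volume


# HYPOTHETICAL default thresholds; a real plan would tune them per site.
def triage(
    m: KeywordMetrics,
    max_difficulty: int = 40,
    pillar_traffic: int = 1000,
    min_volume: int = 100,
    rising: float = 0.5,
) -> str:
    """Decide what, if anything, a keyword deserves."""
    if m.difficulty > max_difficulty:
        return "too competitive for now"
    if m.traffic_potential >= pillar_traffic:
        return "standalone article"
    if m.volume >= min_volume or m.growth >= rising:
        return "support article"
    return "skip"


# Made-up sample numbers for illustration, not real Ahrefs data.
candidates = [
    KeywordMetrics("keyword research", 20000, 45000, 85, 0.1),
    KeywordMetrics("keyword clustering", 900, 3200, 22, 0.3),
    KeywordMetrics("keyword clustering in sheets", 150, 400, 10, 0.0),
    KeywordMetrics("keyword intent template", 30, 60, 5, 0.0),
]
for c in candidates:
    print(f"{c.keyword}: {triage(c)}")
```

The function checks difficulty first, so a term that is too competitive is set aside regardless of its volume. A real plan would set the thresholds from the site's own authority.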

Agent A, Claude, and the new workflow

Ahrefs frames the workflow around two practical paths. One is its AI marketing assistant, Agent A. The other is a similar setup in a third-party assistant such as Claude, connected through MCP. In both cases, the point is the same: the AI should be able to ask real questions of a real database instead of free-associating its way through a content brief.

That distinction is why the newer MCP-based workflow matters. Ahrefs says its remote MCP server launched in October 2025 and is hosted by Ahrefs, which means you can connect ChatGPT, Claude, or Copilot to live Ahrefs data without self-hosting anything. Ahrefs’ documentation for Claude Desktop and Claude on the web also shows MCP queries returning live Ahrefs data in table form, which is the kind of output a researcher can put straight to work.

What MCP changes inside the conversation

MCP, or Model Context Protocol, is the infrastructure that makes this shift possible. Anthropic introduced it on November 25, 2024, as an open standard for connecting AI assistants to the systems where data lives. Claude’s connectors are powered by MCP, which is why this is no longer a theoretical integration story. It is a live product direction.

In practice, that means the AI is no longer working from memory alone. It can reach into the systems where your SEO data sits, pull back current information, and then reason on top of it. That is a very different workflow from asking a chatbot for “SEO ideas” and hoping it invents something useful. The prompt is still the front end, but the data connection is where the real work happens.
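
At the wire level, MCP messages are JSON-RPC 2.0. A client typically lists a server's tools with `tools/list` and invokes one with `tools/call`. The sketch below only builds those two requests. It omits the `initialize` handshake, the transport, and authentication. The tool name `keyword_overview` and its arguments are hypothetical placeholders, not Ahrefs’ actual tool schema.

```python
import json
from itertools import count
from typing import Optional

_request_ids = count(1)  # request ids should not be reused within a session


def jsonrpc_request(method: str, params: Optional[dict] = None) -> str:
    """Serialize a JSON-RPC 2.0 request of the kind MCP uses."""
    message = {"jsonrpc": "2.0", "id": next(_request_ids), "method": method}
    if params is not None:
        message["params"] = params
    return json.dumps(message)


# 1. Discover which tools the server exposes.
list_tools = jsonrpc_request("tools/list")

# 2. Invoke one tool. HYPOTHETICAL tool name and arguments: the server's
#    tools/list response would give the real names and input schema.
call_tool = jsonrpc_request(
    "tools/call",
    {
        "name": "keyword_overview",
        "arguments": {"keywords": ["keyword research"], "country": "us"},
    },
)

print(list_tools)
print(call_tool)
```

In practice the assistant app sends these messages for you. The point is that the model gets a structured response from a live tool instead of answering from memory.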

When AI speeds research up, and when it just makes things up

The easiest rule is simple: if the task depends on live demand signals, AI only helps when it is connected to search data. If you are asking for keyword clusters, intent grouping, traffic potential, keyword difficulty, or competitors around a topic, the model needs the database. Without that layer, it can still write well, but it cannot know whether the topic is worth targeting.

That is also why Ahrefs’ warning lands so well. A standalone chatbot can sound persuasive while inventing a keyword opportunity that does not exist. A data-connected workflow can still move fast, but now it is accelerating analysis instead of hallucinating market demand. For content planning, that is the line that matters.

Why this is bigger than keyword research

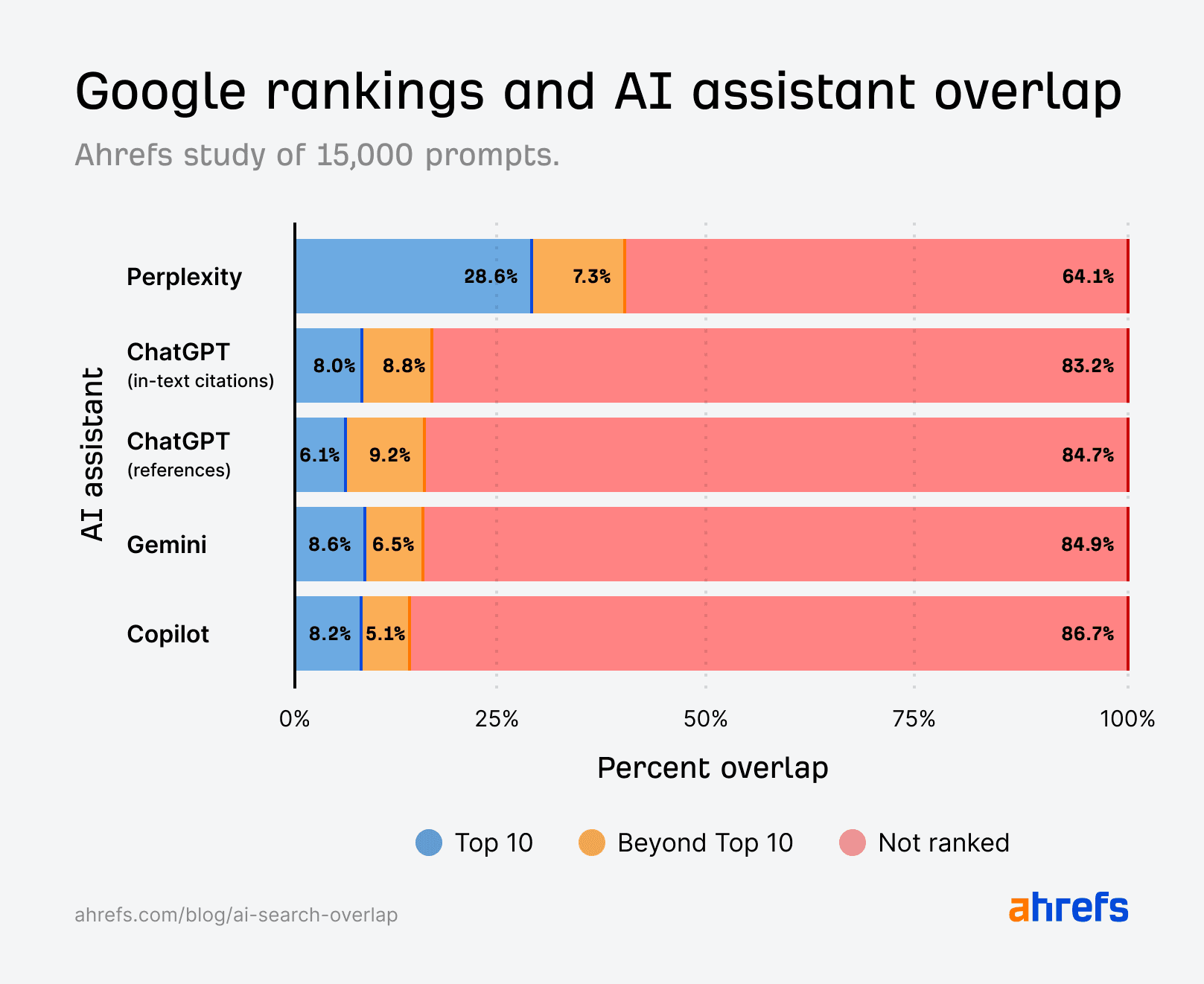

This shift is not happening in a vacuum. Ahrefs defines AI visibility as discoverability across ChatGPT, Claude, Google AI Overviews, Google AI Mode, and Perplexity. In other words, the keyword workflow sits upstream of a much larger contest over which sources AI systems surface, summarize, and cite.

Ahrefs also sorts the ways AI gets information into three layers: training data, retrieval systems, and live tools such as APIs and MCP servers. That framework explains why prompt craft alone is not enough. If your data layer is weak, the model may still answer, but it will answer from incomplete context, stale assumptions, or whatever it can infer from training data rather than current search reality.

Google’s AI Mode makes the same point from a different angle. Google says it uses query fan-out, breaking a question into subtopics and running many searches simultaneously. That is a clue that old-school single-keyword thinking is getting less useful. Search systems are now operating across clusters of intent, not just isolated head terms.
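
Query fan-out can be illustrated with a toy version: expand one question into narrower subqueries and run them concurrently. This is a conceptual sketch, not Google’s implementation. The hard-coded subtopics and the `search` stub are hypothetical, and in a real system a model would choose the subtopics.

```python
import asyncio


async def search(query: str) -> list[str]:
    """HYPOTHETICAL stand-in for a real search backend."""
    await asyncio.sleep(0.01)  # simulate network latency
    return [f"result for {query!r}"]


def fan_out(question: str, subtopics: list[str]) -> list[str]:
    """Expand one question into several narrower queries (hard-coded here)."""
    return [f"{question} {subtopic}" for subtopic in subtopics]


async def gather_results(question: str, subtopics: list[str]) -> dict[str, list[str]]:
    queries = fan_out(question, subtopics)
    # Run every sub-search concurrently; gather returns results in query order.
    results = await asyncio.gather(*(search(q) for q in queries))
    return dict(zip(queries, results))


results = asyncio.run(
    gather_results("keyword research tools", ["pricing", "for beginners", "with ai"])
)
for query, hits in results.items():
    print(query, hits)
```

The output is keyed by subquery, and that carries over to keyword planning: coverage is judged across the whole cluster, not on the head term alone.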

Legacy SEO still feeds the new stack

The other detail that should keep everyone honest is how much classic SEO still matters. Ahrefs says it has nearly 8,000 citations across Google’s AI experiences and LLMs with zero AI-specific optimization. That is a strong reminder that inherited visibility still exists: strong organic presence can flow into AI discovery even before anyone builds a dedicated AI visibility program.

So this is not a replacement story. It is an extension of the old game, with more surfaces to track and more ways to measure success. Teams now need to watch mentions, citations, and share of voice across AI systems, while also keeping an eye on the upstream work that shapes those outcomes: topic selection, keyword clustering, and the quality of the underlying data.
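
Share of voice across AI systems can be computed in several ways. This sketch assumes one simple version: the fraction of tracked citations on each platform that point at your domain. The `share_of_voice` helper and the sample citations are hypothetical. Commercial tools may weight mentions, positions, or prompts differently.

```python
from collections import Counter


def share_of_voice(citations: list[tuple[str, str]], domain: str) -> dict[str, float]:
    """Fraction of tracked citations, per AI platform, that point at `domain`.

    `citations` holds (platform, cited_domain) pairs gathered from AI answers
    to the prompts you track.
    """
    totals = Counter(platform for platform, _ in citations)
    ours = Counter(platform for platform, cited in citations if cited == domain)
    # Counter returns 0 for platforms where the domain was never cited.
    return {platform: ours[platform] / totals[platform] for platform in totals}


# Made-up sample data for illustration only.
sample = [
    ("ChatGPT", "example.com"),
    ("ChatGPT", "example.org"),
    ("Perplexity", "example.org"),
    ("Perplexity", "example.org"),
    ("Perplexity", "example.net"),
    ("Perplexity", "example.com"),
]
print(share_of_voice(sample, "example.com"))  # {'ChatGPT': 0.5, 'Perplexity': 0.25}
```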

The practical takeaway

The cleanest way to think about AI keyword research is this: the model is useful when it is wired into trustworthy search data, and noisy when it is not. Agent A, Ahrefs’ MCP workflow, and connected assistants like Claude all point in the same direction, which is a move away from generic chatbot output and toward tool-connected research.

That is the reality check. Prompts can speed up the work, but on their own they do not create demand, measure intent, or estimate ranking difficulty. The leverage comes from connecting AI to live search data, because only then does keyword research become scalable without becoming fiction.
