ChatGPT Search citation diversity drops after OpenAI model switch

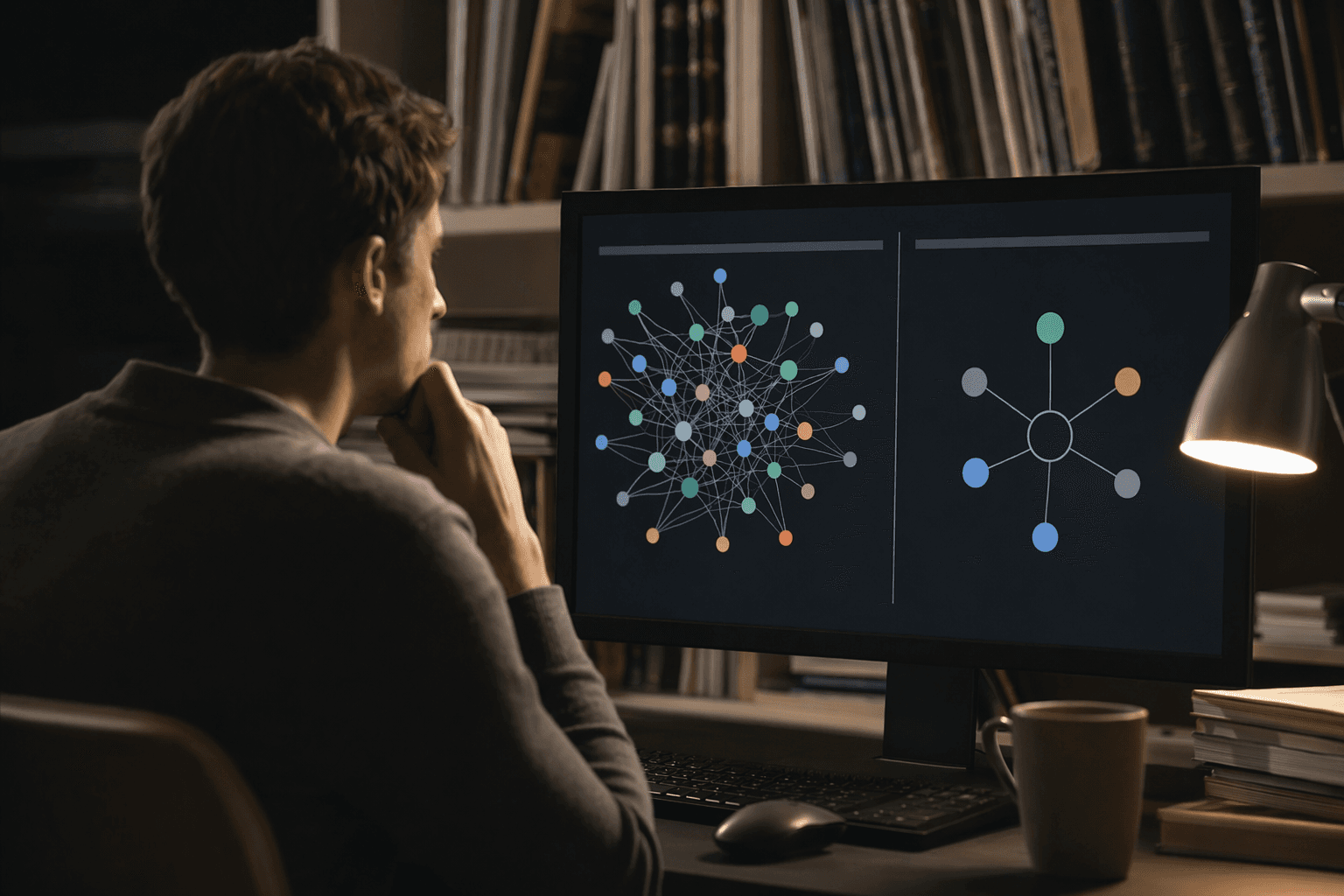

ChatGPT Search rewrites prompts into tighter fan-out searches, and OpenAI’s March 4 default-model switch visibly shrank the pool of sites it cites.

The answer is built from many queries, not one

ChatGPT Search does not just retrieve a page and stop. OpenAI says it rewrites your prompt into one or more targeted queries, sends those to search providers, and then returns timely answers with links to relevant web sources. OpenAI’s Academy materials and deep research docs point the same way: search and deep research are now core tools for pulling current information from the public web, and deep research can go further by searching specific sites for multi-step tasks.

That is the mechanics-not-magic part journalists and marketers need to internalize. The user’s first keyword is only the starting point; the system is already rewriting that query into related angles, then deciding which sources survive into the final answer. If you only optimize for one phrase, you are optimizing for the wrong machine.
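
To make the idea concrete, here is a toy sketch of fan-out in Python. OpenAI has not published how ChatGPT Search rewrites prompts, so the angle templates and the `fan_out` helper are hypothetical. The point is only that one topic becomes several distinct queries, and a page tuned for the first one has no guaranteed claim on the rest.

```python
# Toy model of query fan-out. The templates are invented for illustration;
# they are NOT how ChatGPT Search actually rewrites prompts.
DEFAULT_ANGLES = (
    "{topic}",
    "best {topic}",
    "{topic} pricing",
    "{topic} alternatives",
)


def fan_out(topic: str, angles: tuple[str, ...] = DEFAULT_ANGLES) -> list[str]:
    """Expand one topic into ordered, de-duplicated sub-queries."""
    topic = topic.strip()
    queries: list[str] = []
    for template in angles:
        query = template.format(topic=topic)
        if query not in queries:  # skip duplicate rewrites
            queries.append(query)
    return queries


for q in fan_out("project management software"):
    print(q)
```

A page optimized only for "project management software" competes for the first query but is not automatically relevant to the pricing or alternatives angles.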

The March 4 switch narrowed the citation surface

The core dataset here is blunt. The study’s authors tracked 400 prompts a day for 14 weeks using monitoring data from Meteoria. After OpenAI’s March 4 default-model switch, the average number of unique websites cited per response fell from 19 to 15, and unique URLs per response fell from 24 to 19. Both are declines of roughly 21 percent, so a typical answer now gives visibility to about a fifth fewer sites.
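
The percentage is easy to check from the reported averages. A minimal Python sketch that uses only the four figures above:

```python
def pct_decline(before: float, after: float) -> float:
    """Return the percentage drop from `before` to `after`."""
    if before == 0:
        raise ValueError("baseline must be non-zero")
    return (before - after) / before * 100


# Average unique websites and URLs cited per response, before and after March 4.
REPORTED = [("unique websites", 19, 15), ("unique URLs", 24, 19)]

for label, before, after in REPORTED:
    print(f"{label}: {before} -> {after}, down {pct_decline(before, after):.1f}%")
```

The two results are 21.1 percent and 20.8 percent, so the site-level and URL-level metrics shrank almost in proportion.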

That matters because ChatGPT is no longer a niche surface. OpenAI says ChatGPT has more than 900 million weekly active users and more than 50 million consumer subscribers, so cutting citation diversity by roughly a fifth can change brand discovery at scale. Fewer sites inside the answer means fewer chances for a publisher to be seen, linked, and remembered.

Why fan-out matters more than rank tracking

The real shift is not just that the citation count dropped. The same study reports that GPT-5.4 Thinking appears to spread each response across more than 10 fan-out searches and to use site: operators to restrict searches to trusted domains. In other words, ChatGPT is hunting through a narrower, more selective set of sources, which makes trust signals, topical coverage, and source fit more important than any single keyword ranking.
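
Here is a rough sketch of what site:-restricted fan-out could look like. The study does not say which domains ChatGPT treats as trusted or how it pairs them with sub-queries. The domain allowlist (placeholder `example-*` hosts) and the every-query-times-every-domain pairing below are assumptions made for illustration.

```python
from itertools import product

# Hypothetical allowlist; the real set of "trusted" domains is not public.
TRUSTED_DOMAINS = [
    "example-reviews.com",
    "example-analyst.org",
    "example-news.com",
    "example-docs.net",
]


def site_restricted(sub_queries: list[str], domains: list[str]) -> list[str]:
    """Pair each sub-query with each domain as a site:-restricted search."""
    return [f"{q} site:{d}" for q, d in product(sub_queries, domains)]


sub_queries = ["crm software pricing", "crm software alternatives", "best crm software"]
searches = site_restricted(sub_queries, TRUSTED_DOMAINS)
print(len(searches))  # 12
print(searches[0])    # crm software pricing site:example-reviews.com
```

Three angles across four domains already yields twelve searches. Even so, a page on any domain outside the allowlist can never be returned, however well it matches the query text.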

That may also explain why some pages never make it back into the answer even when they are technically relevant. Independent server-log analysis shows ChatGPT-User request volume settling at a lower level after the model switch, which suggests some pages are simply not being fetched as often as before. If ChatGPT never revisits a page, the citation pipeline is cut off before the answer is even assembled.
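
Publishers can look for the same pattern in their own logs. The sketch below counts daily requests whose user agent contains `ChatGPT-User`. It assumes Apache/Nginx combined log format, where the user agent is the last quoted field, and the sample lines are made up. Comparing average daily counts before and after March 4 is one way to see whether fetch volume on your site has settled lower.

```python
import re
from collections import Counter
from datetime import date, datetime

# Day part of a combined-log timestamp, e.g. "[01/Feb/2025:10:00:00 +0000]".
DAY_RE = re.compile(r"\[(\d{2}/[A-Za-z]{3}/\d{4}):")
# In combined log format the user agent is the last double-quoted field.
UA_RE = re.compile(r'"([^"]*)"\s*$')


def daily_agent_hits(lines, agent: str = "ChatGPT-User") -> dict[date, int]:
    """Count requests per day whose user-agent string contains `agent`."""
    counts = Counter()
    for line in lines:
        ua = UA_RE.search(line)
        day = DAY_RE.search(line)
        if ua and day and agent in ua.group(1):
            counts[datetime.strptime(day.group(1), "%d/%b/%Y").date()] += 1
    return dict(sorted(counts.items()))


BOT_UA = "Mozilla/5.0; compatible; ChatGPT-User/1.0; +https://openai.com/bot"
SAMPLE_LOG = [  # fabricated lines for illustration only
    f'203.0.113.5 - - [01/Feb/2025:10:00:00 +0000] "GET /guide HTTP/1.1" 200 512 "-" "{BOT_UA}"',
    f'203.0.113.5 - - [01/Feb/2025:11:00:00 +0000] "GET /faq HTTP/1.1" 200 256 "-" "{BOT_UA}"',
    '198.51.100.7 - - [02/Feb/2025:09:30:00 +0000] "GET /guide HTTP/1.1" 200 512 "-" "Mozilla/5.0 (X11; Linux x86_64)"',
    f'203.0.113.9 - - [02/Feb/2025:12:00:00 +0000] "GET /guide HTTP/1.1" 200 512 "-" "{BOT_UA}"',
]

print(daily_agent_hits(SAMPLE_LOG))
```

Matching on a substring of the user agent is deliberately simple. For production use you would also verify that requests come from OpenAI's published IP ranges, because user-agent strings can be spoofed.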

OpenAI still frames search as a discovery product

OpenAI’s public positioning has not changed in the broad sense: SearchGPT was introduced as a way to connect people with original, high-quality content from the web and help them discover publishers and websites. OpenAI also says SearchGPT is separate from generative model training, so a site can still surface in search even if it opts out of training. That is the official promise, and it lines up with the idea that search visibility is now a discovery and trust problem, not just a crawl problem.
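
The separation between search and training shows up in robots.txt. OpenAI documents distinct user agents, `OAI-SearchBot` for search and `GPTBot` for training, so a site can allow one and block the other. The sketch below uses Python's standard `urllib.robotparser` to confirm what an example robots.txt permits. The file contents and URL are illustrative, not a recommended policy.

```python
from urllib.robotparser import RobotFileParser

# Illustrative robots.txt: allow OpenAI's search crawler, block its training crawler.
ROBOTS_TXT = """\
User-agent: OAI-SearchBot
Allow: /

User-agent: GPTBot
Disallow: /
"""


def crawler_access(robots_txt: str, agents: list[str], url: str) -> dict[str, bool]:
    """Report whether each user agent may fetch `url` under `robots_txt`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


access = crawler_access(ROBOTS_TXT, ["OAI-SearchBot", "GPTBot"], "https://example.com/article")
print(access)  # {'OAI-SearchBot': True, 'GPTBot': False}
```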

There is also a referral layer publishers can actually measure. OpenAI’s publishers FAQ says sites that allow OAI-SearchBot can track referral traffic in their analytics tools, and ChatGPT automatically adds utm_source=chatgpt.com to referral URLs. So the system is not only about citation counts inside the answer; it also leaves a traffic trail when publishers are set up to receive it.
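
If you have raw landing-page URLs from server logs or an analytics export, the utm_source=chatgpt.com parameter is enough to separate ChatGPT referrals from other traffic. Here is a minimal sketch using Python's standard `urllib.parse`. The URLs are placeholders.

```python
from urllib.parse import parse_qs, urlparse


def is_chatgpt_referral(landing_url: str) -> bool:
    """True if the landing URL carries utm_source=chatgpt.com."""
    params = parse_qs(urlparse(landing_url).query)
    return "chatgpt.com" in params.get("utm_source", [])


landing_pages = [
    "https://example.com/guide?utm_source=chatgpt.com",
    "https://example.com/guide?utm_source=newsletter",
    "https://example.com/pricing?ref=nav&utm_source=chatgpt.com",
    "https://example.com/about",
]
chatgpt_hits = [u for u in landing_pages if is_chatgpt_referral(u)]
print(len(chatgpt_hits))  # 2
```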

What this means for AI visibility strategy

The obvious mistake is to treat ChatGPT Search like old-school rank tracking with a fresh coat of paint. The better read is that it behaves more like a retrieval and synthesis system that rewards coverage breadth, corroboration, and entity adjacency. The same study found that broad topical coverage and cluster-based content models outperform the old one-keyword, one-page approach, which is exactly what you would expect if the model rewrites prompts into related sub-queries before choosing sources.

In practice, that means building for the questions around the question. If the main term is only one slice of the user’s intent, the page also needs the surrounding entities, variants, and supporting facts that a fan-out search might chase next. The goal is not to stuff more keywords onto a page but to make the page credible enough, and complete enough, to be selected across several related retrieval steps.

- Cover the primary intent and the adjacent intents on the same topic, so a rewritten query still lands on something useful.

- Make key entities explicit, including product names, institutions, dates, standards, and companion terms that might appear in follow-up queries.

- Build content that other trusted sources can corroborate, because the citation data suggest ChatGPT is drawing on a smaller, trust-weighted pool.
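
One rough way to audit a page against the checklist above is to list the entities a fan-out search might chase and check which ones the page never mentions. The sketch below does a simple case-insensitive, whole-word match. The entity list and page text are hypothetical, and passing this check says nothing definitive about whether ChatGPT will cite the page.

```python
import re


def entity_coverage(page_text: str, entities: list[str]) -> tuple[float, list[str]]:
    """Return the share of expected entities mentioned on the page, plus the missing ones.

    Matching is case-insensitive and whole-word; it is a crude proxy,
    not a model of how ChatGPT Search selects sources.
    """
    text = page_text.lower()
    missing = [
        e for e in entities
        if not re.search(r"\b" + re.escape(e.lower()) + r"\b", text)
    ]
    if not entities:
        return 0.0, []
    return (len(entities) - len(missing)) / len(entities), missing


page = "This guide compares plan pricing, explains GDPR obligations, and lists the 2024 changes."
expected = ["pricing", "GDPR", "ISO 27001", "2024", "data residency"]  # hypothetical
score, gaps = entity_coverage(page, expected)
print(score, gaps)
```

The missing entities become a to-do list: each one is a follow-up query the page currently cannot answer.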

The bottom line for journalists and marketers

This is the part that should change how you brief your team. ChatGPT Search is getting more selective, not more generous, and the March 4 model switch made that visible in the citation counts. The brands that win will be not just findable but trusted: the sources ChatGPT keeps reaching for across its fan-out searches and keeps citing when the answer is assembled.