HubSpot says AEO metrics must track visibility, citations, and accuracy

Clicks are no longer the whole story in AI search. HubSpot’s answer is a new scoreboard built on visibility, citations, and accuracy.

The scoreboard has changed

Answer engines do not behave like classic search, and that is the whole measurement problem in one sentence. They are probabilistic, they do not expose fixed rankings, and they do not guarantee predictable clicks, so the old habit of judging success by traffic alone is already too small for the job.

HubSpot’s message is blunt: if AI systems like ChatGPT, Perplexity, and Copilot are where discovery happens, then marketers need metrics that show whether a brand is actually present inside the answer. That means tracking how often it appears, how prominently it appears, and whether it appears accurately, instead of pretending a single visits report can explain everything.

Why clicks now give false confidence

The trap is easy to fall into. A brand can show up constantly in an answer engine and still send very little traffic, or it can earn a citation on a few high-value prompts without ever appearing consistently enough to build durable visibility. In both cases, the click chart misrepresents how present the brand actually is inside the answers.

That is why legacy SEO KPIs can be so misleading in this environment. Rankings were already a blunt instrument, but AI answer engines widen the gap between what the report shows and what users actually see, because there is no fixed blue-link position to defend. If your team is still waiting for a quarterly traffic report before deciding whether content is working, you are looking in the rearview mirror while the answer gets assembled in real time.

The metrics that actually matter

The most useful AEO metrics are the ones that tell you whether you are being chosen, cited, and described correctly. Visibility is the first layer: how often your brand appears across the prompt sets that matter. Share of voice pushes that further by showing how you stack up against competitors on the same prompts, which is a far better test of presence than an isolated mention count.
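As a rough illustration of how these two numbers differ, here is a minimal Python sketch. It assumes you have already collected answer-engine responses for a prompt set and extracted the brands mentioned in each one. The record layout, the brand names, and the function names are all hypothetical, and share of voice is defined here simply as a brand's share of all brand mentions, which is one of several reasonable definitions.

```python
from collections import Counter

# Hypothetical sample: one record per answer-engine response, with the set of
# brands mentioned in that response. Real data would come from your own logging.
responses = [
    {"prompt": "best crm for startups", "brands": {"Acme", "Globex"}},
    {"prompt": "best crm for startups", "brands": {"Globex"}},
    {"prompt": "crm with email automation", "brands": {"Acme"}},
    {"prompt": "crm pricing comparison", "brands": set()},
]

def visibility_rate(responses, brand):
    """Fraction of responses in which the brand appears at least once."""
    if not responses:
        return 0.0
    hits = sum(1 for r in responses if brand in r["brands"])
    return hits / len(responses)

def share_of_voice(responses):
    """Each brand's share of all brand mentions across the response set."""
    counts = Counter(b for r in responses for b in r["brands"])
    total = sum(counts.values())
    if total == 0:
        return {}
    return {brand: n / total for brand, n in counts.items()}

print(visibility_rate(responses, "Acme"))
print(share_of_voice(responses))
```

Because answer engines are probabilistic, the same prompt appears more than once in the sample on purpose: repeated runs of a prompt are treated as separate observations rather than collapsed into one.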

Citation frequency is the second layer, and it matters because visibility and influence are not the same thing. A brand can be mentioned without being cited, and it can be cited without showing up consistently enough to feel reliable. In practical terms, citation tracking shows whether the answer engine is using your content as evidence, not just mentioning your name in passing.
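The difference between a mention and a citation can be made concrete with a short sketch. This one assumes each stored response carries its answer text and the list of URLs the engine cited. The field names, the `acme.example` domain, and the plain substring match for mentions are illustrative assumptions, not how any particular tool works.

```python
from urllib.parse import urlparse

def is_cited(response, domain):
    """True if any cited URL belongs to the domain or one of its subdomains."""
    for url in response["cited_urls"]:
        host = (urlparse(url).hostname or "").lower()
        if host == domain or host.endswith("." + domain):
            return True
    return False

def mention_and_citation_rates(responses, brand, domain):
    """Return (mention_rate, citation_rate) over a list of responses."""
    n = len(responses)
    if n == 0:
        return 0.0, 0.0
    # Naive mention check: case-insensitive substring match on the answer text.
    mentioned = sum(1 for r in responses if brand.lower() in r["text"].lower())
    cited = sum(1 for r in responses if is_cited(r, domain))
    return mentioned / n, cited / n

# Hypothetical sample responses.
sample = [
    {"text": "Acme is a popular choice.", "cited_urls": ["https://www.acme.example/guide"]},
    {"text": "Many teams use Acme.", "cited_urls": ["https://reviews.example/top-crm"]},
    {"text": "Consider Globex.", "cited_urls": []},
    {"text": "Options vary by budget.", "cited_urls": ["https://acme.example/pricing"]},
]

print(mention_and_citation_rates(sample, "Acme", "acme.example"))
```

In this sample the second response mentions the brand without citing it, and the fourth cites the brand's page without naming it, which is why the two rates need to be tracked separately.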

Accuracy is the third layer, and it is the one too many dashboards ignore. It is not enough for a brand to appear if the answer engine describes the product, service, or category incorrectly. The real test is whether the right content assets surface for the right topics and whether the language in the response matches how you want the market to understand you.
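A basic accuracy audit can start as a simple rule check before anyone reviews responses by hand. The sketch below assumes you maintain your own list of claims you know to be outdated and an expected page for each topic. The function name, field names, and sample values are hypothetical, and an exact-phrase match will miss paraphrased errors, so treat it as a first filter only.

```python
def audit_response(response, expected_url, outdated_phrases):
    """Return a list of accuracy issues found in one stored response."""
    issues = []
    text = response["text"].lower()
    # Flag any known-outdated claim that still shows up in the answer text.
    for phrase in outdated_phrases:
        if phrase.lower() in text:
            issues.append(f"outdated claim: {phrase}")
    # Flag responses that cite something, but not the page you expect for this topic.
    if response["cited_urls"] and expected_url not in response["cited_urls"]:
        issues.append("cited page does not match expected asset")
    return issues

# Hypothetical response and audit inputs.
response = {
    "text": "Acme offers a free plan for 5 users.",
    "cited_urls": ["https://acme.example/blog/old-post"],
}

print(audit_response(
    response,
    expected_url="https://acme.example/pricing",
    outdated_phrases=["free plan for 5 users"],
))
```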

What HubSpot is trying to turn into a system

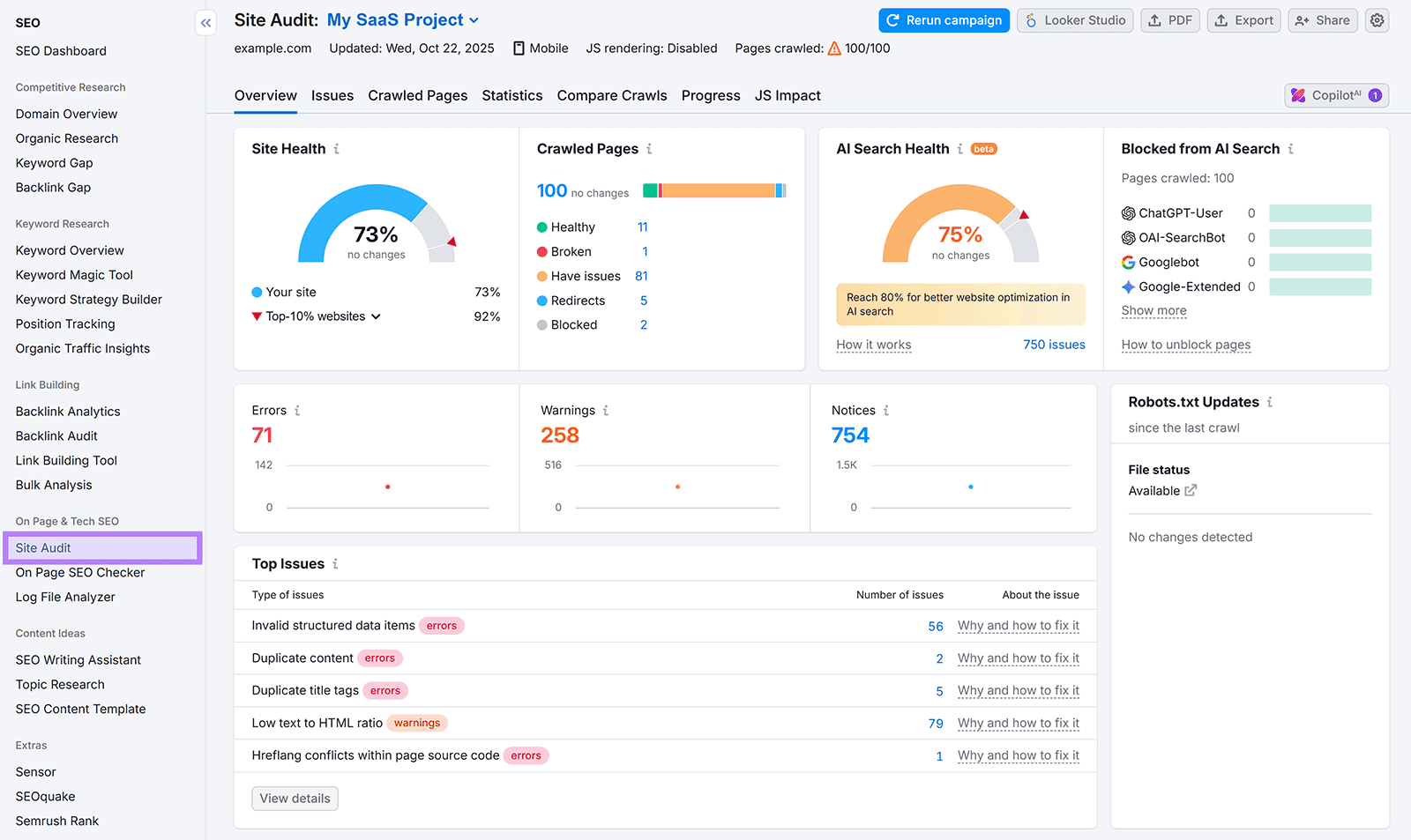

HubSpot’s own AEO tooling reflects that shift. Its product page says the tool includes a visibility score, prompt tracking, citation analysis, and prioritized recommendations, which is basically the kind of dashboard marketers have been asking for since answer engines started swallowing more of the discovery journey. The company also says its dashboard tracks visibility trends across ChatGPT, Gemini, and Perplexity while showing competitive share-of-voice metrics.

That matters because AEO measurement cannot live as a vanity report. The useful version connects discovery to business outcomes, showing where the brand is weak, where competitors are stronger, and which content pages need work. A dashboard that tells you only that you are “showing up” is not enough if it cannot tell you whether you are showing up in the right way.

How to use the data without fooling yourself

The best AEO teams will treat prompt tracking like an operating system for iteration. Start with a set of prompts that reflect real buyer questions, category comparisons, and product-use cases, then watch which ones return your brand, which ones return competitors, and which ones return nothing useful at all. That is far more actionable than staring at a traffic graph and guessing what changed.

The practical loop

1. Build prompt sets around the topics that drive discovery and intent.

2. Check visibility and share of voice across those prompts, not just one-off queries.

3. Track citation frequency to see whether your content is being used as source material.

4. Audit accuracy to catch bad descriptions, outdated facts, or mismatched pages.

5. Rewrite weak pages for clarity, structure, and authority, then measure again.

That workflow is where AEO stops being theory. If a page is invisible, the fix may be better topic coverage. If it is visible but not cited, the content may need stronger structure, clearer sourcing cues, or a more authoritative answer format. If it is cited but inaccurate, the page is probably not framed tightly enough for the model to map it cleanly.
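The triage described above can be written down as a small decision function. This is a sketch under simple assumptions: each page has already been judged visible, cited, and accurate as yes-or-no values for a given prompt set. The function name and the wording of the returned actions are illustrative, and in practice you would likely derive the three flags from thresholds on the underlying rates.

```python
def triage(visible, cited, accurate):
    """Map a page's AEO status to the next fix, checked in order of severity."""
    if not visible:
        return "improve topic coverage"
    if not cited:
        return "strengthen structure, sourcing cues, or answer format"
    if not accurate:
        return "tighten page framing"
    return "no change needed; measure again next cycle"

# Hypothetical pages with their observed status for one prompt set.
pages = {
    "/pricing": {"visible": True, "cited": True, "accurate": False},
    "/guide": {"visible": True, "cited": False, "accurate": True},
    "/compare": {"visible": False, "cited": False, "accurate": True},
}

for path, status in pages.items():
    print(path, "->", triage(**status))
```

The order of the checks mirrors the loop: there is little point auditing the accuracy of a page that never appears, so visibility is tested first.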

Why this reset is happening now

This is not happening in a vacuum. OpenAI says ChatGPT search can return fast, timely answers with links to relevant web sources, and its help materials say responses that use search may include inline citations and a Sources panel. Microsoft says Copilot Search in Bing gives summarized answers with cited sources and suggestions for further exploration. Perplexity describes itself as an AI-powered answer engine, and its help materials say every answer includes direct links to original sources.

That source-based design changes the job of measurement. If the answer itself is now filled with cited material, then the marketer’s problem is not just getting found; it is getting selected as part of the answer. The old SEO scoreboard was built for a world where the click was the main prize. The new one has to account for answers that may satisfy the user before the click ever happens.

There is also clear demand behind the shift. Adobe Express reported that 77% of Americans who use ChatGPT treat it as a search engine, and about one in four prefer ChatGPT over Google for discovery. That is a big enough behavior change to justify a new measurement model all by itself, especially when you consider how broad the usage is across Gen X, Gen Z, Millennials, and Baby Boomers.

The practical takeaway is simple: AI search is changing how discovery works, so AEO metrics have to change how teams keep score. Visibility, citations, share of voice, and accuracy are the numbers that tell the real story now. Clicks still matter, but they are no longer the whole scoreboard, and treating them like they are will leave too many brands feeling successful right up until they vanish from the answer.
