Managed WordPress hosts block AI bots, hiding sites from AI search visibility

Managed WordPress hosting can quietly block AI crawlers, leaving sites visible in Google Search but missing from ChatGPT, Gemini, Claude, and other AI answers.

Managed WordPress hosting is becoming an infrastructure chokepoint for AI visibility. A site can look healthy in Google Search Console, keep its traffic steady, and still disappear from AI answers if host-level bot rules, robots directives, or CDN settings shut the door on crawlers before any schema or prompt work has a chance to matter.

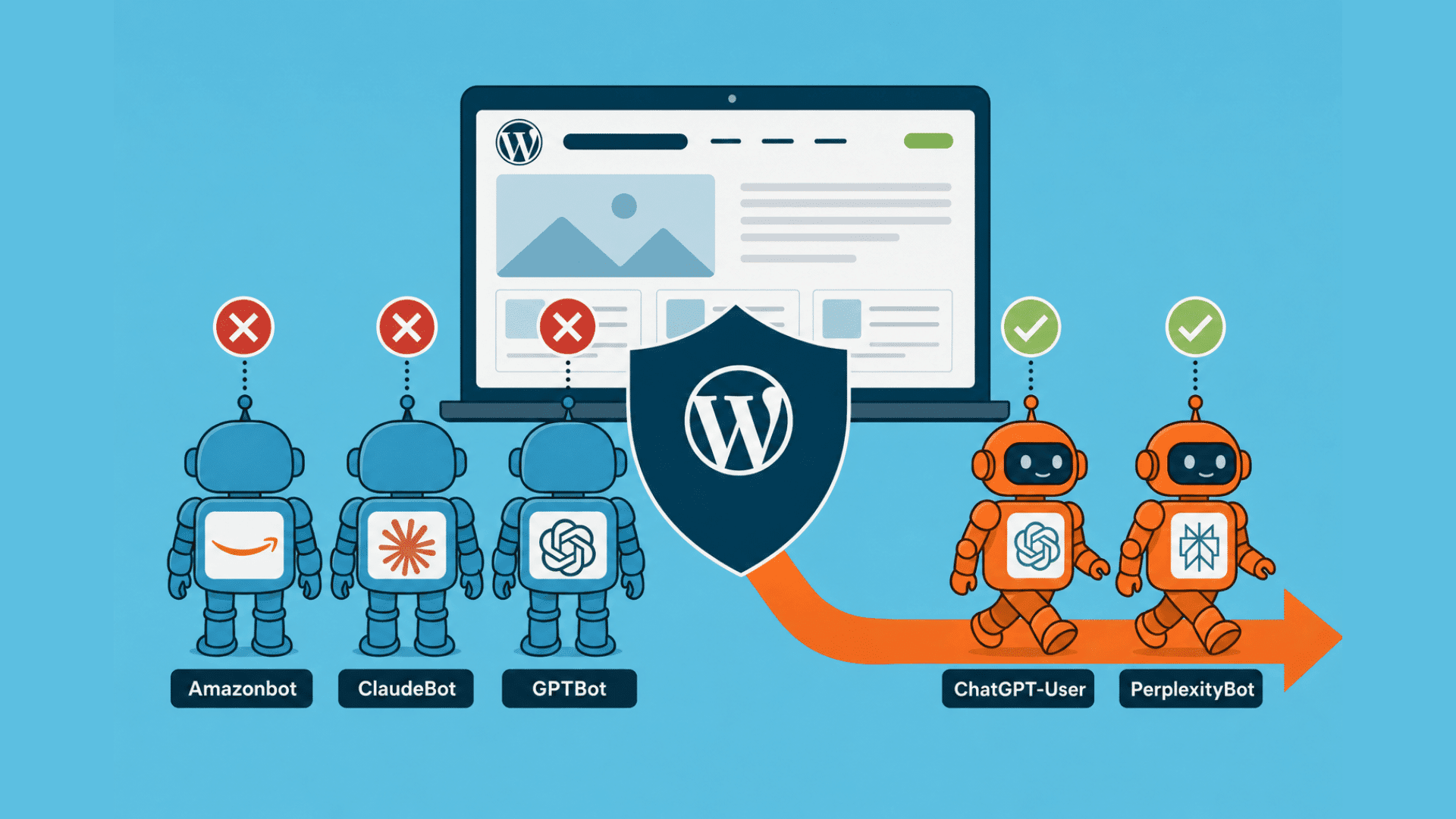

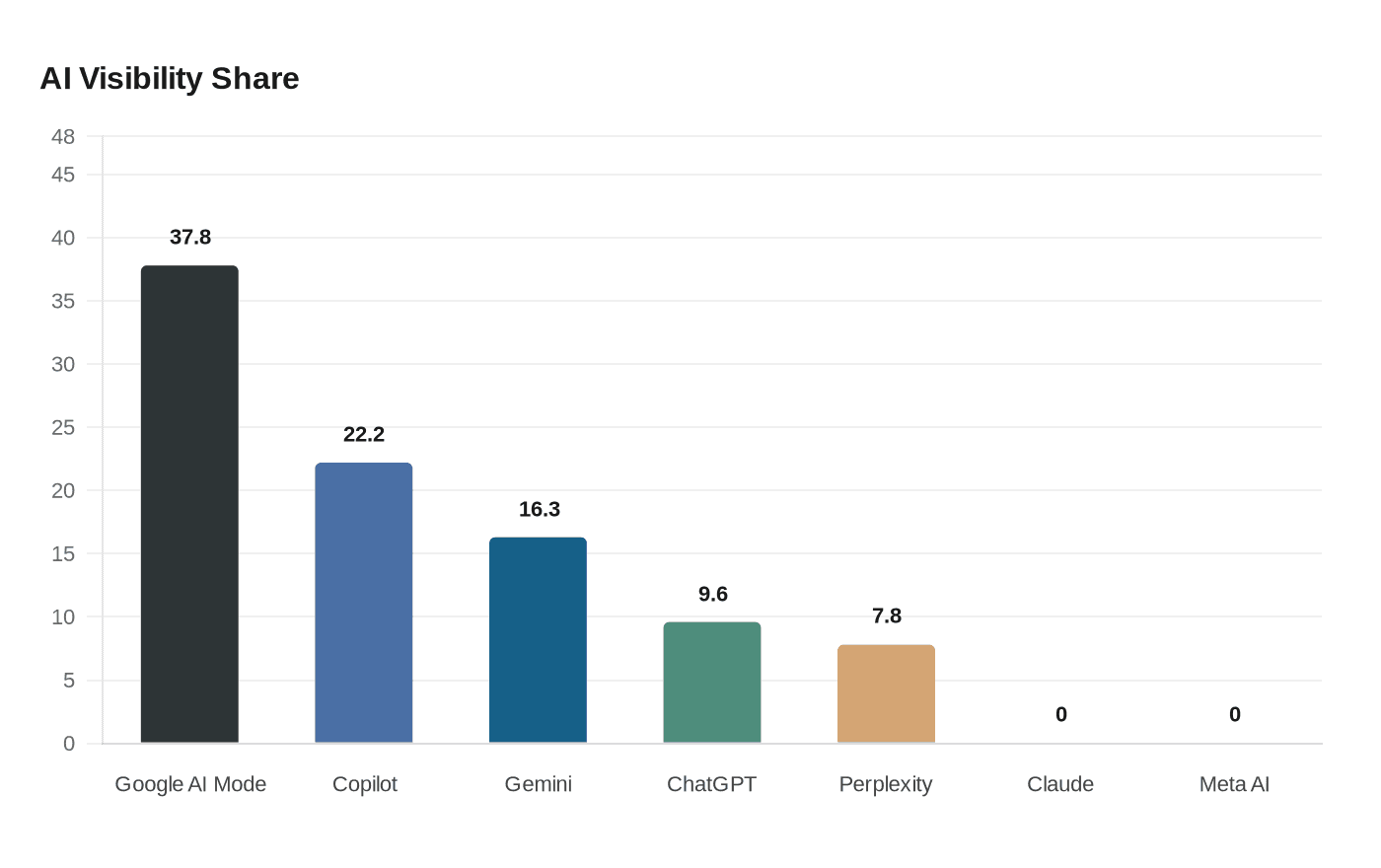

The clearest warning came from searchinfluence.com, where AI visibility over the prior 30 days broke out unevenly: Google AI Mode at 37.8%, Copilot at 22.2%, Google Gemini at 16.3%, ChatGPT at 9.6%, and Perplexity at 7.8%, while Claude and Meta AI both sat at 0.0%. Seven days of Cloudflare logs, from April 4 through April 10, showed 29,099 bot requests, 65.8% of them from AI bots. Several crawlers were being throttled or stopped at the edge: Amazonbot was rate-limited 51% of the time, ClaudeBot and GPTBot 29% each, and Bytespider was blocked 61% of the time. ChatGPT-User and PerplexityBot, by contrast, were not rate-limited.
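Figures like these come from classifying each logged request by user agent and tallying what the edge did with it. The Python sketch below shows that tally under loud assumptions: the `BotRequest` record, its `action` values, and the token list are hypothetical simplifications, not Cloudflare's log schema. User-agent strings can also be spoofed, so a real audit would verify crawlers against the IP ranges their operators publish.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass
from typing import Optional

# Illustrative AI crawler tokens taken from the article; not an exhaustive list.
AI_BOT_TOKENS = (
    "GPTBot", "OAI-SearchBot", "ChatGPT-User", "ClaudeBot",
    "PerplexityBot", "Amazonbot", "Bytespider",
)


@dataclass
class BotRequest:
    """Hypothetical, simplified log record; real CDN log schemas differ."""
    user_agent: str
    action: str  # assumed values: "allowed", "rate_limited", "blocked"


def match_ai_bot(user_agent: str) -> Optional[str]:
    """Return the first AI bot token found in a user-agent string, else None."""
    ua = user_agent.lower()
    for token in AI_BOT_TOKENS:
        if token.lower() in ua:
            return token
    return None


def summarize(requests: list[BotRequest]) -> tuple[float, dict[str, dict[str, float]]]:
    """Return (AI share of all bot requests, per-bot fraction of each edge action)."""
    actions_by_bot = defaultdict(Counter)
    for req in requests:
        bot = match_ai_bot(req.user_agent)
        if bot is not None:
            actions_by_bot[bot][req.action] += 1
    ai_count = sum(sum(c.values()) for c in actions_by_bot.values())
    ai_share = ai_count / len(requests) if requests else 0.0
    rates = {
        bot: {action: n / sum(c.values()) for action, n in c.items()}
        for bot, c in actions_by_bot.items()
    }
    return ai_share, rates


# Simplified sample user agents, for illustration only.
sample = [
    BotRequest("Mozilla/5.0 (compatible; GPTBot/1.1; +https://openai.com/gptbot)", "rate_limited"),
    BotRequest("Mozilla/5.0 (compatible; GPTBot/1.1; +https://openai.com/gptbot)", "allowed"),
    BotRequest("Mozilla/5.0 (compatible; ClaudeBot/1.0; +claudebot@anthropic.com)", "allowed"),
    BotRequest("Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)", "allowed"),
]
share, rates = summarize(sample)
print(f"AI share: {share:.1%}")  # AI share: 75.0%
print(rates["GPTBot"])           # {'rate_limited': 0.5, 'allowed': 0.5}
```

Run over a full week of exported logs, the same tally would yield a site's own version of the 65.8% AI share and the per-bot rate-limit percentages.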

That gap matters because crawl volume does not equal referral value. Cloudflare’s Q1 2026 crawl-to-referral analysis showed ClaudeBot making 20,583 crawl requests for every referral it sent back, GPTBot 1,255 to 1, Perplexity 111 to 1, and Google 5 to 1. Those ratios help explain why hosting platforms and CDNs have started tightening access. Cloudflare launched pay per crawl in private beta on July 1, 2025, letting site owners allow, charge, or block crawlers with HTTP 200, 402, and 403 responses, respectively.
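Mechanically, the allow/charge/block choice is a lookup from crawler to response code. The Python sketch below illustrates only that mapping. It is not Cloudflare's implementation or API: the `CrawlPolicy` enum, the `status_for_crawler` function, and the policy table are hypothetical, and the sketch leaves out the crawler verification and payment handling a real pay-per-crawl setup would need.

```python
from enum import Enum
from http import HTTPStatus
from typing import Mapping


class CrawlPolicy(Enum):
    ALLOW = "allow"
    CHARGE = "charge"
    BLOCK = "block"


# Response per policy, as described for pay per crawl:
# 200 OK to allow, 402 Payment Required to charge, 403 Forbidden to block.
STATUS_FOR_POLICY = {
    CrawlPolicy.ALLOW: HTTPStatus.OK,
    CrawlPolicy.CHARGE: HTTPStatus.PAYMENT_REQUIRED,
    CrawlPolicy.BLOCK: HTTPStatus.FORBIDDEN,
}


def status_for_crawler(
    user_agent: str,
    policies: Mapping[str, CrawlPolicy],
    default: CrawlPolicy = CrawlPolicy.ALLOW,
) -> HTTPStatus:
    """Return the status for the first policy token found in the user agent."""
    ua = user_agent.lower()
    for token, policy in policies.items():
        if token.lower() in ua:
            return STATUS_FOR_POLICY[policy]
    return STATUS_FOR_POLICY[default]


# Hypothetical per-site policy table.
site_policy = {
    "PerplexityBot": CrawlPolicy.ALLOW,
    "GPTBot": CrawlPolicy.CHARGE,
    "Bytespider": CrawlPolicy.BLOCK,
}

for ua in (
    "Mozilla/5.0 (compatible; GPTBot/1.1; +https://openai.com/gptbot)",
    "Mozilla/5.0 (compatible; Bytespider)",
    "Mozilla/5.0 (compatible; Googlebot/2.1)",
):
    status = status_for_crawler(ua, site_policy)
    print(status.value, status.phrase)
# prints 402 Payment Required, then 403 Forbidden, then 200 OK
```

Unlisted crawlers fall through to the default, here `ALLOW`; a site that wanted to refuse unknown bots would pass `default=CrawlPolicy.BLOCK` instead.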

The practical check comes before any answer engine optimization (AEO) polish. Google says robots.txt is mainly for managing crawler traffic, not for reliably keeping pages out of Google Search. OpenAI says OAI-SearchBot powers search and that sites disallowing it will not appear in ChatGPT search answers, though they can still show up as navigational links; OpenAI also says GPTBot is a separate crawler used to train foundation models. WP Engine adds another caution: it says it restricts search engine traffic on wpengine.com environments, and that staging and development sites will not reflect custom robots.txt changes. For publishers, the job now is to verify crawl access in live server logs, host policies, and CDN rules first, and only then spend on schema, content, and prompt-facing tweaks.
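Because OpenAI separates search (OAI-SearchBot) from training (GPTBot), one robots.txt file can admit one crawler and refuse the other. Python's standard-library `urllib.robotparser` offers a quick offline check of what a given robots.txt tells each crawler; the `crawler_access` helper and the sample file below are illustrative assumptions, not anything a host ships.

```python
from urllib.robotparser import RobotFileParser

# Crawler tokens discussed above; adjust for the bots you care about.
AI_USER_AGENTS = ("OAI-SearchBot", "GPTBot", "ClaudeBot", "PerplexityBot")


def crawler_access(robots_txt: str, url: str, agents=AI_USER_AGENTS) -> dict[str, bool]:
    """Report whether each agent may fetch `url` under the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


# Hypothetical robots.txt that opts out of GPTBot (training) only.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

for agent, allowed in crawler_access(ROBOTS_TXT, "https://example.com/blog/post").items():
    print(f"{agent}: {'allowed' if allowed else 'disallowed'}")
# OAI-SearchBot: allowed
# GPTBot: disallowed
# ClaudeBot: allowed
# PerplexityBot: allowed
```

`urllib.robotparser` applies the first matching rule in file order, which can differ from how a particular crawler resolves conflicting Allow and Disallow lines (Google, for example, uses the most specific match), so treat the result as a first pass. An "allowed" result also says nothing about host-level bot rules or CDN rate limits, which is why live server logs remain the real test. For a live file, `set_url()` and `read()` fetch robots.txt directly, though on WP Engine, staging and development environments will not reflect custom robots.txt changes.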
