Marketers must audit content for Google rankings and AI citations

The old traffic-only audit is broken. In 2026, winning means being legible to Google and useful enough for AI answers to cite.

Marketers are staring at the wrong dashboard if they treat a traffic dip as the whole story. Search Engine Land’s April 22, 2026, guide makes the sharper point: a page can lose clicks and still gain influence if AI systems start citing it, or it can rank well and still disappear from generative answers if models cannot parse it cleanly.

The new job is serving two search surfaces

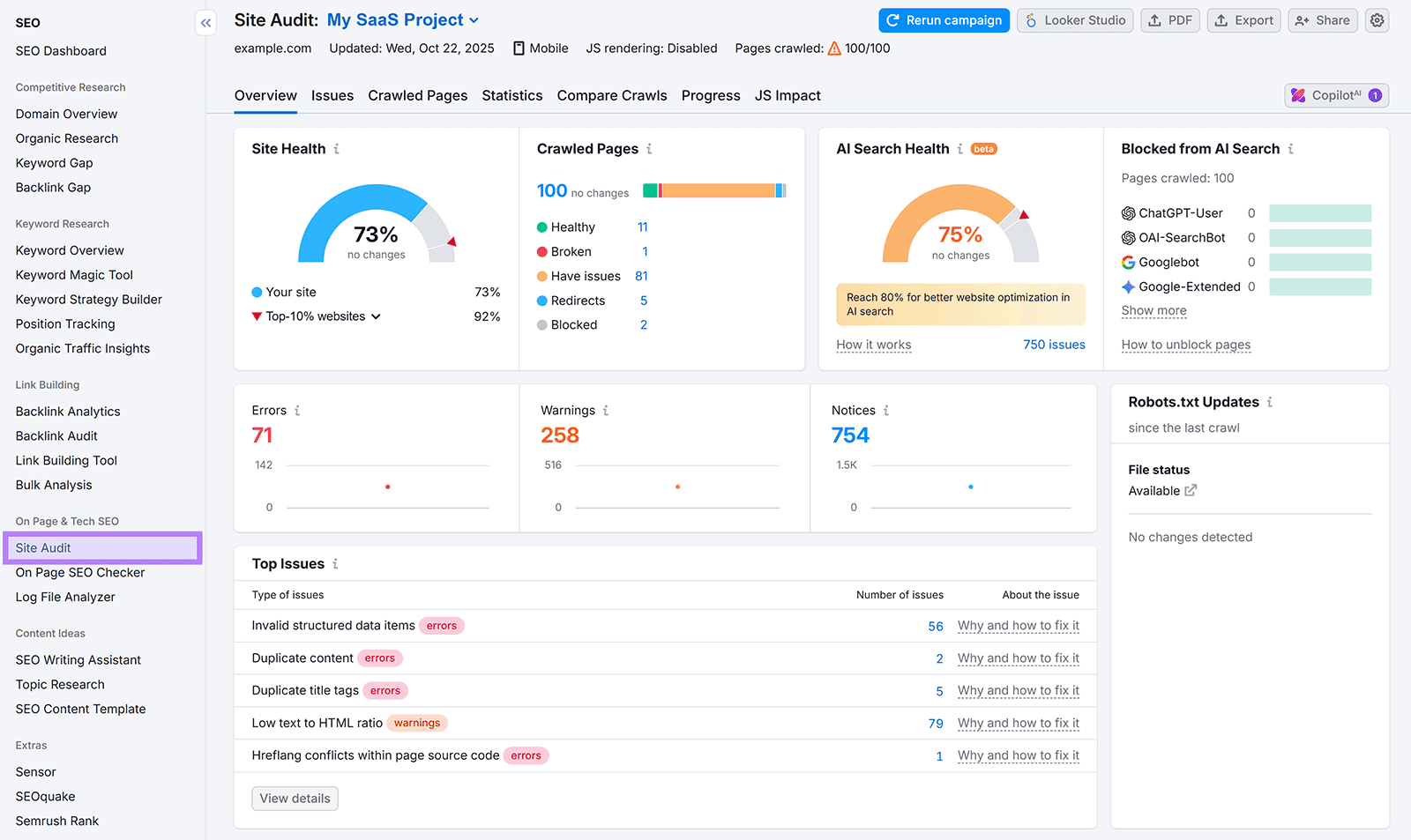

The big change is not that search fundamentals died. It is that content now has to work in two places at once: classic Google results and AI-generated answers from systems like ChatGPT and Perplexity. That means the old habit of auditing only rankings, organic clicks, and conversions is not enough anymore. A useful content audit now needs to measure both organic performance and LLM visibility, because the page is doing two different jobs, and those jobs do not always rise or fall together.

This is the part teams keep missing. Google still cares about backlinks, technical hygiene, and the usual signals that tell it a page deserves a blue-link spot. But AI answer engines are judging something else alongside that: topical depth, credibility, and whether the page can be turned into a clean, trustworthy answer. In practical terms, a guide that rarely earned clicks before can still become a repeated citation inside AI answers if it is structured well and speaks clearly to the topic.

What actually changed in 2026

The 2026 shift is not about abandoning SEO instincts. It is about structure, retrievability, and citation-worthiness becoming first-class editorial concerns. Search Engine Land’s framing is useful precisely because it rejects the lazy idea that AI visibility is a side project. The better model is to build one operating system for content, then score it on both click performance and representation inside AI answers.

That matters because the old definition of success was too narrow. For years, the win was a blue-link click. Now a page can sit below the fold in Google and still show up again and again in AI answers. The reverse is also true: a page can rank, collect impressions, and still fail in generative search if it is messy, vague, or missing clear entity authority. In 2026, that is not a weird edge case. It is the new normal.

Google’s own expansion of AI Overviews made the stakes obvious. In October 2024, Google said AI Overviews would reach more than 1 billion global users per month and expand to more than 100 countries and territories. That is not an experiment on the fringe. It is a major search surface that changed how people encounter answers, and Google later described AI Overviews as one of its most popular Search features.

Why answer engines change the audit

ChatGPT and Perplexity are pushing the same point from a different angle. OpenAI says ChatGPT search connects people with original, high-quality web content and brings it into conversation with citations. OpenAI’s research guidance also says ChatGPT can gather and synthesize information into structured reports that include citations. Perplexity describes itself as an AI-powered answer engine that searches the web in real time and gives answers backed by citations users can verify.

That product behavior changes how you judge a content library. If an answer engine is summarizing the web, then the page has to be easy to retrieve, easy to quote, and easy to trust. Dense prose without structure, weak page labeling, thin topical coverage, and fuzzy ownership all hurt you more now than they did when the only job was to rank a page and hope for a click. You are not just writing for searchers anymore. You are writing for systems that need to extract meaning without guessing.

What a real content audit needs to measure

A serious audit now needs two columns side by side. On the Google side, you still track clicks, rankings, and conversions. On the AI side, you track citation frequency, mention consistency, and platform coverage. That gives you a fuller read on whether a page is merely underperforming or whether it has quietly become more influential in a different search environment.

A good scorecard should ask blunt questions:

- Does the page still earn organic traffic?

- Is it cited in ChatGPT or Perplexity answers?

- Does the same topic show up consistently across platforms?

- Is the page clear enough that a model can identify the entity, the answer, and the supporting detail quickly?

- Does the page still convert once people arrive, or has it become a dead end?

That last point matters because not every page is supposed to do the same job. Some pages should win direct traffic. Some should establish authority. Some should be the source that AI systems repeatedly reuse. The mistake is judging all of them by the same metric and then calling the content broken when the function changed.
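One way to picture the two-column scorecard is as a small record per page, with Google metrics on one side and AI citation metrics on the other. The sketch below is illustrative only: the `PageAudit` class, the `classify` function, the platform names, and both thresholds are hypothetical choices, not a method from the Search Engine Land guide, and the citation counts are assumed to come from whatever prompt sampling a team already runs. Mention consistency is left out to keep the example short.

```python
from dataclasses import dataclass, field


@dataclass
class PageAudit:
    """One row of a hypothetical two-surface scorecard."""
    url: str
    # Google side
    organic_clicks: int
    avg_position: float
    conversions: int
    # AI side: how many sampled answers on each platform cited the page
    citations_by_platform: dict[str, int] = field(default_factory=dict)

    def citation_frequency(self) -> int:
        """Total citations across every platform that was sampled."""
        return sum(self.citations_by_platform.values())

    def platform_coverage(self, tracked: list[str]) -> float:
        """Share of tracked platforms that cited the page at least once."""
        if not tracked:
            return 0.0
        cited = sum(1 for p in tracked if self.citations_by_platform.get(p, 0) > 0)
        return cited / len(tracked)


def classify(page: PageAudit, min_clicks: int = 100, min_citations: int = 5) -> str:
    """Label the job a page is doing. Both thresholds are arbitrary assumptions."""
    gets_clicks = page.organic_clicks >= min_clicks
    gets_cited = page.citation_frequency() >= min_citations
    if gets_clicks and gets_cited:
        return "both surfaces"
    if gets_clicks:
        return "traffic page"
    if gets_cited:
        return "citation source"
    return "review"


# Made-up numbers: a guide with few clicks that answer engines keep citing.
guide = PageAudit(
    url="/guide",
    organic_clicks=40,
    avg_position=14.2,
    conversions=1,
    citations_by_platform={"chatgpt": 6, "perplexity": 3},
)
label = classify(guide)
coverage = guide.platform_coverage(["chatgpt", "perplexity", "ai_overviews"])
```

Labeling pages by function first means a low-click page that is cited often gets flagged as a citation source rather than as a failure.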

How to make pages more citation-worthy

The practical fix is not mystical. It starts with making every page more legible to both humans and machines. Tighten the page purpose. Clarify what question it answers. Make the main point obvious in the opening, then support it with specifics, named entities, and clean structure. If a page is trying to cover too much, split it. If it buries the answer, surface it.

The best content systems in 2026 are not built around volume. They are built around usefulness and clarity, the same traits that have always mattered, but now with a stronger premium on machine readability. Topical authority still matters. So does technical health. So does genuinely useful writing. What changed is that these qualities now need to be expressed in a format that can be cited cleanly by AI systems, not just indexed by Google.

The smarter way to read a traffic drop

This is the mindset shift that keeps teams from panicking. A drop in visits is no longer a simple verdict on content quality. It may mean the page lost rankings. It may mean AI Overviews are intercepting the click. It may mean search behavior changed. It may even mean the page is still shaping discovery, just through citations instead of visits.
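Those four readings can be written down as a rough triage function that compares two snapshots of the same page. This is a minimal sketch under stated assumptions: the `Snapshot` fields, the 10 percent tolerance, and the rules themselves are hypothetical heuristics meant to generate questions for a human reviewer, not a diagnostic from Google or any analytics tool.

```python
from dataclasses import dataclass


@dataclass
class Snapshot:
    """Hypothetical per-page metrics for one reporting period."""
    avg_position: float  # lower is better
    impressions: int
    clicks: int
    ai_citations: int    # citations counted in a sampled set of AI answers


def drop_hypotheses(before: Snapshot, after: Snapshot, tolerance: float = 0.10) -> list[str]:
    """Return plausible explanations for a traffic drop (assumed heuristics)."""

    def fell(old: float, new: float) -> bool:
        # True when the value dropped by more than the tolerance.
        return old > 0 and (old - new) / old > tolerance

    def ctr(s: Snapshot) -> float:
        return s.clicks / s.impressions if s.impressions else 0.0

    hypotheses = []
    position_worse = after.avg_position > before.avg_position * (1 + tolerance)
    if position_worse:
        hypotheses.append("lost rankings")
    if not position_worse and fell(before.impressions, after.impressions):
        hypotheses.append("search behavior changed")
    if not position_worse and fell(ctr(before), ctr(after)):
        # Same ranking, fewer clicks per impression: something such as an
        # AI Overview may be answering the query before the visit happens.
        hypotheses.append("click intercepted before the visit")
    if after.ai_citations > before.ai_citations:
        hypotheses.append("still shaping discovery through citations")
    return hypotheses


# Made-up numbers: ranking holds, clicks fall, citations rise.
result = drop_hypotheses(
    Snapshot(avg_position=3.0, impressions=10000, clicks=800, ai_citations=4),
    Snapshot(avg_position=3.1, impressions=9800, clicks=500, ai_citations=11),
)
```

The function returns a list rather than a single verdict because more than one explanation can hold at once, which is the point of reading a drop against both surfaces.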

That is why the two-surface strategy is the right way to think about content now. Do not split your editorial operation into an “SEO team” and an “AI team” as if they were solving different problems. Build one content system, audit it against both Google rankings and AI citations, and keep the pages that still do real work even when the click stops telling the whole story.
