Similarweb says prompt research is replacing keyword research in AI search

Prompt research is overtaking keyword research as AI search turns demand into full questions, not fragments, and the shortlist is being built before search ever opens.

Why prompts, not keywords, are the new unit of demand

The old SEO game was built around short phrases. The new one is built around full questions. Similarweb’s case is blunt: the average ChatGPT prompt is around 60 words, while the average Google search is about 3.4 words. That is a gap of roughly 18 to 1, which means a single prompt can hold detail that a keyword never reveals.

Shai Belinsky’s point is that a keyword is usually stripped down to a category, while a prompt carries context, constraints, and intent. In AI search, people are not just asking for pages anymore. They are asking for comparisons, recommendations, trade-offs, and next steps, which means the real battleground has moved from matching terms to answering decisions.

What prompt research actually means

Prompt research is the practice of finding the full natural-language questions people ask in ChatGPT, Perplexity, and Google AI Mode, then mapping where a brand already appears and where it disappears from the answer. Similarweb frames it as a visibility exercise, but the underlying shift is strategic: the content plan has to follow decision context, not keyword clusters.

That matters because the query is no longer a simple search phrase like a product name or category label. It is a longer, more specific request that can include budget limits, feature comparisons, use cases, or brand preferences. If a page only answers the shallow version of that question, it is easy for the AI to leave it out.
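To make the difference concrete, here is a minimal Python sketch of how a team might flag the decision signals named above (budget limits, comparisons, use cases, brand preferences) in a single prompt. The cue patterns, the function name `decision_signals`, and the brand names Acme and Globex are all hypothetical, chosen for illustration. This is not Similarweb's method, and a real taxonomy would come from your own prompt data.

```python
import re

# Hypothetical cue patterns, for illustration only.
BUDGET = re.compile(r"(?:under|below|less than|up to)\s+\$?\d[\d,]*", re.IGNORECASE)
COMPARISON = re.compile(r"\b(?:vs|versus|compared?|better than|alternatives? to)\b", re.IGNORECASE)
USE_CASE = re.compile(r"\bfor (?:a|an|my|our)\b", re.IGNORECASE)


def decision_signals(prompt: str, brands: list[str]) -> dict[str, bool]:
    """Flag which kinds of decision context appear in a prompt."""
    mentions_brand = any(
        re.search(rf"\b{re.escape(brand)}\b", prompt, re.IGNORECASE)
        for brand in brands
    )
    return {
        "budget": bool(BUDGET.search(prompt)),
        "comparison": bool(COMPARISON.search(prompt)),
        "use_case": bool(USE_CASE.search(prompt)),
        "brand_preference": mentions_brand,
    }


# Made-up example inputs: one full prompt, one classic keyword.
prompt = (
    "I need a CRM for a five-person sales team under $50 per user. "
    "How does Acme compare with Globex?"
)
keyword = "CRM software"

print(decision_signals(prompt, brands=["Acme", "Globex"]))
print(decision_signals(keyword, brands=["Acme", "Globex"]))
```

The full prompt trips all four signals while the keyword trips none, which is the gap a page has to cover if it only answers the shallow version of the question.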

The traffic pattern is moving upstream

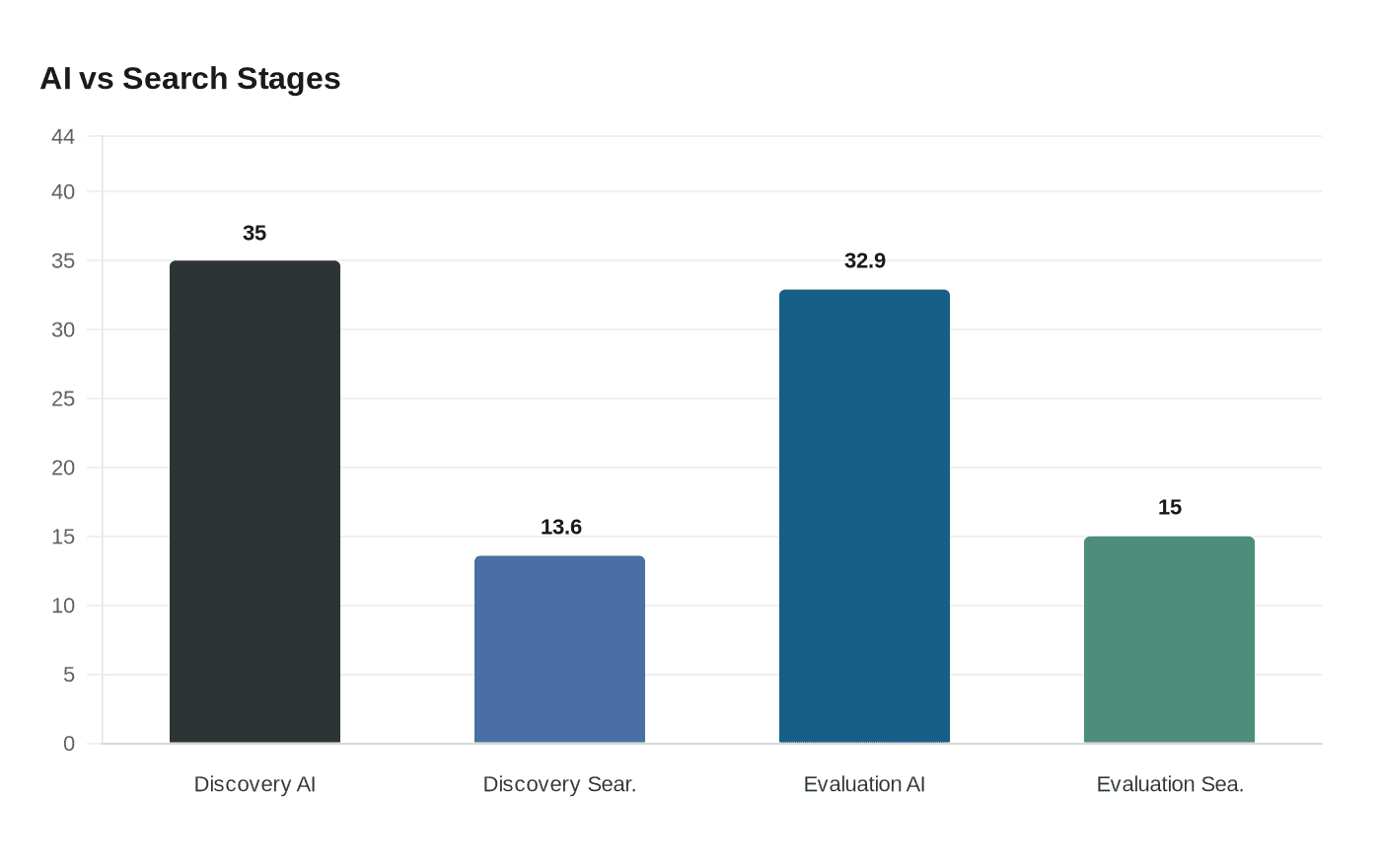

Similarweb’s 2026 GenAI statistics make the shift hard to ignore. The company says 35% of U.S. consumers use AI at the product discovery stage, compared with 13.6% who use search. At the evaluation stage, the split is 32.9% AI versus 15% search.

That is the important part: by the time a user lands in a traditional search engine, the shortlist is often already forming inside an AI answer. Similarweb’s 2026 Generative AI Brand Visibility Index was built around that reality, benchmarking 113 brands across six industries with more than 11,000 prompts to reveal which companies appear when people are still choosing, not just researching.

How AI-style queries behave differently

Independent data backs up the same pattern. A Nectiv study of more than 8,500 prompts found that ChatGPT performed a web search in 31% of prompts. When it did, the average search query length was 5.48 words, and the system averaged 2.17 searches per prompt.

Search Engine Land reported that more than three-quarters of those search queries were five words or longer. That is a very different animal from classic keyword research. Instead of a single head term, the model is fanning out into a cluster of longer, more specific queries that mirror how people actually think through a purchase or problem.
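A quick back-of-the-envelope calculation shows the scale of that fan-out. The Python sketch below uses only the figures quoted above and rests on two assumptions: the sample is treated as exactly 8,500 prompts (the study says "more than"), and the 2.17 average is read as applying to prompts that triggered a search. The function name `estimated_fanout` is made up for this example.

```python
# Figures as quoted in the article. 8,500 is a lower bound ("more than 8,500").
PROMPTS = 8_500
SEARCH_RATE = 0.31          # share of prompts where ChatGPT ran a web search
SEARCHES_PER_PROMPT = 2.17  # assumed to apply only to prompts that searched


def estimated_fanout(prompts: int, search_rate: float, searches_per_prompt: float) -> tuple[int, int]:
    """Return (prompts that triggered a search, total search queries issued), rounded."""
    prompts_with_search = prompts * search_rate
    total_queries = prompts_with_search * searches_per_prompt
    return round(prompts_with_search), round(total_queries)


with_search, queries = estimated_fanout(PROMPTS, SEARCH_RATE, SEARCHES_PER_PROMPT)
print(f"{with_search} prompts with a search, about {queries} search queries")
```

Under those assumptions, roughly 2,635 prompts produced on the order of 5,700 separate search queries, each averaging more than five words.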

A practical five-step prompt research process

Similarweb’s guide points to a five-step process, and the useful part is that it starts with the questions, not the pages.

1. Collect real prompts from AI search surfaces.

Look inside ChatGPT, Perplexity, and Google AI Mode for the questions people actually ask around your category. You are not hunting for one-word keywords here. You are looking for the full phrasing users rely on when they want a recommendation, a comparison, or a constrained answer.

2. Group prompts by decision context.

Separate early discovery prompts from evaluation prompts. A discovery prompt is broad and exploratory; an evaluation prompt is usually more commercial, more specific, and closer to conversion. That difference matters because the content that earns visibility in one stage may not cover the other.

3. Use Google Search Console as a prompt clue engine.

Similarweb calls out a method many teams overlook: Search Console can reveal query patterns that hint at prompt-level intent, even if they are still showing up as search traffic. If the queries are long, specific, and problem-driven, they often point to the same language people are using in AI tools.

4. Map where you appear and where you are absent.

This is where Similarweb’s AI Visibility and Prompt Tracking modules come in. The point is to identify which prompts already surface your brand, which prompts surface competitors, and which high-value questions leave you out entirely.

5. Close the content gap with the right answer format.

If the model is choosing among products, the answer needs comparison tables, clear specs, and trustworthy context. If it is recommending a service, it needs proof points, reviews, and language that aligns with the way the AI summarizes the category.
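Steps 2 and 3 are the easiest to prototype. The Python sketch below sorts prompts into discovery and evaluation buckets with a crude cue-word check, then pulls long queries out of a Search Console style export. The cue words, the `query` and `impressions` field names, and the five-word threshold are assumptions made for illustration, not part of Similarweb's guide or a fixed Search Console schema.

```python
import re
from collections import defaultdict

# Hypothetical cue words that suggest a prompt is at the evaluation stage.
EVALUATION_CUES = {
    "vs", "versus", "compare", "pricing", "price",
    "review", "reviews", "alternative", "alternatives",
}


def classify_stage(prompt: str) -> str:
    """Label a prompt 'evaluation' if it contains a cue word, else 'discovery'."""
    words = set(re.findall(r"[a-z0-9]+", prompt.lower()))
    return "evaluation" if words & EVALUATION_CUES else "discovery"


def group_by_stage(prompts: list[str]) -> dict[str, list[str]]:
    """Step 2: group prompts by decision context."""
    groups: dict[str, list[str]] = defaultdict(list)
    for prompt in prompts:
        groups[classify_stage(prompt)].append(prompt)
    return dict(groups)


def prompt_like_queries(rows: list[dict], min_words: int = 5) -> list[dict]:
    """Step 3: keep long queries from an export, highest impressions first.

    Each row is assumed to be a dict with 'query' and 'impressions' keys.
    """
    hits = [row for row in rows if len(row["query"].split()) >= min_words]
    return sorted(hits, key=lambda row: row["impressions"], reverse=True)
```

A word-list classifier like this will mislabel plenty of prompts. Its value is in forcing the team to write down what separates a discovery prompt from an evaluation prompt before any content is planned.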

The tools matter because the answer surface is changing

Similarweb says its AI Search Intelligence suite tracks prompts, citations, sentiment, and AI chatbot traffic. That is a big deal because AI visibility is not just about being mentioned. It is about how the model frames the brand, what it cites alongside it, and whether the tone is neutral, favorable, or skeptical.

Prompt Tracking is especially useful because it can show the top 30 brands for each topic you track. That gives teams a live view of who is actually winning the prompt, not just who owns a keyword report. In the old SEO world, rank was the headline. In the prompt world, presence inside the answer is the headline.
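The same idea can be approximated by hand on a small scale. The Python sketch below assumes you have already saved AI answers for a set of tracked prompts in a plain dictionary. It counts how many answers mention each brand and lists the prompts where a given brand is missing. The function names and data layout are hypothetical and say nothing about how Similarweb's Prompt Tracking works internally.

```python
import re
from collections import Counter


def _mentions(brand: str, text: str) -> bool:
    """True if the brand name appears as a whole word, ignoring case."""
    return re.search(rf"\b{re.escape(brand)}\b", text, re.IGNORECASE) is not None


def brand_presence(answers: dict[str, str], brands: list[str]) -> Counter:
    """Count the number of answers in which each brand is mentioned."""
    counts: Counter = Counter()
    for text in answers.values():
        for brand in brands:
            if _mentions(brand, text):
                counts[brand] += 1
    return counts


def missing_prompts(answers: dict[str, str], brand: str) -> list[str]:
    """List the prompts whose answer never mentions the brand."""
    return [prompt for prompt, text in answers.items() if not _mentions(brand, text)]
```

Calling `brand_presence(answers, brands).most_common(30)` gives a rough leaderboard for one topic, and `missing_prompts` returns the questions that leave your brand out. Simple name matching misses the framing, citation, and sentiment signals described above, so treat it as a first pass only.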

What replaces keyword clustering

The strategic shift is simple to say and painful to execute: move from keyword clustering to decision-context mapping. Instead of asking what terms rank, ask what prompts trigger a shortlist, what language the AI uses to compare options, and which content gaps keep the brand out of the answer.

That changes the work on the page, too. Product feeds need to be clean, category pages need sharper distinctions, reviews matter more, and trusted third-party coverage can carry real weight because AI systems are assembling answers from multiple sources. The new visibility game is not only about being indexed. It is about being legible inside the answer itself.

Why this is more than a tactic shift

Similarweb’s broader 2026 reporting shows that AI search is not a side channel anymore. The Brand Visibility Index, the GenAI statistics, and the prompt research playbook all point in the same direction: discovery is moving into conversational systems before users ever reach a classic search result.

That is why prompt research is becoming the new discipline. Keywords still matter, but they are no longer the full unit of demand. The brands that will stay visible are the ones that understand the questions behind the question, the constraints inside the prompt, and the exact answer format the model prefers when it builds the shortlist.
